Data Quality Framework: 62% to 99% Accuracy

The Problem

A B2B SaaS company discovered that 38% of their analytics data had quality issues: null values in critical fields, duplicate customer records, inconsistent date formats, and broken foreign key relationships. Their data team was spending more time fixing data than analyzing it. Marketing attribution was unreliable, and sales forecasts were consistently off by 20-30%.

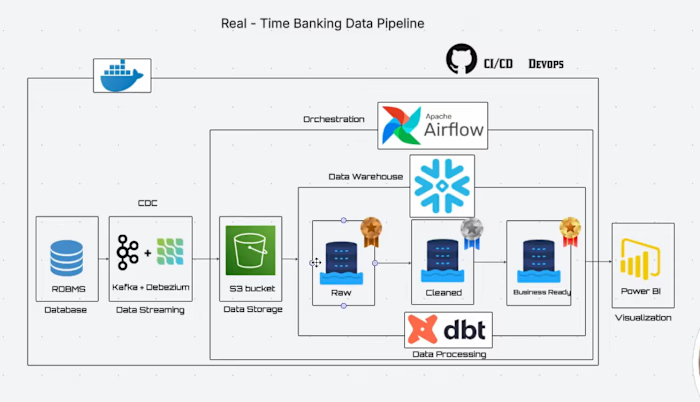

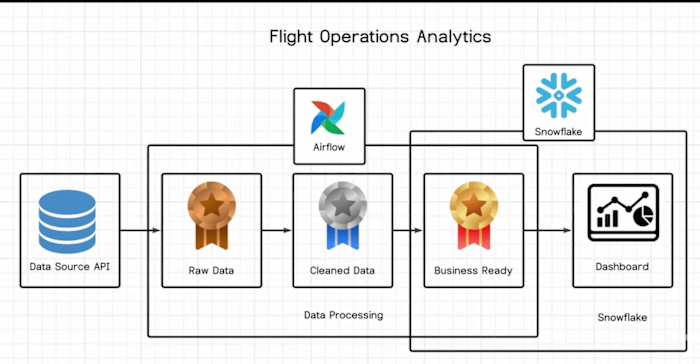

What I Built

I designed and implemented a comprehensive data quality framework with automated monitoring, validation, and remediation. The framework had three layers:

Layer 1 - Detection: Automated profiling scripts that scanned every table daily and flagged anomalies against predefined rules (null rates, uniqueness, referential integrity, freshness).

Layer 2 - Prevention: dbt tests embedded in every transformation model. If a test failed, the pipeline stopped before bad data reached production tables.

Layer 3 - Remediation: Automated cleanup jobs for common issues (deduplication, format standardization, null imputation based on business rules) plus alerting for issues requiring human review.

Key Results

Data accuracy improved from 62% to 99.1% within 4 weeks

Duplicate records eliminated across 12 core tables

Analytics team reclaimed 15 hours per week previously spent on manual data fixes

Sales forecast accuracy improved by 25% due to cleaner input data

Tools Used

Snowflake, dbt, Apache Airflow, Python, Microsoft Power BI

My Role

Solo data engineer. Designed the framework architecture, built all validation rules and automated remediation scripts, and created the monitoring dashboard. Delivered in 6 weeks.

Like this project

Posted Apr 23, 2026