Real-Time Analytics: 48hr Latency to 90 Seconds

The Problem

A SaaS company was running its analytics on batch jobs that refreshed only every 48 hours. Its customer success team was making decisions on stale data and catching churn signals two days too late. The company needed real-time visibility into product usage, revenue metrics, and customer health scores.

What I Built

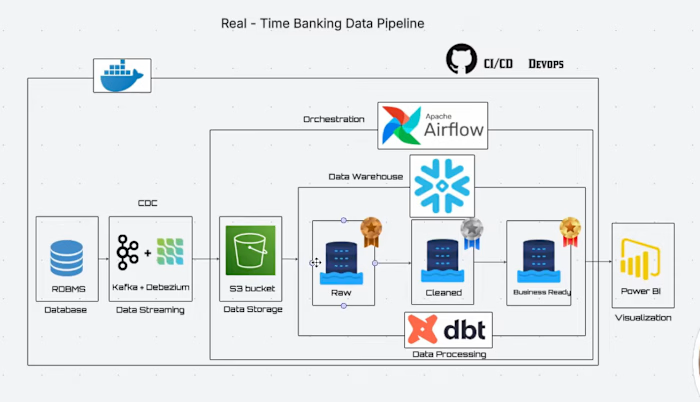

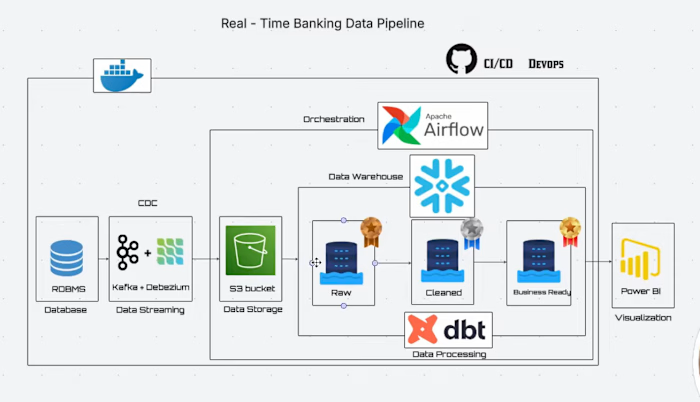

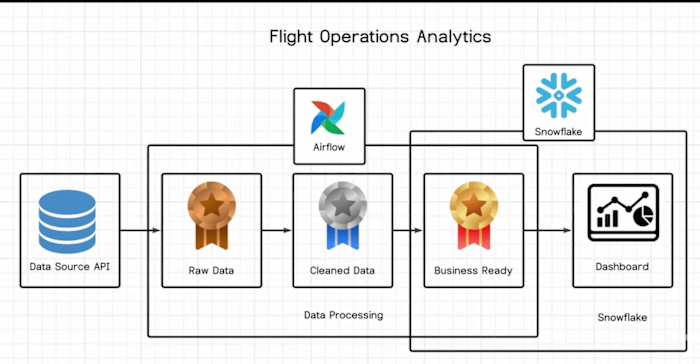

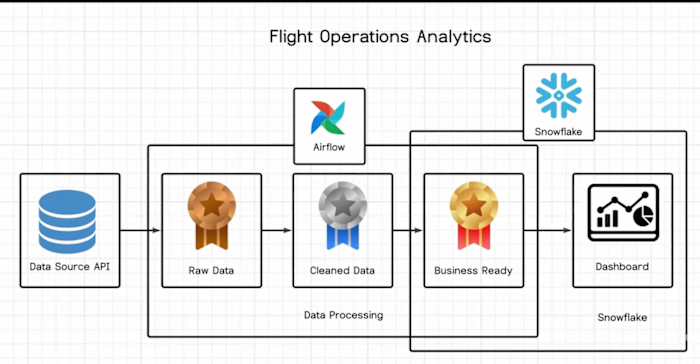

I designed and deployed a real-time streaming analytics platform using Kafka for change data capture, Snowflake as the central warehouse, and dbt for transformation logic. Apache Airflow handled orchestration and monitoring.

The architecture captured every database change event in real time via Kafka CDC connectors, landed the raw events in Snowflake staging tables, and ran incremental dbt models every 60 seconds to produce analytics-ready datasets.
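The incremental step can be sketched in plain Python. This is a minimal sketch, not the project's dbt code. It assumes each staged CDC event carries a monotonically increasing offset, a primary key, and an operation code. The names `ChangeEvent` and `apply_increment` are hypothetical. Each run keeps only events past the last high-water mark, keeps the latest event per key, and then upserts or deletes.

```python
from dataclasses import dataclass
from typing import Dict, Iterable, Optional, Tuple


@dataclass(frozen=True)
class ChangeEvent:
    """One CDC record as it might land in a staging table (hypothetical shape)."""
    offset: int           # monotonically increasing position, e.g. a Kafka offset
    key: str              # primary key of the source row
    op: str               # "c" = create, "u" = update, "d" = delete
    row: Optional[dict]   # new row image; None for deletes


def apply_increment(
    current: Dict[str, dict],
    staged: Iterable[ChangeEvent],
    high_water_mark: int,
) -> Tuple[Dict[str, dict], int]:
    """Merge staged events newer than high_water_mark into the current-state table."""
    # Incremental filter: skip events already applied by an earlier run.
    new_events = sorted(
        (e for e in staged if e.offset > high_water_mark), key=lambda e: e.offset
    )
    if not new_events:
        return current, high_water_mark

    # Deduplicate: iterating in offset order, the last event seen per key wins.
    latest: Dict[str, ChangeEvent] = {}
    for e in new_events:
        latest[e.key] = e

    # Merge: upsert creates and updates, remove deleted keys.
    merged = dict(current)
    for key, e in latest.items():
        if e.op == "d":
            merged.pop(key, None)
        else:
            merged[key] = e.row

    # The new high-water mark is the largest offset applied in this run.
    return merged, new_events[-1].offset


# Example: one 60-second run over four staged events.
staged = [
    ChangeEvent(1, "acct-1", "c", {"plan": "basic"}),
    ChangeEvent(2, "acct-2", "c", {"plan": "pro"}),
    ChangeEvent(3, "acct-1", "u", {"plan": "pro"}),
    ChangeEvent(4, "acct-2", "d", None),
]
state, hwm = apply_increment({}, staged, high_water_mark=0)
print(state, hwm)  # → {'acct-1': {'plan': 'pro'}} 4
```

Re-running with the returned high-water mark is a no-op. That property makes a frequent schedule safe to retry. In dbt, this pattern is typically written as an incremental model with a `unique_key` config and an `is_incremental()` filter on the offset or timestamp column, which Snowflake executes as a MERGE.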

I also built automated alerting for pipeline failures, plus data quality checks at every stage.
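The specific checks aren't described, so what follows is a minimal sketch of generic stage-level checks. It assumes each stage's output exposes a primary key and a `loaded_at` timestamp. The check names, the 90-second freshness threshold (borrowed from the stated latency target), and the functions `run_checks` and `alerts` are hypothetical, not the project's code.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone
from typing import List


@dataclass
class CheckResult:
    name: str
    passed: bool
    detail: str


def run_checks(
    rows: List[dict], key: str, now: datetime, max_lag: timedelta = timedelta(seconds=90)
) -> List[CheckResult]:
    """Run generic quality checks on one stage's output (hypothetical helper)."""
    results = []

    # Not-null: every row must carry a primary key.
    null_keys = sum(1 for r in rows if r.get(key) is None)
    results.append(CheckResult("not_null", null_keys == 0, f"{null_keys} rows with null {key}"))

    # Uniqueness: a current-state table must not repeat a primary key.
    keys = [r[key] for r in rows if r.get(key) is not None]
    dupes = len(keys) - len(set(keys))
    results.append(CheckResult("unique", dupes == 0, f"{dupes} duplicate {key} values"))

    # Freshness: the newest row must be younger than the latency target.
    newest = max((r["loaded_at"] for r in rows), default=None)
    lag = None if newest is None else now - newest
    fresh = lag is not None and lag <= max_lag
    results.append(CheckResult("freshness", fresh, f"lag={lag}"))

    return results


def alerts(results: List[CheckResult]) -> List[str]:
    """Format failed checks as alert messages; routing them (pager, chat) is out of scope."""
    return [f"[ALERT] {r.name} failed: {r.detail}" for r in results if not r.passed]


now = datetime(2024, 1, 1, 12, 0, tzinfo=timezone.utc)
rows = [
    {"account_id": "a1", "loaded_at": now - timedelta(seconds=30)},
    {"account_id": "a1", "loaded_at": now - timedelta(seconds=200)},
]
for message in alerts(run_checks(rows, "account_id", now)):
    print(message)  # → [ALERT] unique failed: 1 duplicate account_id values
```

dbt also ships `not_null` and `unique` as built-in generic tests and can check source freshness with `dbt source freshness`. In a stack like this one, checks of this kind may live in dbt and be triggered from an Airflow task rather than written by hand.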

Key Results

Data latency reduced from 48 hours to under 90 seconds

Customer success team caught churn signals 47x faster

Pipeline processes 1.2M events per day with 99.8% uptime

Infrastructure costs stayed flat despite 10x more frequent processing

Tools Used

Kafka, Snowflake, dbt, Apache Airflow, Python, SQL

My Role

Solo data engineer. Owned the full project from architecture design through deployment and monitoring setup. Delivered in 6 weeks.


Posted Apr 19, 2026

Real-time SaaS analytics platform using Kafka CDC, Snowflake, and dbt. Reduced data latency from 48 hours to under 90 seconds.