Production ETL: Messy Data to Clean Analytics

The Problem

An e-commerce company had data scattered across 5 different sources: Shopify, Google Analytics, a custom CRM, payment processor logs, and warehouse inventory feeds. None of it was connected. The analytics team spent 3 days every week manually pulling and cleaning data in spreadsheets just to produce a weekly report.

What I Built

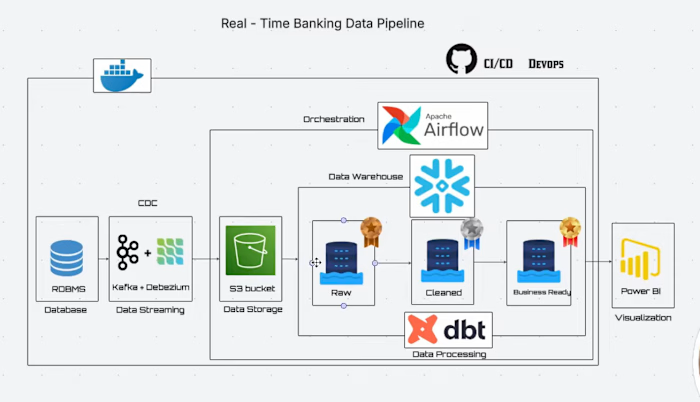

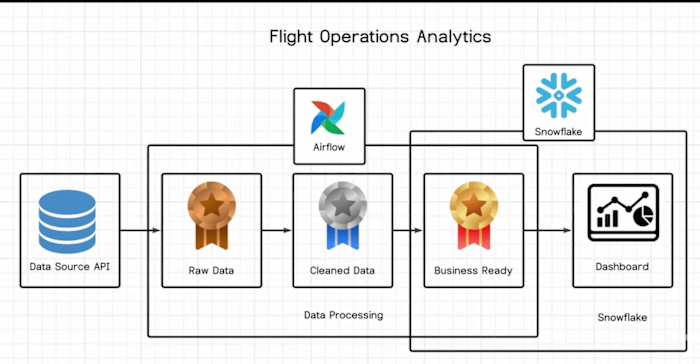

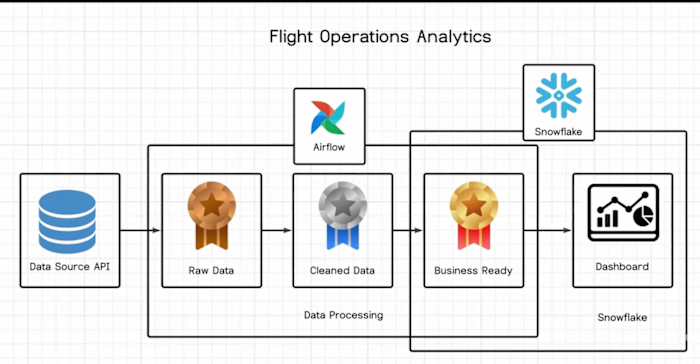

I designed an ETL pipeline on a full medallion architecture using Snowflake, dbt, and Apache Airflow. The bronze layer ingested raw data from all 5 sources. The silver layer handled deduplication, type casting, null handling, and business logic. The gold layer produced clean, joined datasets ready for dashboards and ad-hoc analysis.
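To make the silver-layer steps concrete, here is a minimal Python sketch of deduplication, type casting, and null handling on a handful of raw rows. It is an illustration only: the production models were written in dbt on Snowflake, and the `BRONZE_ORDERS` rows, the column names, and the `to_silver` function are hypothetical, not taken from the project.

```python
from datetime import datetime
from decimal import Decimal, InvalidOperation

# Hypothetical bronze rows: untyped strings, as a raw source might deliver them.
BRONZE_ORDERS = [
    {"order_id": "1001", "amount": "19.99", "email": " A@Example.com ", "loaded_at": "2026-01-05T10:00:00"},
    {"order_id": "1001", "amount": "24.99", "email": "a@example.com", "loaded_at": "2026-01-06T09:30:00"},
    {"order_id": "1002", "amount": "", "email": None, "loaded_at": "2026-01-06T09:31:00"},
    {"order_id": None, "amount": "5.00", "email": "b@example.com", "loaded_at": "2026-01-06T09:32:00"},
]


def to_silver(rows):
    """Cast types, normalize nulls, and keep the latest row per order_id."""
    latest = {}
    for row in rows:
        # Null handling: a row without a business key cannot be deduplicated.
        if not row.get("order_id"):
            continue

        # Type casting: strings become int, Decimal, and datetime.
        try:
            amount = Decimal(row["amount"])
        except (InvalidOperation, TypeError):
            amount = None  # empty or malformed amounts become explicit nulls

        clean = {
            "order_id": int(row["order_id"]),
            "amount": amount,
            # Normalize emails; empty strings collapse to None.
            "email": (row.get("email") or "").strip().lower() or None,
            "loaded_at": datetime.fromisoformat(row["loaded_at"]),
        }

        # Deduplication: the most recently loaded version of each order wins.
        current = latest.get(clean["order_id"])
        if current is None or clean["loaded_at"] > current["loaded_at"]:
            latest[clean["order_id"]] = clean

    return sorted(latest.values(), key=lambda r: r["order_id"])


silver_orders = to_silver(BRONZE_ORDERS)
```

Keeping the latest row per key is one common deduplication rule; the right rule for a given source depends on how that source reports updates.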

Every transformation was version-controlled in dbt with automated testing. Airflow DAGs ran on a schedule with Slack alerts for any failures.
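The testing and alerting flow can be sketched in plain Python. This is not the project's dbt or Airflow code: the two checks only loosely mirror what dbt's built-in `not_null` and `unique` tests assert, and `run_checks`, `build_slack_alert`, and the pipeline name are hypothetical. The payload uses the `text` field that Slack incoming webhooks accept; actually posting it to a webhook URL is left out.

```python
import json


def check_not_null(rows, column):
    """Return rows where the column is missing or None."""
    return [r for r in rows if r.get(column) is None]


def check_unique(rows, column):
    """Return rows whose non-null value in the column was already seen."""
    seen, duplicates = set(), []
    for r in rows:
        value = r.get(column)
        if value is None:
            continue  # nulls are the not_null check's job
        if value in seen:
            duplicates.append(r)
        seen.add(value)
    return duplicates


def run_checks(rows):
    """Run all checks and return {check_name: failing_row_count} for failures only."""
    results = {
        "not_null_order_id": check_not_null(rows, "order_id"),
        "unique_order_id": check_unique(rows, "order_id"),
    }
    return {name: len(failing) for name, failing in results.items() if failing}


def build_slack_alert(pipeline, failures):
    """Build a JSON payload describing the failed checks (assumed message format)."""
    lines = [f"- {name}: {count} failing row(s)" for name, count in sorted(failures.items())]
    return json.dumps({"text": f"Pipeline {pipeline} failed\n" + "\n".join(lines)})


# Example: one duplicate key and one null key trigger an alert payload.
rows = [{"order_id": 1}, {"order_id": 1}, {"order_id": None}]
failures = run_checks(rows)
if failures:
    payload = build_slack_alert("orders_silver", failures)
```

In an orchestrated setup, a failure handler would typically send a payload like this so the team sees which check failed without opening the logs.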

Key Results

Weekly reporting time cut from 3 days to 15 minutes

Data accuracy improved from approximately 78% to 99.2%

Unified 5 data sources into a single source of truth

Analytics team could self-serve queries for the first time

Tools Used

Snowflake, dbt, Apache Airflow, Python, SQL

My Role

Lead data engineer on a 2-person team. I owned architecture decisions, built all dbt models, and configured the Airflow orchestration. Delivered in 2 weeks.

Posted Apr 15, 2026

Designed and deployed a full medallion architecture ETL pipeline using Snowflake, dbt, and Airflow. Messy data to trusted analytics in 2 weeks.
