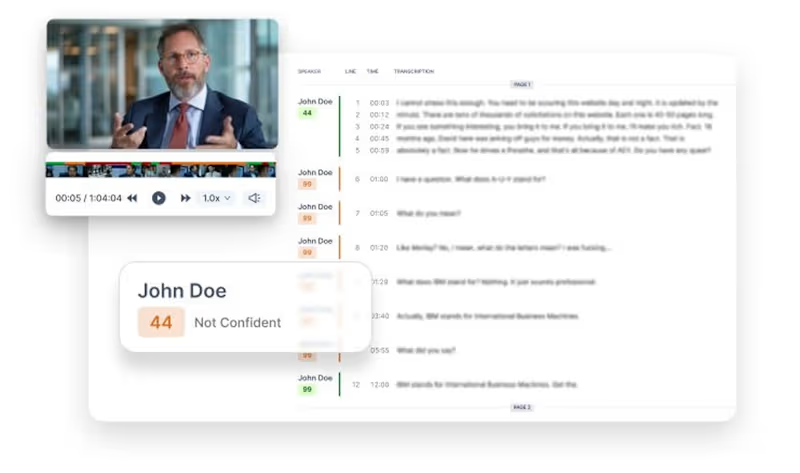

Power BI Data Analyst + ML AI Automation Expert

- Rating: 5.0
- Followers: 94

Connecting code with intelligence

Data analyst turning data insights into business impact.

- Rating: 5.0
- Followers: 2

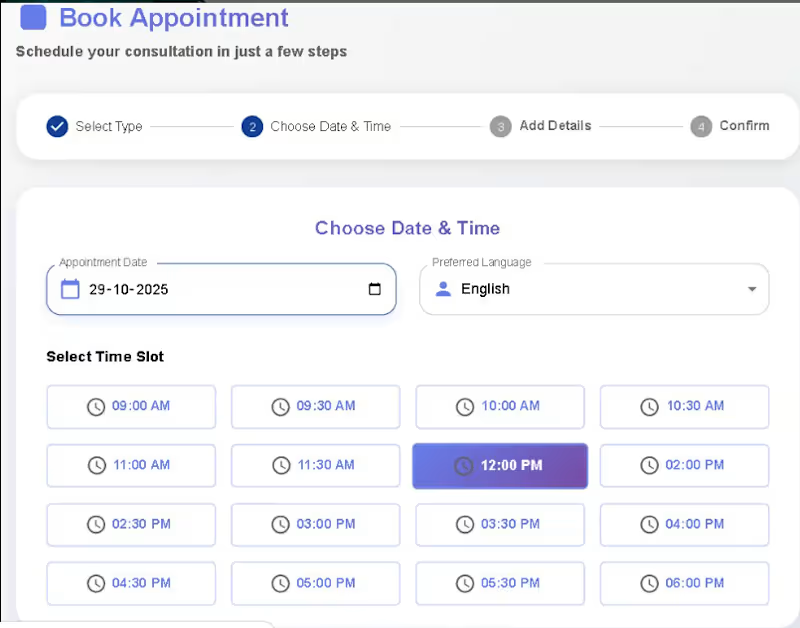

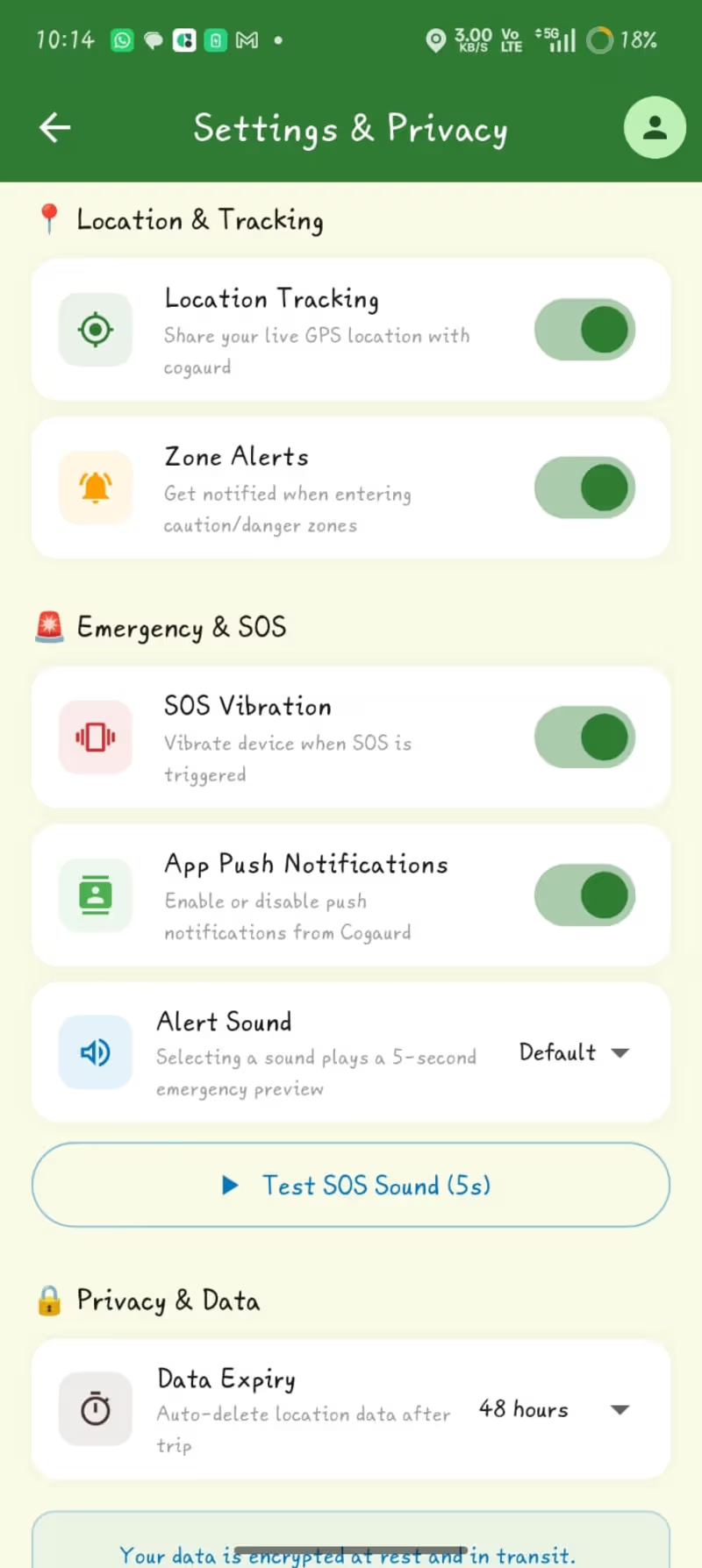

Creative tech solutions in AI and cybersecurity

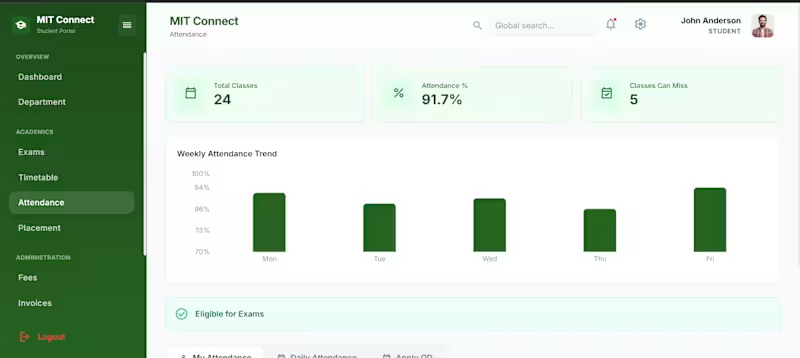

Senior Software Engineer | 10+ Yrs Across Industries

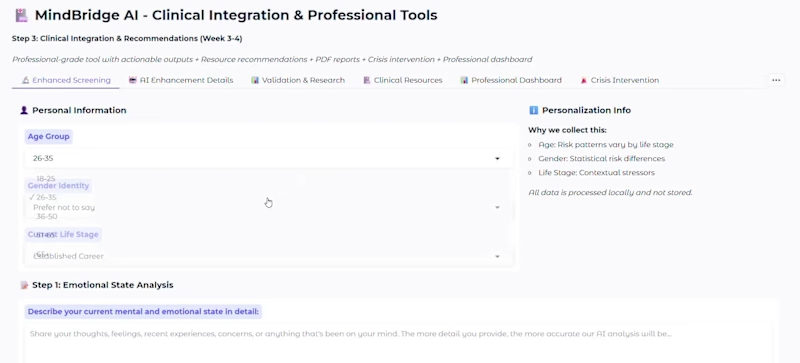

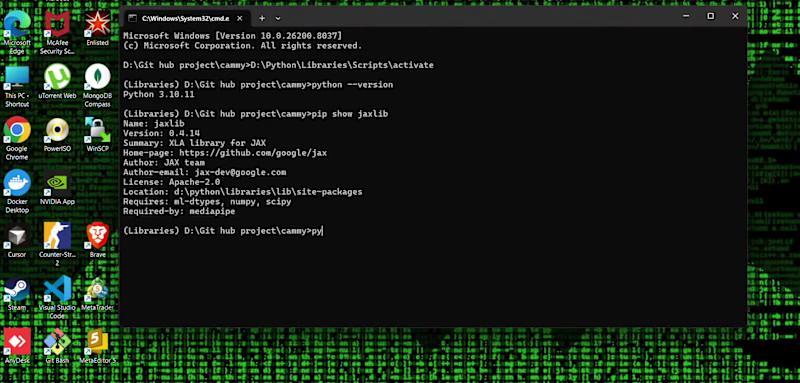

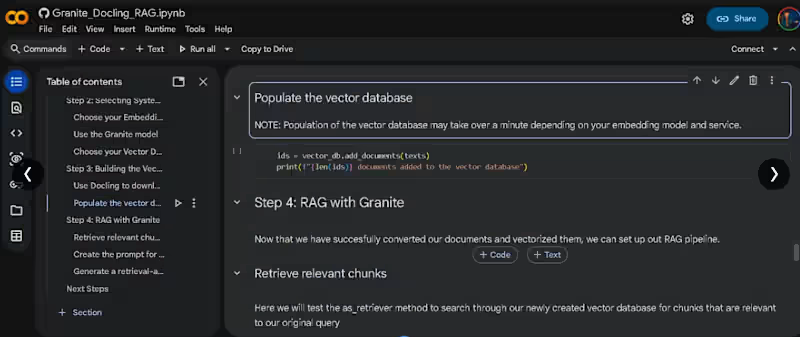

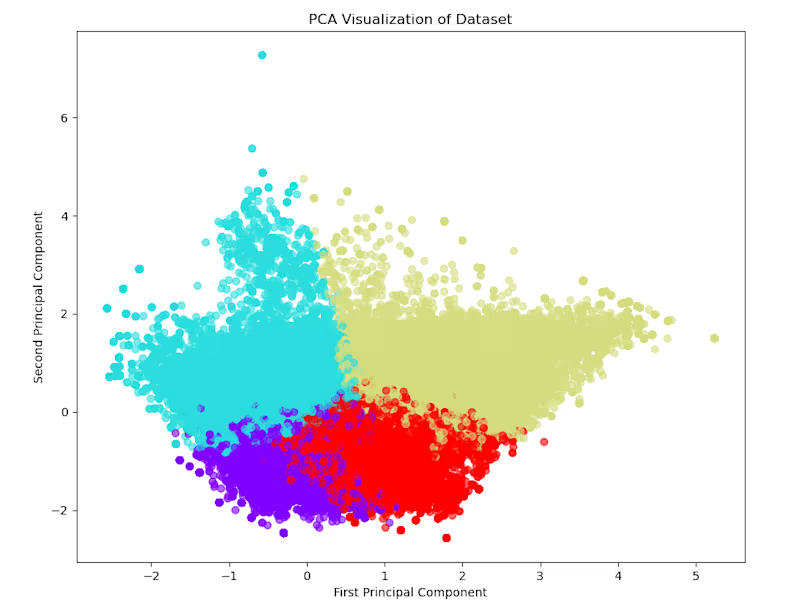

I’m an AI & Machine Learning engineer with expertise in deve

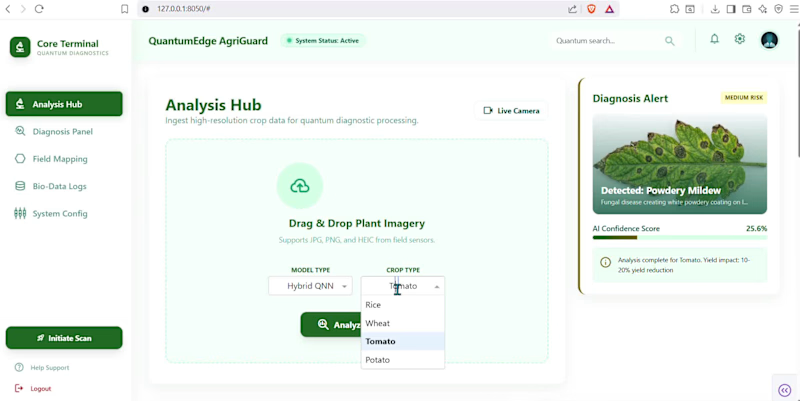

Building scalable Next.js, Flutter & AI applications

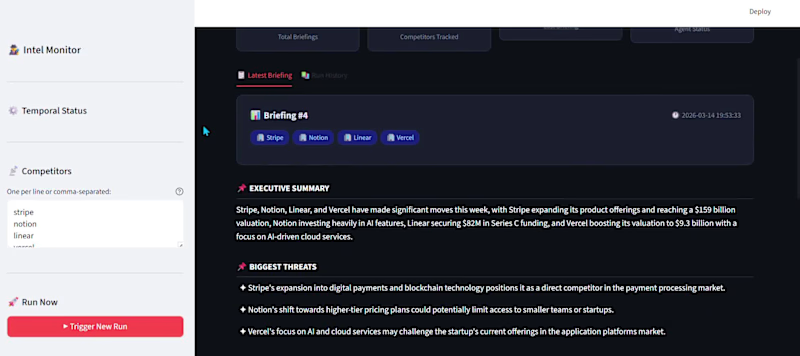

Tech Renaissance Leader: AI, FinTech, Web
