
Ivam Marchon

Creative Director | Ads Videos That Convert with AI and 3D

- $1k+ Earned

- 2x Hired

- 5.00 Rating

- 49 Followers

The objective of this project was to create a series of premium assets for Balto featuring five dog breeds (Pointer, Corgi, Poodle, Westie, Beagle) interacting with a 3D branded element. The project required a seamless blend of AI-generated characters and high-end motion design.

THE CHALLENGE: Raw AI video generations often suffer from significant technical flaws: blurred fur textures, inconsistent lighting, and "shimmering" artifacts. For a premium brand like Balto, these artifacts create a cheap, amateurish feel that dilutes brand authority. Prompt engineering alone was not enough to produce stable, professional assets.

THE STRATEGY: A Hybrid Digital Pipeline. As the Creative Lead, I developed a proprietary hybrid workflow to bridge the gap between raw AI output and cinematic quality:

- Biological Realism Pass: I executed a heavy post-production layer focused on "fur fidelity" and ocular clarity. By refining the noise-to-texture ratio, I removed the plastic "AI look," ensuring the dogs felt organic and physically present.

- Geometric Fidelity & Interaction: The interaction with the 3D coin required precise physics.

- Iterative Refinement: Every frame was audited for "shimmering" and warped geometry (common failures in AI) and corrected through a multi-pass upscaling and denoising process.
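A first pass of a frame-by-frame audit like the one above can be partly automated. The snippet below is a minimal illustrative sketch, not the pipeline used on this project: it assumes each frame is available as a grid of luminance values and flags frames whose average change from the previous frame exceeds a threshold. The function names and the threshold value are hypothetical.

```python
def mean_abs_diff(frame_a, frame_b):
    """Average absolute per-pixel difference between two equally sized frames."""
    total = 0.0
    count = 0
    for row_a, row_b in zip(frame_a, frame_b):
        for pixel_a, pixel_b in zip(row_a, row_b):
            total += abs(pixel_a - pixel_b)
            count += 1
    return total / count


def flag_flicker(frames, threshold):
    """Return indices of frames that differ sharply from the frame before them.

    `threshold` is an assumed tuning value; a real shot would need it
    calibrated against normal motion in the footage.
    """
    return [
        index
        for index in range(1, len(frames))
        if mean_abs_diff(frames[index - 1], frames[index]) > threshold
    ]


# Four tiny 1x2 "frames" of luminance values; frame 2 jumps in brightness.
sample_frames = [[[10, 10]], [[11, 10]], [[40, 42]], [[41, 41]]]
suspect_frames = flag_flicker(sample_frames, threshold=5.0)
```

A check like this only narrows down where to look. Deciding whether a flagged frame is real motion or an artifact still takes a trained eye.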

THE CONCLUSION: This project serves as a benchmark for the future of AI in high-end advertising. It shows that AI is a tool, not a replacement for Creative Direction. To achieve this level of quality, a professional eye for VFX and post-production is mandatory. Without this specialized oversight, the result is merely a "cool experiment." With it, the work becomes a brand asset of elite quality.

Production Note: The coin in this video served as a spatial and temporal anchor, requiring precise synchronization to ensure realistic physics and interaction.

I was responsible for the high-fidelity AI generation, biological texturing, and character performance. The final high-resolution 3D asset rendering and compositing were managed by the client’s internal team post-delivery.


There is a massive gap between "generating" a video and directing one. In recent work for a client, AI was only the engine. The intelligence and sensitivity were 100% human.

For this project, every decision was guided by the "Precision Culture" and the brand’s new 2026 standard. Here is the strategic intent behind the imagery:

1) Cinematographic Intent: Every camera angle and lens choice was planned and intentional; nothing was left to AI chance. By leveraging 3D, I was able to execute the exact camera angles I had envisioned with surgical precision, ensuring there was true direction in every frame.

2) Beyond the Product: The story isn't about selling shears; it's about the trust placed in the tool.

3) The Ritual of Succession: We constructed a narrative of legacy. When the Katana Master presents his blade and the "Hanzo Artist" presents his shears, we are witnessing the transfer of authority from heritage to hyper-modernity.

4) The Hanzo Code: The choice of silence and paused dialogue reflects the core philosophy: strength is calm under pressure.

This required five days of refinement and many cinematographic decisions to ensure the video wasn't just "generated," but properly directed.

Note: This film was created as a creative spec piece for conceptual exploration. It is a narrative interpretation and does not represent Hanzo’s actual manufacturing facilities or locations.

If your brand’s visual narrative needs more than just “generation” and calls for a directed vision, send me a message and let’s discuss how to elevate your standards for 2026.


Recently, while working on a client production, I hit a common wall in the AI workflow: the lack of spatial agency.

I had a very specific cinematic vision in mind: a low-angle perspective to set the narrative tone. I spent almost an hour refining prompts, but the results were consistently generic. The AI was guessing, not directing.

The insight was simple: why fight an algorithm for an angle when I can define the geometry myself? So I jumped into Blender, spent four minutes on a block-out (placing the object, locking the camera height and focal length), and used that as the structural skeleton for the AI.
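The two values locked in a block-out like this translate into framing through simple geometry. The sketch below is an illustration under stated assumptions, not the actual scene setup: the class name, the example distances, and the 36 mm sensor width are all hypothetical placeholders. It computes the horizontal field of view implied by a focal length and the upward tilt a low camera needs to aim at the middle of the subject.

```python
import math
from dataclasses import dataclass


@dataclass(frozen=True)
class BlockOut:
    """Hypothetical container for the values locked during a block-out."""

    camera_height_m: float     # lens height above the ground
    focal_length_mm: float     # lens focal length
    subject_distance_m: float  # horizontal distance from camera to subject
    subject_height_m: float    # total height of the subject
    sensor_width_mm: float = 36.0  # assumed full-frame sensor width

    def horizontal_fov_deg(self) -> float:
        # Standard pinhole relation: fov = 2 * atan(sensor / (2 * focal)).
        half_angle = math.atan(self.sensor_width_mm / (2 * self.focal_length_mm))
        return math.degrees(2 * half_angle)

    def tilt_up_deg(self) -> float:
        # Angle above horizontal needed to aim at the subject's mid-height.
        target_height = self.subject_height_m / 2
        rise = target_height - self.camera_height_m
        return math.degrees(math.atan2(rise, self.subject_distance_m))


# Example low-angle setup: camera 0.3 m off the ground, 24 mm lens,
# subject 1.8 m tall standing 3 m away (all values are illustrative).
low_angle = BlockOut(
    camera_height_m=0.3,
    focal_length_mm=24.0,
    subject_distance_m=3.0,
    subject_height_m=1.8,
)
fov = low_angle.horizontal_fov_deg()
tilt = low_angle.tilt_up_deg()
```

Lowering the camera height increases the upward tilt, which is what gives a low-angle shot its sense of scale. Fixing those numbers in 3D means the generated frame no longer has to guess them.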

The shift:

· From Passive Prompting: Hoping the machine gets it right.

· To Active Art Direction: Ensuring every frame is a deliberate choice.

Check out the workflow below.


Testing Kling 2.6 Update for Video Transitions


Hattori Hanzo Shears needed to market their new apparel line, but physical samples were weeks away.

The Solution: A hybrid pipeline merging 3D precision with AI realism. I created accurate "Digital Twins" in CLO3D and Blender directly from tech packs. Then, using generative AI, I integrated these garments into hyper-realistic lifestyle scenarios with virtual influencers.

The Result: We bypassed casting and photography costs entirely, delivering a high-end campaign and 360° assets before the factory even finished the first batch.

Check out the full breakdown here: https://ivammarchon.com/3daihattorihanzo/


Ferrari AD Concept Visual Direction


Bogtrotter Whiskey Cinematic Ad


Entrance Screen Design for Monking Mode


AI-Driven Visual Experience for Fashion & Beauty Event


AI-Enhanced Fashion Visuals for Éssis


The Poetry of the Ordinary - Short film in Midjourney


Digital Fashion with 3D + AI


3D Fashion Design


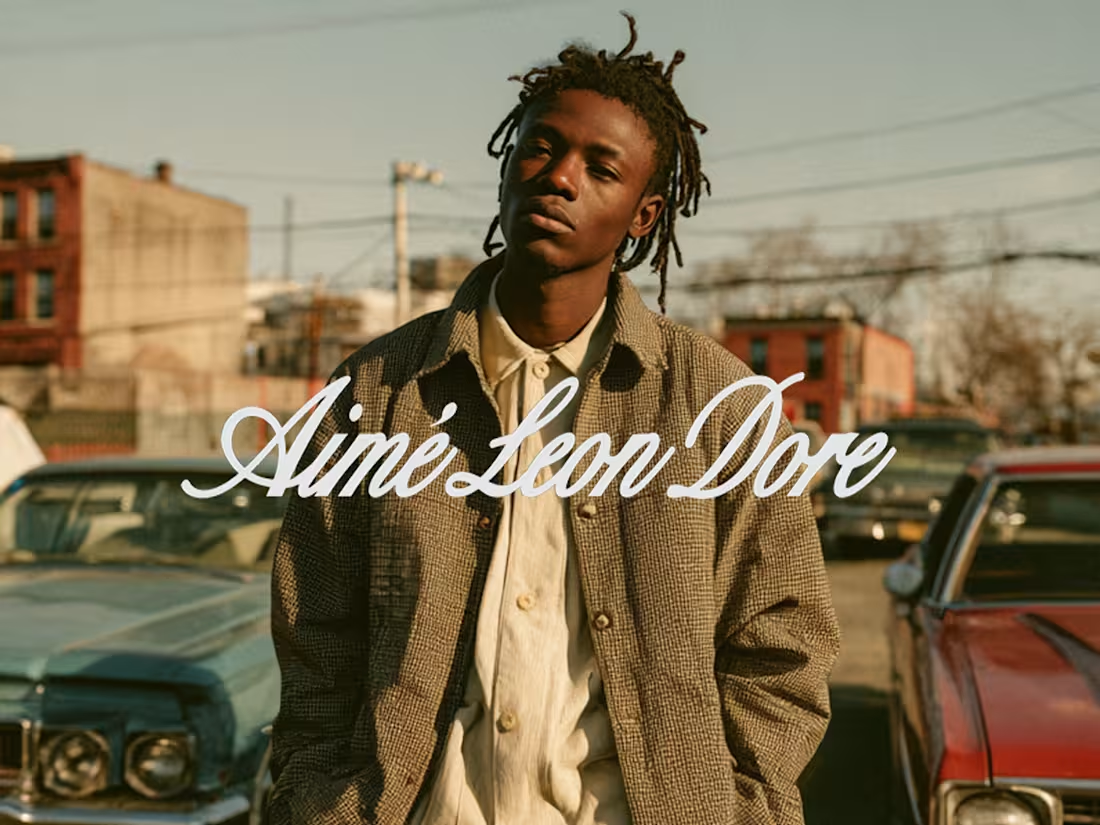

Campaign Concepts in Midjourney: Fashion & Streetwear


Photoshoot and Videos with AI


3D Motion Renders


FOOH Campaign with 3D Elements
