PMF Brainstormer: AI Automation System in n8n

Overview

PMF Brainstormer is a backend automation project built in n8n for idea validation. A user submits a product idea through an API. The workflow then runs market research with Exa, pushes that context through five structured Gemini analysis stages, stores the full execution trail in Postgres, and exposes operator-facing state in Airtable.

This was not designed as a one-off AI demo. The goal was to build a system that behaves like a real delivery artifact: modular, inspectable, and resilient when external services return imperfect data.

The Problem

Most AI workflow demos look good on the happy path but fall apart when you ask harder engineering questions:

What happens when the model returns malformed JSON?

How do you inspect partial failures after the run is over?

How do you let a non-engineering operator review or override a decision?

How do you change prompts at runtime without rewriting the workflow?

I wanted to build a workflow that answered those questions clearly.

The Solution

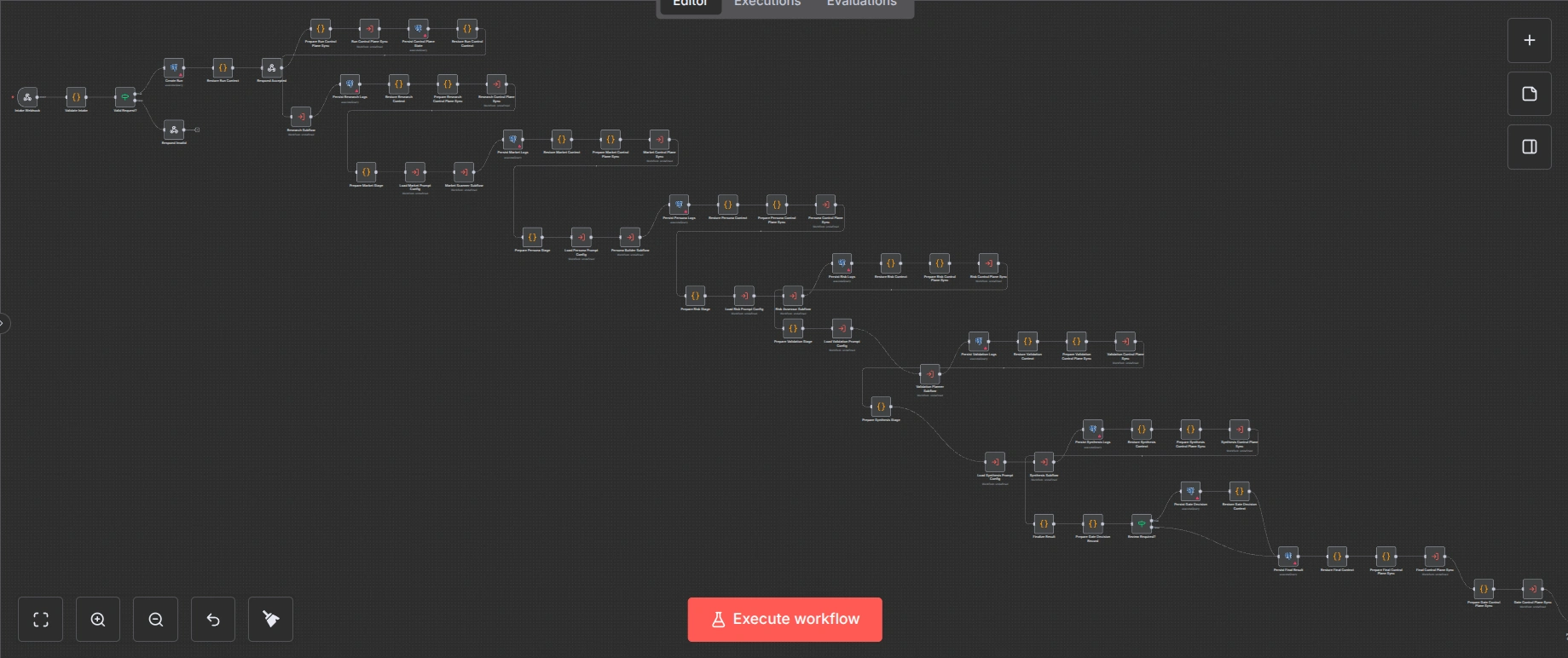

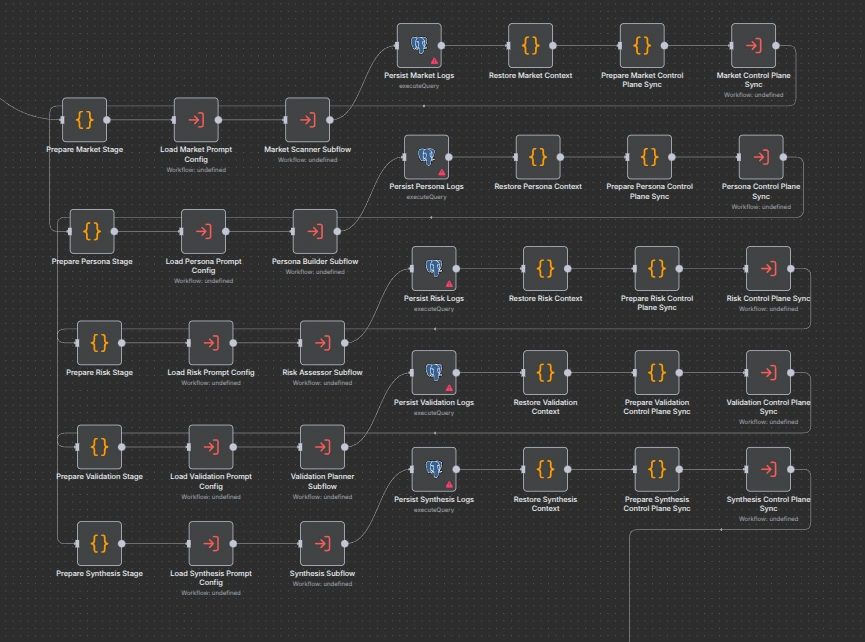

I designed the system around four entry workflows and three reusable subflows.

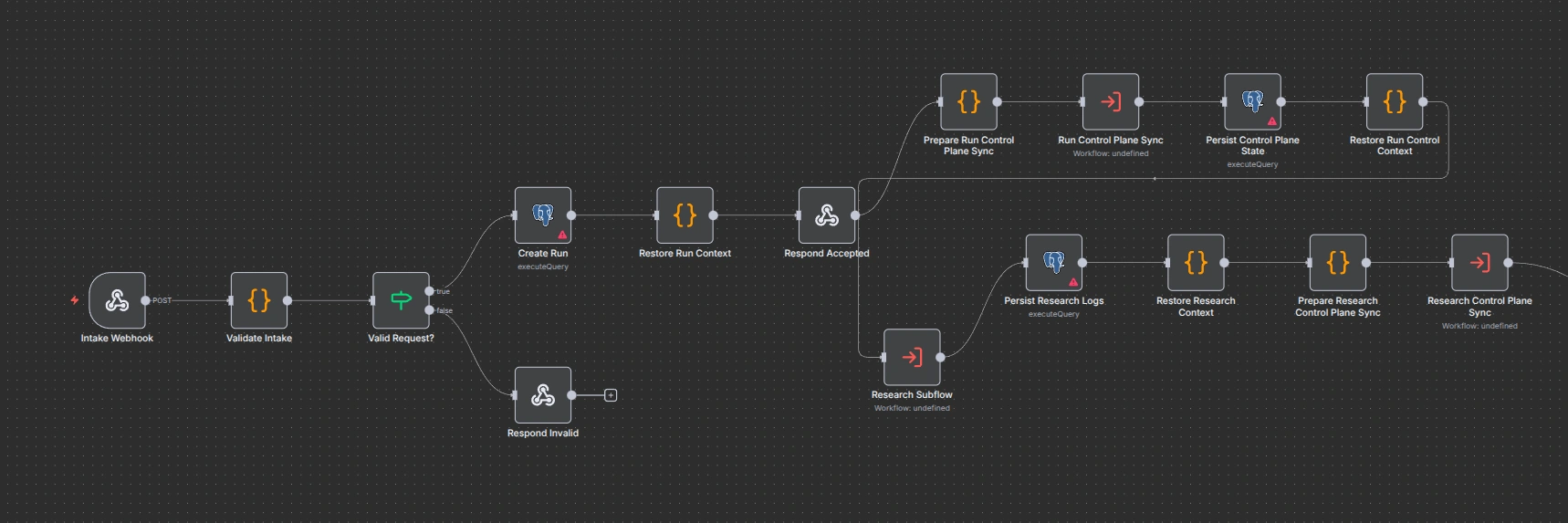

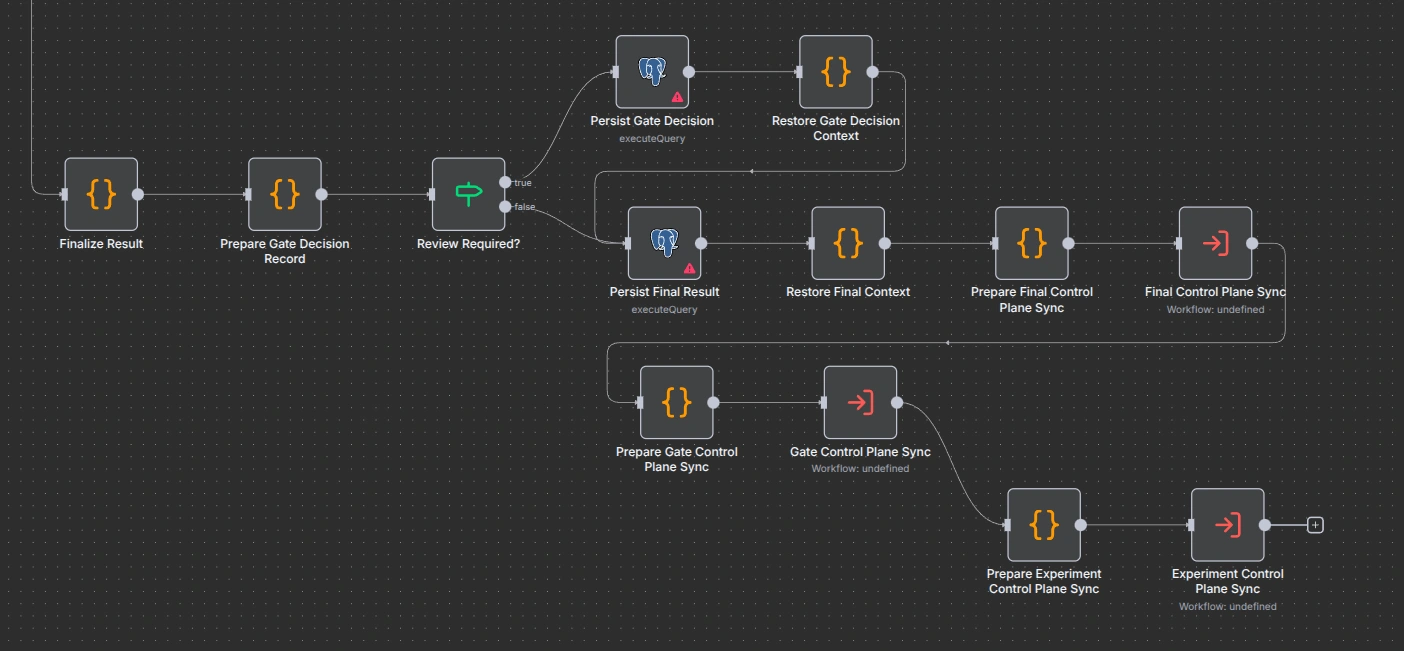

The public API accepts a request and immediately returns 202, so the long-running analysis does not block the caller. A separate status endpoint lets the client poll the current run. If the final synthesis decides the result needs human review, the workflow moves the run into awaiting_review instead of pretending it is safe to auto-complete.

Under the hood, the workflow runs three parallel Exa searches first, then executes five sequential Gemini stages:

market_scanner

persona_builder

risk_assessor

validation_planner

synthesis

Each stage uses structured JSON output, deterministic validation, retry logic, and a fallback path that preserves warnings instead of losing state.
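To make the per-stage behavior concrete, here is a minimal Python sketch of the validate, retry, and fallback pattern described above. It is not the n8n subflow itself. The required keys, the `call_model` callable, the attempt limit, and the shape of the fallback payload are all assumptions made for illustration; the actual stage schemas are not shown in this write-up.

```python
import json
from typing import Callable

# Hypothetical required keys; the real stage schemas are not given in the write-up.
REQUIRED_KEYS = {"summary", "confidence"}


def validate(raw: str) -> dict:
    """Parse model output and check it deterministically; raise ValueError if invalid."""
    try:
        payload = json.loads(raw)
    except json.JSONDecodeError as exc:
        raise ValueError(f"malformed JSON: {exc.msg}") from exc
    if not isinstance(payload, dict):
        raise ValueError("top-level value is not an object")
    missing = REQUIRED_KEYS - set(payload)
    if missing:
        raise ValueError(f"missing keys: {sorted(missing)}")
    return payload


def run_stage(name: str, call_model: Callable[[int], str], max_attempts: int = 3) -> dict:
    """Call the model up to max_attempts times; return fallback data if every attempt fails."""
    warnings = []
    for attempt in range(1, max_attempts + 1):
        try:
            data = validate(call_model(attempt))
            return {"stage": name, "status": "ok", "attempts": attempt,
                    "data": data, "warnings": warnings}
        except ValueError as exc:
            # Keep the reason for each failed attempt instead of discarding it.
            warnings.append(f"attempt {attempt}: {exc}")
    # Assumed fallback shape: same keys as a valid payload, with neutral values.
    return {"stage": name, "status": "fallback", "attempts": max_attempts,
            "data": {"summary": None, "confidence": 0.0}, "warnings": warnings}


# Usage with a stubbed model: two invalid replies, then a valid one.
replies = iter(["not json", '{"summary": "ok"}',
                '{"summary": "niche B2B tool", "confidence": 0.7}'])
result = run_stage("market_scanner", lambda attempt: next(replies))
print(result["status"], result["attempts"], len(result["warnings"]))
```

Because the fallback result has the same keys as a valid one, a downstream stage can consume it without special-casing, while the warnings record why earlier attempts were rejected.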

What I Built

Async webhook intake with a separate polling endpoint

Parallel research collection before AI synthesis

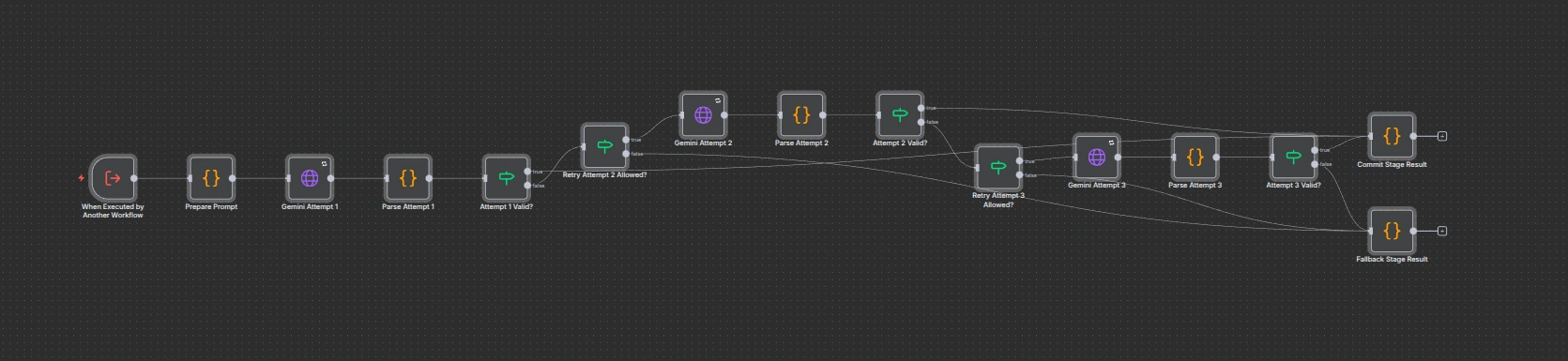

A reusable Gemini stage subflow shared across five stages

Schema validation and retry logic for LLM output

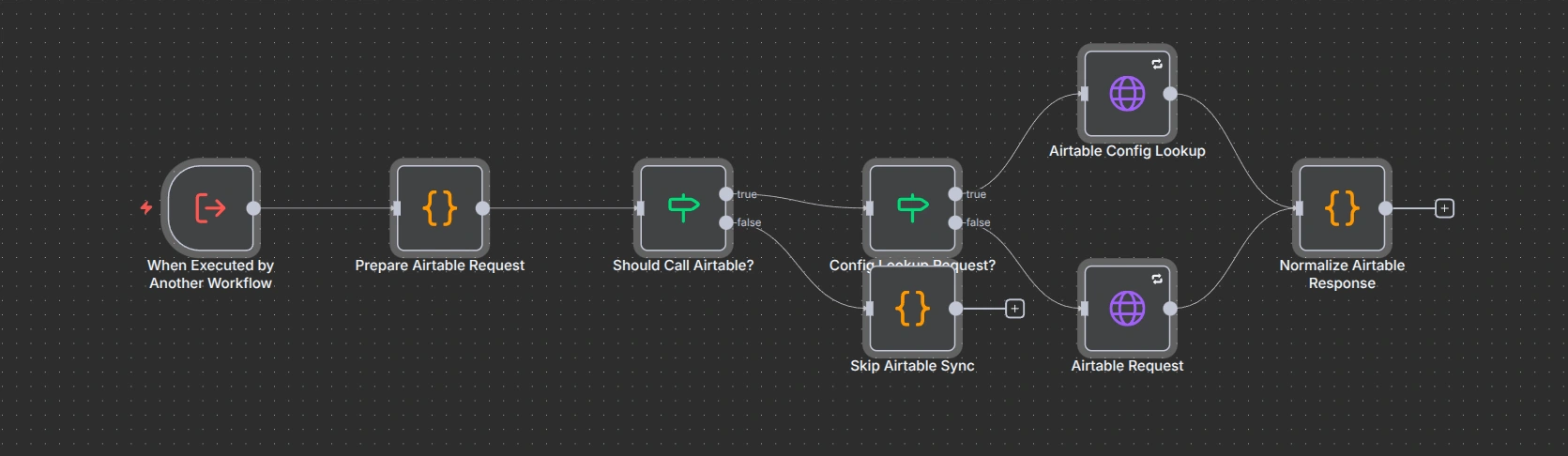

Postgres persistence for runs, stage attempts, and gate decisions

An Airtable control plane for runtime prompt config and operator visibility

A manual review endpoint for gated runs

A dedicated error workflow that marks runs as failed and syncs failure state
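The async intake, polling endpoint, and error handling from the list above can be sketched in plain Python as follows. This is an illustration under assumptions, not the n8n implementation: the in-memory `RUNS` dict stands in for the Postgres runs table, the `queued` and `running` states and the response shapes are hypothetical, and `analyze` is a placeholder for the research and Gemini pipeline.

```python
import threading
import uuid
from typing import Callable

RUNS: dict[str, dict] = {}  # stand-in for the Postgres runs table (assumption)
LOCK = threading.Lock()


def _work(run_id: str, idea: str, analyze: Callable[[str], dict]) -> None:
    """Run the long analysis in the background and record the outcome."""
    with LOCK:
        RUNS[run_id]["status"] = "running"  # hypothetical intermediate state
    try:
        update = {"status": "completed", "result": analyze(idea)}
    except Exception as exc:
        # Plays the role of the error workflow: mark the run failed, keep the reason.
        update = {"status": "failed", "error": str(exc)}
    with LOCK:
        RUNS[run_id].update(update)


def submit(idea: str, analyze: Callable[[str], dict]) -> tuple[int, dict, threading.Thread]:
    """Intake: register the run, start the work, and answer 202 immediately."""
    run_id = str(uuid.uuid4())
    with LOCK:
        RUNS[run_id] = {"status": "queued"}  # hypothetical initial state
    worker = threading.Thread(target=_work, args=(run_id, idea, analyze), daemon=True)
    worker.start()
    # The thread is returned only so that callers and tests can wait on it.
    return 202, {"run_id": run_id}, worker


def get_status(run_id: str) -> tuple[int, dict]:
    """Polling endpoint: return a snapshot of the run, or 404 if it is unknown."""
    with LOCK:
        run = RUNS.get(run_id)
        if run is None:
            return 404, {"error": "unknown run"}
        return 200, dict(run)
```

The caller only ever waits for the intake response. Everything slow happens after the 202 has been returned, and a failure inside the analysis surfaces as a `failed` run on the next poll rather than as a dropped request.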

Key Engineering Decisions

1. Modular workflow boundaries

Instead of building one oversized n8n canvas, I split the system into entry workflows and subflows. That made the architecture easier to reason about, easier to reuse, and much easier to present.

2. Reliability over AI optimism

The system does not assume “structured output” means valid output. Gemini is asked to return schema-constrained JSON, but the workflow still parses and validates the payload itself. Invalid responses retry. If retries fail, the stage returns explicit fallback data with warnings so downstream stages can still behave predictably.

3. Operator-facing control plane

Postgres acts as the durable execution ledger, while Airtable gives operators a lighter-weight interface for prompt configs, review gates, stage attempts, and experiment tracking. This separation kept the system auditable for engineering use while still being manageable for non-technical stakeholders.

4. Explicit degraded states

One of the most important parts of this build was making failure visible. Runs can end as completed, completed_with_warnings, awaiting_review, rejected, needs_changes, or failed. That makes edge cases inspectable instead of invisible.

Outcome

The final result is a portfolio-grade automation system that demonstrates much more than prompt chaining. It shows how to build AI workflows that are modular, testable, and operationally legible.

It also created a strong case study artifact because the interesting work is visible in the architecture itself: async APIs, reusable subflows, validation layers, control-plane state, review gates, and audit logs.
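As one illustration of how review gates and control-plane state fit together, the run states listed under "Explicit degraded states" could be resolved along these lines. The status names come from this write-up. The precedence order, the function signature, and the review decision values (`approve`, `reject`, `needs_changes`) are assumptions for the sketch, not a description of the actual workflow.

```python
from enum import Enum
from typing import Optional


class RunStatus(str, Enum):
    COMPLETED = "completed"
    COMPLETED_WITH_WARNINGS = "completed_with_warnings"
    AWAITING_REVIEW = "awaiting_review"
    REJECTED = "rejected"
    NEEDS_CHANGES = "needs_changes"
    FAILED = "failed"


# Hypothetical operator decisions; the real review endpoint's values are not documented here.
DECISIONS = {"approve", "reject", "needs_changes"}


def resolve_status(warnings: list, needs_review: bool,
                   review_decision: Optional[str] = None,
                   errored: bool = False) -> RunStatus:
    """Map run facts to one explicit status (assumed precedence: error, review, warnings)."""
    if review_decision is not None and review_decision not in DECISIONS:
        raise ValueError(f"unknown review decision: {review_decision!r}")
    if errored:
        return RunStatus.FAILED
    if needs_review:
        if review_decision is None:
            return RunStatus.AWAITING_REVIEW
        if review_decision == "reject":
            return RunStatus.REJECTED
        if review_decision == "needs_changes":
            return RunStatus.NEEDS_CHANGES
        # "approve" falls through to the normal completion rules below.
    if warnings:
        return RunStatus.COMPLETED_WITH_WARNINGS
    return RunStatus.COMPLETED
```

Keeping this mapping in one place means a run that completed on fallback data is never reported as a clean success, and a gated run cannot reach a completed state without an explicit decision.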

Project Gallery

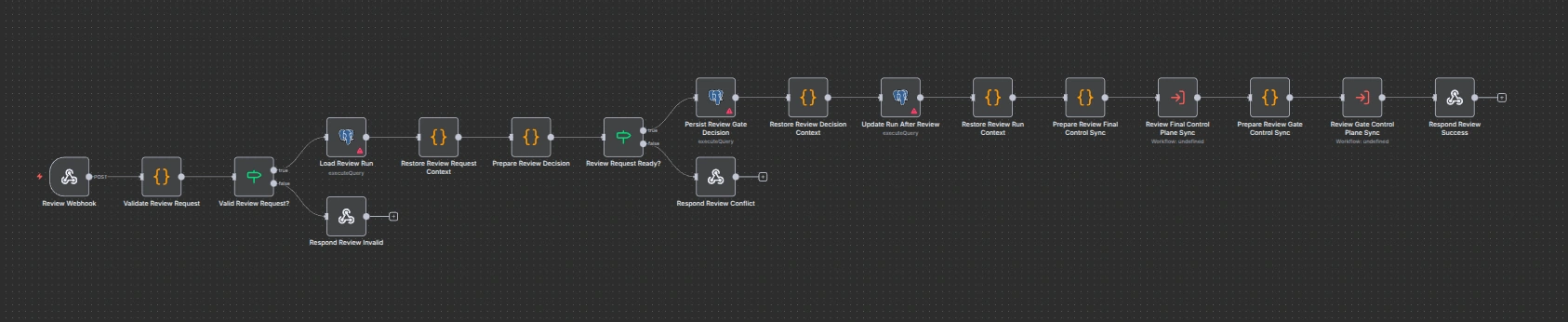

Full orchestration overview

Async intake and parallel research

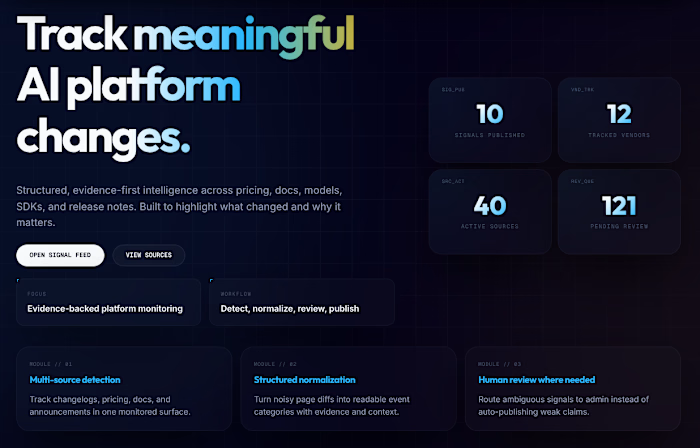

Five structured AI stages with runtime config loading

Review gate and final persistence

Gemini retry + validation subflow

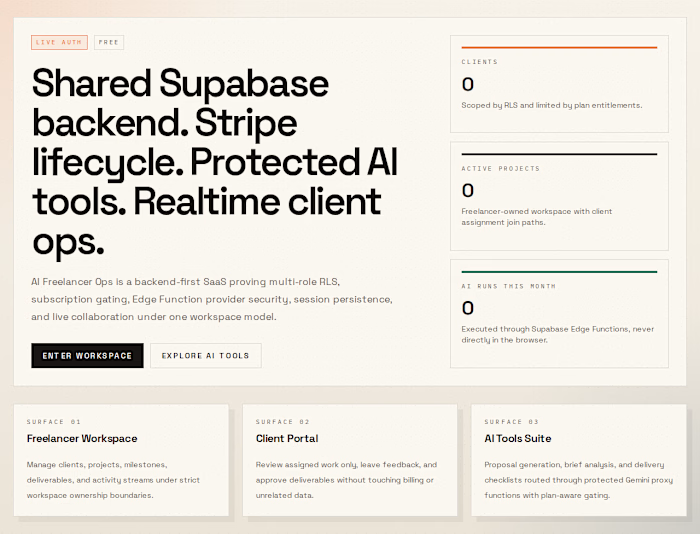

Airtable control plane

Manual review endpoint


Posted Mar 17, 2026

Backend n8n AI automation showing async APIs, staged LLM analysis, runtime config, and explicit failure handling.