Review-First Monitoring for AI Platform Changes

Case Study: AI Launch Monitor (Contra Portfolio)

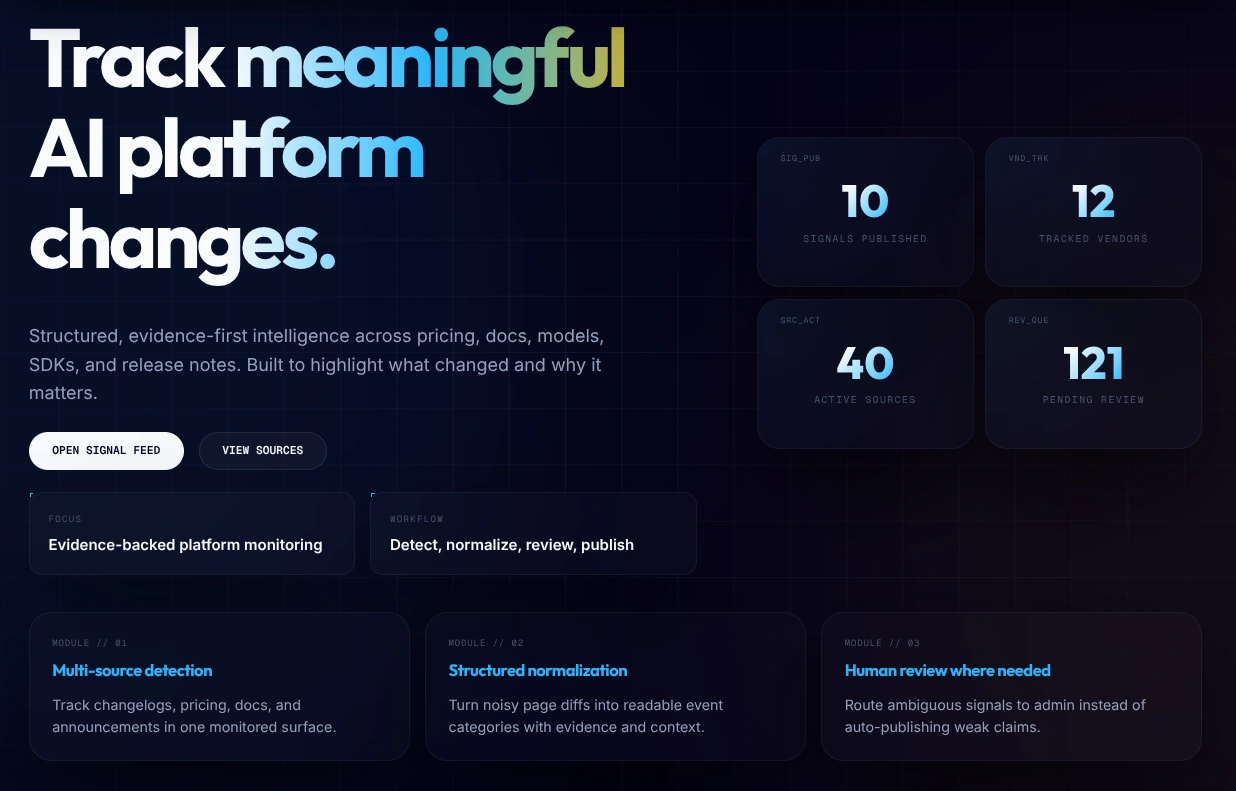

The Project: An automated intelligence layer designed to track and normalize rapid changes across the AI ecosystem.

The Mission: Bridge the gap between messy public data and structured, actionable developer intelligence.

GitHub Repo: HERE

1. The Challenge: Monitoring a Moving Target

Major AI vendors such as OpenAI, Anthropic, and Google ship changes quickly. Critical updates, ranging from subtle pricing shifts to stealth model launches, are rarely announced in a single, machine-readable location. Instead, they are scattered across changelogs, pricing tables, and documentation pages.

Manual monitoring is inefficient and error-prone. I built AI Launch Monitor to solve this by autonomously transforming unstructured web data into a high-confidence signal feed.

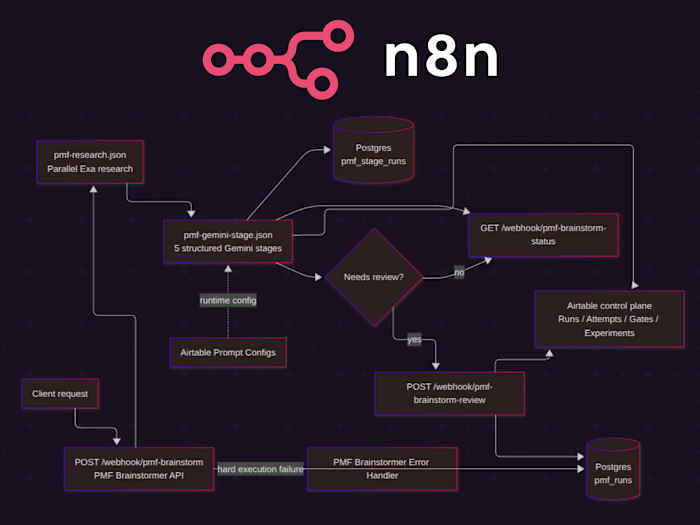

*Figure 1: The Ingestion Pipeline, a refinery that transforms raw HTML into verified intelligence signals.*

2. The Implementation: Intelligent Data Refining

Rather than relying on generic web scraping, I engineered a multi-stage pipeline that prioritizes precision and reasoning.

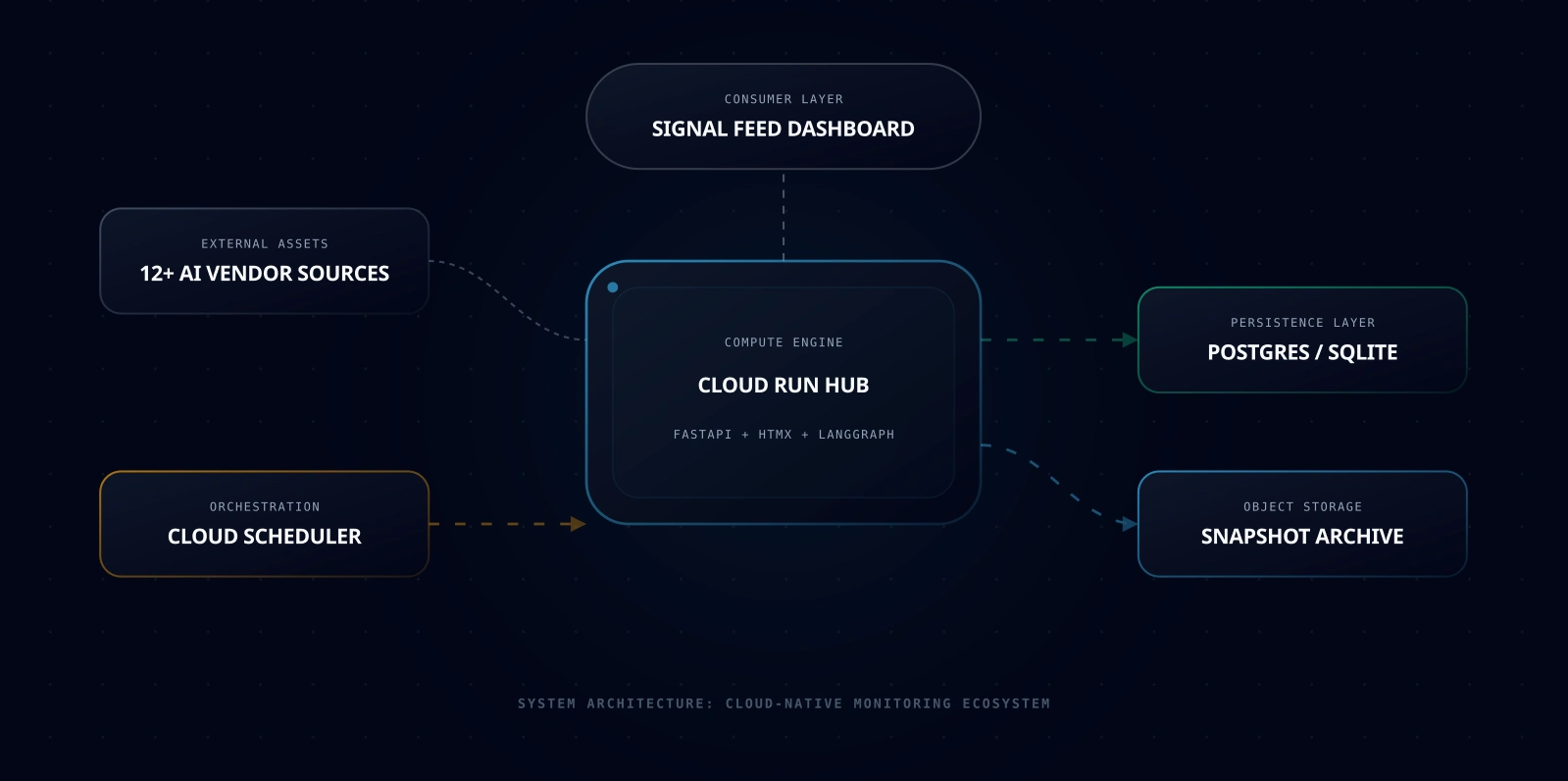

Robust Ingestion: Built using Python and HTTPX, the system performs asynchronous polling of 12+ major AI vendors. It doesn't just "scrape"; it captures periodic snapshots to create a historical archive of truth.
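As a rough illustration of the snapshot idea, the sketch below polls a list of pages concurrently and stores each response with a timestamp and a content digest. It is not the project's actual code: the `Snapshot` record, `capture`, and `poll_once` are hypothetical names, and the fetcher is injectable so the logic can be exercised without network access. Only `fetch_with_httpx` touches HTTPX, using `httpx.AsyncClient`.

```python
import asyncio
import hashlib
from dataclasses import dataclass
from datetime import datetime, timezone
from typing import Awaitable, Callable, List

# A fetcher takes a URL and returns the page body (hypothetical interface).
Fetcher = Callable[[str], Awaitable[str]]


@dataclass(frozen=True)
class Snapshot:
    url: str
    fetched_at: str  # ISO-8601 UTC timestamp of the capture
    sha256: str      # digest of the captured body
    body: str


async def fetch_with_httpx(url: str) -> str:
    """Fetch one page with HTTPX (imported lazily so the sketch loads without it)."""
    import httpx

    # One client per request keeps the sketch short; a shared client is more efficient.
    async with httpx.AsyncClient(timeout=10.0, follow_redirects=True) as client:
        response = await client.get(url)
        response.raise_for_status()
        return response.text


async def capture(url: str, fetch: Fetcher) -> Snapshot:
    """Fetch a page and wrap it in an immutable snapshot record."""
    body = await fetch(url)
    return Snapshot(
        url=url,
        fetched_at=datetime.now(timezone.utc).isoformat(),
        sha256=hashlib.sha256(body.encode("utf-8")).hexdigest(),
        body=body,
    )


async def poll_once(urls: List[str], fetch: Fetcher = fetch_with_httpx) -> List[Snapshot]:
    """Capture all sources concurrently; a failing source is skipped, not fatal."""
    results = await asyncio.gather(
        *(capture(url, fetch) for url in urls), return_exceptions=True
    )
    return [result for result in results if isinstance(result, Snapshot)]
```

Calling `asyncio.run(poll_once([...]))` on a schedule and appending the results to storage would give the historical archive described above. Storage and scheduling are left out here because the write-up does not specify them.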

The Reasoning Layer: I implemented LangGraph to handle the heavy lifting of classification. The system analyzes raw text diffs to determine the intent of a change—automatically distinguishing between a minor documentation fix and a major pricing overhaul.
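To make the diff-then-classify step concrete, here is a minimal sketch that uses only the standard library. It is a deliberately simplified stand-in: a keyword classifier takes the place of the LangGraph reasoning layer, and the category names and regular expressions are assumptions chosen for illustration, not the project's real taxonomy.

```python
import difflib
import re
from itertools import islice
from typing import List

# Illustrative patterns only; a production classifier would be far richer.
PRICE_PATTERN = re.compile(r"[$€£]\s?\d|\bper\s+\S+\s+tokens\b|\bpricing\b", re.IGNORECASE)
LAUNCH_PATTERN = re.compile(r"\b(introducing|now available|new model|deprecat\w*)\b", re.IGNORECASE)


def changed_lines(old: str, new: str) -> List[str]:
    """Return the added and removed lines between two snapshots."""
    diff = difflib.unified_diff(old.splitlines(), new.splitlines(), lineterm="", n=0)
    # The first two diff lines are the '---' / '+++' file headers; skip them.
    body = islice(diff, 2, None)
    # Keep '+' and '-' lines; hunk headers start with '@@' and are dropped.
    return [line for line in body if line.startswith(("+", "-"))]


def classify(lines: List[str]) -> str:
    """Map a set of changed lines to a coarse change category."""
    text = "\n".join(line[1:] for line in lines)  # strip the +/- marker
    if not text.strip():
        return "no_change"
    if PRICE_PATTERN.search(text):
        return "pricing_change"
    if LAUNCH_PATTERN.search(text):
        return "model_lifecycle"
    return "docs_edit"


old_page = "model-x input: $2.00 per 1M tokens\nSee the docs for details."
new_page = "model-x input: $1.50 per 1M tokens\nSee the docs for details."
print(classify(changed_lines(old_page, new_page)))  # pricing_change
```

In a graph-based design, a function like `classify` would be one node, with the diff as input state and the category as output. The rule-based version is still useful as a cheap pre-filter or as a baseline to compare model output against.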

Aura-Grade UI: The dashboard was built with FastAPI and HTMX. It follows the "Aura Intelligence" aesthetic: snappy, minimal, and optimized for high-signal clarity.

*Figure 2: System Topology — A containerized, cloud-native architecture designed for scalability and reliability.*

3. The Core Value: Traceable Integrity

The most significant engineering hurdle was making every published signal verifiable. Because LLM output can include hallucinations, AI Launch Monitor is strictly evidence-driven.

Every published signal is linked to an Evidence Chain. Users don't have to take the system's word for it; they can drill down to the exact line of raw source code that triggered the alert, backed by a unique SHA-256 verification hash. This level of traceability is critical for developers who rely on this data for production decision-making.
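One way such an evidence record could work is sketched below, assuming the hash covers the full captured snapshot and the record stores the triggering line verbatim. The `Evidence` fields and the `build_evidence` and `verify` helpers are hypothetical; the write-up does not describe the real schema.

```python
import hashlib
from dataclasses import dataclass


@dataclass(frozen=True)
class Evidence:
    source_url: str
    line_number: int      # 1-based line within the captured snapshot
    line_text: str        # exact raw line that triggered the signal
    snapshot_sha256: str  # digest of the entire snapshot


def sha256_hex(text: str) -> str:
    """Hex-encoded SHA-256 of a UTF-8 string."""
    return hashlib.sha256(text.encode("utf-8")).hexdigest()


def build_evidence(source_url: str, snapshot: str, line_number: int) -> Evidence:
    """Pin a signal to one line of a snapshot and to the snapshot's digest."""
    lines = snapshot.splitlines()
    if not 1 <= line_number <= len(lines):
        raise ValueError("line_number is outside the snapshot")
    return Evidence(source_url, line_number, lines[line_number - 1], sha256_hex(snapshot))


def verify(evidence: Evidence, snapshot: str) -> bool:
    """Check that an archived snapshot still matches the evidence record."""
    if sha256_hex(snapshot) != evidence.snapshot_sha256:
        return False  # snapshot was altered or is not the one cited
    lines = snapshot.splitlines()
    return (
        1 <= evidence.line_number <= len(lines)
        and lines[evidence.line_number - 1] == evidence.line_text
    )


snapshot = "<h1>Pricing</h1>\n<td>$1.50</td>\n"
evidence = build_evidence("https://vendor.example/pricing", snapshot, 2)
print(verify(evidence, snapshot))                          # True
print(verify(evidence, snapshot.replace("1.50", "2.00")))  # False
```

Hashing the whole snapshot rather than the single line means any later edit to the archived copy is detectable, while the stored line text lets a reader jump straight to the change.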

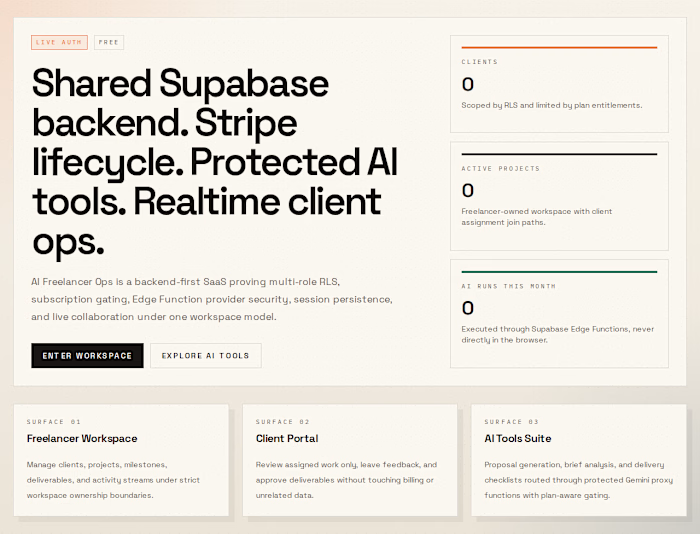

*Figure 3: End-to-End Traceability — Connecting a public notification directly back to its source-level proof.*

Key Outcomes

I successfully delivered a production-ready monitoring platform that balances sophisticated data engineering with a refined user experience.

12+ AI Vendors monitored automatically with tiered depth.

Intelligent Noise Filtering: Only meaningful changes reach the public feed.

100% Verifiable Data: Every update is supported by a raw HTML diff and a provenance log.

Posted Mar 29, 2026

Built a review-first monitoring product that tracks AI vendor docs, pricing, SDK, and release-note changes and turns them into structured signals.