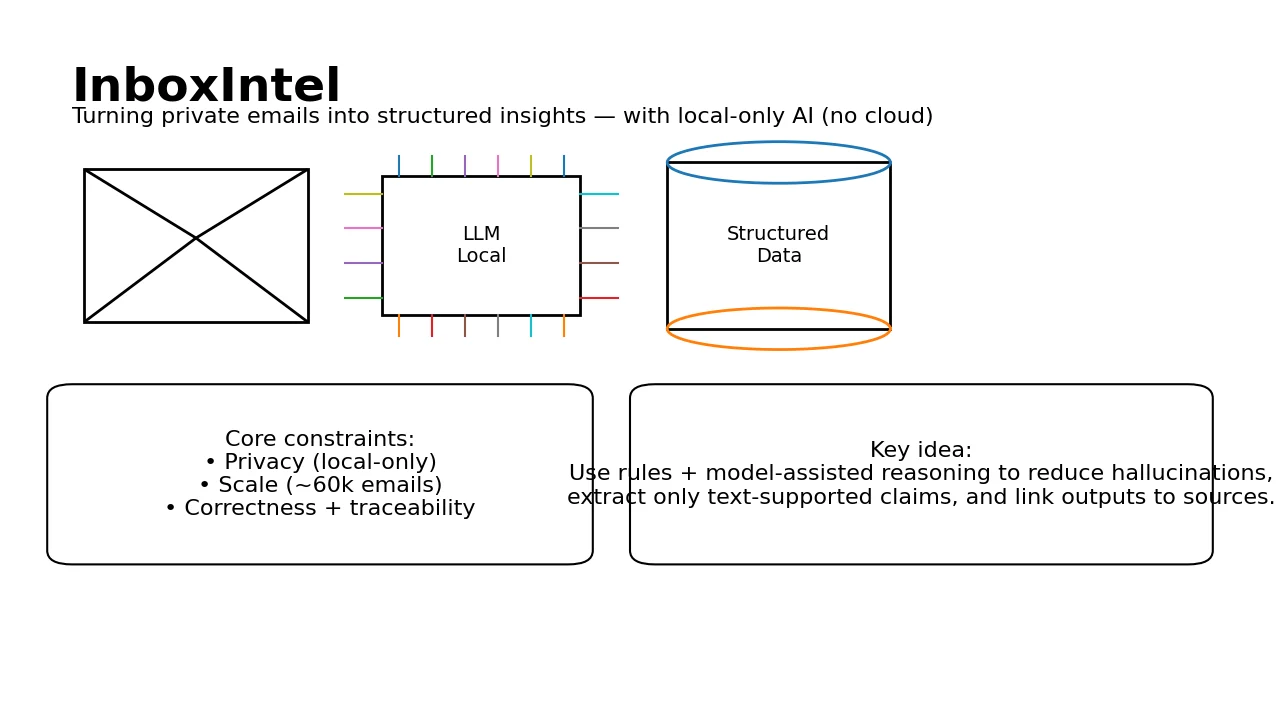

Email Archive Data Structuring with InboxIntel

Problem

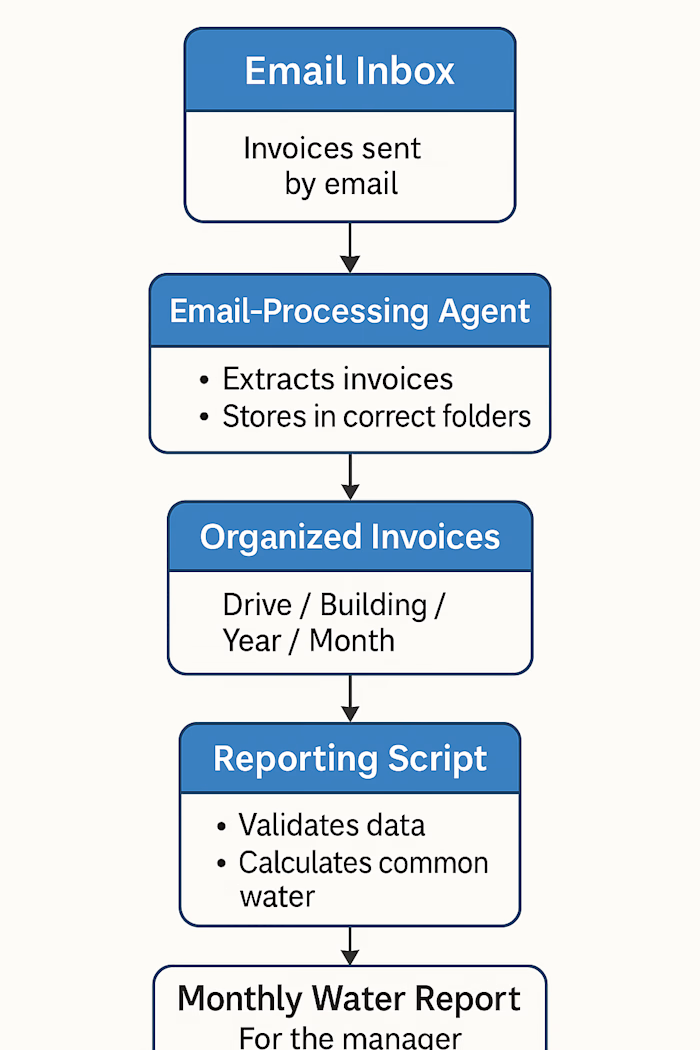

InboxIntel was built to help a client convert a very large, highly sensitive email archive into structured data for analysis without sending a single byte to the cloud. The client had tens of thousands of emails containing project decisions, deliverables, and payment-related conversations, but manual review was slow, error-prone, and impossible to scale. Privacy constraints ruled out external APIs, and the client also needed audit-grade traceability from every extracted data point back to the original email text.

Solution

We delivered a local-only proof-of-concept pipeline that processes Thunderbird-exported emails at scale and turns them into structured, analyzable records. The system combines deterministic rules with model-assisted reasoning to reduce hallucinations and keep outputs consistent. Core components:

Email parsing & normalization from Thunderbird exports, designed to cope with messy real-world formatting (forwarded blocks, inconsistent structure, attachments).

Business vs non-business filtering using a hybrid approach (rules + a fine-tuned BERT-based classifier) optimized to avoid missing business-relevant emails.

Claim extraction with strict grounding: information is extracted only when explicitly supported by the email text, and each claim is linked back to its source for auditability.

Milestone/payment-signal detection via rule-based logic to identify emails tied to milestone completion and payment requests.

Local AI execution using a locally installed Llama-3-8B-Instruct model to keep all processing on the client machine.
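As an illustration of the parsing and normalization step, here is a minimal sketch using only the Python standard library. It assumes the Thunderbird export is an mbox file; the function names (`parse_message`, `load_mbox`), the record fields, and the forwarded-block marker pattern are hypothetical choices for this example, not the pipeline's actual code.

```python
import mailbox
import re
from email import message_from_bytes, policy

# Hypothetical marker pattern: real forwarded/quoted blocks vary by mail client.
FORWARD_MARKER = re.compile(
    r"^-{2,}[ \t]*(?:Original|Forwarded) Message[ \t]*-{2,}[ \t]*$",
    re.IGNORECASE | re.MULTILINE,
)


def parse_message(raw: bytes) -> dict:
    """Turn one raw RFC 5322 message into a flat, normalized record."""
    msg = message_from_bytes(raw, policy=policy.default)

    # Prefer the text/plain body; fall back to an empty string if there is none.
    body_part = msg.get_body(preferencelist=("plain",))
    body = body_part.get_content() if body_part is not None else ""
    body = body.replace("\r\n", "\n").strip()

    # Separate the sender's own text from a forwarded block, if one is present.
    match = FORWARD_MARKER.search(body)
    own_text = body[: match.start()].rstrip() if match else body
    forwarded = body[match.end():].strip() if match else ""

    return {
        "message_id": str(msg.get("Message-ID", "")).strip(),
        "subject": str(msg.get("Subject", "")),
        "from": str(msg.get("From", "")),
        "body": own_text,
        "forwarded": forwarded,
        "attachments": [part.get_filename() for part in msg.iter_attachments()],
    }


def load_mbox(path: str) -> list[dict]:
    """Parse every message in an mbox file (assumed export format)."""
    box = mailbox.mbox(path, create=False)
    try:
        return [parse_message(box.get_bytes(key)) for key in box.iterkeys()]
    finally:
        box.close()
```

Keeping the forwarded block in its own field, rather than discarding it, leaves the original text available for later audit while letting downstream steps focus on what the sender actually wrote.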

Impact

Turned an inbox-sized “dark archive” into structured insights suitable for downstream analysis (projects, deliverables, payment milestones), while keeping confidentiality intact.

Enabled traceable extraction: every output can be audited against the original email content, which is critical for trust and compliance.

Proved a practical pattern for privacy-first AI: rules + local LLM + conservative extraction, rather than “black box automation.”

My role

Designed and implemented the end-to-end PoC pipeline (parsing → filtering → grounded extraction → milestone detection).

Built the privacy-preserving development workflow, which used a small curated subset of masked emails plus synthetic examples for testing so that confidential content was not exposed during iteration.

Tuned the system toward safety and correctness, prioritizing reliable, verifiable extraction over aggressive automation.

Tech highlights

Local LLM: Llama-3-8B-Instruct installed and executed on the client machine (no cloud calls).

Hybrid intelligence: rule-based controls + model assistance to reduce hallucinations and increase consistency.

Classifier: fine-tuned BERT-based model for business relevance filtering, optimized to minimize false negatives for business emails.

Grounded extraction: “extract only if clearly supported” + per-claim traceability back to source email text.

Rule-based milestone detection: deterministic signals for payment/milestone-related messages to support financial/project analysis.
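To make the deterministic milestone and payment signals concrete, here is a minimal rule-based sketch. The categories, the regular expressions, and the combination rule (a milestone mention plus either a completion or a payment-request cue) are hypothetical examples; the actual rules used in the PoC are not described here.

```python
import re

# Hypothetical rule set: each category maps to a handful of illustrative patterns.
RULES = {
    "milestone": [r"\bmilestone\b", r"\bdeliverable\b", r"\bphase\s+\d+\b"],
    "completion": [r"\bcompleted?\b", r"\bdelivered\b", r"\bsigned\s+off\b"],
    "payment_request": [r"\binvoice\b", r"\bpayment\s+(?:is\s+)?due\b", r"\bplease\s+(?:pay|remit)\b"],
}

# Compile one alternation per category so each email is scanned once per category.
COMPILED = {
    name: re.compile("|".join(patterns), re.IGNORECASE)
    for name, patterns in RULES.items()
}


def detect_signals(text: str) -> dict[str, list[str]]:
    """Return the matched phrases per category, so each flag can be traced to text."""
    return {
        name: [m.group(0) for m in pattern.finditer(text)]
        for name, pattern in COMPILED.items()
    }


def is_milestone_payment_email(text: str) -> bool:
    """Flag emails that mention a milestone together with completion or payment cues."""
    signals = detect_signals(text)
    has_milestone = bool(signals["milestone"])
    has_action = bool(signals["completion"]) or bool(signals["payment_request"])
    return has_milestone and has_action
```

Because the rules return the matched phrases rather than just a boolean, a reviewer can see exactly which words triggered a flag.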

Additional Info

Scale & dataset: Phase 1 targeted roughly 60k emails exported from Thunderbird; development used masked + synthetic samples to stay privacy-safe.

Key tradeoff (the honest bit): optimizing for “don’t miss business email” means some non-business noise can remain, and that is by design. It’s safer to review a few extra emails than to silently drop something important.
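One common way to realize this kind of tradeoff is to choose the classifier's decision threshold from a labeled validation set so that recall on business emails stays above a target. The sketch below assumes the classifier emits a business-relevance score per email; `pick_threshold`, the recall target, and the sample numbers are hypothetical and not taken from the project.

```python
def pick_threshold(scores: list[float], labels: list[int], min_recall: float = 0.99) -> float:
    """Return the highest threshold whose recall on business emails (label 1) meets the target."""
    positives = sum(labels)
    if positives == 0:
        raise ValueError("validation set contains no business emails")
    # Walk candidate thresholds from strictest to most permissive.
    for threshold in sorted(set(scores), reverse=True):
        true_positives = sum(
            1 for score, label in zip(scores, labels) if label == 1 and score >= threshold
        )
        if true_positives / positives >= min_recall:
            return threshold
    return min(scores)  # fallback for targets above 1.0; keeps every email


# Made-up validation data: three business emails (1) and two non-business emails (0).
scores = [0.95, 0.80, 0.35, 0.60, 0.10]
labels = [1, 1, 1, 0, 0]

threshold = pick_threshold(scores, labels, min_recall=1.0)
kept_noise = sum(1 for s, y in zip(scores, labels) if y == 0 and s >= threshold)
```

In this made-up data, requiring full recall pushes the threshold down to the lowest-scoring business email, which lets one non-business email through: the residual noise described above.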

Future-ready direction (not implemented in this PoC): richer analytics, thread-level extraction/summarization, and RAG-style retrieval, all still running locally.

Posted Dec 22, 2025

Converted email archive into structured data for analysis locally.
