Global FinTech Development for MishiPay

Building a FinTech — 22+ PSPs, 35+ Brands across 18+ countries

Background

At MishiPay, I worked to redefine the point-of-sale experience by bringing the best of online shopping into the physical store. Its "Total Store" architecture, with solutions like Scan & Go and the Self-checkout Kiosk, enabled shoppers to pick up an item, scan its barcode with their smartphone or at the kiosk, pay instantly, and leave the store, completely bypassing the traditional checkout line.

MishiPay's products cater to both retail enterprises and offline retail shoppers. For the retailer, there is a sophisticated dashboard, theft-prevention integrations, and real-time inventory insights. For the shopper, there is a frictionless, delightful checkout experience. The business sits at the unique intersection where B2B infrastructure meets B2C convenience, requiring a backend that is as robust as a banking system but as agile as a consumer app.

FinTech @ MishiPay

Digital payments are the heartbeat of MishiPay. Without a secure, lightning-fast, and global payment infrastructure, the "Total Store" promise falls apart. That infrastructure handles everything from high-velocity credit card processing and Apple Pay/Google Pay integrations to localized alternative payment methods (APMs) across multiple continents.

Developing such a payment system for MishiPay meant solving some unusual operational and technical challenges:

The Operational Challenges

While code is predictable, the global financial landscape is not. Expanding into dozens of countries meant navigating a fragmented ecosystem of legacy banking habits and rigid regional regulations.

PSPs not compatible with the market

Stripe and Adyen are great PSPs and are available in most regions, but their feature sets differ from region to region, which sometimes rules them out. For example, Stripe payment terminals are not available in the UAE. Similarly, Stripe operates in India but cannot be used for certain use cases where a transaction may fall below its US$0.50 minimum charge threshold.

Market not compatible with PSPs

Then there are markets like the Nordics, where the payment methods locals prefer are available only through local PSPs. For example, Vipps, Swish and MobilePay are available through QuickPay.

Local Laws

Integrations often come with hidden but essential extras in their markets. In Italy, for example, local law requires e-invoicing to be done through government portals in real time.

Such unique overheads arise quite often, and they are never just a matter of APIs or webhooks. Each one requires amending our underlying payment systems and processes, putting our tech through deep inspection, redesign, overhauls, testing, and a production rollout.

The Monopoly Tax

Clients sometimes have exclusivity contracts with their PSPs, and we have to adhere to them and integrate with those PSPs to secure the business. PSPs protected by such contracts usually move very slowly. In one instance, it took eight months of weekly meetings before any integration documentation was made available to us.

Abyss Engineered PSPs

Stripe, Adyen and Razorpay are exemplary, world-class leaders in digital payments. Their SDKs are proven to perform and endure, with documentation so clear that a fifth grader could follow it.

However, not all are the same. In one instance, the documentation was so disconnected from reality that I had to physically visit a PSP's office to sit with their engineering team. I ended up helping them debug their own internal routing logic just to unblock our integration. When the "sandbox" environment doesn't match the "production" environment, the only way forward is hands-on intervention.

The Technical Challenges

Building a unified interface for 22+ different ways of "moving money" is a massive exercise in abstraction and defensive programming.

Integrating distinct systems

Onboarding and maintaining 22+ PSP integrations is hard, complex work; their integration approaches differ like chalk and cheese.

The first pillar of this system was uniformity across the integrations, achieved through abstractions, shared primitives, low-level design and architecture.
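A minimal sketch of that uniformity, assuming an adapter-style design: every PSP implements the same abstract contract, and core code only ever talks to the contract. The names `PaymentRequest`, `PSPAdapter`, `FakePSP` and `ADAPTERS` are hypothetical illustrations, not MishiPay's actual classes.

```python
from abc import ABC, abstractmethod
from dataclasses import dataclass
from decimal import Decimal


@dataclass(frozen=True)
class PaymentRequest:
    """Canonical, PSP-agnostic request used throughout the service."""
    session_id: str
    amount: Decimal   # major units, e.g. Decimal("12.50")
    currency: str     # ISO 4217 code, e.g. "EUR"


@dataclass(frozen=True)
class PaymentResult:
    session_id: str
    psp: str
    status: str       # canonical: "authorised", "declined" or "refunded"
    psp_reference: str


class PSPAdapter(ABC):
    """Uniform contract that every PSP integration implements."""
    name: str

    @abstractmethod
    def authorise(self, req: PaymentRequest) -> PaymentResult: ...

    @abstractmethod
    def refund(self, req: PaymentRequest, psp_reference: str) -> PaymentResult: ...


class FakePSP(PSPAdapter):
    """Hypothetical stand-in: approves anything up to 100 in major units."""
    name = "fakepsp"

    def authorise(self, req: PaymentRequest) -> PaymentResult:
        status = "authorised" if req.amount <= Decimal("100") else "declined"
        return PaymentResult(req.session_id, self.name, status, f"fake-{req.session_id}")

    def refund(self, req: PaymentRequest, psp_reference: str) -> PaymentResult:
        return PaymentResult(req.session_id, self.name, "refunded", psp_reference)


# Registry keyed by PSP name; onboarding a PSP means adding one adapter here.
ADAPTERS: dict[str, PSPAdapter] = {adapter.name: adapter for adapter in [FakePSP()]}


def authorise(psp: str, req: PaymentRequest) -> PaymentResult:
    # Core code only sees the contract, never PSP-specific details.
    return ADAPTERS[psp].authorise(req)
```

With this shape, a PSP-specific quirk stays inside its adapter, and the rest of the service handles every PSP's results through the same canonical statuses.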

Isolation in unity

The payments engine with 22+ PSPs targeted a five-nines (99.999%) uptime SLO, which demanded an approach that goes beyond redundancy. Building on the uniformity across the integrations, only the I/O workload of the transactions was isolated and distributed. This design scaled 1000x better than the traditional client-server model.
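To make the five-nines target concrete, the downtime budget for an availability SLO is simply the period length multiplied by (1 - SLO). This small helper is an illustrative calculation, not part of the original system.

```python
def downtime_budget_minutes(slo: float, period_days: float = 365.0) -> float:
    """Maximum allowed downtime (in minutes) for an availability SLO over a period."""
    total_minutes = period_days * 24 * 60
    return total_minutes * (1 - slo)


for slo in (0.999, 0.9999, 0.99999):
    print(f"{slo:.3%}: {downtime_budget_minutes(slo):.2f} min/year")
```

At 99.999%, the budget is about 5.26 minutes per year, or roughly 26 seconds in a 30-day month, which is why redundancy alone is not enough.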

Decoupling heavily coupled systems

Among the hardest complexities are those of a tightly coupled, heavily interdependent system. In payments, the tightly coupled, interdependent participants include merchants, acquirers, issuers and card networks.

Phygital difficulties

Digital payments in retail-tech also have a physical presence: a phygital payment terminal that accepts tap, swipe and insert. This meant handling more than 10 terminals from different PSPs, each with its own operating principle, such as serial, Bluetooth, Wi-Fi, over-the-internet and more.
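One way to keep terminal logic sane across connection types is to separate "how bytes reach the terminal" from "what a payment conversation looks like". The sketch below assumes that split; `Transport`, `LoopbackTransport`, `Terminal` and the `SALE|...` wire format are all hypothetical, since each real terminal defines its own protocol.

```python
from typing import Protocol


class Transport(Protocol):
    """Anything that can exchange raw frames with a terminal
    (serial port, Bluetooth socket, Wi-Fi/TCP, or a PSP's cloud API)."""

    def exchange(self, frame: bytes) -> bytes: ...


class LoopbackTransport:
    """Hypothetical in-memory transport for tests: always replies with an approval."""

    def exchange(self, frame: bytes) -> bytes:
        return b"APPROVED|" + frame


class Terminal:
    """Payment flow written once, independent of how the terminal is connected."""

    def __init__(self, transport: Transport) -> None:
        self.transport = transport

    def start_payment(self, amount_minor: int, currency: str) -> bool:
        # Hypothetical wire format: "SALE|<amount in minor units>|<currency>"
        frame = f"SALE|{amount_minor}|{currency}".encode()
        reply = self.transport.exchange(frame)
        return reply.startswith(b"APPROVED")
```

Swapping a serial terminal for a cloud-connected one then means writing a new `Transport`, while the payment flow itself stays untouched.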

The Technical Architecture of the FinTech

This was a long journey, documented on my Medium, but here is a rundown of the final production version of the FinTech, which serves 35+ Brands across 18+ countries with 22+ PSP integrations.

Payments System of Microservices

To move away from the bottlenecks of a monolithic architecture, microservices were adopted. The expected benefits were clear: independent scalability, fault isolation, and the ability to deploy updates to a single PSP integration without risking the entire payment flow.

Isolation

Monolithic systems grow fat as the team grows. A fat codebase produces fat PRs, which reduce the credibility of their reviews and the reliability of their deployments.

An isolated microservice, with independence over its database, tech stack and governance, enabled us to think specifically about the payments system and its roadmap.

Control

Because payment systems are sensitive by nature, tighter controls were implemented over changes, PR reviews, deployments and credentials management.

OOP’ing

To cater to 22+ PSP integrations, a curated set of HTTP APIs was defined for every function of the payment system, with a firm shell, a strict core and flexible middleware. The firm shell is our interaction contract (or protocol); the strict core is our structured database modelling with integrity-check layers; and the flexible middleware holds everything that varies across PSPs, such as their endpoints, request bodies and other metadata.
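A minimal sketch of the shell/core/middleware split, under assumed names: `CanonicalPayment` plays the firm shell, `validate` the strict core, and `PSPProfile` the flexible middleware. The PSP names, URLs and body fields are hypothetical placeholders, not real PSP APIs.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class CanonicalPayment:
    """Firm shell: the one request shape our HTTP API accepts for every PSP."""
    session_id: str
    amount_minor: int   # integer minor units, e.g. 1250 == 12.50
    currency: str


def validate(p: CanonicalPayment) -> None:
    """Strict core: integrity checks applied before any PSP is involved."""
    if p.amount_minor <= 0:
        raise ValueError("amount must be positive")
    if len(p.currency) != 3 or not p.currency.isupper():
        raise ValueError("currency must be a 3-letter ISO 4217 code")


@dataclass(frozen=True)
class PSPProfile:
    """Flexible middleware: everything that differs between PSPs."""
    endpoint: str
    build_body: Callable[[CanonicalPayment], dict]


# Hypothetical profiles; real endpoints and field names differ per PSP.
PROFILES = {
    "psp_a": PSPProfile(
        "https://psp-a.example/payments",
        lambda p: {"amount": p.amount_minor, "currency": p.currency.lower(), "ref": p.session_id},
    ),
    "psp_b": PSPProfile(
        "https://psp-b.example/v2/charge",
        lambda p: {"value": {"minor": p.amount_minor, "ccy": p.currency}, "merchantRef": p.session_id},
    ),
}


def prepare(psp: str, p: CanonicalPayment) -> tuple[str, dict]:
    """Validate once in the core, then let the PSP profile shape the outbound call."""
    validate(p)
    profile = PROFILES[psp]
    return profile.endpoint, profile.build_body(p)
```

Adding a PSP then mostly means adding a profile, while the contract and the integrity checks stay fixed.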

MongoDB Database

MongoDB is used as the primary DB for the payment system. The microservice stored every payment session and each of its transaction records in detail, maintaining an event log (or event source) usable for audits, debugging and forward compatibility. Special care and effort went into achieving atomicity, integrity and consistency in a NoSQL database.
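The event-log idea can be sketched in plain Python, assuming a payment session is one document holding an append-only list of transaction records, with the current state derived from the log and an integrity check on every transition. The state names and `TRANSITIONS` table are illustrative assumptions, not MishiPay's actual model.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone

# Allowed state transitions (assumed); anything else is rejected as an integrity violation.
TRANSITIONS = {
    None: {"created"},
    "created": {"authorised", "failed"},
    "authorised": {"captured", "voided"},
    "captured": {"refunded"},
}


@dataclass
class PaymentSession:
    session_id: str
    events: list = field(default_factory=list)  # append-only transaction records

    @property
    def state(self):
        # Current state is derived from the log, never stored separately here.
        return self.events[-1]["type"] if self.events else None

    def append(self, event_type: str, **data) -> dict:
        if event_type not in TRANSITIONS.get(self.state, set()):
            raise ValueError(f"illegal transition {self.state} -> {event_type}")
        event = {
            "seq": len(self.events) + 1,
            "type": event_type,
            "at": datetime.now(timezone.utc),
            **data,
        }
        self.events.append(event)
        return event
```

In MongoDB itself, a single-document update is atomic, so one way to persist such an append is an `update_one` whose filter matches the expected current state and whose update combines `$push` on the events array with `$set` on the state; a concurrent writer that changed the state first simply matches nothing.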

Scaling the FinTech

Scaling a payment system isn't just about handling more users; it's about handling more critical operations where a single failed API hit can mean a lost sale.

Scaling for Usage

To handle 1M+ transactions, the system was moved from managed containers to Kubernetes. Pre-emptive scale-ups for regular, anticipated traffic were managed with Helm, while unforeseen spikes in traffic were autoscaled with KEDA (Kubernetes Event-driven Autoscaling), which let us scale on queue depth rather than only on CPU/memory.
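Scaling on queue depth roughly follows the Kubernetes HPA "average value" rule that KEDA feeds: replicas ≈ ceil(total lag / lag per replica), clamped to configured bounds. The helper below is an approximation that assumes a Kafka-style partitioned topic and ignores HPA tolerance and stabilization windows; `desired_replicas` and its defaults are hypothetical.

```python
import math


def desired_replicas(total_lag: int, lag_threshold: int, partitions: int,
                     min_replicas: int = 1, max_replicas: int = 50) -> int:
    """Approximate replica count a lag-based autoscaler converges to."""
    wanted = math.ceil(total_lag / lag_threshold) if total_lag > 0 else min_replicas
    # In a consumer group, consumers beyond the partition count would sit idle.
    wanted = min(wanted, partitions)
    return max(min_replicas, min(wanted, max_replicas))


print(desired_replicas(total_lag=950, lag_threshold=100, partitions=12))
```

KEDA's Kafka scaler, for instance, exposes a `lagThreshold` setting and by default does not scale beyond the partition count, which is why the partition cap appears in the sketch.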

Scaling for Performance

Fast systems do not necessarily make good products, but good products must be fast. The major performance optimizations were:

Ensured that the query planner uses the optimal index, either by defining compound indexes that match the query shape or by explicitly hinting the index in code

Ordered the filter fields in both the queries and the indexes with the lowest-cardinality fields first

Extended some indexes with every field a query needs, turning those queries into covered queries that skip document lookups

Replaced a group of DB queries with an LRU cache populated at service startup

Merged collections that are frequently queried together, strictly following an extend-only approach to maintain backward compatibility

Co-located chatty services as sidecars in the same Kubernetes pod
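The startup-warmed LRU cache from the list above can be sketched with `collections.OrderedDict`, assuming the cached rows are slow-changing (for example store or PSP configuration) and a database lookup remains the fallback on a miss. `WarmLRUCache` and its `loader` callback are hypothetical names.

```python
from collections import OrderedDict
from typing import Callable, Hashable, Iterable


class WarmLRUCache:
    """Small LRU cache that is pre-populated when the service starts."""

    def __init__(self, capacity: int, loader: Callable[[Hashable], object]) -> None:
        self.capacity = capacity
        self.loader = loader                 # fallback DB query on a cache miss
        self._data: OrderedDict = OrderedDict()

    def warm(self, items: Iterable[tuple]) -> None:
        # Called once at startup with the rows that used to be queried per request.
        for key, value in items:
            self._put(key, value)

    def get(self, key):
        if key in self._data:
            self._data.move_to_end(key)      # mark as most recently used
            return self._data[key]
        value = self.loader(key)             # miss: hit the database once
        self._put(key, value)
        return value

    def _put(self, key, value) -> None:
        self._data[key] = value
        self._data.move_to_end(key)
        if len(self._data) > self.capacity:
            self._data.popitem(last=False)   # evict the least recently used entry
```

Warming at startup moves the query cost out of the request path; the trade-off is staleness, so this suits data that changes rarely or is refreshed on deploy.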

Scaling Observability

A payment system is a many-layered onion to monitor: a transaction can fail at any point across the customer (cardholder), the service provider (us), the merchant (merchant bank), the issuer (cardholder's bank), the acquirer (usually the PSP or the bank backing it) and the card network (e.g. Visa, Mastercard).

We took an aggressive stance to tackle this: dissecting every PSP integration down to low-level code, then placing essential logs and event metrics between each step, gathering as many data points as possible to help diagnose an issue, a payment failure, or an audit mismatch.
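A minimal sketch of placing logs and metrics between integration steps, assuming a decorator wraps each PSP call and emits one structured record with the PSP, step, outcome and latency. `METRICS` is a hypothetical in-process sink standing in for a real metrics client, and `call_authorise` is a made-up example step.

```python
import functools
import logging
import time

log = logging.getLogger("payments.psp")

# Hypothetical in-process sink; a production setup would ship these to a metrics backend.
METRICS: list[dict] = []


def instrumented(psp: str, step: str):
    """Wrap one step of a PSP integration with a structured log line and a metric."""
    def decorator(fn):
        @functools.wraps(fn)
        def wrapper(*args, **kwargs):
            start = time.perf_counter()
            outcome = "ok"
            try:
                return fn(*args, **kwargs)
            except Exception as exc:
                outcome = type(exc).__name__   # record which error, then re-raise
                raise
            finally:
                elapsed_ms = (time.perf_counter() - start) * 1000
                record = {"psp": psp, "step": step, "outcome": outcome, "ms": round(elapsed_ms, 2)}
                METRICS.append(record)
                log.info("psp_step %s", record)
        return wrapper
    return decorator


@instrumented(psp="fakepsp", step="authorise")
def call_authorise(amount_minor: int) -> str:
    return "authorised" if amount_minor < 10_000 else "declined"
```

Tagging every record with the PSP and step makes it easier to tell whether a failure originated with us, the PSP, or further down the chain.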

Scaling Availability

The essentials were put together and refined: an active-passive DR setup, database replicas, and playbooks and pipelines to migrate traffic to the DR setup during an outage, including steps to promote the SQL replicas to primary, and more.

Payments: Event-driven

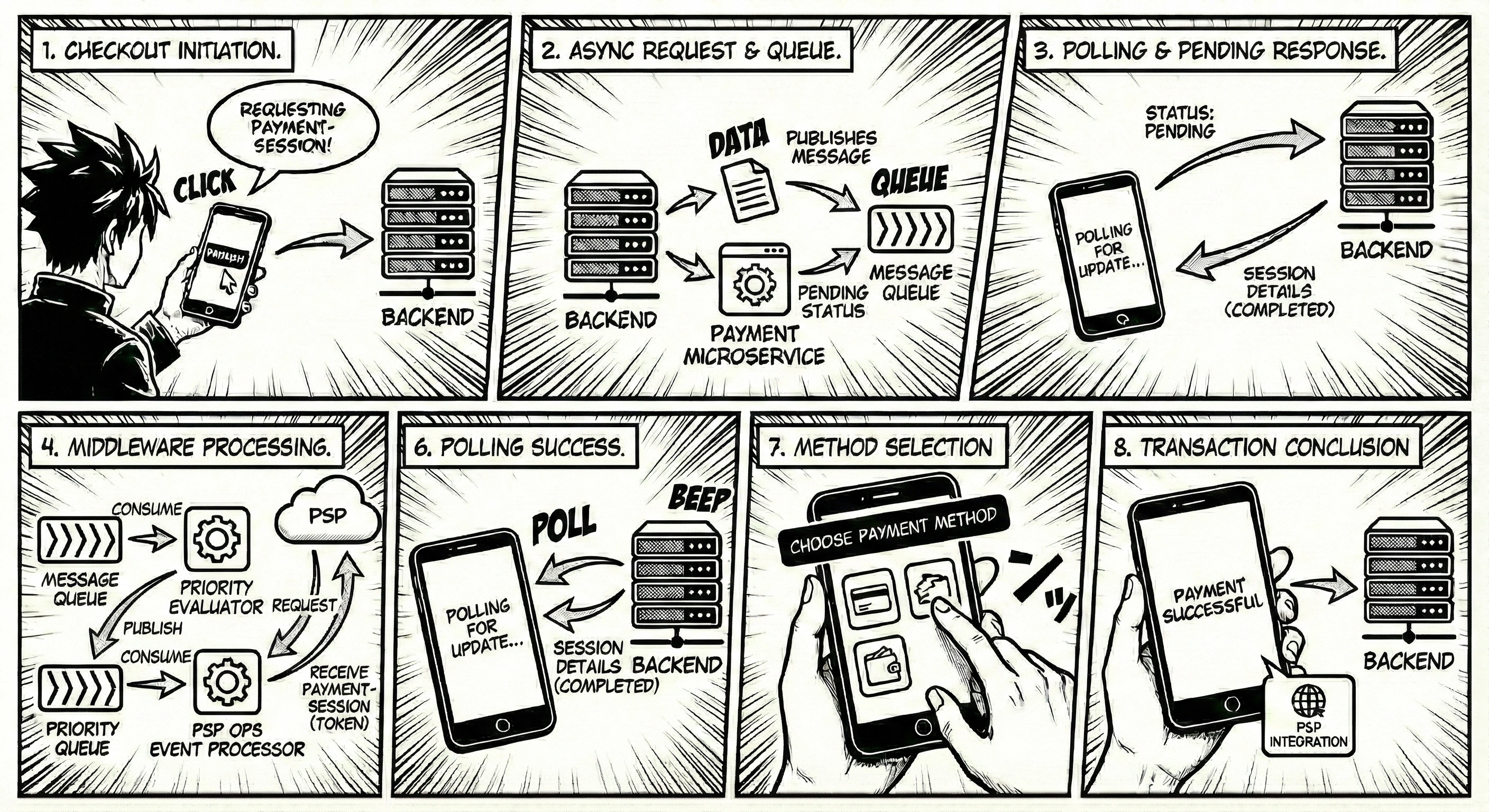

We used an event-driven design to keep all 22+ PSP integrations under one roof, while providing a "fast lane" (priority queue) for high-traffic PSP communications.
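The fast-lane idea can be sketched as a two-lane dispatcher, assuming events carry a `psp` field and a configured set of high-traffic PSPs is routed to the priority lane. `LaneRouter` and `FAST_LANE_PSPS` are hypothetical; this is a strict-priority, in-memory illustration rather than a broker configuration.

```python
from collections import deque

# Hypothetical set of high-traffic PSPs that get the priority lane.
FAST_LANE_PSPS = {"psp_a", "psp_b"}


class LaneRouter:
    """Two-lane dispatcher: fast-lane events are always drained first."""

    def __init__(self, fast_psps: set[str]) -> None:
        self.fast_psps = fast_psps
        self.fast: deque = deque()
        self.standard: deque = deque()

    def publish(self, event: dict) -> str:
        lane = "fast" if event["psp"] in self.fast_psps else "standard"
        (self.fast if lane == "fast" else self.standard).append(event)
        return lane

    def next_event(self):
        # Strict priority: the standard lane is served only when the fast lane is empty.
        if self.fast:
            return self.fast.popleft()
        if self.standard:
            return self.standard.popleft()
        return None
```

Strict priority can starve the standard lane under sustained load; an alternative is to give each lane its own topic and consumer pool, so the fast lane gets dedicated capacity instead of absolute precedence.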

High-performance event-driven design

Event retry mechanisms tailored to each event's nature and failure reason, with jittered exponential backoff to prevent a thundering herd and relieve back pressure

A DLQ (dead-letter queue) setup to handle events that failed during initial processing

Heavy use of StatsD and Datadog, recording producer-side metrics such as batch size, bytes per message, produced bytes per batch, acks per publish, retry rate, in-buffer time and producer latency

Consumer-side metrics such as consume rate, consumed bytes, records per request, commit rate and consumer latency

Overall performance indicators such as record lag and partition lag, both as reported by the broker and as self-calculated (current timestamp minus event creation timestamp)

KEDA-managed autoscaling for the event processors, driven primarily by consumption lag
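The retry and dead-letter behaviour listed above can be sketched as follows, assuming failures carry a reason string, only some reasons are retryable, and the "full jitter" variant of exponential backoff is used (one common choice among several). `RETRYABLE`, `EventFailed` and `process_with_retry` are hypothetical names, and the DLQ is a plain list standing in for a real dead-letter topic.

```python
import random
import time

RETRYABLE = {"timeout", "rate_limited", "psp_unavailable"}  # hypothetical failure reasons


class EventFailed(Exception):
    def __init__(self, reason: str) -> None:
        super().__init__(reason)
        self.reason = reason


def backoff_delay(attempt: int, base: float = 0.2, cap: float = 30.0) -> float:
    """Full-jitter exponential backoff: uniform in [0, min(cap, base * 2**attempt)]."""
    return random.uniform(0, min(cap, base * 2 ** attempt))


def process_with_retry(event, handler, dlq: list, max_attempts: int = 5, sleep=time.sleep):
    for attempt in range(max_attempts):
        try:
            return handler(event)
        except EventFailed as exc:
            # Non-retryable reasons, or the final attempt, go to the dead-letter queue.
            if exc.reason not in RETRYABLE or attempt == max_attempts - 1:
                dlq.append({"event": event, "reason": exc.reason, "attempts": attempt + 1})
                return None
            sleep(backoff_delay(attempt))
```

The random spread keeps many consumers that failed at the same moment from retrying in lockstep, and the `sleep` parameter makes the function testable without real delays.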

Mitigating monstrous risk of cascading failure

The event-driven design decoupled our system from the 22+ PSP systems, protecting it from the monstrous risk of failures in a PSP's system cascading into our own.

If you are interested in building a FinTech yourself, please reach out. I would love to discuss how we can work together.

Posted Jan 20, 2026

Timeline

Jul 1, 2022 - Aug 1, 2023

Clients

MishiPay