HapticAI — The Copilot for Reality

⚡ The TL;DR

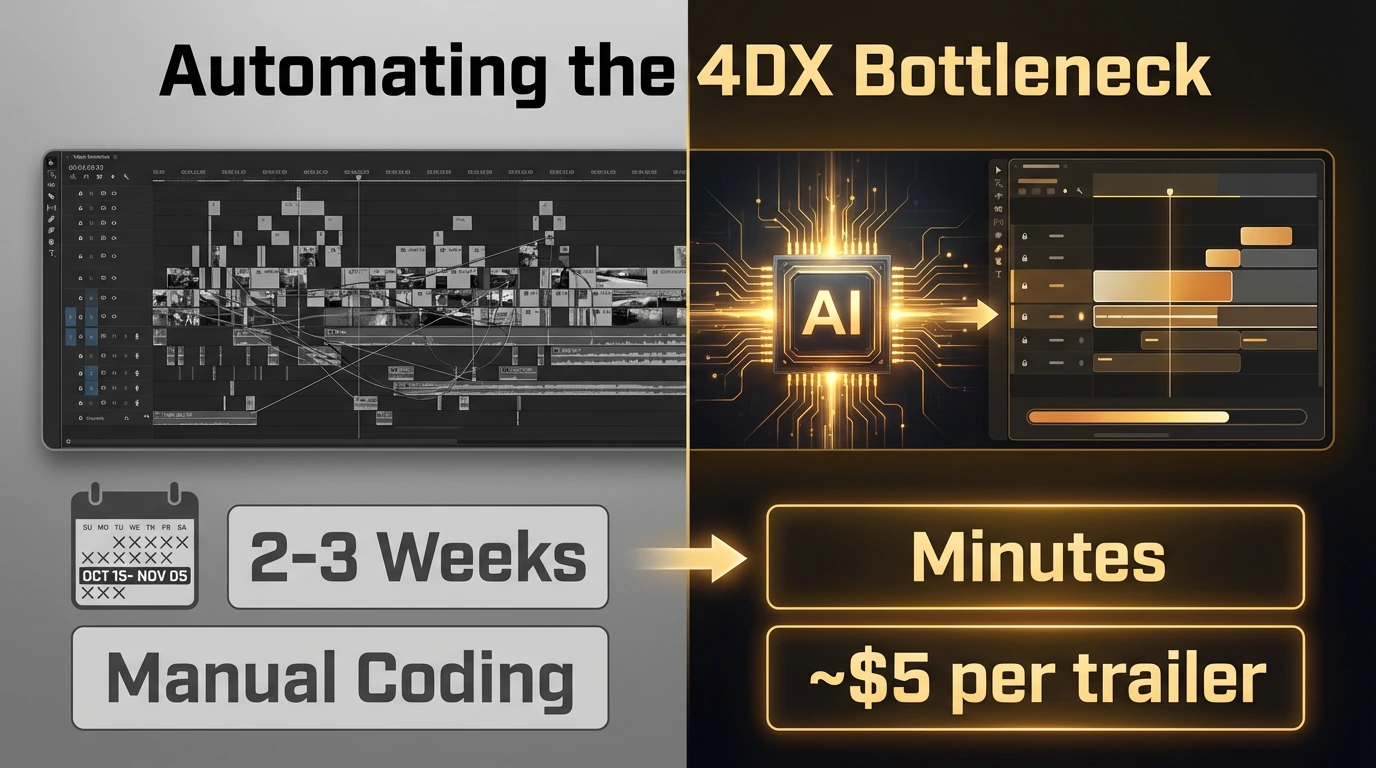

Created as a competitive entry for the Google Gemini 3 API Hackathon, HapticAI is an AI pipeline that bridges the digital and physical worlds. It transforms standard 2D video into immersive, 4DX-ready haptic experiences (seat motion, wind, water, scent) in a matter of minutes. Built on a custom two-pass architecture running Google's latest model, Gemini 3 Pro, HapticAI turns a weeks-long manual authoring process into an automated workflow that costs roughly $5 per movie trailer.

🎬 The Problem: When Hardware Outpaces Content

Modern 4DX cinemas are engineering marvels. With over 780 equipped screens globally, audiences can physically feel a movie through seat tilts, wind gusts, water sprays, and environmental effects.

But there is a massive hidden bottleneck: Content Creation.

Today, every 4DX release requires a specialized team to hand-code thousands of physical effects frame by frame, and programming a single film takes 2 to 3 weeks of manual labor. The hardware is ready, but the authoring process is stuck in the past.

💡 The Solution: AI That Feels the Movie

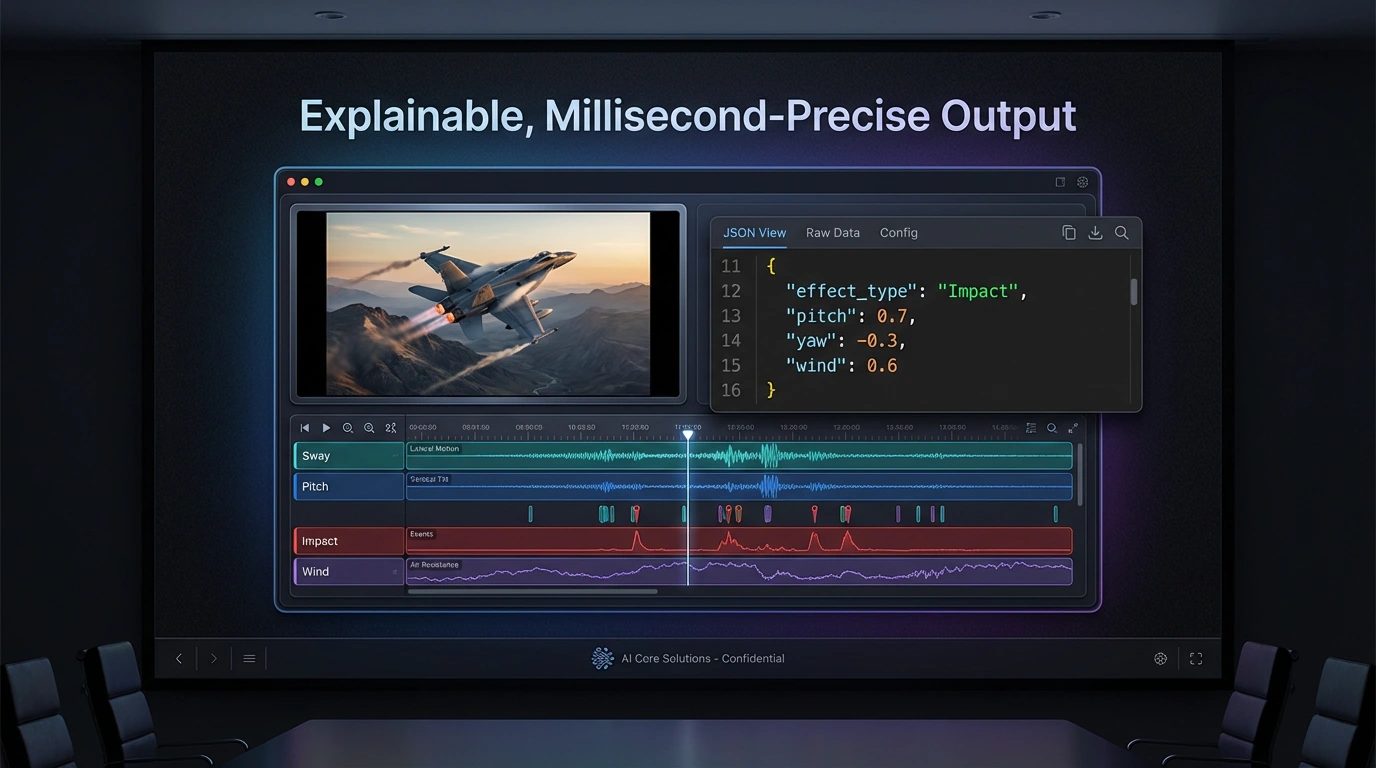

I built HapticAI to completely automate the video-to-motion workflow. Instead of relying on human operators to guess the physics of a scene, HapticAI ingests the native video, understands both the narrative arc and the physical forces at play, and generates a millisecond-precise timeline of haptic events synced perfectly to the action.

Because this was built under the strict time constraints of a hackathon, I focused on rapid execution, tightly optimized prompt engineering, and an architecture that balanced speed with physical accuracy.

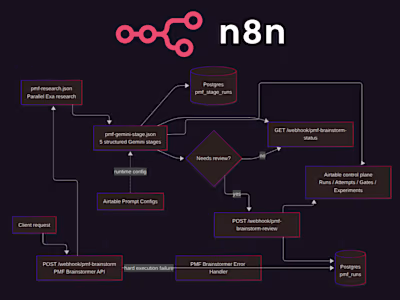

⚙️ Under the Hood: The Two-Pass Architecture

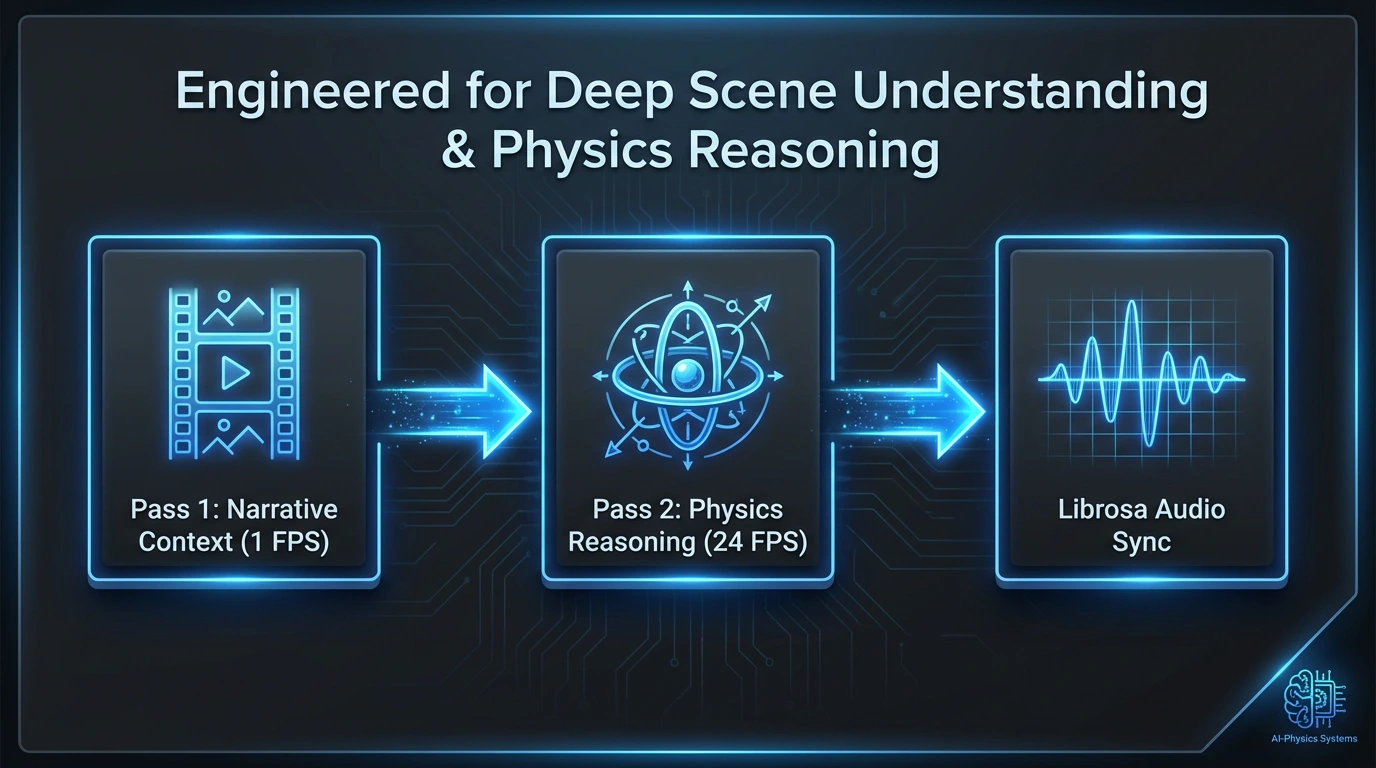

To make sure the AI understood the physical context of each scene instead of just reacting to random flashes on screen, I designed a two-pass pipeline built on Gemini 3 Pro's newest multimodal capabilities:

Pass 1 — Narrative Context (The Big Picture)

The full video is uploaded via the Files API and analyzed at 1 FPS.

Using Gemini 3 Pro with Structured JSON Output and Thinking (low), the AI performs scene segmentation.

It returns timestamped scene boundaries with narrative summaries, giving the model full "story-arc awareness" before it analyzes the micro-details.
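Because Pass 1 uses structured output, its response can be validated before Pass 2 depends on it. The sketch below assumes a hypothetical response shape of `{"scenes": [{"start_s", "end_s", "summary"}]}`. These field names are illustrative, not the project's actual schema. It parses the JSON into typed records and rejects empty or overlapping scenes.

```python
import json
from dataclasses import dataclass


@dataclass(frozen=True)
class Scene:
    start_s: float  # scene start, seconds from the beginning of the video
    end_s: float    # scene end, seconds from the beginning of the video
    summary: str    # narrative summary produced by the model


# Hypothetical response schema (field names are assumptions for illustration).
SCENE_SCHEMA = {
    "type": "object",
    "properties": {
        "scenes": {
            "type": "array",
            "items": {
                "type": "object",
                "properties": {
                    "start_s": {"type": "number"},
                    "end_s": {"type": "number"},
                    "summary": {"type": "string"},
                },
                "required": ["start_s", "end_s", "summary"],
            },
        }
    },
    "required": ["scenes"],
}


def parse_scenes(raw_json: str) -> list[Scene]:
    """Parse a Pass 1 response into ordered scenes, rejecting bad boundaries."""
    data = json.loads(raw_json)
    scenes = sorted(
        (Scene(float(s["start_s"]), float(s["end_s"]), str(s["summary"]))
         for s in data["scenes"]),
        key=lambda s: s.start_s,
    )
    for s in scenes:
        if s.end_s <= s.start_s:
            raise ValueError(f"empty scene at {s.start_s}s")
    # After sorting, each scene must start no earlier than the previous one ends.
    for prev, cur in zip(scenes, scenes[1:]):
        if cur.start_s < prev.end_s:
            raise ValueError(f"overlapping scenes at {cur.start_s}s")
    return scenes
```

In the google-genai SDK, a schema like `SCENE_SCHEMA` would typically be supplied as the response schema alongside `response_mime_type="application/json"`. The validated scene list then gives Pass 2 its story-arc context.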

Pass 2 — Physics Reasoning (The Micro-Details)

The video is split into 15-second overlapping segments and fed to the model inline at native 24 FPS to leverage Gemini 3 Pro’s High-FPS Video Understanding.
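Splitting the video into overlapping windows is simple interval arithmetic. The write-up says only that the segments overlap, so the 2-second overlap below is a hypothetical value. This sketch covers the full duration with 15-second windows and reports how many frames each window contributes at 24 FPS.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Segment:
    start_s: float
    end_s: float

    def frame_count(self, fps: int = 24) -> int:
        # Number of frames the model sees for this window at the given rate.
        return round((self.end_s - self.start_s) * fps)


def overlapping_segments(duration_s: float, window_s: float = 15.0,
                         overlap_s: float = 2.0) -> list[Segment]:
    """Cover [0, duration_s] with fixed-length windows overlapping by overlap_s.

    overlap_s = 2.0 is an assumed value for illustration.
    """
    if not 0 <= overlap_s < window_s:
        raise ValueError("overlap must be non-negative and shorter than the window")
    step = window_s - overlap_s
    segments = []
    start = 0.0
    while True:
        end = min(start + window_s, duration_s)  # last window is clipped
        segments.append(Segment(start, end))
        if end >= duration_s:
            break
        start += step
    return segments
```

For a 3-minute (180 s) trailer, this produces 14 windows. The last one runs from 169 s to 180 s. At the 280 tokens per frame that Media Resolution HIGH costs, each full window amounts to 360 × 280 = 100,800 video tokens before any prompt or audio tokens.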

Running on Media Resolution HIGH (280 tokens/frame) and Thinking (high), the model performs deep physics reasoning to calculate directional force vectors.
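Pass 2 returns its cues through function calling rather than free text, so each cue can be checked as it arrives. Below is a minimal sketch of how the two tools, `add_motion_event()` and `add_environment_effect()`, might be declared in the JSON-schema shape that Gemini function declarations use, together with a local handler. The parameter names, the [-1, 1] motion range, and `handle_call` are all hypothetical.

```python
from typing import Any

# Hypothetical signatures: the write-up names only the tools and their axes/effects.
ADD_MOTION_EVENT = {
    "name": "add_motion_event",
    "description": "Schedule a seat-motion cue.",
    "parameters": {
        "type": "object",
        "properties": {
            "time_ms": {"type": "integer", "description": "Start time in ms"},
            "duration_ms": {"type": "integer"},
            "pitch": {"type": "number", "description": "-1 (back) to 1 (forward)"},
            "yaw": {"type": "number", "description": "-1 (left) to 1 (right)"},
            "heave": {"type": "number", "description": "-1 (down) to 1 (up)"},
            "reasoning": {"type": "string", "description": "Why this cue fits"},
        },
        "required": ["time_ms", "duration_ms", "pitch", "yaw", "heave", "reasoning"],
    },
}

ENVIRONMENT_TYPES = ["wind", "water", "fog", "strobe", "scent"]

ADD_ENVIRONMENT_EFFECT = {
    "name": "add_environment_effect",
    "description": "Schedule an environmental effect.",
    "parameters": {
        "type": "object",
        "properties": {
            "time_ms": {"type": "integer"},
            "duration_ms": {"type": "integer"},
            "effect": {"type": "string", "enum": ENVIRONMENT_TYPES},
            "intensity": {"type": "number", "description": "0 to 1"},
            "reasoning": {"type": "string"},
        },
        "required": ["time_ms", "duration_ms", "effect", "intensity", "reasoning"],
    },
}

TOOLS = {d["name"]: d for d in (ADD_MOTION_EVENT, ADD_ENVIRONMENT_EFFECT)}


def handle_call(name: str, args: dict[str, Any], timeline: list[dict]) -> None:
    """Validate one model-issued function call and append it to the timeline."""
    decl = TOOLS.get(name)
    if decl is None:
        raise ValueError(f"unknown tool: {name}")
    missing = [k for k in decl["parameters"]["required"] if k not in args]
    if missing:
        raise ValueError(f"{name} missing {missing}")
    if name == "add_motion_event":
        for axis in ("pitch", "yaw", "heave"):
            if not -1.0 <= args[axis] <= 1.0:
                raise ValueError(f"{axis} out of range: {args[axis]}")
    elif args["effect"] not in ENVIRONMENT_TYPES:
        raise ValueError(f"unsupported effect: {args['effect']}")
    timeline.append({"tool": name, **args})
```

Under this design, the two dicts would be registered as function declarations on the Pass 2 request. Each function call the model returns would then go through `handle_call`, so a malformed cue is rejected instead of silently entering the timeline. The `reasoning` field is where an explanation for each event would travel.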

The model emits haptic events via Function Calling, using two custom tools:

add_motion_event() (pitch, yaw, heave) and add_environment_effect() (wind, water, fog, strobe, scent).

Audio-Visual Sync (Post-Processing)

Visuals alone aren't enough to time physical impacts. I integrated Librosa to snap each AI-generated event to the nearest audio transient in the 44.1 kHz soundtrack. It also blends RMS audio energy with Gemini's semantic intensity scores for pixel-and-pitch-perfect synchronization.
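The snap-and-blend step reduces to a nearest-onset search plus a weighted average. This sketch assumes the onset times and a frame-wise RMS envelope were already extracted with Librosa. For example, they could come from `librosa.load(path, sr=44100)`, `librosa.onset.onset_detect(y=y, sr=sr, units="time")`, and the first row of `librosa.feature.rms(y=y, hop_length=512)`, which gives a hop of 512 / 44100 s. The 80 ms snap window and the 0.6 weight on Gemini's score are hypothetical values, not the project's tuned ones.

```python
import bisect


def snap_to_onset(t_s: float, onsets_s: list[float], window_s: float = 0.08) -> float:
    """Move t_s to the nearest audio onset if one lies within window_s seconds."""
    i = bisect.bisect_left(onsets_s, t_s)  # onsets_s must be sorted
    candidates = [onsets_s[j] for j in (i - 1, i) if 0 <= j < len(onsets_s)]
    if not candidates:
        return t_s
    nearest = min(candidates, key=lambda o: abs(o - t_s))
    return nearest if abs(nearest - t_s) <= window_s else t_s


def rms_at(t_s: float, rms: list[float], hop_s: float) -> float:
    """RMS of the frame nearest t_s (centered frames: frame k sits at k * hop_s)."""
    k = min(max(round(t_s / hop_s), 0), len(rms) - 1)
    return rms[k]


def blend_intensity(semantic: float, rms_value: float, rms_peak: float,
                    alpha: float = 0.6) -> float:
    """Weighted mix of the model's 0-1 intensity and peak-normalized RMS energy."""
    audio = rms_value / rms_peak if rms_peak > 0 else 0.0
    return alpha * semantic + (1.0 - alpha) * audio


def sync_event(event: dict, onsets_s: list[float], rms: list[float],
               hop_s: float) -> dict:
    """Return a copy of event with snapped timing and audio-blended intensity."""
    t = snap_to_onset(event["time_ms"] / 1000.0, onsets_s)
    intensity = blend_intensity(event["intensity"], rms_at(t, rms, hop_s), max(rms))
    return {**event, "time_ms": round(t * 1000), "intensity": round(intensity, 3)}
```

An event with no onset inside the window keeps its original timestamp. The model's placement is therefore trusted whenever the soundtrack offers no nearby transient.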

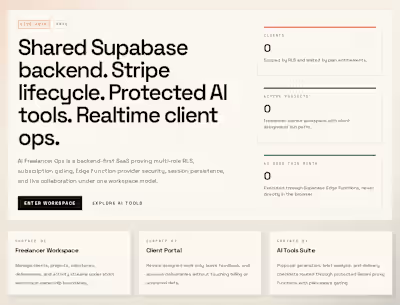

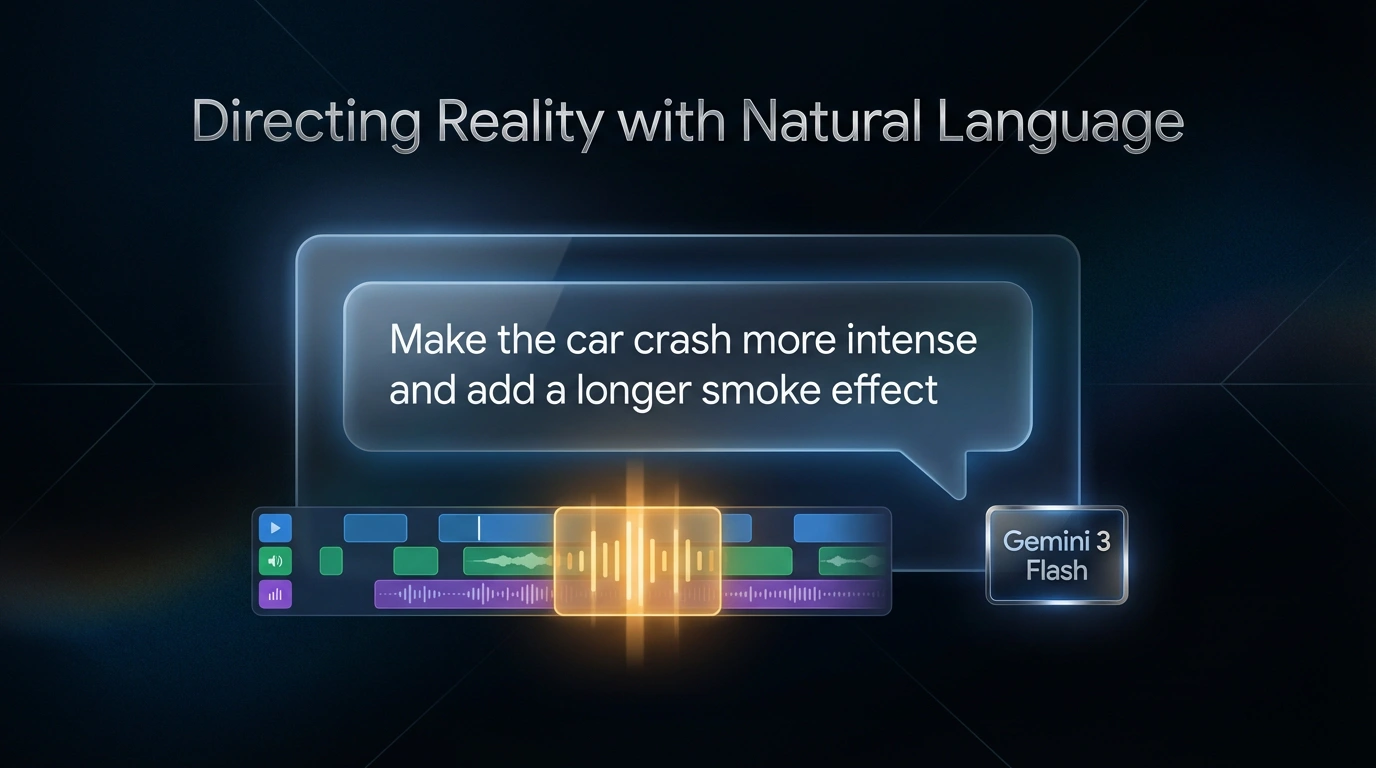

🛠️ The AI Editor: Directing Reality with Natural Language

To keep human creators in the driver's seat, every AI-generated haptic event includes an explainable reasoning field.

Furthermore, I built a natural-language timeline editor powered by Gemini 3 Flash. If a creator wants to tweak a scene, they don't have to touch code. They can simply type, "Make the explosions in the second scene more intense and add a longer smoke effect," and the AI instantly refines the generated haptic track conversationally.
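One way to keep conversational edits predictable is to have the editor model translate a request into a structured edit, which ordinary code then applies to the track. The sketch below assumes a hypothetical edit format: a scene index, a target effect, an intensity scale, and a duration change. Gemini 3 Flash would be asked to emit edits in this format. It is an illustration, not the project's actual editor protocol.

```python
from dataclasses import dataclass, replace


@dataclass(frozen=True)
class Event:
    scene: int        # Pass 1 scene index (0-based)
    effect: str       # "motion", "wind", "water", "fog", "strobe", "scent"
    time_ms: int
    duration_ms: int
    intensity: float  # 0 to 1
    reasoning: str    # model's explanation, shown to the creator


@dataclass(frozen=True)
class Edit:
    """Hypothetical structured edit the editor model would be asked to emit."""
    scene: int
    effect: str
    intensity_scale: float = 1.0
    extra_duration_ms: int = 0


def apply_edits(track: list[Event], edits: list[Edit]) -> list[Event]:
    """Return a new track; events matching an edit's scene and effect are updated."""
    out = []
    for ev in track:
        updated = ev
        for ed in edits:
            if updated.scene == ed.scene and updated.effect == ed.effect:
                updated = replace(
                    updated,
                    intensity=min(1.0, updated.intensity * ed.intensity_scale),
                    duration_ms=max(0, updated.duration_ms + ed.extra_duration_ms),
                    reasoning=updated.reasoning + " [edited]",
                )
        out.append(updated)
    return out
```

For the request quoted above, the model might return `Edit(1, "motion", intensity_scale=1.5)` and `Edit(1, "fog", extra_duration_ms=2000)`, with scene index 1 standing for "the second scene" and fog standing in for smoke. Because `apply_edits` never mutates the original track, an edit can be undone simply by keeping the previous version.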

🚀 The Business Impact

HapticAI shows that complex physical authoring can be automated without sacrificing quality. By shifting the workload to AI, the platform delivers substantial returns for content studios:

Massive Time Savings: Reduced a tedious manual coding process from 2–3 weeks down to mere minutes.

Drastic Cost Reduction: Processing a high-action 3-minute movie trailer now costs approximately $5 in AI compute.

Scalability: The pipeline is optimized for action-dense content and scales out through parallel processing of video segments.
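Each Pass 2 window is analyzed independently, so the windows can be dispatched concurrently. The one extra step this requires is removing cues that two overlapping windows both report. Below is a minimal sketch using a thread pool, a reasonable fit because the work is dominated by waiting on API calls. `analyze_segment` is a hypothetical stand-in for the Gemini request, and the 100 ms duplicate tolerance is an assumed value.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import Callable

Event = dict  # e.g. {"time_ms": int, "effect": str, ...}


def dedupe(events: list[Event], tolerance_ms: int = 100) -> list[Event]:
    """Drop an event if the same effect was already kept within tolerance_ms.

    Expects events sorted by time. Genuinely distinct cues closer than the
    tolerance are also merged; this is a simplification for the sketch.
    """
    kept: list[Event] = []
    last_seen: dict[str, int] = {}
    for e in events:
        prev = last_seen.get(e["effect"])
        if prev is not None and e["time_ms"] - prev <= tolerance_ms:
            continue
        kept.append(e)
        last_seen[e["effect"]] = e["time_ms"]
    return kept


def run_parallel(segments: list[tuple[float, float]],
                 analyze_segment: Callable[[float, float], list[Event]],
                 max_workers: int = 8) -> list[Event]:
    """Analyze windows concurrently, then merge and de-duplicate their events."""
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        # map() preserves input order, so results line up with segments.
        results = list(pool.map(lambda seg: analyze_segment(*seg), segments))
    events = sorted((e for batch in results for e in batch),
                    key=lambda e: e["time_ms"])
    return dedupe(events)
```

Throughput then depends mainly on how many concurrent requests the API quota allows, which `max_workers` caps.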

Posted Mar 14, 2026

Built a full-stack AI system that transforms standard 2D video into 4DX-ready haptic experiences using a two-pass Gemini 3 Pro architecture.

Timeline: Jan 28, 2026 to Feb 10, 2026