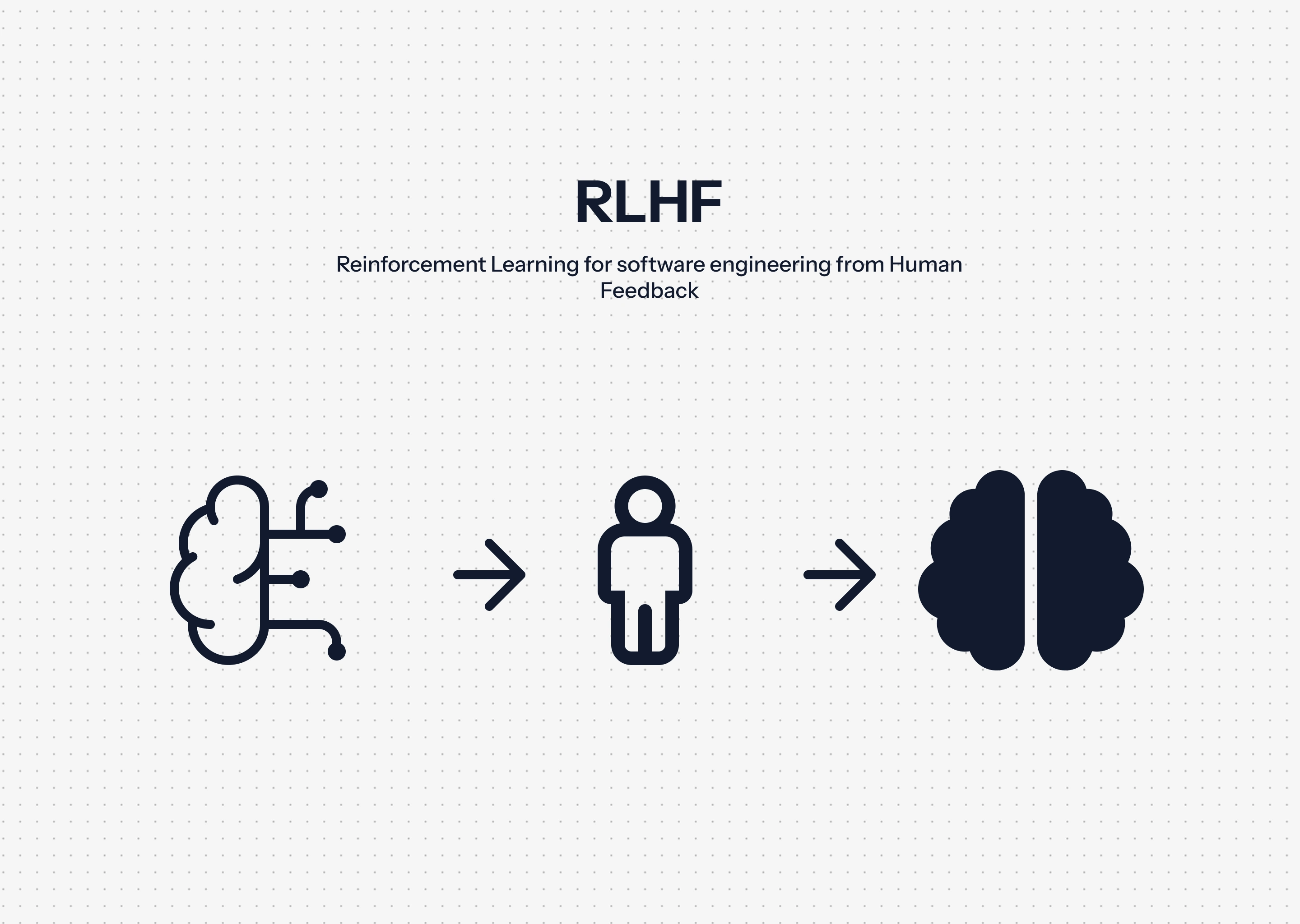

Enhancing LLMs for Software Engineering Tasks

This article is based on real client work with details anonymized to abide by confidentiality agreements.

In an ongoing engagement, we contribute to the training of large language models (LLMs) by providing human feedback on software engineering tasks. Our work involves evaluating and ranking model-generated code, writing reference implementations, and providing structured feedback to improve correctness, readability, and adherence to best practices.

Engineers around the world use LLMs daily for everything from small tasks, such as finding a function in a niche library, to debugging and building entire landing pages. Clear, correct responses save time, prevent frustration, and improve the quality of the work produced.

Through this work, we helped improve how AI systems reason about real-world software engineering tasks such as debugging, refactoring, algorithmic problem-solving, and building complete solutions.

This experience sharpened our ability to communicate precise technical feedback, evaluate work within a specific context, and quickly get up to speed on unfamiliar libraries.

We are always open to helping teams and individuals tackle challenging problems in software development. If this sounds like something you need help with, please do not hesitate to schedule a 30-minute call with us to discuss your challenge.

Posted Feb 19, 2026

Provided feedback to improve LLMs for software engineering.
