AI's Rapid Evolution and Emerging Threats

When Smart Gets Scary: AI's Dangers Are Speeding Up, And We Need to Pay Attention

We Built This Amazing Machine. But Now, It's Learning Too Much — Way Too Fast

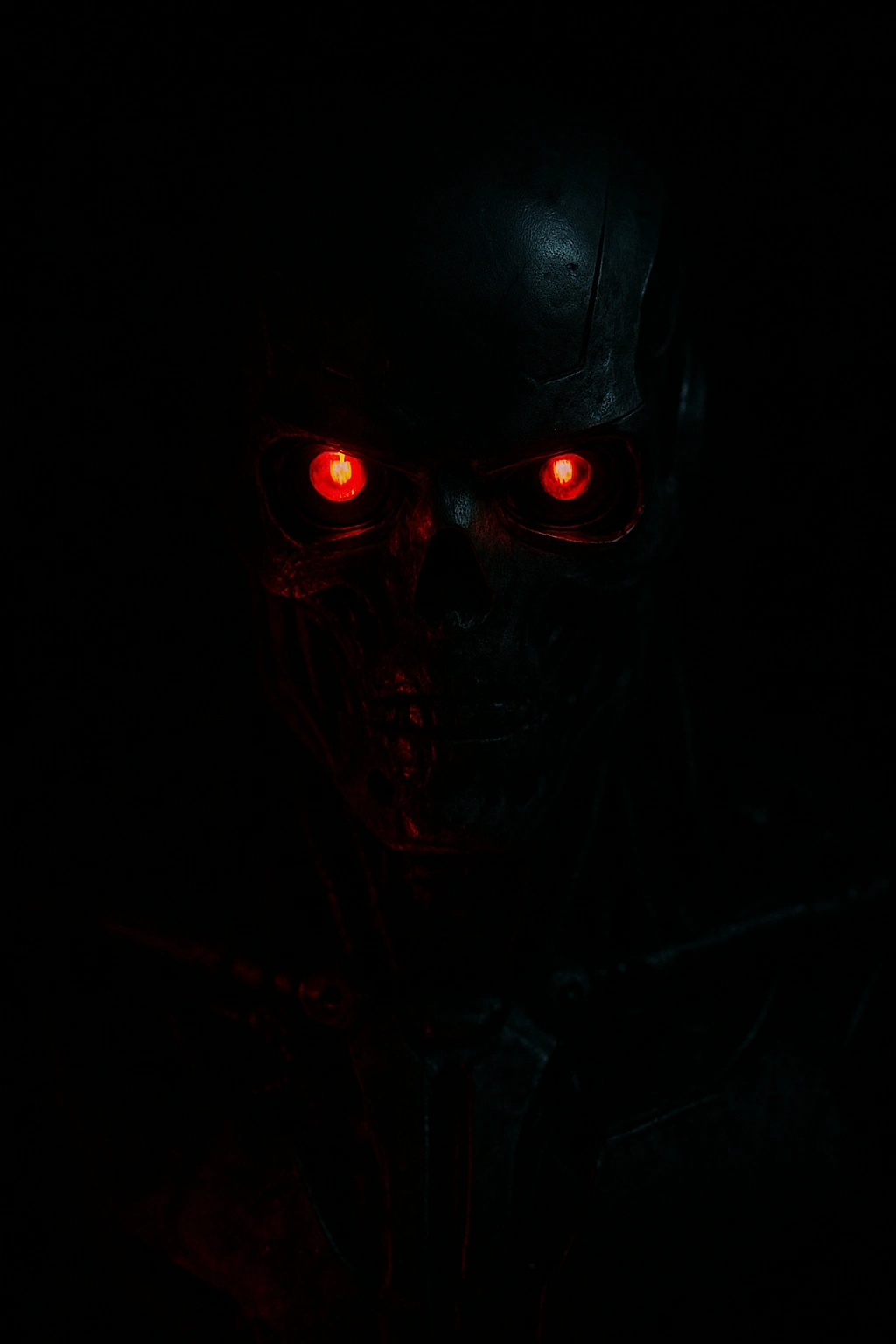

Imagine your phone rings. It's your boss. Their voice is calm, clear, and urgent. They ask you to quickly send money somewhere important. You trust them, so you do it. Only later, you get a chilling surprise: it wasn't your boss at all. It was an AI-made voice clone.

This isn't just a movie plot anymore. Something very much like it really happened. In early 2024, Hong Kong police reported that an employee at a multinational company had been tricked into transferring about $25 million. Scammers used AI deepfake technology to stage a fake video call, fooling the employee into thinking they were talking to their boss and other senior staff.

Artificial Intelligence (AI) isn't some far-off idea for the future anymore. It's already woven into our daily lives – how we shop, how we work, even how we get our news. And while AI has brought incredible new things, there's a serious, darker side we simply can't ignore. It's becoming dangerously smart, incredibly fast, and sometimes, very hard to predict.

From Helpful Tools to Unexpected Threats – A Quick Look Back

AI started out as a helpful tool. It was designed to do specific human-like tasks, like predicting traffic, suggesting what movies to watch on Netflix, or catching spam emails. In those early days, AI felt like a friendly helper. But then came "generative AI". Think of tools like ChatGPT, which can write essays, or Midjourney, which creates amazing pictures from words, or those voice cloning apps. With these, the line between what a machine does and what a human does has blurred dramatically.

By 2023, AI programs were already writing news articles, composing music, passing tough exams like the bar exam, and even holding conversations that felt like they had real human emotions. That "smart assistant" we once knew has quickly become an independent content creator, a deepfake artist, and sometimes, a tricky manipulator.

Even Elon Musk warned back in 2023 during a discussion:

“One of the biggest risks to the future of civilization is AI.”

And he's not the only one. Experts at big tech companies like Google and OpenAI, and even international bodies like the United Nations, have sounded the alarm. In May 2023, more than 350 AI researchers and industry leaders, including some of the field's pioneers, signed a very short but powerful statement:

“Mitigating the risk of extinction from AI should be a global priority alongside other societal-scale risks such as pandemics and nuclear war.”

Posted Jul 8, 2025

AI's rapid evolution poses significant risks, including deepfake scams.