
Henry Ollarves

AI Agent Systems & Platform Infrastructure

- $1k+ earned
- Hired 7x
- 5.00 rating
- 273 followers

This job looks like a banger

Hi, we're looking for an AI artist to come join our team!

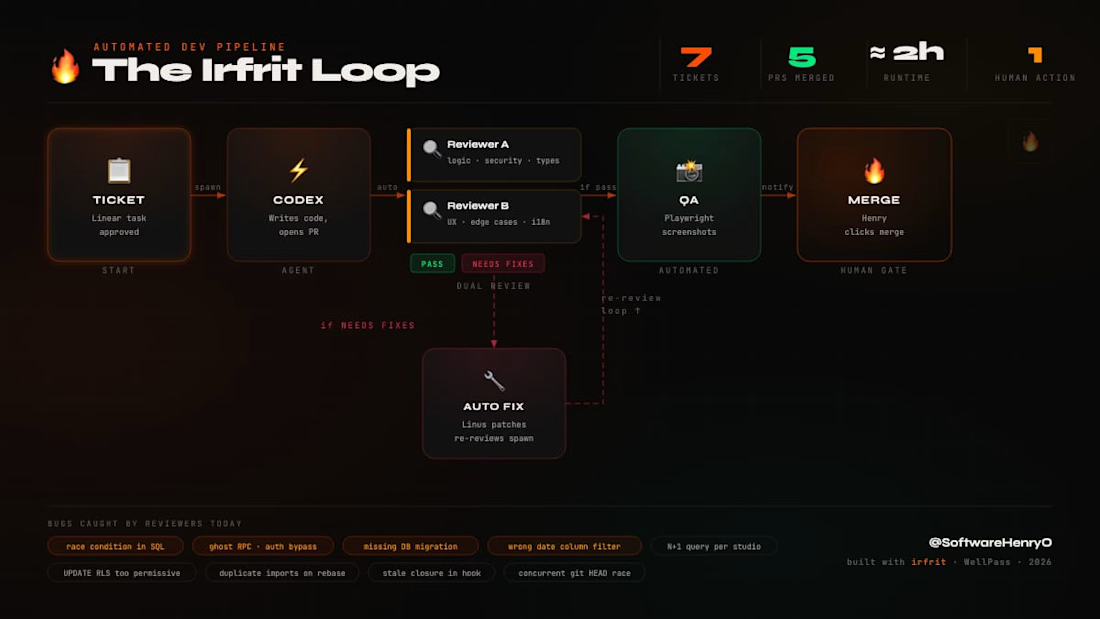

I built an AI dev pipeline and ran it live on my side project.

The Irfrit Loop: ticket comes in → AI writes the code → two independent AI reviewers tear it apart → auto-fix → re-review → QA screenshots → I click merge.

Today's run: 7 tickets, 5 PRs merged in ~2 hours. My only job...
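To make the loop's control flow concrete, here is a minimal sketch of the write → review → fix → re-review cycle. It is not the actual Irfrit Loop implementation. Every name in it (`run_loop`, `LoopResult`, the stub agents, `max_rounds`) is hypothetical, and plain Python callables stand in for the AI writer, the two reviewers and the auto-fixer. QA screenshots and the final human merge are left to the caller.

```python
from dataclasses import dataclass, field
from typing import Callable, List

# Hypothetical agent signatures: real agents would wrap model calls.
Writer = Callable[[str], str]                # ticket -> code
Reviewer = Callable[[str], List[str]]        # code -> issues (empty list = approve)
Fixer = Callable[[str, List[str]], str]      # code + issues -> revised code


@dataclass
class LoopResult:
    code: str
    rounds: int
    approved: bool
    history: List[List[str]] = field(default_factory=list)


def run_loop(ticket: str, write: Writer, reviewers: List[Reviewer],
             fix: Fixer, max_rounds: int = 3) -> LoopResult:
    """Write code for a ticket, then review and fix until every reviewer
    approves or the round budget runs out. Merging stays with a human."""
    code = write(ticket)
    history: List[List[str]] = []
    for round_no in range(1, max_rounds + 1):
        # Each reviewer sees the same code independently; issues are pooled.
        issues = [issue for review in reviewers for issue in review(code)]
        history.append(issues)
        if not issues:
            return LoopResult(code, round_no, True, history)
        # Only fix if another review round will check the result.
        if round_no < max_rounds:
            code = fix(code, issues)
    return LoopResult(code, max_rounds, False, history)


def demo() -> LoopResult:
    # Stub agents (hypothetical) standing in for the AI writer and reviewers.
    write = lambda t: f"def handler():  # TODO: {t}\n    pass\n"
    reviewer_a = lambda c: ["unresolved TODO"] if "TODO" in c else []
    reviewer_b = lambda c: ["empty body"] if "pass" in c else []
    fixer = lambda c, issues: c.replace("TODO", "DONE").replace("pass", "return 'ok'")
    return run_loop("add login", write, [reviewer_a, reviewer_b], fixer)


if __name__ == "__main__":
    result = demo()
    print(result.approved, result.rounds, result.history)
```

Capping the number of review rounds keeps a disagreement between the writer and the reviewers from looping forever; a ticket that never gets approved is returned unapproved for a human to look at instead of being merged.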

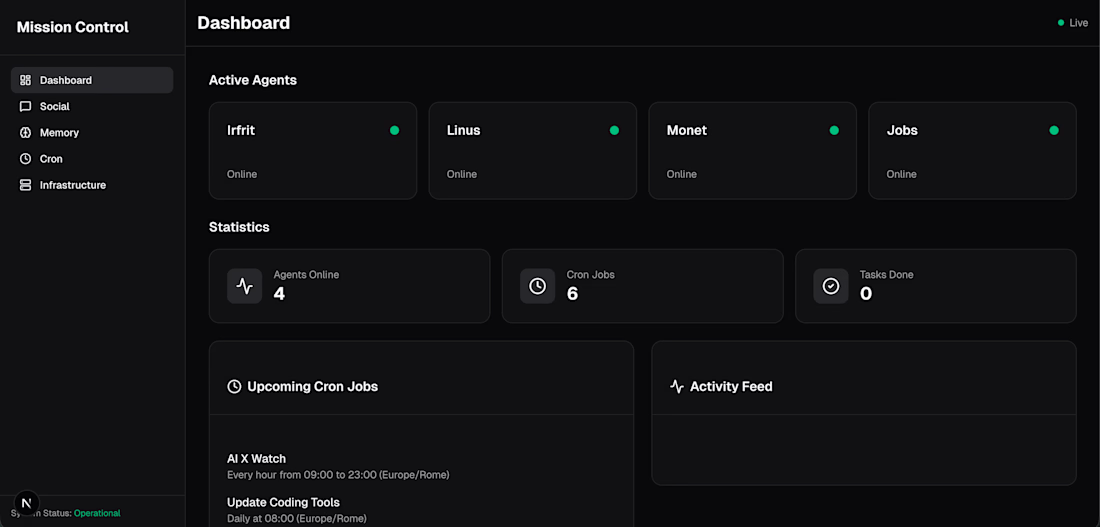

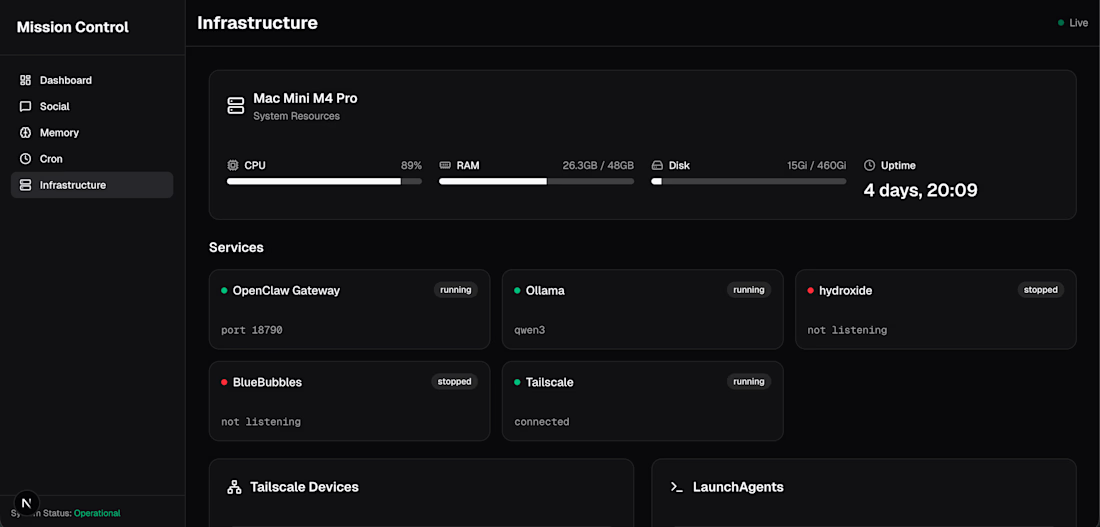

Built a personal mission control for my AI agent team 🔥

4 agents running 24/7 on a Mac Mini. CRON jobs, memory browser, infrastructure monitoring, the whole thing.

Designed in v0, built with Codex in under 30 minutes.

We are so early.

262k tokens. 5 novels of context. But here is what nobody is asking...

DeepSeek recently dropped a coding assistant with a 262k token context window. Impressive? Sure. Useful? That depends. More context does not mean better code. It means more room for the AI to get lost in...