The network for creativity

Join 1.25M professional creatives like you

Connect with clients, get discovered, and run your business 100% commission-free

Creatives on Contra have earned over $150M and we are just getting started

🟩 DAILY SIGNAL // MAY 13 2026

🫡📶📡

AI doesn’t have a safety problem — it has a governance lag. And lag always turns into risk. ⚠️🧩

I’ve watched this unfold across multiple production lanes — different stacks, same fracture pattern. The model evolves faster than the rules. The system isn’t “unsafe.” The oversight is. 🧠🔍

When no one owns model behavior, integration drift, output validation, or intervention authority, a vacuum forms. And in cybersecurity, vacuums don’t stay empty. They fill with misconfigurations 🧨, silent failures 🫥, shadow integrations 🕳️, and unclaimed incidents 🚨.
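One way to make that vacuum visible is to write the four areas down and check which ones have no named owner. The sketch below is a minimal illustration, not a description of any real tool: `OwnershipRegistry`, the area identifiers, and the team names are all hypothetical.

```python
from dataclasses import dataclass, field

# The four areas named in the post; the identifiers are illustrative.
GOVERNANCE_AREAS = (
    "model_behavior",
    "integration_drift",
    "output_validation",
    "intervention_authority",
)


@dataclass
class OwnershipRegistry:
    """Maps each governance area to a named owner (hypothetical structure)."""

    owners: dict[str, str] = field(default_factory=dict)

    def assign(self, area: str, owner: str) -> None:
        # Reject areas outside the agreed list so typos do not hide gaps.
        if area not in GOVERNANCE_AREAS:
            raise ValueError(f"unknown governance area: {area}")
        self.owners[area] = owner

    def unowned(self) -> list[str]:
        """Return the areas with no owner, in the order listed above."""
        return [a for a in GOVERNANCE_AREAS if not self.owners.get(a)]


# Example: two areas are claimed, two are left as a vacuum.
registry = OwnershipRegistry()
registry.assign("model_behavior", "ml-platform-team")  # hypothetical team
registry.assign("output_validation", "qa-team")        # hypothetical team
gaps = registry.unowned()
print(gaps)  # ['integration_drift', 'intervention_authority']
```

A check like this could run before each release, so an unowned area blocks the deployment instead of surfacing later as an unclaimed incident.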

This is the layer between strategy and execution — the one most teams pretend doesn’t exist. I didn’t learn this from a whitepaper. I learned it from a deployment that almost failed because the model behaved correctly… but the organization didn’t. ⚡🧵

AI is a self‑driving system. The danger isn’t the model making a mistake — it’s when everyone assumes someone else is steering. 🛞🤖

Safety isn’t a checklist. It’s a chain of custody. Break the chain, and even a perfect model becomes unpredictable. 🔗⚠️

Drop your biggest governance challenge — I’ll respond to every one.

🫡📶📡

Related posts

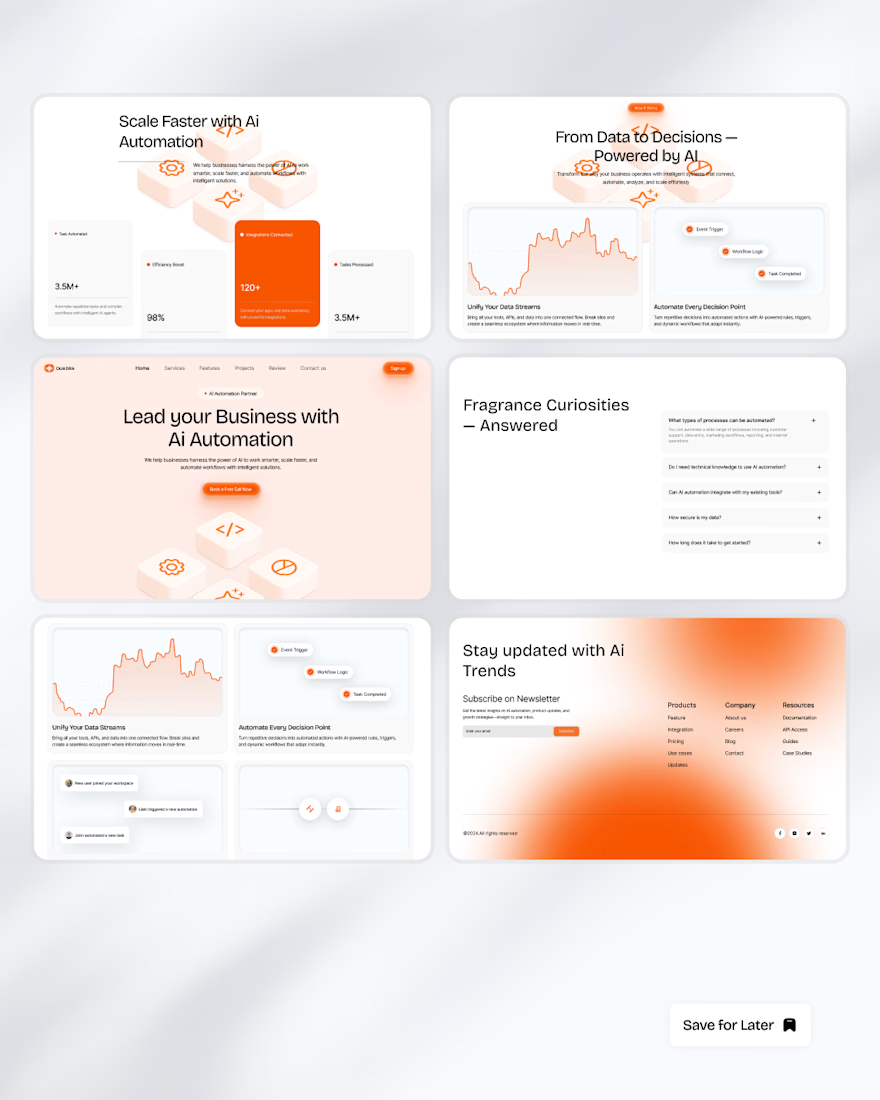

Designed a modern AI automation SaaS website focused on simplifying complex workflows through clean UI, structured layouts, and conversion-focused product design.

Built with scalability, usability, and modern SaaS aesthetics in mind.

Exceptional attention to detail. The professionalism and strategic thinking behind this project are immediately obvious.

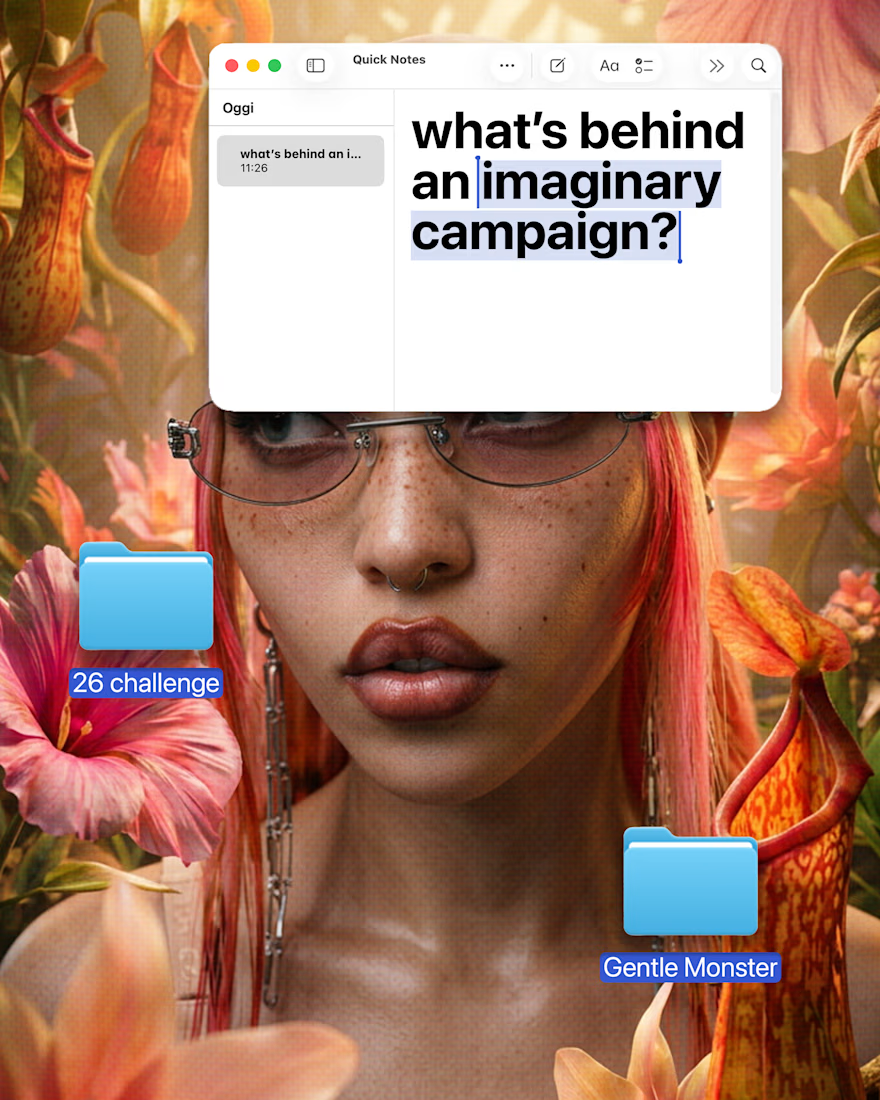

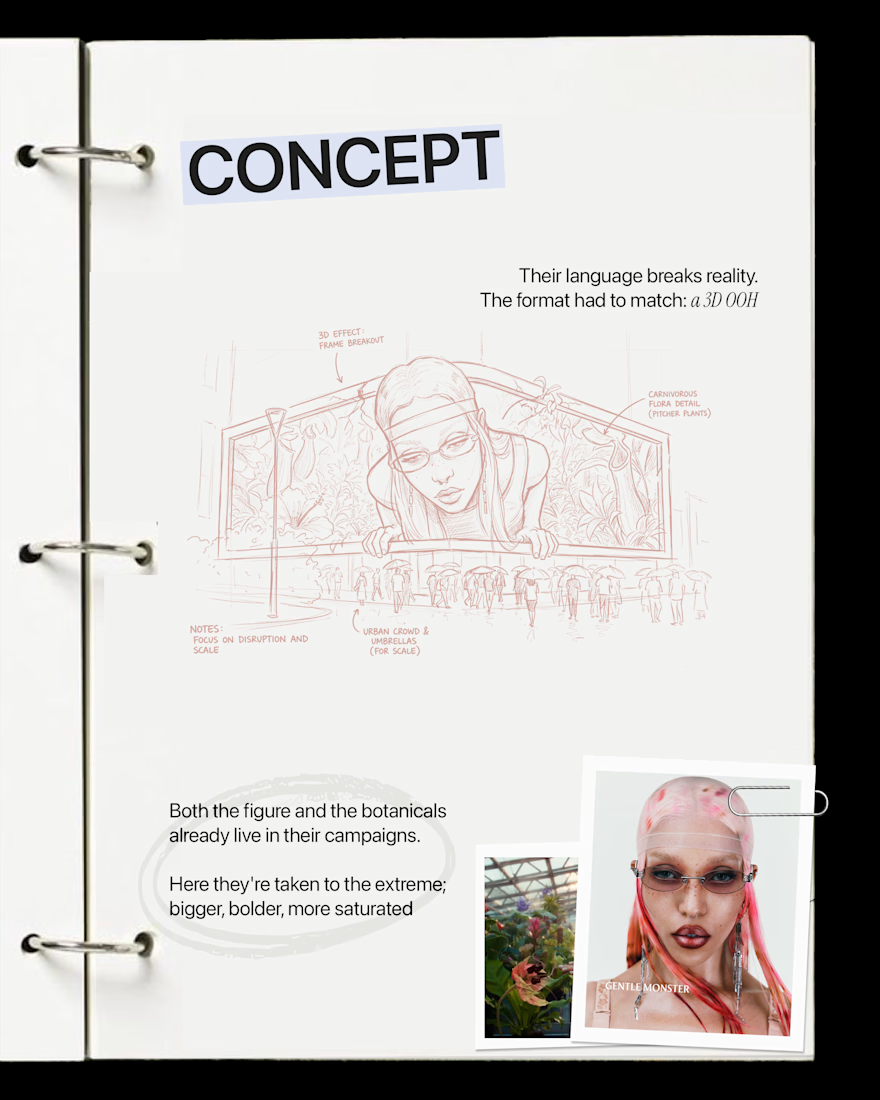

I designed an imaginary 3D OOH campaign for Gentle Monster. Here's what's behind it.

G is the first letter of my 26Lab — one imaginary campaign for each letter of the alphabet.

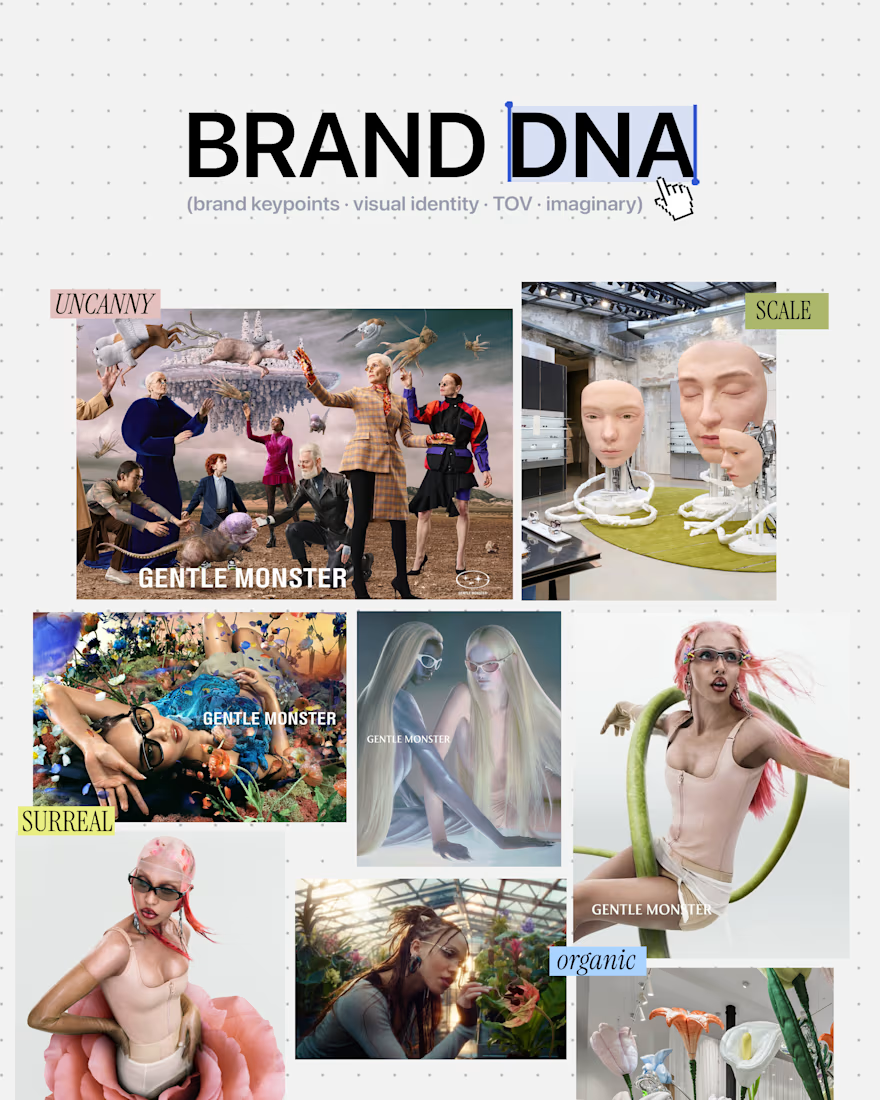

Gentle Monster was an easy choice. A brand that already lives outside reality, where surrealism isn't a style but a baseline. Character and botanical elements are already part of their visual language — I just took both to a different scale.

For the visualization I used Flora: its node-based system was key to keeping the character and the environment consistent across iterations — dozens of them — without losing direction.

AI handled the execution. The concept, the direction, the choices — that part was mine.

#artdirection #3DOOH #conceptdesign
