Umidjon Z

Full Stack Developer | Next.js | FastAPI | AI Integration


An internal platform that turns documents and internal knowledge into a fully searchable AI chat assistant, built on a production-grade RAG stack.

What has been implemented:

📥 Document ingestion: PDFs, DOCX files, spreadsheets, and scanned images are automatically extracted, cleaned, chunked, and indexed into your knowledge base.

🔍 OCR extraction: Scanned documents and image-based PDFs become fully searchable with no text layer required.

⚡ Hybrid search: BM25 keyword search and semantic vector search run together, combining exact-term matching with meaning-based retrieval for higher recall.

🎯 Reranking: Retrieved results are reranked before they reach the LLM, delivering sharper and more relevant answers.

🗄️ Vector DB (supported): Elasticsearch, pgvector, Qdrant, Weaviate, Pinecone.

🏛️ Relational DB (supported): PostgreSQL, SQLite, MySQL.

💬 Chat webapp: A polished, self-hosted frontend connected to any LLM: OpenAI, Anthropic, Ollama, or your own model.

🛠️ Admin dashboard: Manage users, roles, knowledge bases, retrieval settings, and model configurations from one place.

📊 Observability: Track per-user token usage, response latency, and cost.

🐳 Fully Dockerized: Clean, reproducible, and portable deployments on any Linux server or VPS.
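
To illustrate the hybrid search item above, here is a minimal sketch of one common way to merge BM25 and vector results: reciprocal rank fusion (RRF). The description does not say which fusion method the platform uses, so RRF, the function name `reciprocal_rank_fusion`, the constant `k=60`, and the sample document IDs are all assumptions made for illustration.

```python
from collections import defaultdict


def reciprocal_rank_fusion(rankings, k=60):
    """Fuse several ranked lists of document IDs into one ranking.

    Each document scores sum(1 / (k + rank)) over the lists it appears in,
    with rank starting at 1. k=60 is a commonly used default (assumed here).
    """
    scores = defaultdict(float)
    for ranking in rankings:
        for rank, doc_id in enumerate(ranking, start=1):
            scores[doc_id] += 1.0 / (k + rank)
    # Highest fused score first; ties broken by document ID for determinism.
    return sorted(scores, key=lambda d: (-scores[d], d))


# Hypothetical result lists from the two retrievers.
bm25_hits = ["doc_a", "doc_b", "doc_c"]
vector_hits = ["doc_c", "doc_a", "doc_d"]

fused = reciprocal_rank_fusion([bm25_hits, vector_hits])
print(fused)  # ['doc_a', 'doc_c', 'doc_b', 'doc_d']
```

Documents found by both retrievers rise to the top, while documents found by only one still appear in the fused list, so a reranker can make the final call on them.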


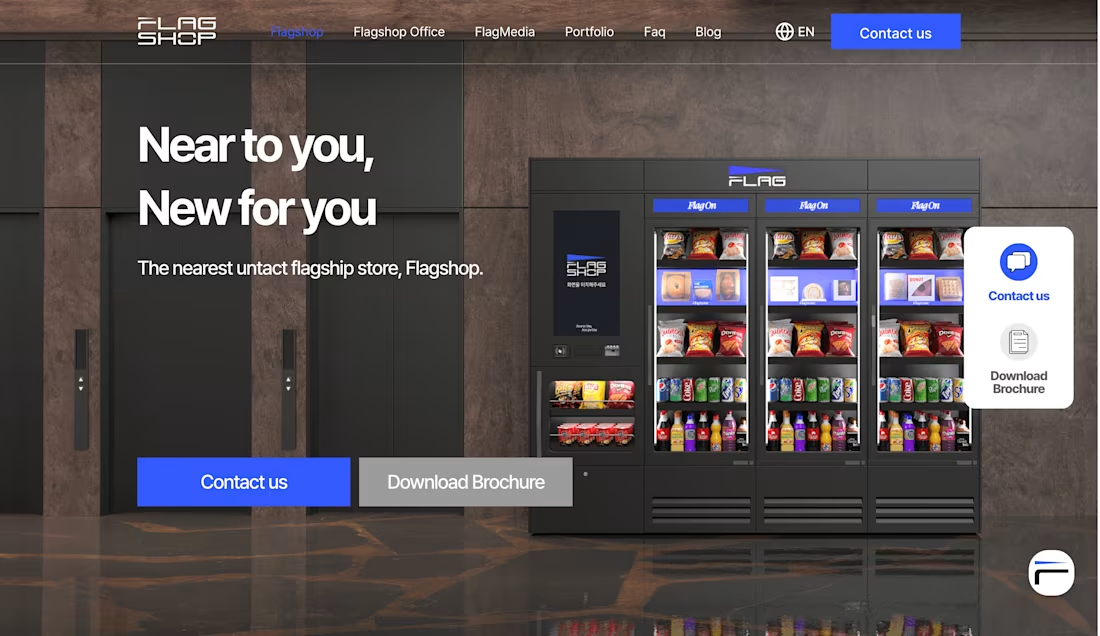

The website for "FLAGSHOP", a Korean AI-powered smart vending machine management platform. Flagshop helps businesses maximize revenue by automating unmanned retail operations through intelligent inventory management and predictive analytics.


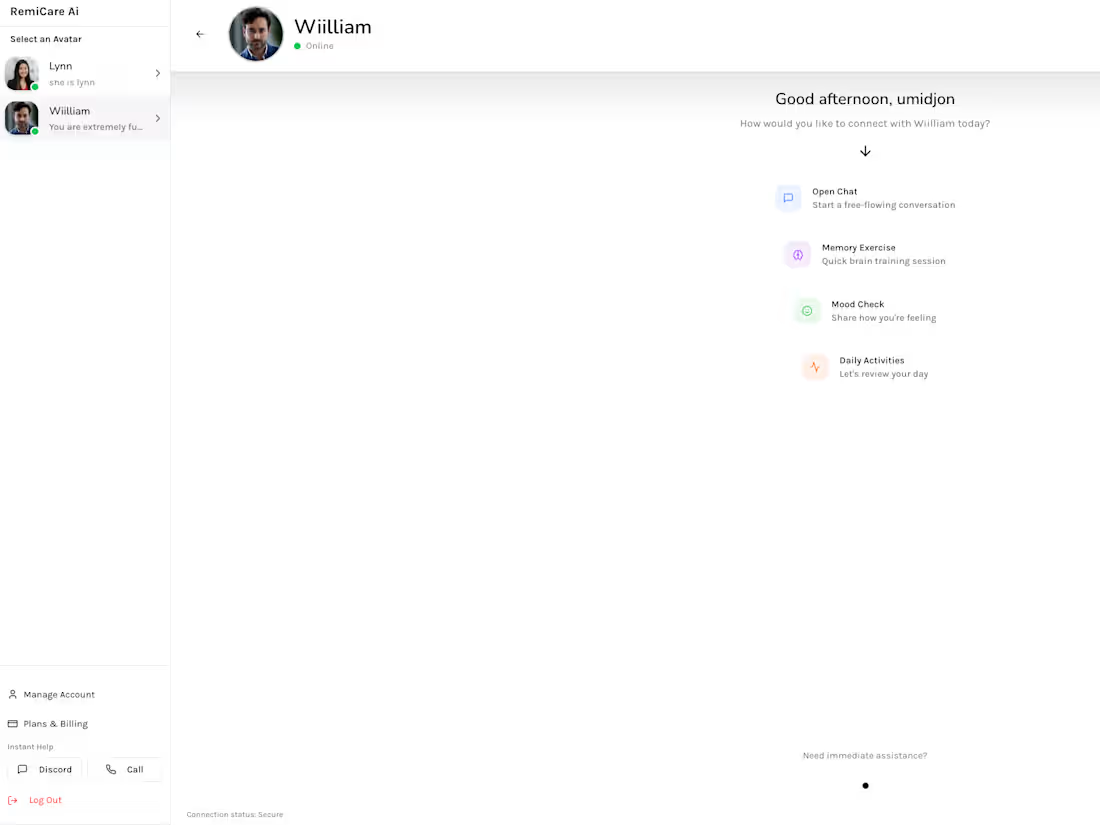

Full-stack AI companion platform built end to end: from real-time voice conversations to lip-synced video avatars.

Real-time conversational AI with sub-second latency using WebSockets

Custom voice cloning per companion profile

AI video avatar generation with lip sync from a single photo upload

Stripe subscription and payment integration

Admin dashboard for live call monitoring and profile management

Conversion-optimized marketing homepage

Full production deployment included

Stack: Next.js · FastAPI · WebSockets · Stripe API · Voice AI · Video Synthesis


This is a self-hosted enterprise RAG platform that turns internal documents into scoped AI assistants. Each assistant is grounded strictly in its own knowledge base, never the open internet.

Capabilities:

· multi-domain knowledge bases

· PDF ingestion with table & image parsing

· per-model LLM & prompt config

· inline source citations

· relevance scoring

· out-of-scope query rejection

· multi-query retrieval

· role-based access control

· pluggable LLM backend

· fully self-hosted, data stays on your infrastructure.
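
The relevance scoring, out-of-scope rejection, and inline citation items above can be sketched together as follows. This is a simplified illustration, not the platform's actual logic: the `Chunk` type, the `min_score=0.5` threshold, the refusal wording, and the sample file names are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Chunk:
    source: str   # document the chunk came from
    text: str
    score: float  # relevance score in [0, 1]; higher means more relevant


REFUSAL = "This question is outside the scope of this assistant's knowledge base."


def select_context(chunks, min_score=0.5, top_k=3):
    """Return up to top_k chunks whose score clears the threshold, best first."""
    relevant = [c for c in chunks if c.score >= min_score]
    return sorted(relevant, key=lambda c: c.score, reverse=True)[:top_k]


def answer_or_reject(question, chunks, min_score=0.5):
    """Reject the query if no chunk is relevant enough, else list cited sources."""
    context = select_context(chunks, min_score=min_score)
    if not context:
        return REFUSAL
    citations = ", ".join(f"[{i}] {c.source}" for i, c in enumerate(context, 1))
    return f"Context for '{question}' drawn from: {citations}"


# Hypothetical retrieval results for two queries.
in_scope = [Chunk("handbook.pdf", "...", 0.82), Chunk("policy.docx", "...", 0.41)]
off_topic = [Chunk("handbook.pdf", "...", 0.12)]

print(answer_or_reject("What is the leave policy?", in_scope))
print(answer_or_reject("Who won the match last night?", off_topic))
```

In a real deployment the selected chunks would be passed to the LLM along with the question; here the function only reports which sources would be cited, which keeps the rejection path easy to see.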
