Uğurcan Uzunkaya

AI and Software Engineer who builds end-to-end products

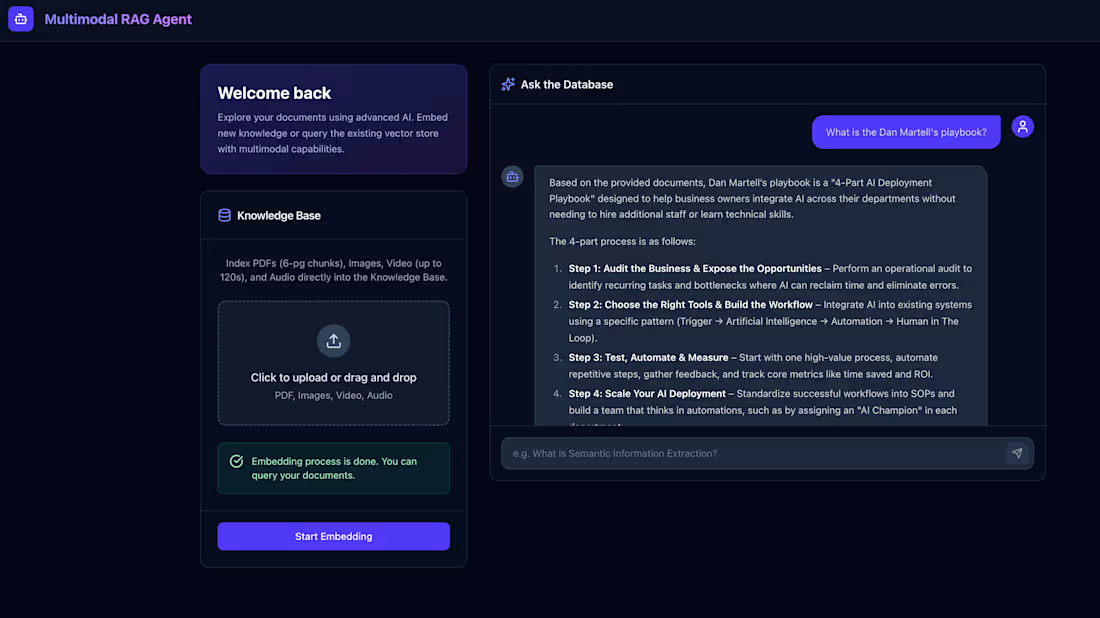

What started as a terminal script became a full-stack, Dockerized, multimodal AI application. Built live on stream.

The final architecture: FastAPI backend, React 19 frontend, Weaviate vector DB, all orchestrated with a single "docker-compose up --build".

The real upgrades:

True multimodal. PDF, images, video, audio, all indexed and queryable through one interface.

Async everywhere. Background tasks handle heavy embedding. The UI stays responsive. No blocking.

Production-grade frontend. Glassmorphic React dashboard with real-time polling and markdown-rendered AI responses.

One-click deployment. Docker handles networking, volume mapping, and environment injection across all three services.

From a "while True" loop in a terminal to a cloud-ready, TDD-compliant full-stack product. Three iterations.

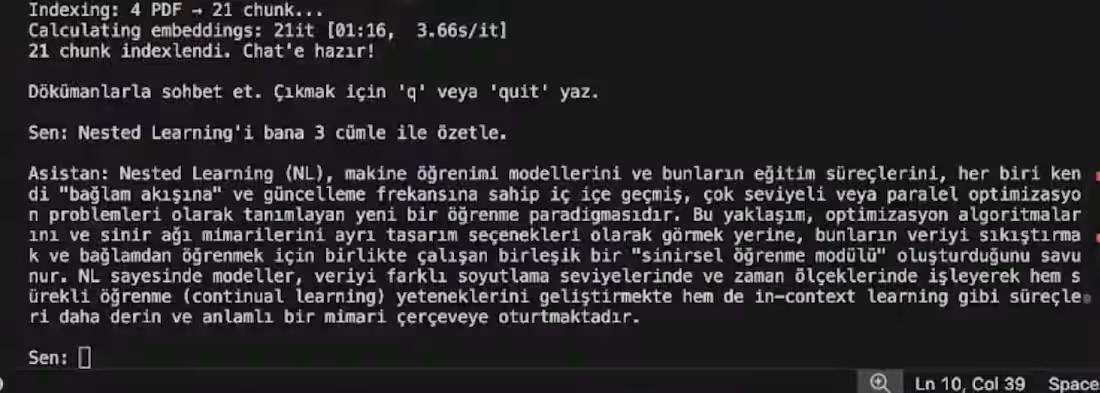

Same RAG system. This time we migrated it to a production-ready FastAPI backend. Live on stream again.

Three upgrades that actually matter:

In-memory storage is dead. Replaced with Weaviate running in Docker. Embeddings now persist across restarts.

No more blocking. The /api/embed endpoint offloads processing to a background task. The API stays responsive while heavy lifting happens in the background.

Idempotency built-in. Already embedded a PDF? The server detects it and skips re-processing. No duplicate vectors, no wasted Gemini API calls.
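
The post doesn't say how the server recognizes a PDF it has already embedded. One common approach is to key each upload by a hash of its bytes. A minimal sketch under that assumption; `EmbeddingRegistry`, `embed_once`, and the in-memory set are hypothetical names, not the project's code:

```python
import hashlib


class EmbeddingRegistry:
    """Tracks which documents were already embedded, keyed by content hash."""

    def __init__(self):
        # Hypothetical in-memory set; a real service would check the vector DB.
        self._seen = set()

    @staticmethod
    def fingerprint(data: bytes) -> str:
        # SHA-256 of the raw bytes: the same file always yields the same key.
        return hashlib.sha256(data).hexdigest()

    def embed_once(self, data: bytes, embed_fn) -> bool:
        """Run embed_fn only for unseen content. Returns True if it ran."""
        key = self.fingerprint(data)
        if key in self._seen:
            return False  # duplicate upload: skip the embedding call
        embed_fn(data)
        self._seen.add(key)
        return True


calls = []  # records every time the (fake) embedding function runs
registry = EmbeddingRegistry()
first = registry.embed_once(b"%PDF-1.7 sample", calls.append)
second = registry.embed_once(b"%PDF-1.7 sample", calls.append)
print(first, second, len(calls))  # True False 1
```

Hashing the content rather than the filename means a renamed copy of the same PDF is still caught.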

Added concurrency guards, pytest coverage, and ruff for linting. The kind of engineering decisions that don't show on the surface but matter at scale.
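
The background offload and a concurrency guard fit together roughly like this. The sketch uses a standard-library thread pool as a stand-in for the FastAPI background task, and `handle_embed_request`, `slow_embed`, and the `in_progress` set are illustrative names, not the project's code:

```python
import threading
import time
from concurrent.futures import ThreadPoolExecutor

executor = ThreadPoolExecutor(max_workers=2)
in_progress = set()       # document ids currently being embedded
lock = threading.Lock()   # protects in_progress
processed = []            # stands in for vectors written to the DB


def slow_embed(doc_id: str) -> None:
    try:
        time.sleep(0.1)   # placeholder for the heavy embedding work
        processed.append(doc_id)
    finally:
        with lock:
            in_progress.discard(doc_id)  # release the guard even on failure


def handle_embed_request(doc_id: str) -> dict:
    """Returns immediately; the heavy work runs on the executor."""
    with lock:
        if doc_id in in_progress:
            return {"status": "already_running"}
        in_progress.add(doc_id)
    executor.submit(slow_embed, doc_id)
    return {"status": "accepted"}


r1 = handle_embed_request("paper.pdf")
r2 = handle_embed_request("paper.pdf")  # arrives while the first is running
executor.shutdown(wait=True)            # only so this demo can inspect results
print(r1, r2, processed)
```

The guard is checked and set under one lock, so two simultaneous requests for the same document can't both start the embedding job.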

From a CLI prototype to a structured, testable, cloud-ready backend. All on camera.

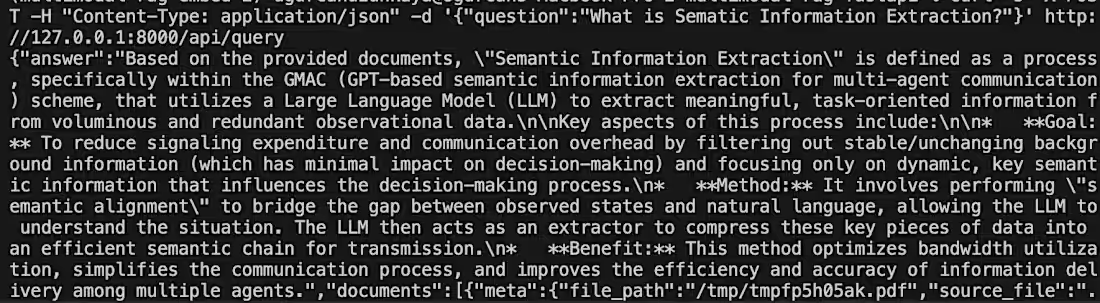

Built a Multimodal RAG system live on stream. From zero to working CLI in one session.

The goal: a terminal-based RAG pipeline using Haystack, Gemini Multimodal Embeddings, and Flash Lite, querying complex research PDFs through a conversational loop.

Three real engineering lessons from the build:

Chunking isn't optional. Raw PDFs exhaust token limits instantly. 6-page splits solved it.

InMemoryDocumentStore works for prototyping, but re-vectorizes on every launch. Persistent DBs like Weaviate are the next step.

Haystack's pipeline is strict. Mis-wiring a retriever to a generator crashes the loop immediately. API contracts matter.
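
The 6-page split from the first lesson can be sketched in a few lines. This assumes the PDF has already been extracted into a list of per-page texts; `split_pages` is a hypothetical helper, not a Haystack function:

```python
def split_pages(pages, pages_per_chunk=6):
    """Group a list of page texts into consecutive chunks of N pages."""
    if pages_per_chunk < 1:
        raise ValueError("pages_per_chunk must be at least 1")
    return [
        pages[start:start + pages_per_chunk]
        for start in range(0, len(pages), pages_per_chunk)
    ]


# A hypothetical 14-page document, represented as page labels.
pages = [f"page {n}" for n in range(1, 15)]
chunks = split_pages(pages)
print([len(chunk) for chunk in chunks])  # [6, 6, 2]
```

The last chunk simply holds whatever pages are left over, so no page is dropped.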

Result: a CLI that maps user queries to the top 4 semantic chunks in RAM and returns grounded, non-hallucinated answers from your own documents.

Every mistake, every fix, on camera.
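
The retrieval step (query in, top 4 chunks out) boils down to ranking stored vectors by similarity. In the build this is handled by Haystack's retriever; the sketch below only illustrates the idea, with cosine similarity as an assumed metric and toy 2-D vectors instead of real embeddings:

```python
import math


def cosine(a, b):
    """Cosine similarity of two equal-length vectors (0.0 if either is zero)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def top_k(query_vec, store, k=4):
    """Return the ids of the k chunks most similar to the query."""
    ranked = sorted(store, key=lambda item: cosine(query_vec, item[1]), reverse=True)
    return [chunk_id for chunk_id, _ in ranked[:k]]


# Toy 2-D vectors; real embeddings have hundreds of dimensions.
store = [
    ("a", [1.0, 0.0]),
    ("b", [0.9, 0.1]),
    ("c", [0.7, 0.7]),
    ("d", [0.1, 0.9]),
    ("e", [0.0, 1.0]),
    ("f", [-1.0, 0.0]),
]
print(top_k([1.0, 0.0], store))  # ['a', 'b', 'c', 'd']
```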

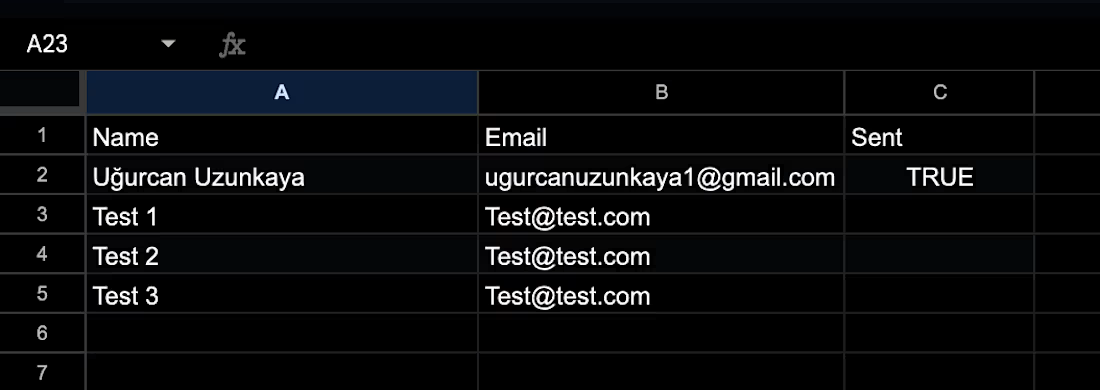

3 hours a week onboarding community members. Automated for $0.00/month.

Open Google Sheet. Track contacts. Send emails one by one. Every week.

So I built a serverless pipeline instead.

Every Monday, Cloud Scheduler triggers a Cloud Run Job. It reads new applications, sends onboarding emails via Resend, logs to Firestore, then shuts down. No idle compute. No manual work.

Two decisions worth noting:

SQLite on GCS crashed from file-locking issues. Switched to Firestore. Boot times improved immediately.

Hardcoded credentials replaced with Workload Identity Federation. Zero secrets in the repo.

Result: scales to zero, deploys on git push, runs on GCP's Always Free tier.
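
The body of one Monday run can be sketched as a single function that does its work and returns. The application source, the email provider, and the log store are passed in as plain callables here, so nothing below is the real Resend or Firestore API; all names are hypothetical:

```python
def run_onboarding_job(applications, already_contacted, send_email, log_contact):
    """One run of the job: email new applicants, record them, then return."""
    sent = []
    for app in applications:
        email = app["email"]
        if email in already_contacted:
            continue  # contacted in an earlier run
        send_email(email, app["name"])
        log_contact(email)
        sent.append(email)
    return sent


# Stand-ins for the application source, the email provider, and the log store.
applications = [
    {"name": "Ada", "email": "ada@example.com"},
    {"name": "Lin", "email": "lin@example.com"},
]
contacted = {"ada@example.com"}
outbox = []

sent = run_onboarding_job(
    applications,
    contacted,
    send_email=lambda email, name: outbox.append((email, name)),
    log_contact=contacted.add,
)
print(sent)  # ['lin@example.com']
```

Because the function exits when the loop ends, the container has nothing left to do and can shut down, which is what makes scale-to-zero possible.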

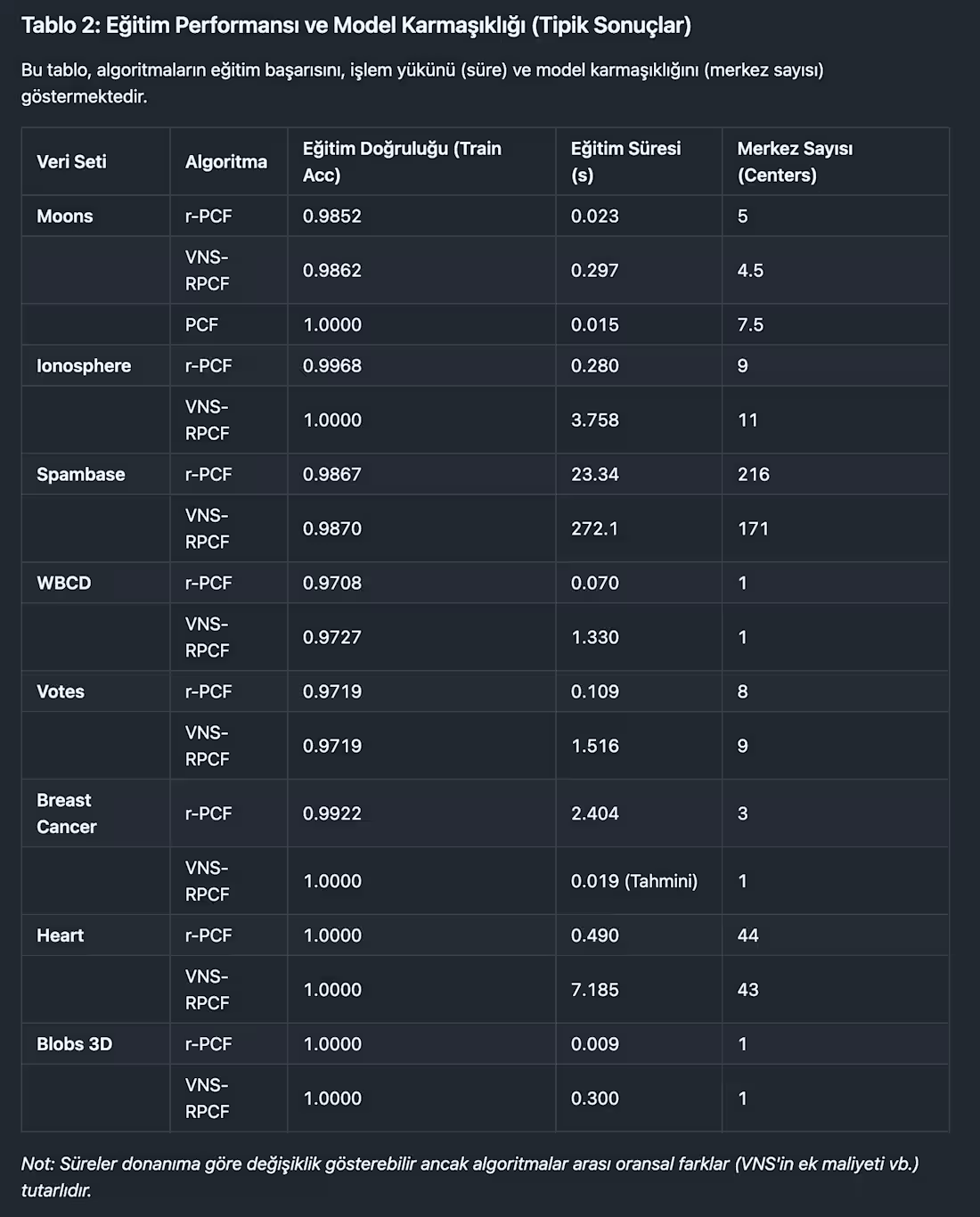

I implemented several optimization models and applied a metaheuristic (VNS) enhancement to see how the algorithms affect accuracy and other metrics.
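
For readers unfamiliar with VNS (Variable Neighborhood Search), here is a minimal sketch of the reduced variant, which shakes the current solution in progressively larger neighborhoods and skips the local-search step. The toy cost function and step sizes are made up for illustration and are unrelated to the project's models:

```python
import random


def reduced_vns(cost, start, neighborhoods, max_iters=200, seed=0):
    """Reduced VNS: try neighborhoods in order, restart from the first on gain."""
    rng = random.Random(seed)
    best, best_cost = start, cost(start)
    for _ in range(max_iters):
        k = 0
        while k < len(neighborhoods):
            candidate = neighborhoods[k](best, rng)  # shake in neighborhood k
            candidate_cost = cost(candidate)
            if candidate_cost < best_cost:
                best, best_cost = candidate, candidate_cost
                k = 0   # improvement: go back to the smallest neighborhood
            else:
                k += 1  # no gain: widen the neighborhood
    return best, best_cost


def step(size):
    """Neighborhood that moves an integer solution by +size or -size."""
    return lambda x, rng: x + rng.choice([-size, size])


def toy_cost(x):
    return (x - 7) ** 2  # minimum at x = 7


start = 100
best, best_cost = reduced_vns(toy_cost, start, [step(1), step(5), step(20)])
print(best, best_cost)
```

Only improving moves are accepted, so the returned cost is never worse than the starting cost.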

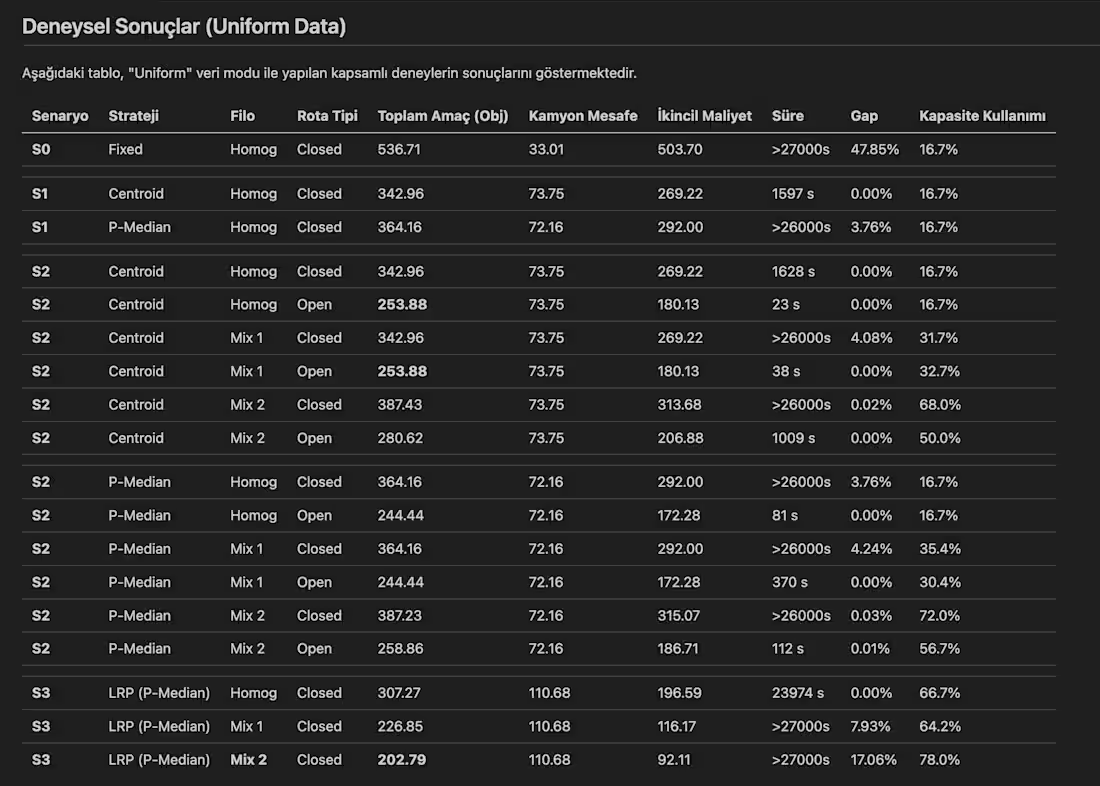

I worked on a project that tested multiple scenarios on a VRP model. I built the backend, the models, and the optimization components, then used parallelism to run every defined scenario so the results arrived within the limited time window.
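
Running independent scenarios in parallel can be sketched with the standard library's `concurrent.futures`. The scenario grid and `run_scenario` below are placeholders (the score is a dummy value, not a VRP result), and the post doesn't say which parallelism mechanism the project used:

```python
from concurrent.futures import ThreadPoolExecutor
from itertools import product


def run_scenario(scenario):
    """Placeholder for solving one VRP instance; returns a dummy score."""
    vehicles, demand_factor = scenario
    return {
        "vehicles": vehicles,
        "demand_factor": demand_factor,
        "score": vehicles * demand_factor,
    }


# Hypothetical scenario grid: every combination of fleet size and demand level.
scenarios = list(product([2, 4, 8], [1.0, 1.5]))

with ThreadPoolExecutor(max_workers=4) as pool:
    # map() preserves input order, so results line up with scenarios.
    results = list(pool.map(run_scenario, scenarios))

print(len(results))  # 6
```

For CPU-bound solvers written in pure Python, `ProcessPoolExecutor` offers the same `map` interface and sidesteps the interpreter lock.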

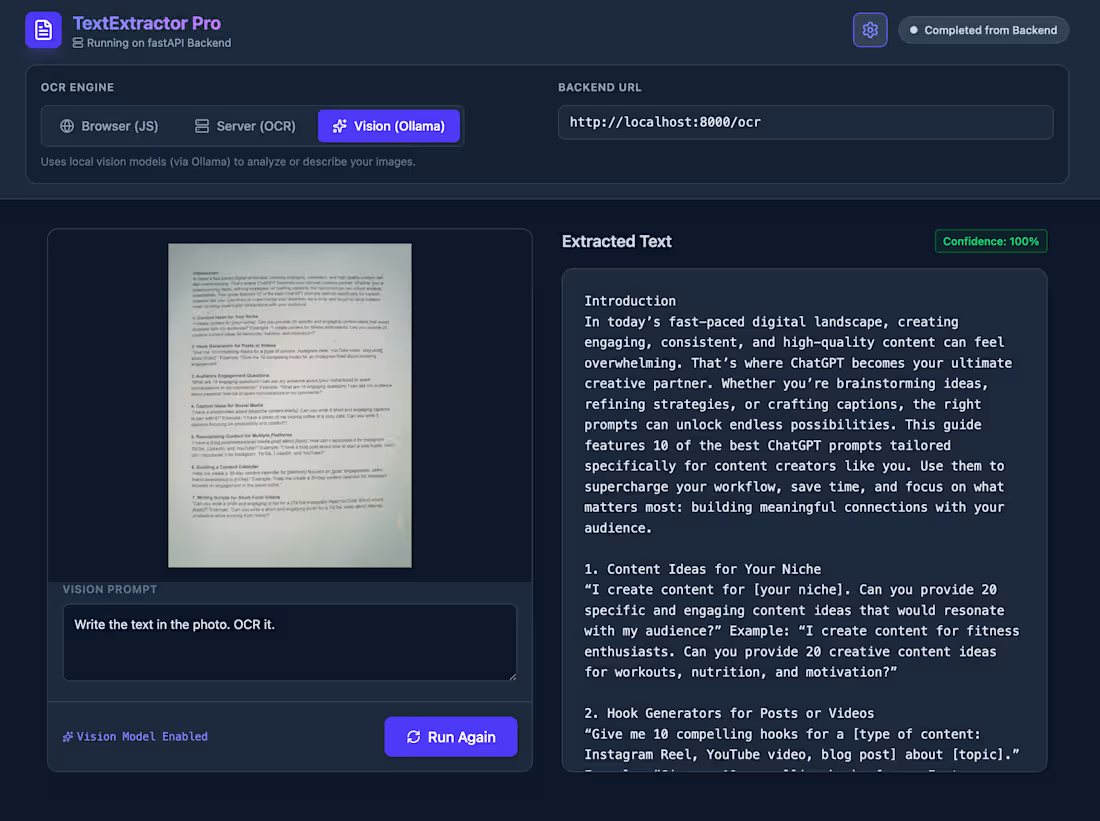

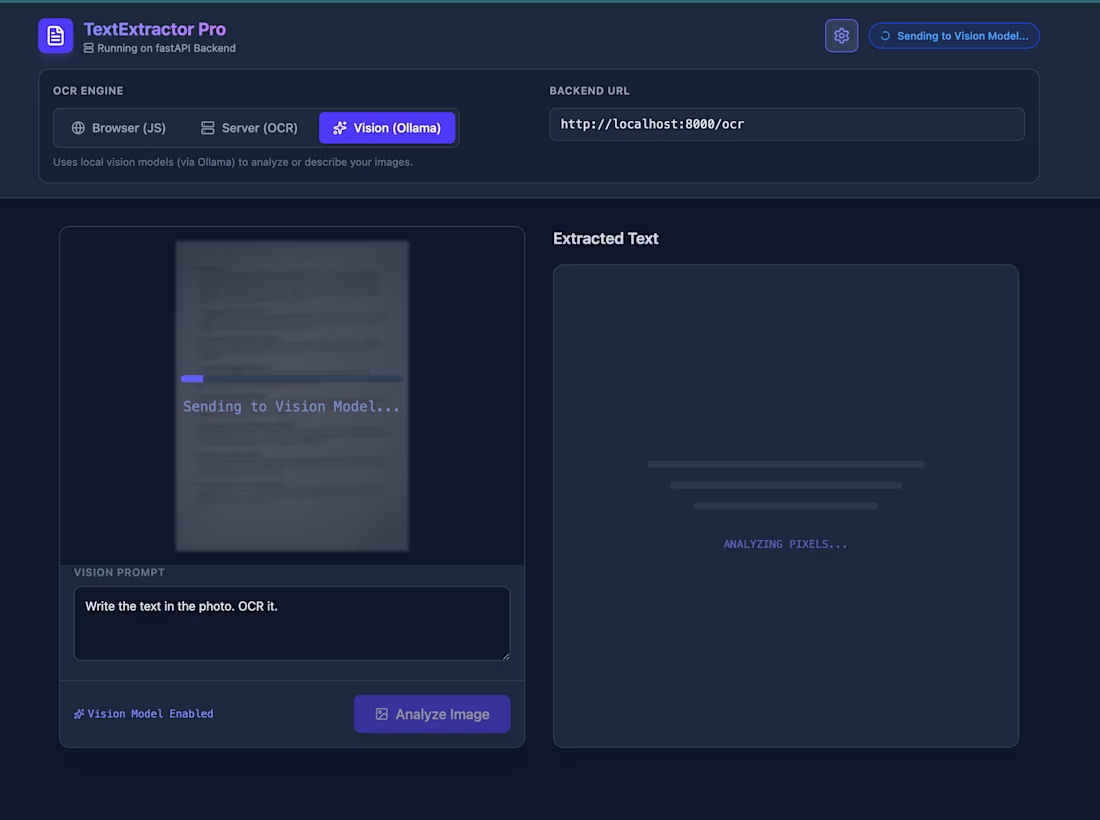

Building on my previous work, you can now prompt vision models and get more out of them: run OCR on a photo, extract more information from it, or get commentary on it. Confidence levels show how confident the system is in its feedback.

From an OCR app I created. It uses local LLMs, a backend ML model, and a frontend library to OCR the given photo.
