Taimoor Khan

Fullstack Developer: Next.js & AI Agents

Ready for work


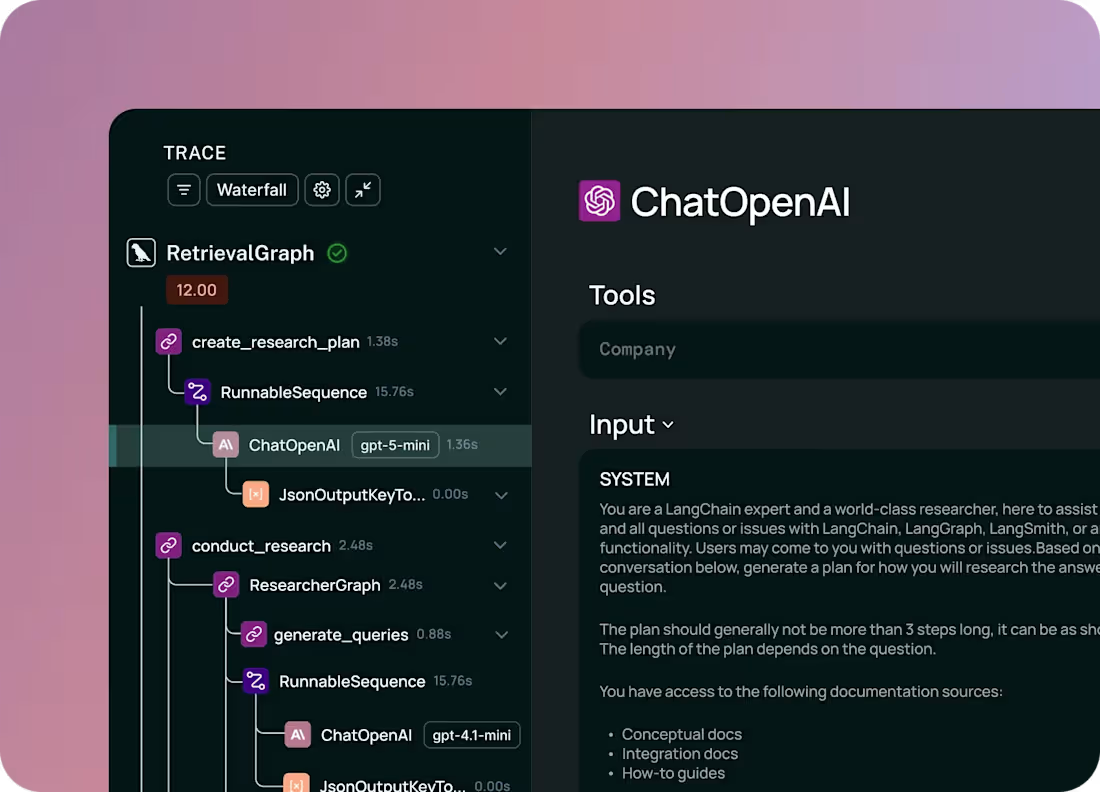

My AI agent was working… but I had no idea why it worked sometimes and completely fell apart other times. That’s when I plugged in LangSmith and realized I’d been flying blind.

Suddenly, my “agentic AI” wasn’t a black box anymore. I could see everything:

Every tool call, LLM invocation, and retry the agent made, plus tokens burned and cached, and every state transition across agent steps.
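To make that concrete, here is a minimal sketch of the kind of record a trace holds for each step, using only the Python standard library. It is not LangSmith's API: `TRACE`, `traced`, and `search_docs` are hypothetical names made up for illustration, and a real tracing tool also captures inputs, outputs, and token usage.

```python
import time
from functools import wraps

# Hypothetical in-memory trace; a real tool would send these records to a backend.
TRACE = []

def traced(kind):
    """Record the kind, name, status, and duration of every call to the wrapped function."""
    def decorator(fn):
        @wraps(fn)
        def wrapper(*args, **kwargs):
            start = time.perf_counter()
            status = "error"  # overwritten only if the call returns normally
            try:
                result = fn(*args, **kwargs)
                status = "ok"
                return result
            finally:
                TRACE.append({
                    "kind": kind,
                    "name": fn.__name__,
                    "status": status,
                    "seconds": time.perf_counter() - start,
                })
        return wrapper
    return decorator

@traced("tool")
def search_docs(query):
    # Hypothetical tool: stands in for a real retrieval call.
    return [f"result for {query}"]

search_docs("refund policy")
```

After the call, `TRACE` holds one entry describing the `search_docs` step, which is the raw material a trace viewer turns into a timeline.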

What surprised me most wasn’t the bugs; it was the waste. One trace showed my system prompt alone was 6.5k tokens. Not because it needed to be… but because I had never seen it before.

So I fixed it.

Refactored prompts -> 1.5k tokens, same output quality

Switched to dynamic system prompts, injected only when complexity demanded it
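A dynamic system prompt can be sketched roughly like this. The post doesn't say how complexity was detected, so the keyword heuristic, the prompt texts, and every name below (`BASE_PROMPT`, `PLANNING_PROMPT`, `is_complex`, `build_system_prompt`, `rough_token_count`) are assumptions for illustration only.

```python
BASE_PROMPT = "You are a helpful assistant. Answer concisely."

# Hypothetical extra instructions, only needed for multi-step work.
PLANNING_PROMPT = (
    "Break the task into steps, pick one tool per step, "
    "and verify each result before continuing."
)

# Hypothetical heuristic: phrases that hint at a multi-step request.
COMPLEX_HINTS = ("generate", "compare", "step by step", "report")

def is_complex(user_message: str) -> bool:
    """Guess whether a request needs the longer planning instructions."""
    text = user_message.lower()
    return len(text.split()) > 40 or any(hint in text for hint in COMPLEX_HINTS)

def build_system_prompt(user_message: str) -> str:
    """Return the short prompt by default and append the planning section only when needed."""
    if is_complex(user_message):
        return BASE_PROMPT + "\n\n" + PLANNING_PROMPT
    return BASE_PROMPT

def rough_token_count(text: str) -> int:
    # Crude estimate (about 4 characters per token for English text);
    # use the model's own tokenizer for real numbers.
    return max(1, len(text) // 4)
```

Simple questions then pay only for the base prompt, while a request such as "Generate a quarterly report" also gets the planning section. Comparing `rough_token_count` for both cases gives a quick sense of the savings.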

Lesson learned: If you’re building agentic systems without observability


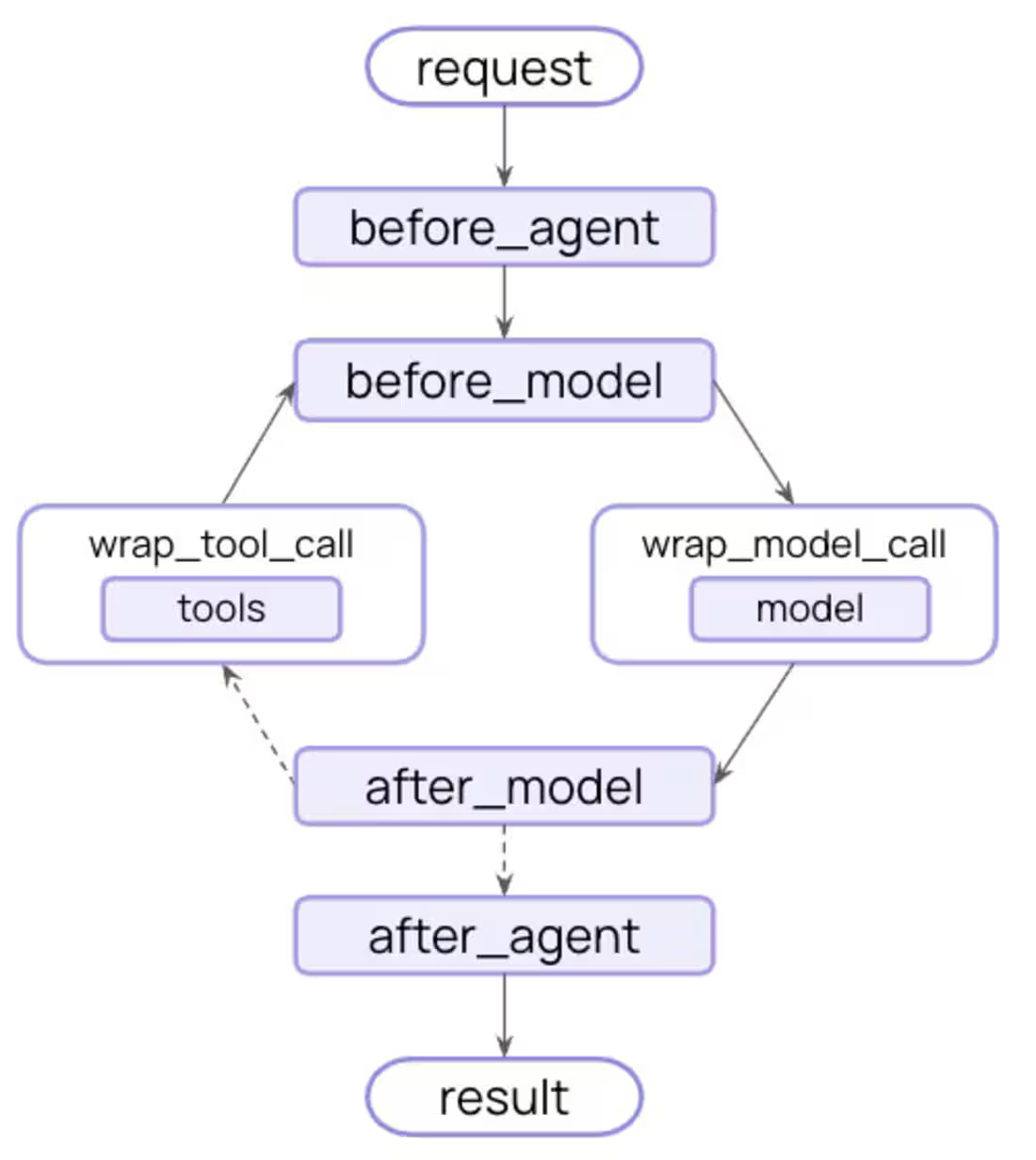

If you didn’t know LangChain (https://www.langchain.com/) V1 launched last month, you’re officially late to the party. At least I was, until I stumbled into it while upgrading a client’s system.

What I discovered completely changed the project. I had developed a RAG agent with LangChain v0.3.

The plan? Add document generation and agent behavior on top. Then I dug into V1.

The result?

Enabled intent-based tool use, real tools, short/long-term memory, stable multi-step reasoning, runtime model switching, and predictable actions.

What started as a "simple upgrade" turned into building something that actually felt intelligent.

The lesson? When a framework makes a leap this big, resistance is more expensive than rewriting.

I'll be sharing how I built the document generation tools and the lessons that saved me hours of debugging.


Developed UI for custom Shopify storefronts


Developed backend for a dating app


E-commerce Website Development
