Romans Numcevs

Python backend engineer | APIs, pipelines, RAG

New to Contra

Romans is building their profile!

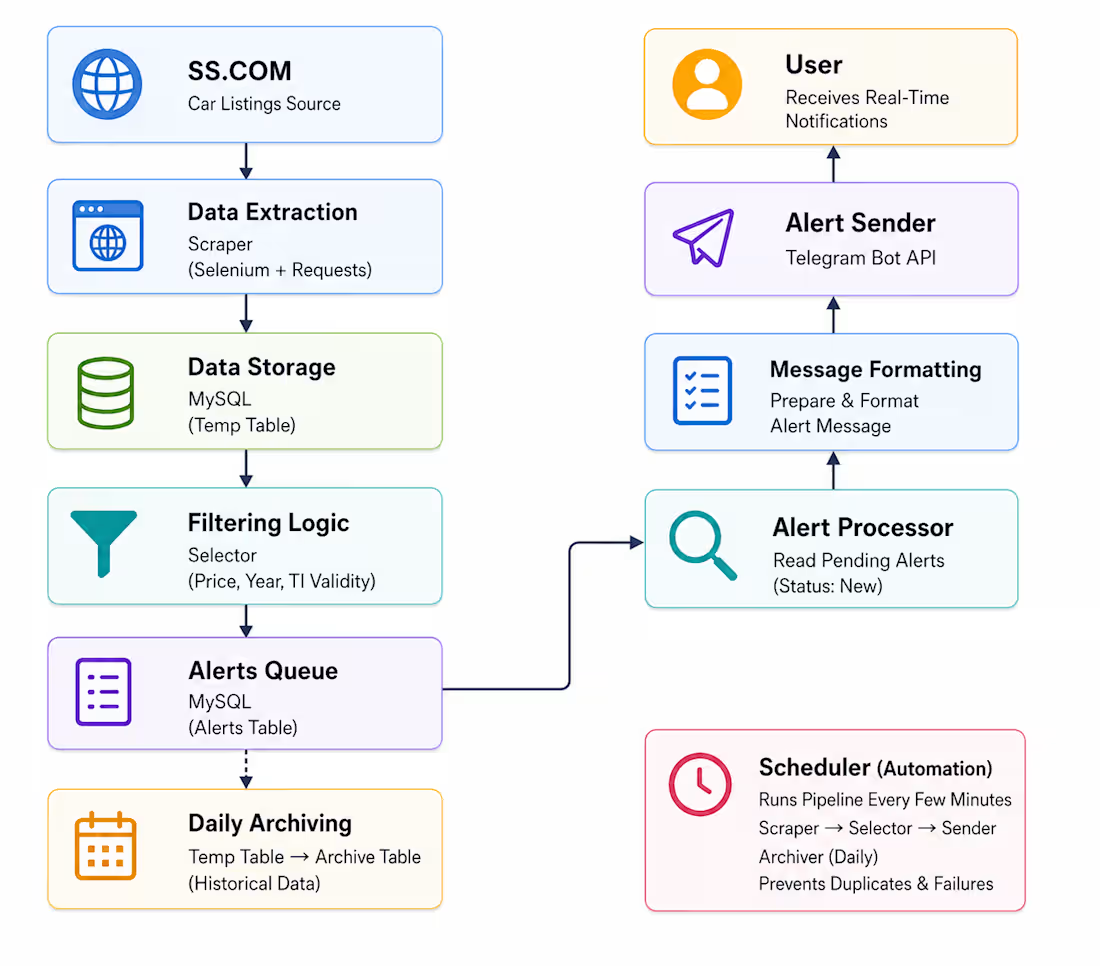

This project is an automated data pipeline that continuously monitors car

listings from SS.COM (http://SS.COM), processes them, filters high-value deals, and

delivers real-time alerts via Telegram.

The system is designed to run autonomously on a server, ensuring up-to-date

monitoring with minimal manual intervention.

How it works

1. Scraper collects new listings

2. Data stored in SQL table

3. Selector filters best deals

4. Alerts queued in another SQK table

5. Sender delivers alerts to Telegram

6. Archiver moves old data to history

0

22

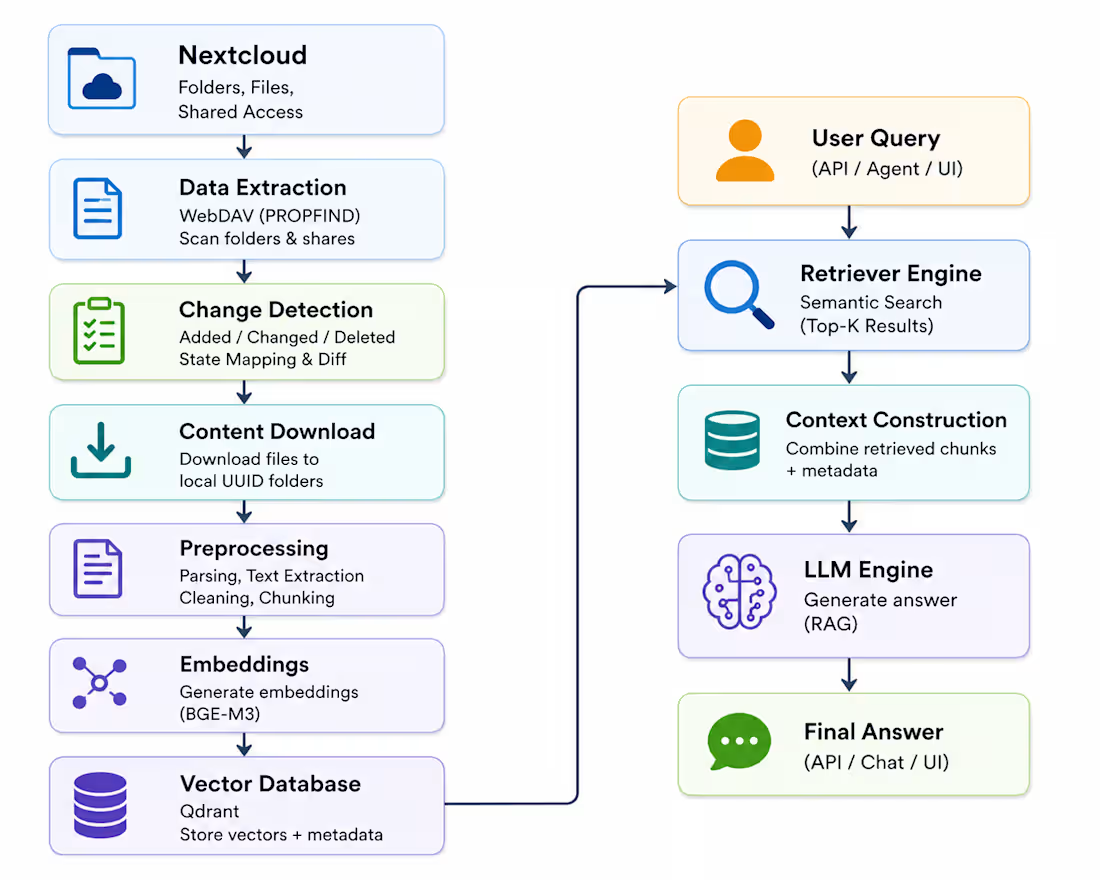

The automated system that synchronizes Nextcloud content and transforms it into a searchable AI-powered knowledge base. The solution connects cloud storage with backend services, processes documents, and enables fast semantic search using vector embeddings.

How It Works

1. Extract Data Connects to Nextcloud via WebDAV and scans folders + access rights.

2. Detect Changes Identifies added, updated, or removed content.

3. Sync Platform Updates backend groups and user permissions via API.

4. Download Content Stores files in structured collection folders.

5. Process Documents Extracts text and splits into searchable chunks.

6. Vectorize Content Converts data into embeddings stored in Qdrant.

7. Enable AI Search Retrieves relevant data and generates answers using LLM.

0

34

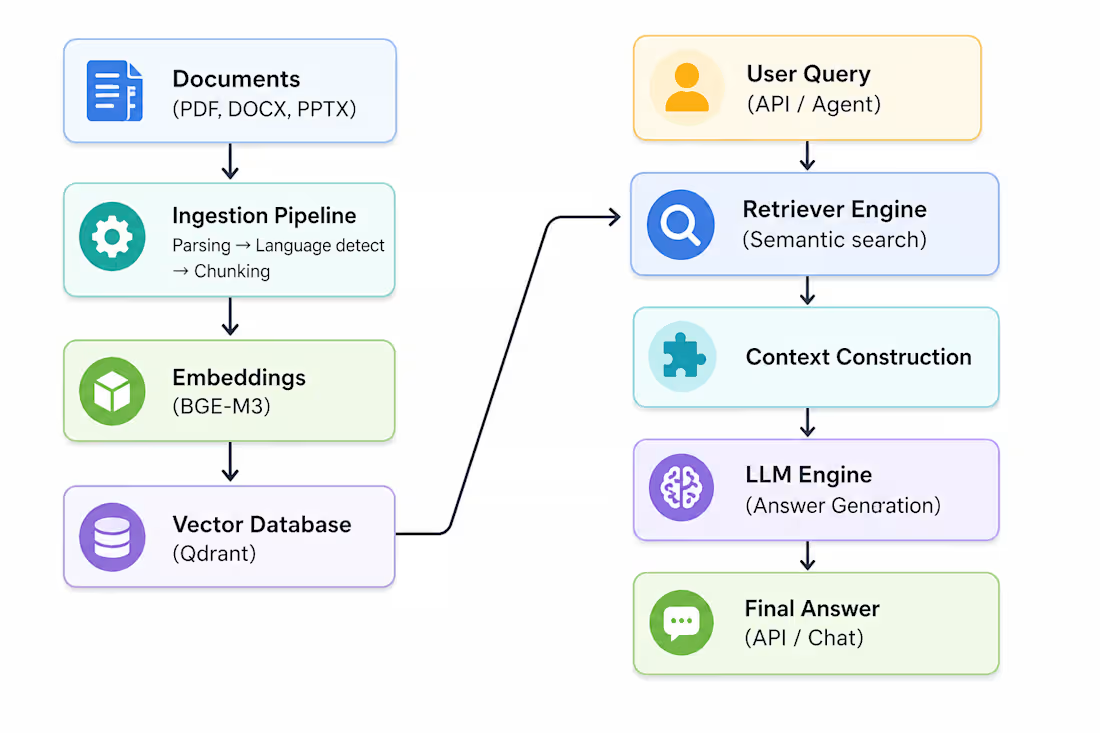

End-to-end AI system that converts documents into a searchable knowledge base and answers user queries using semantic search and LLMs. Designed as a production-ready pipeline with ingestion, vector search, and real-time AI responses.

How It Works

1. Documents are uploaded and processed into structured text

2. Content is split into hierarchical chunks and embedded

3. Embeddings are stored in a vector database (Qdrant)

4. User query is converted into a vector

5. Relevant document chunks are retrieved

6. Context is constructed and passed to LLM

7. Final answer is generated and returned via API or chat

0

39