What? A Short AI Rom-Com

NOTE: FINAL RESULT AT THE VERY END OF THIS CASE STUDY

THE RULES

Submit a short film made with AI for the AI Film Festival. The deadline was two months after the announcement.

At least 70 percent of all film assets had to be created with Google AI tools

The final video had to be generated entirely with Google DeepMind VEO 3.1

Runtime had to land between 7 and 10 minutes

You had to choose one of two themes: The Inner Life Of or Rewrite Tomorrow

SUCCESS CRITERIA

Make something that actually moves people

Build characters that feel real and stay consistent across many scenes

Finish the whole thing solo, in a very short window of time

WHAT SPARKED THE STORY

It started with something personal: I have only about 40 percent of my hearing, and for years I pretended everything was fine.

That turned into a twelve-page script about Frank, an aging jogger doing exactly the same.

I studied directing at Columbia University and loved my years in New York, so I dropped the story right into my old neighborhood.

From there it became a playful rom-com. Frank meets a woman at a hearing institute, stumbles through sign-language lessons, misreads every cue, and slowly learns what happens when you finally decide to listen.

A sweet comedy about connection hiding in plain sight.

I initially intended to create a Pixar-style cartoon, because I felt realism might prove too difficult for AI. But I had just made a realistic character, a similar old man, for an AI music video called ‘Yellow Tail’, so I felt it might just be possible to go realistic.

And so my initial characters came to life as real people.

BUILDING THE WORLD

The film has 22 scenes spread across different locations, interiors, exteriors, day, night, and everything in between.

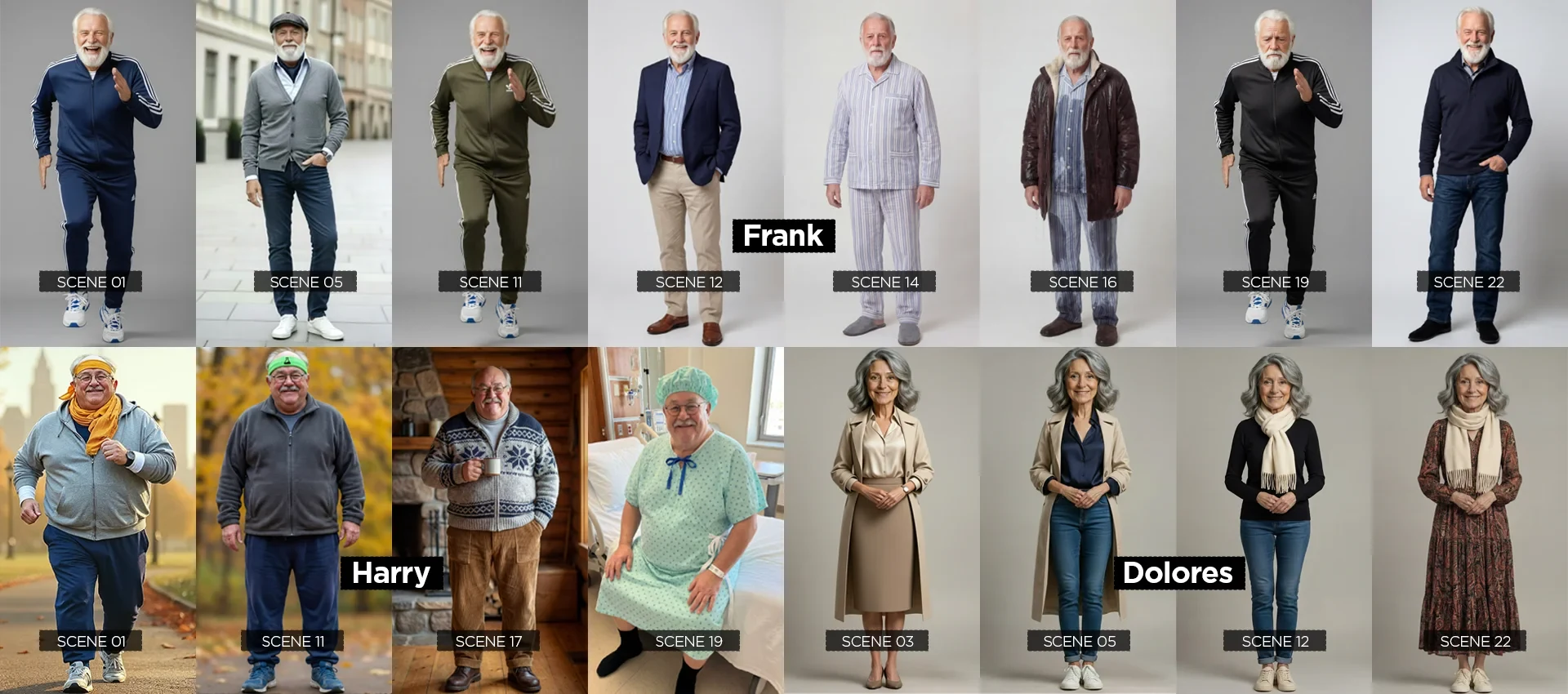

Because the story jumps across different moments in time, almost every character needed multiple outfits to keep continuity believable.

So before I could “film” anything, I had to build every look for every character from scratch.

Multiple scene outfits for Characters

LOCATIONS

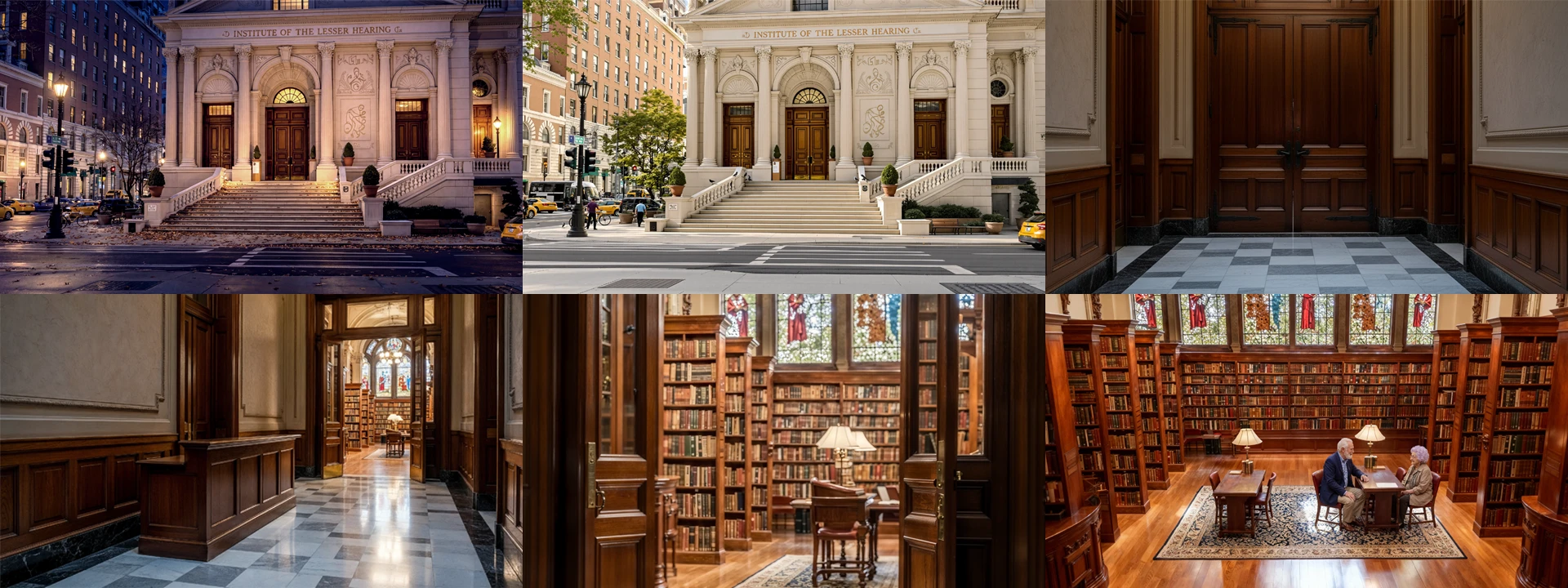

What are AI-generated characters without a digital world to live in? All of these locations are based on real places.

Central Park & Riverside Park

The Institute of the Lesser Hearing

Frank's Neighborhood (The Upper West Side of NYC)

Generic Crossroads in NYC

INGREDIENTS

Each character needed to stay instantly recognizable, so I built an Ingredient set for each one: one close-up, one full-body. Simple, but surprisingly powerful.

It kept results stable in Nano Banana, and later in NB Pro, and it was crucial for VEO’s Ingredients mode, which generates video using both your prompt and your image references instead of a single first frame.

Ingredient for the character Glenda

GOOGLE GEMS & VEO 3.1 TIME STRUCTURED PROMPTS

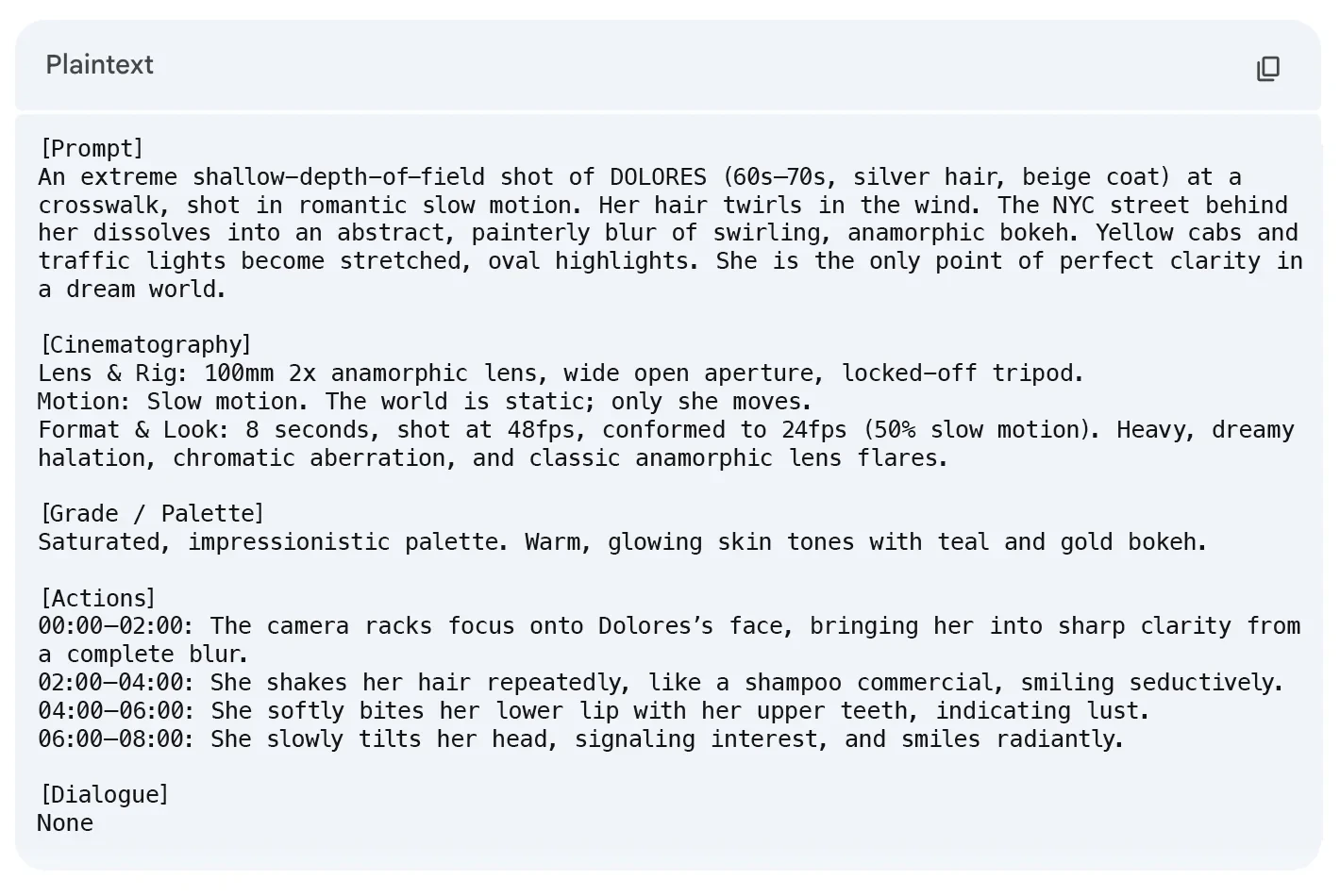

To dial in the prompts, I built a custom Gem in Gemini 3. Think of it as Google’s version of a GPT, but tuned specifically for this film.

I based it on instructions by @jboogxcreative (Tyler Bernabe) on Patreon and adjusted them to my workflow, which sped things up a lot. I’d describe what I wanted, and the Gem would return four different prompt interpretations. From there I’d test them using Begin and End Frames or VEO’s Ingredients mode in Flow.

Here is an example of one of the actual prompts I used, and the shot it produced

PROP CREATION

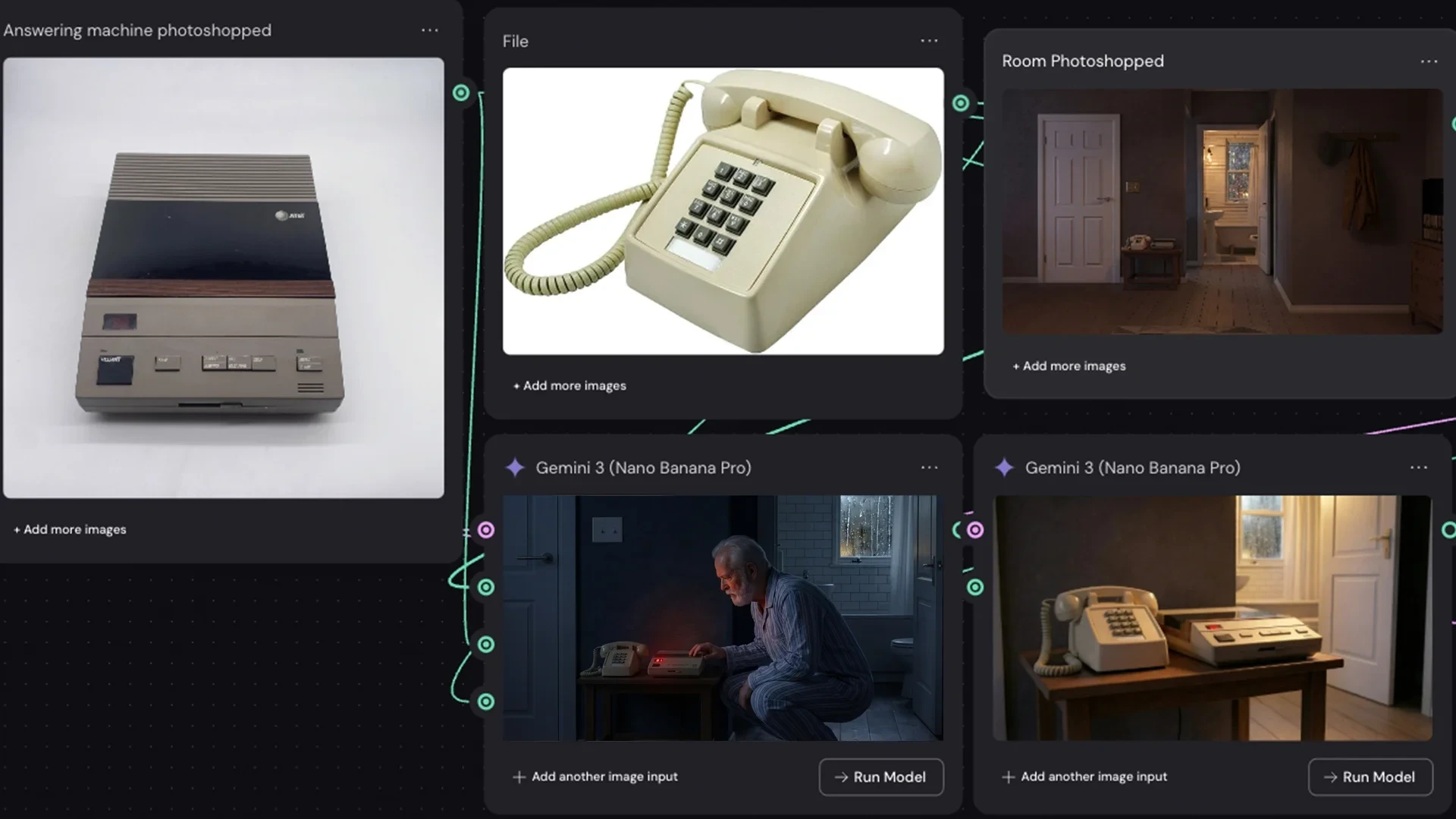

To keep the props grounded in reality, I hunted down real items with Google Search, then either cleaned them up or had Nano Banana generate polished product-shot versions.

Then I pulled everything into Weavy AI, which lets you wire up different nodes, add a prompt, and shape the output exactly how you want it.

That’s how I built scenes with specific objects, like this 80s AT&T answering machine paired with a retro push-button phone.

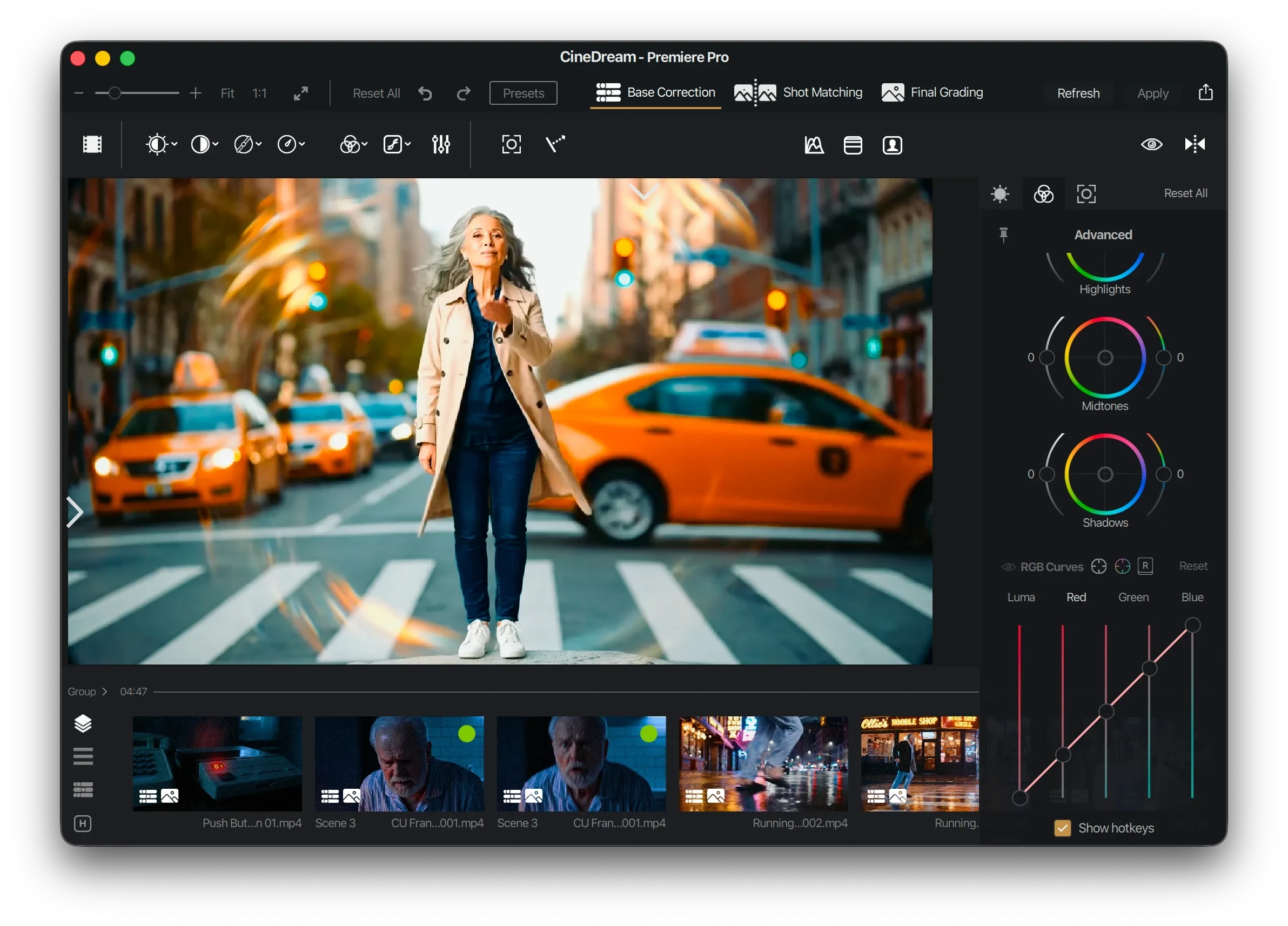

CINEDREAM

Color grading is always tricky. I’m not a colorist, so I looked for something that felt intuitive instead of technical.

I found a plugin that made the process much easier. It adds film grain in a natural way, less in the highlights, more in the shadows, and comes with a solid set of LUTs for quickly finding a look.

I ended up liking it so much that I added a link for anyone who wants to check it out: Click here for CineDream

In short: an easy way to give AI-generated footage a proper film feel.

The CineDream interface – Intuitive for non-colorists who want great results fast.
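For the curious, here is a rough sketch of the idea behind grain that sits heavier in the shadows than in the highlights. It is not CineDream's actual algorithm, which I don't know; the function names (`grain_strength`, `add_grain`) and the strength values are assumptions made up for illustration, and the "frame" is just a tiny grid of brightness values between 0 and 1.

```python
import random


def grain_strength(luma: float,
                   shadow_amount: float = 0.08,
                   highlight_amount: float = 0.02) -> float:
    """Grain amplitude for one pixel: strongest at black, weakest at white.

    The two amounts are illustrative guesses, not values from any plugin.
    """
    luma = min(max(luma, 0.0), 1.0)  # keep brightness inside 0..1
    # Straight-line blend from the shadow amount down to the highlight amount.
    return shadow_amount + (highlight_amount - shadow_amount) * luma


def add_grain(pixels: list[list[float]], seed: int = 0) -> list[list[float]]:
    """Return a copy of a grayscale frame with brightness-dependent noise added."""
    rng = random.Random(seed)  # seeded so the result is repeatable
    grained = []
    for row in pixels:
        new_row = []
        for p in row:
            noisy = p + rng.gauss(0.0, grain_strength(p))
            new_row.append(min(max(noisy, 0.0), 1.0))  # clamp back to 0..1
        grained.append(new_row)
    return grained


frame = [[0.1, 0.9], [0.5, 0.5]]
print(add_grain(frame, seed=1))
```

Dark pixels get noise with a wider spread than bright ones, which is roughly why this kind of grain reads as film rather than as a flat overlay.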

VOICES

VEO 3.1’s biggest perk is instant video with perfectly synced audio.

The catch: you don’t always get the same voice twice.

No matter how hard I pushed for a New York accent (or Glenda’s East End vibe), VEO often threw back standard American, random British, or whatever it felt like that day. Some takes even arrived with unexpected soap-opera background music, so a few moments needed dubbing.

At first I tried setting first frames with the characters in a recording booth, hoping for clean audio. Instead I got voices that sounded like they were recorded inside a giant bass drum. In the end the best method was simple: regenerate the takes, pick the video I liked, and sync it with the cleanest audio from another pass.

Zelda in a voice over recording booth

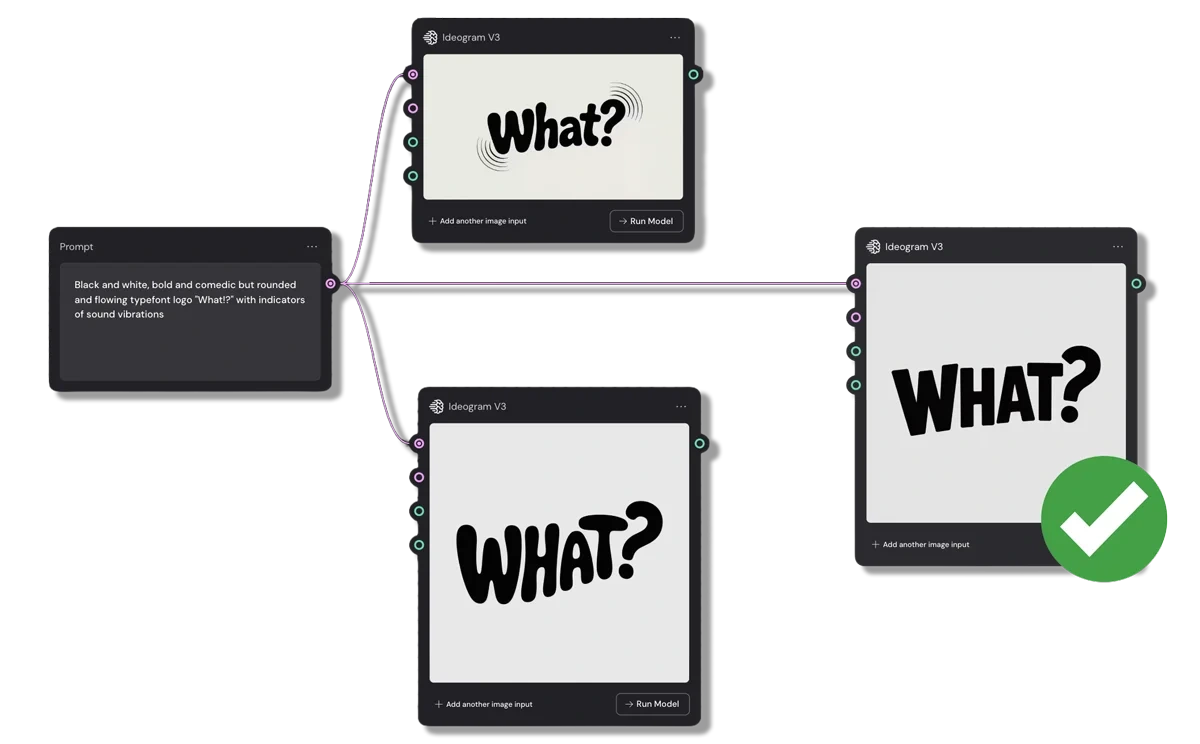

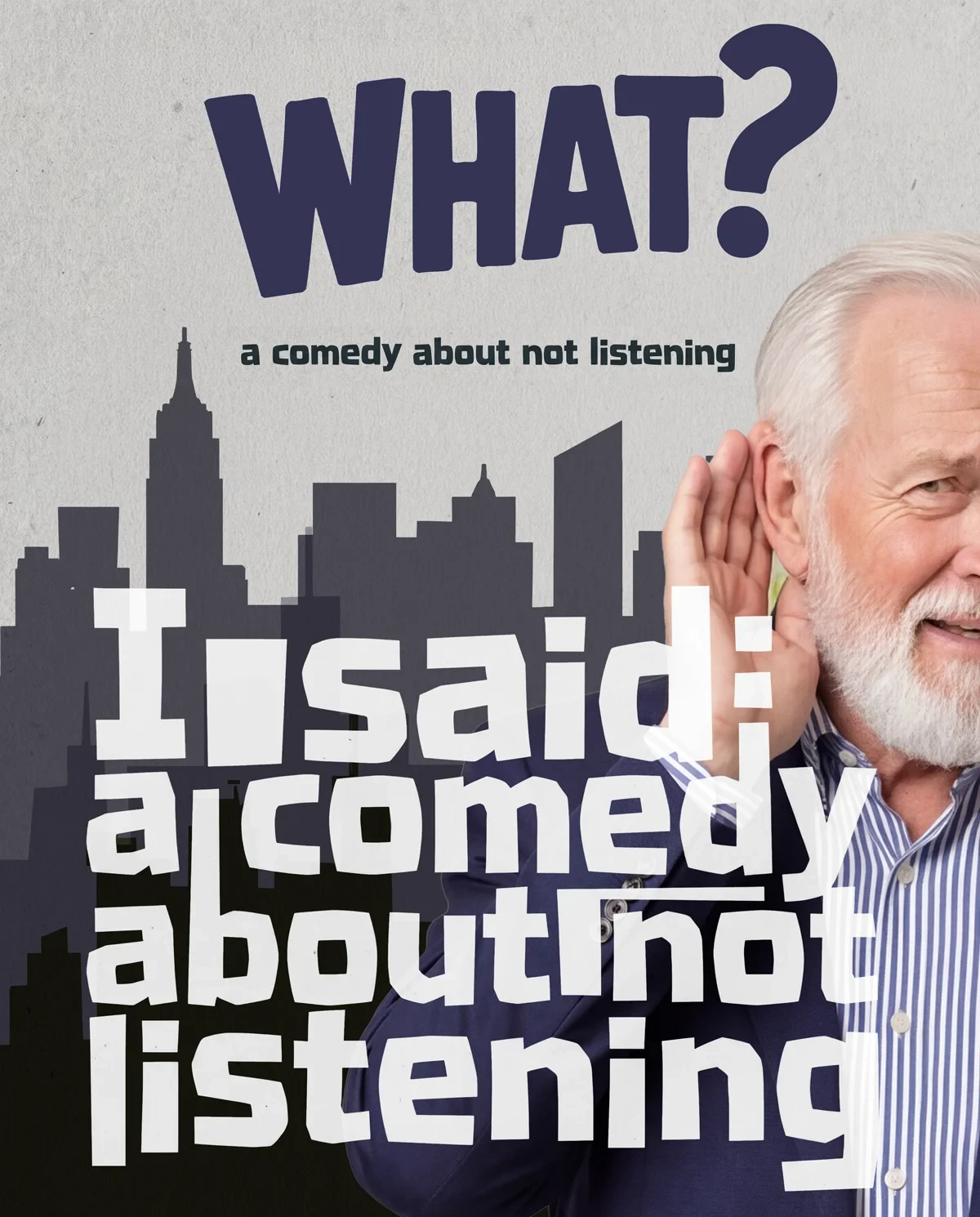
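The take-swapping trick described above (picture from one pass, sound from another) can also be done on the command line. Below is a minimal sketch that builds an ffmpeg command for it. This is an assumption about one possible way to do it, not a record of the tool used on the film; the helper name `build_mux_command` and the file names are hypothetical. It only works well when both takes have the same timing.

```python
def build_mux_command(video_take: str, audio_take: str, output: str) -> list[str]:
    """Build an ffmpeg command that keeps the picture of one take
    and the sound of another. File names are placeholders."""
    return [
        "ffmpeg",
        "-i", video_take,   # input 0: the take with the best picture
        "-i", audio_take,   # input 1: the take with the cleanest audio
        "-map", "0:v:0",    # use the first video stream of input 0
        "-map", "1:a:0",    # use the first audio stream of input 1
        "-c:v", "copy",     # do not re-encode the picture
        "-shortest",        # stop at the end of the shorter stream
        output,
    ]


cmd = build_mux_command("take_03.mp4", "take_07.mp4", "frank_line_final.mp4")
print(" ".join(cmd))
# To actually run it (requires ffmpeg to be installed):
# import subprocess; subprocess.run(cmd, check=True)
```

If the lips drift, the audio still needs a manual nudge in the edit, so treat this as a starting point rather than a fix.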

FILM LOGO

For the logo, I used Ideogram inside Weavy, a node-based AI system where multiple models work together. Ideogram is especially strong at clean typography and graphic layouts, which made it a great fit for a simple, bold title.

The film was originally called Deaf, but What? felt way less on the nose.

And I loved the side effect. People can now say, “I’ve seen What?”

“What have you seen?”

“Exactly!”

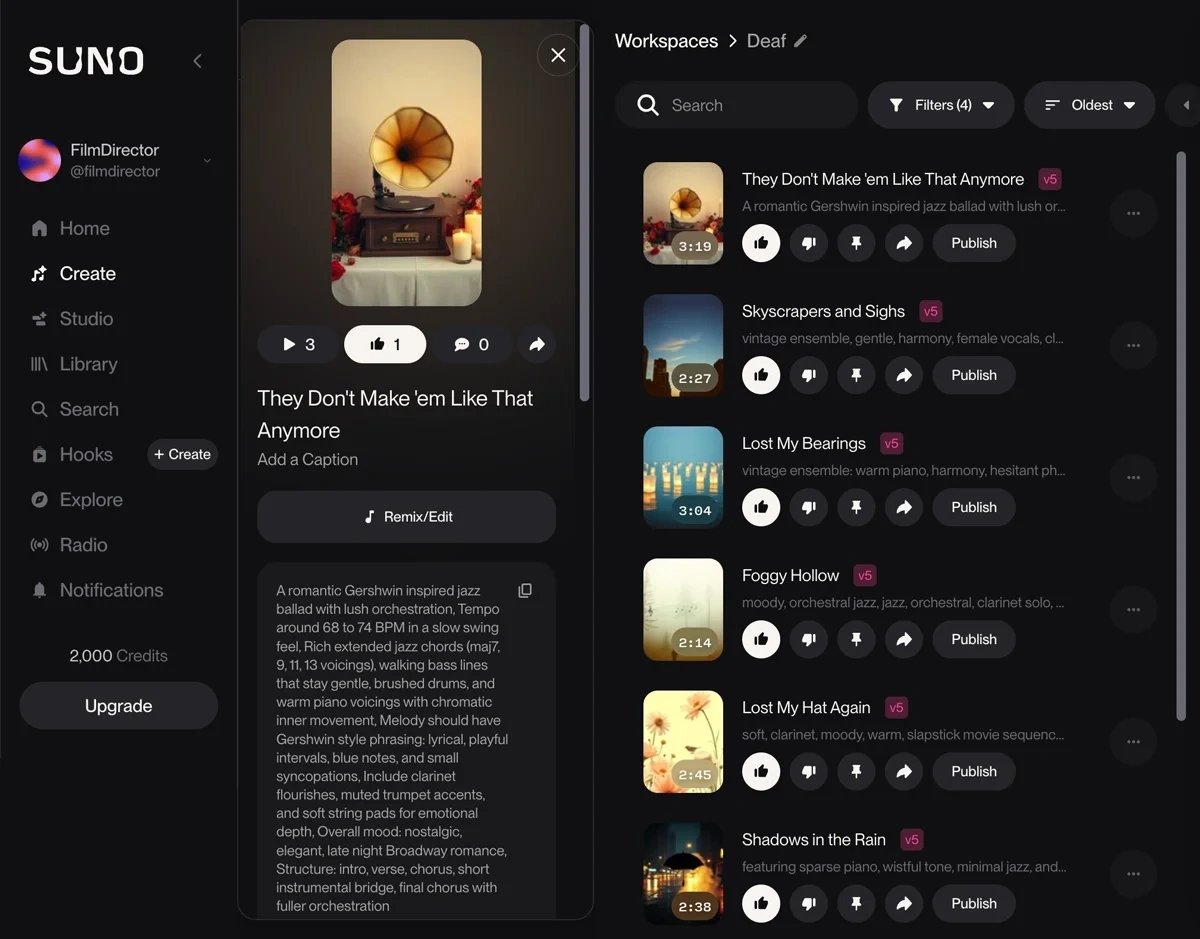

MUSIC

I wanted the film to feel like New York. And when I think of NYC, I think of Woody Allen’s Manhattan. So of course the film needed some Gershwin-style orchestration and a bit of jazz.

The Suno AI interface

The song “They don’t make them like that anymore” was a happy accident. I wrote the line for Frank to mumble, but VEO made him sing it. So I thought: fine, let’s make it a real song.

I had a blast playing with the music. Hitting cues, syncing a heartbeat monitor to the rhythm, even letting Frank whistle during the chase scene. Little things, but they make the whole film feel alive.

Frank whistles to the music soundtrack

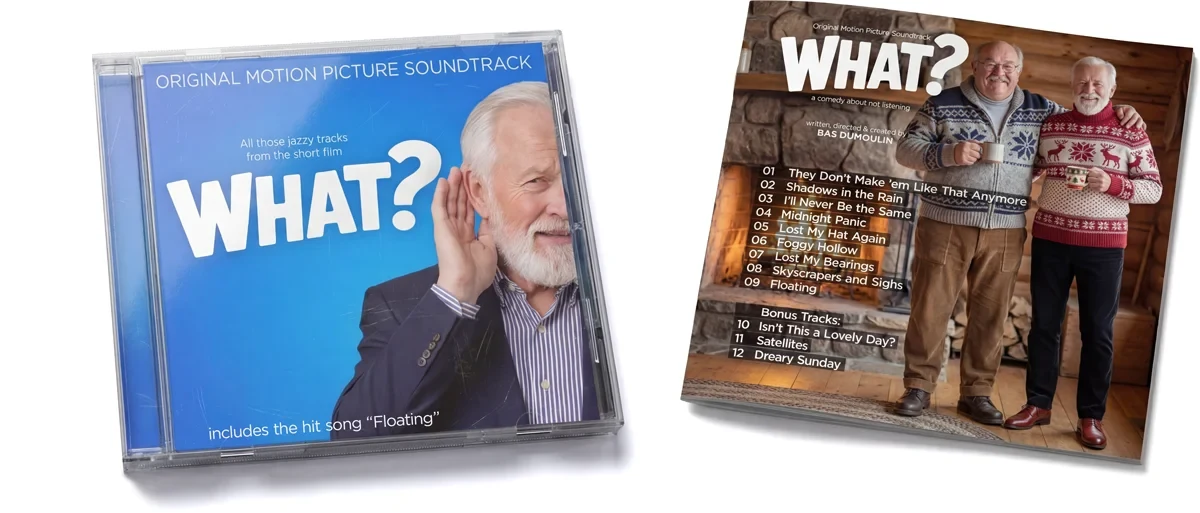

All tracks are available on all major platforms, because honestly, the soundtrack deserved a life of its own.

The artwork for the jewel-case CD and booklet.

ON THE CUTTING ROOM FLOOR

There was an extra scene that leaned into the festival’s second theme, Rewrite Tomorrow. I liked it, but to stay under the ten-minute runtime, it had to go.

Painful, but necessary.

This scene ended up being cut from the film

BEHIND THE SCENES - FEATURETTE

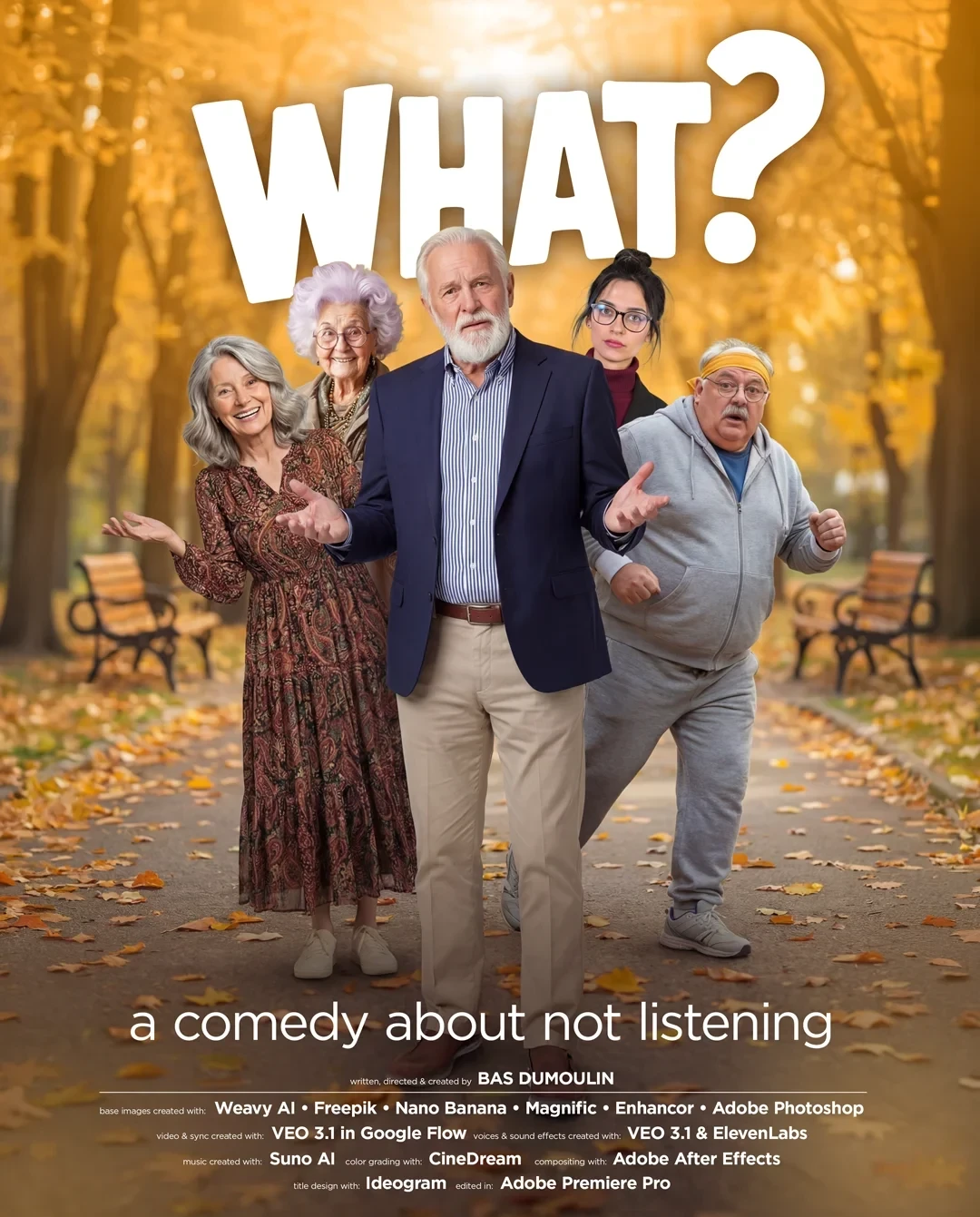

POSTERS

I’d heard Gemini 3 was shockingly good at graphic design, so I put it to the test. It nailed the poster on the very first try.

I still had to hop into Photoshop to make space for the credits, since Gemini filled every inch of the layout, but honestly… that was a tiny fix. Overall I was seriously impressed.

THE FINAL RESULT

FINAL THOUGHTS:

What started as an experiment turned into something very personal.

I wanted to see if I could tell a story the old-fashioned way, through dialogue, timing, awkward silences, and people with flaws.

No masked sci-fi creatures. No abstract voice-over explaining the theme. Just characters trying to connect, and sometimes failing.

The tech challenge was very real though. Every generated shot was limited to 8 seconds, which turns emotional continuity into a bit of a puzzle. Holding a performance, matching eyelines, connecting reactions, all of that suddenly becomes editing gymnastics. Fun ones. Sometimes painful ones.
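To put a number on that puzzle, here is a quick back-of-the-envelope calculation using only figures from this case study: the 8-second cap per shot and the 7 to 10 minute runtime rule. The function name is made up for illustration, and the result is a floor, since it assumes every clip is used at its full length with no trims or discarded takes.

```python
import math

MAX_CLIP_SECONDS = 8            # per-shot generation limit
MIN_RUNTIME_SECONDS = 7 * 60    # festival minimum runtime
MAX_RUNTIME_SECONDS = 10 * 60   # festival maximum runtime


def min_clips_needed(runtime_seconds: int,
                     clip_seconds: int = MAX_CLIP_SECONDS) -> int:
    """Fewest clips that can fill the runtime if each runs its full length."""
    return math.ceil(runtime_seconds / clip_seconds)


print(min_clips_needed(MIN_RUNTIME_SECONDS))  # 53
print(min_clips_needed(MAX_RUNTIME_SECONDS))  # 75
```

So even in the best case the film is stitched together from somewhere between 53 and 75 separate generations, and in practice far more, because most shots are cut shorter than 8 seconds.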

A bigger challenge was resisting the temptation to lean on spectacle. AI makes it very easy to impress. It’s much harder to stay small, human, and a little uncomfortable. That’s where I wanted this film to live.

What? is about aging, miscommunication, loneliness, and the quiet hope that you might still be understood if you try one more time.

I made it because I wanted to see if traditional storytelling still holds up inside a very new medium. For me, it does.


Posted Dec 23, 2025

Created a short film using AI tools for a festival, focusing on storytelling and emotional connection.