Wav2Lip Video Generation Pipeline

This project creates lip-synced videos from an input image and a text prompt. The pipeline converts the text into speech (TTS), then uses the Wav2Lip model to animate the image so that the lip movements match the audio.

🔥 Features

Convert any text into audio using TTS (Text-to-Speech)

Use Wav2Lip to generate a lip-synced video from a static face image and audio

Automatic audio extraction and video frame preparation

Resize factor customization to fit different GPU memory requirements

CUDA support for fast inference

🛠️ Setup

1. Clone the Repository

2. Set Up Python Virtual Environment (Recommended)

3. Install Dependencies

Make sure you have ffmpeg installed and accessible via command line.

4. Download Required Models

a. Clone Wav2Lip Repository

Clone the official Wav2Lip repository into the project root directory:

b. Wav2Lip Checkpoint (.pth file)

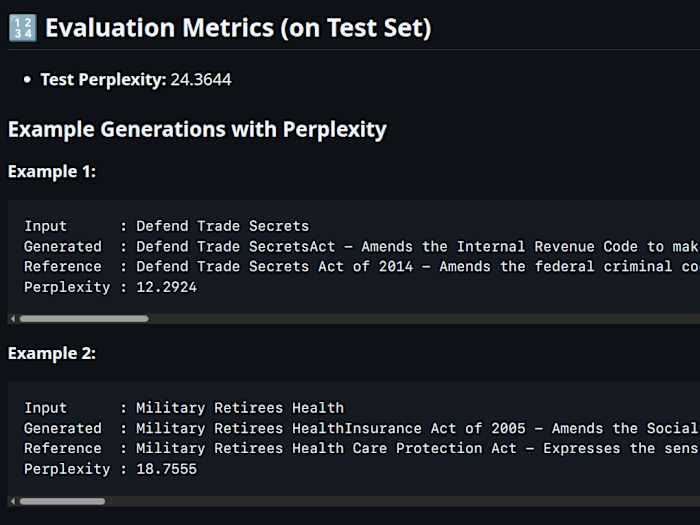
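A partially downloaded checkpoint, or a web page saved in its place, tends to show up only later as a load error such as invalid load key, '<'. The following is a minimal sketch of a header check that can be run right after downloading. The function name `sniff_checkpoint` and the example path are illustrative and not part of this project. The check cannot tell a TorchScript archive from a newer-format PyTorch checkpoint, since both are zip files.

```python
def sniff_checkpoint(path):
    """Classify a model file by its first bytes (a heuristic, not a full validation)."""
    with open(path, "rb") as f:
        head = f.read(16)

    if not head:
        return "empty"
    if head.startswith(b"PK"):
        # Zip container: newer torch.save format or a TorchScript archive.
        return "zip"
    if head.startswith(b"\x80"):
        # Pickle protocol marker: legacy torch.save format.
        return "pickle"
    if head.lstrip().startswith(b"<"):
        # Markup, most likely an HTML page saved instead of the model.
        return "markup"
    return "unknown"


if __name__ == "__main__":
    import sys

    # Example: python sniff_checkpoint.py path/to/checkpoint.pth
    if len(sys.argv) > 1:
        print(sniff_checkpoint(sys.argv[1]))
```

A result of "markup" suggests downloading the file again rather than debugging the loader.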

⚙️ How It Works

Input text is converted into audio using a TTS system.

The audio is saved to ./audio/output.wav.

The image and audio are passed to the Wav2Lip model.

A video is generated where the lips of the image move in sync with the spoken audio.
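The steps above can be sketched in Python roughly as follows. This is an illustration under stated assumptions, not this project's actual entry point: it assumes the Wav2Lip clone lives in ./Wav2Lip, and `synthesize` is a placeholder for whichever TTS function you use (it only needs to write a WAV file to the given path). The flags passed to inference.py are the ones defined by the upstream Wav2Lip script.

```python
import shutil
import subprocess
import sys
from pathlib import Path

WAV2LIP_DIR = Path("Wav2Lip")  # assumed location of the cloned repository


def build_wav2lip_command(face, audio, checkpoint, outfile, resize_factor=1):
    """Build the argument list for Wav2Lip's inference.py.

    Paths are made absolute because the script is run from inside WAV2LIP_DIR.
    """
    return [
        sys.executable, "inference.py",
        "--checkpoint_path", str(Path(checkpoint).resolve()),
        "--face", str(Path(face).resolve()),
        "--audio", str(Path(audio).resolve()),
        "--outfile", str(Path(outfile).resolve()),
        "--resize_factor", str(resize_factor),
    ]


def run_pipeline(text, face, checkpoint, outfile, synthesize, resize_factor=1):
    """Text -> speech -> lip-synced video.

    `synthesize(text, wav_path)` is a hypothetical callable standing in for the TTS step.
    """
    if shutil.which("ffmpeg") is None:
        raise RuntimeError("ffmpeg was not found on PATH")

    # Steps 1-2: convert the text to speech and save it to ./audio/output.wav.
    audio = Path("audio/output.wav")
    audio.parent.mkdir(parents=True, exist_ok=True)
    synthesize(text, audio)

    # Steps 3-4: hand the image and audio to Wav2Lip, which writes the video.
    Path(outfile).resolve().parent.mkdir(parents=True, exist_ok=True)
    cmd = build_wav2lip_command(face, audio, checkpoint, outfile, resize_factor)
    subprocess.run(cmd, check=True, cwd=WAV2LIP_DIR)
    return Path(outfile)
```

Keeping the command construction in its own function makes it easy to print and inspect the exact call before running a long inference job.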

🚀 Usage

Run the full pipeline:

🐞 Common Issues & Fixes

1. CUDA error: no kernel image is available for execution on the device

Your GPU (e.g., RTX 4090) may not be supported by the current PyTorch installation.
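To confirm this before reinstalling anything, you can compare the GPU's compute capability with the architectures the installed PyTorch build was compiled for. The sketch below is a rough approximation of CUDA's compatibility rules (a compiled `sm_XY` kernel runs on devices with the same major version and an equal or higher minor version; `compute_XY` PTX can be JIT-compiled for any newer device). The helper names are illustrative; `torch.cuda.get_device_capability` and `torch.cuda.get_arch_list` are existing PyTorch calls.

```python
def build_supports_device(capability, arch_list):
    """Return True if any architecture in arch_list can run on the given device.

    capability: (major, minor) tuple, e.g. (8, 9) for an RTX 4090.
    arch_list:  strings such as "sm_86" or "compute_70".
    """
    major, minor = capability
    for arch in arch_list:
        kind, _, digits = arch.partition("_")
        if not digits.isdigit():
            continue  # skip variants such as "sm_90a"
        a_major, a_minor = int(digits[:-1]), int(digits[-1])
        if kind == "sm" and a_major == major and a_minor <= minor:
            return True  # binary-compatible compiled kernel
        if kind == "compute" and (a_major, a_minor) <= (major, minor):
            return True  # PTX that can be JIT-compiled for this device
    return False


def check_current_device():
    """Print a short report for GPU 0 (requires PyTorch to be installed)."""
    import torch

    if not torch.cuda.is_available():
        print("CUDA is not available to this PyTorch build")
        return
    capability = torch.cuda.get_device_capability(0)
    arch_list = torch.cuda.get_arch_list()
    print("device capability:", capability)
    print("build architectures:", arch_list)
    print("supported:", build_supports_device(capability, arch_list))
```

Calling `check_current_device()` from a Python shell inside the project's virtual environment prints the report. If it shows "supported: False", the installed build is the likely cause of the error.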

Fix: Reinstall PyTorch with support for compute capability 8.9:

2. invalid load key, '<' or TorchScript Errors

Occurs when the wrong model file is used (e.g., a TorchScript .pt file instead of a PyTorch .pth checkpoint).

Fix: Use the .pth file from the correct source (e.g., Hugging Face link above).

3. Image too big to run face detection on GPU

Fix: Use the --resize_factor argument (e.g., 2 or 4).

4. Output video not found

Fix: Ensure ffmpeg is installed and accessible from the command line.

📁 Project Structure

📜 License

MIT License. See the LICENSE file for more information.

🙏 Acknowledgements


Posted Jun 28, 2025

Developed a pipeline to create lip-synced videos from text and images.
