The network for creativity

Join 1.25M professional creatives like you

Connect with clients, get discovered, and run your business 100% commission-free

Creatives on Contra have earned over $150M and we are just getting started

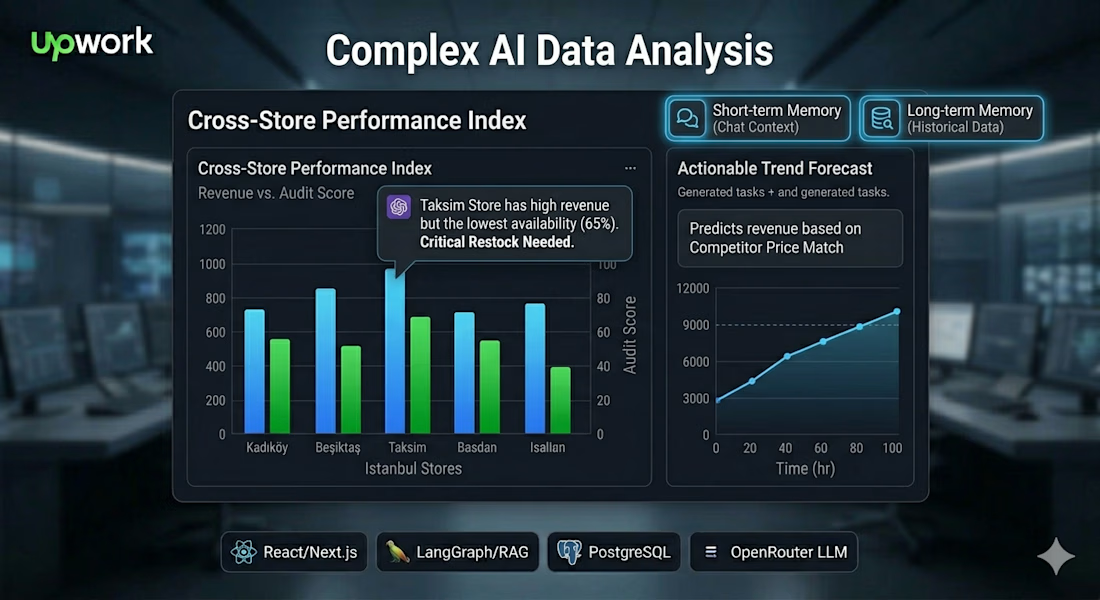

AI Operations Agent: RAG-Powered Retail Intelligence & Task Automation

This project was built for large-scale restaurant groups and multi-unit retail operators who manage high volumes of data across dozens or hundreds of locations. Specifically designed for Regional Managers and Operations Directors, the system serves as an enterprise-grade "Digital Consultant" that bridges the gap between fragmented POS/inventory data and daily on-the-ground execution. By transforming millions of rows of restaurant performance metrics into high-priority tasks, it provides a centralized platform for leadership to monitor KPIs, approve AI-suggested corrective actions, and ensure operational consistency across their entire portfolio.

1. What We Built

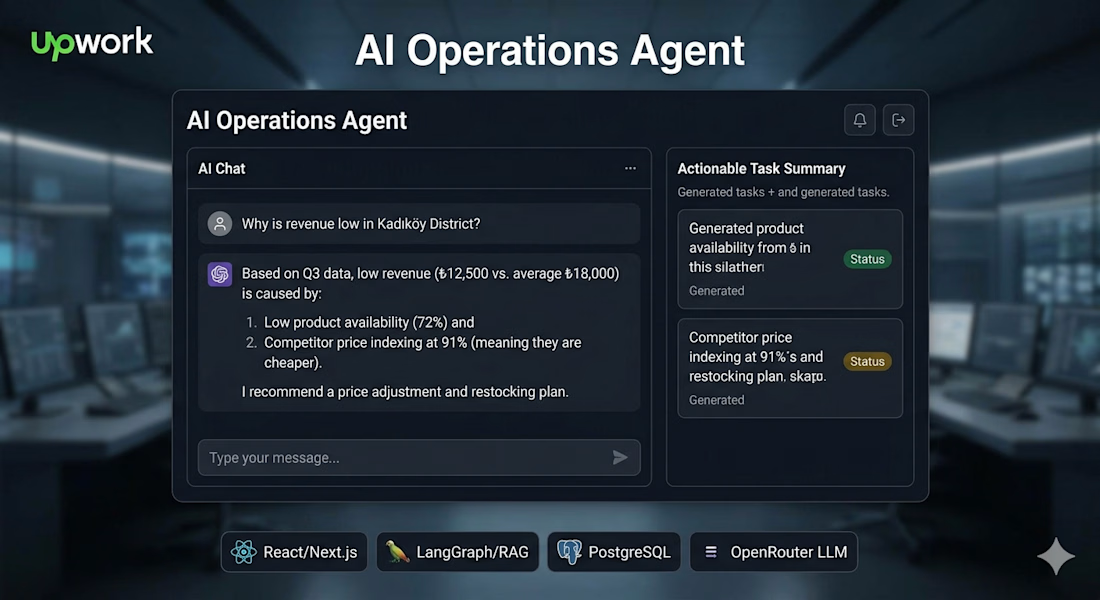

We developed a production-ready Autonomous AI Operations Agent that connects complex retail data analysis to daily execution. The system acts as a digital consultant for regional managers, turning raw KPIs into actionable tasks.

Analytical AI Chat: A free-form conversational interface where users can query performance data (e.g., "Show me the 5 least profitable stores in Istanbul over the last 3 months").

Task Management Dashboard: A structured workflow where AI-suggested actions are automatically logged for manager approval or rejection.

Automated Action Logic: The agent uses an "Action Suggestion Map" to identify specific defects (like low audit scores or high food waste) and suggest precise corrective measures.

Persistent Memory: Includes both short-term memory for the current chat session and long-term RAG memory to maintain context over time.
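The Action Suggestion Map and the approval workflow can be illustrated with a small sketch. This is not the project's code: the KPI field names (`auditScore`, `foodWastePct`), the thresholds, the action texts, and the store ID are hypothetical placeholders. Skipping a store/defect pair that already has a pending task is also an assumption, one plausible way to keep repeated suggestions out of the manager's queue.

```typescript
// Hypothetical KPI snapshot for one store; field names are placeholders.
interface StoreKpis {
  storeId: string;
  auditScore: number; // 0-100
  foodWastePct: number; // waste as % of food cost
}

type TaskStatus = "pending" | "approved" | "rejected";

interface SuggestedTask {
  storeId: string;
  defect: string;
  action: string;
  status: TaskStatus;
}

// Hypothetical "Action Suggestion Map": each entry detects one defect and
// names the corrective action to suggest. Thresholds are illustrative only.
const ACTION_SUGGESTION_MAP: {
  defect: string;
  detect: (k: StoreKpis) => boolean;
  action: string;
}[] = [
  {
    defect: "low_audit_score",
    detect: (k) => k.auditScore < 70,
    action: "Schedule a follow-up audit and retrain shift leads on the checklist",
  },
  {
    defect: "high_food_waste",
    detect: (k) => k.foodWastePct > 5,
    action: "Review prep forecasts and portioning with the kitchen manager",
  },
];

// Logs every detected defect as a pending task, skipping any store/defect
// pair that already has a pending task so suggestions are not duplicated.
function suggestTasks(kpis: StoreKpis[], queue: SuggestedTask[]): SuggestedTask[] {
  for (const k of kpis) {
    for (const rule of ACTION_SUGGESTION_MAP) {
      if (!rule.detect(k)) continue;
      const duplicate = queue.some(
        (t) => t.storeId === k.storeId && t.defect === rule.defect && t.status === "pending",
      );
      if (!duplicate) {
        queue.push({ storeId: k.storeId, defect: rule.defect, action: rule.action, status: "pending" });
      }
    }
  }
  return queue;
}

// A manager's decision moves a pending task to approved or rejected.
function decide(task: SuggestedTask, approve: boolean): SuggestedTask {
  if (task.status !== "pending") throw new Error("Task has already been decided");
  return { ...task, status: approve ? "approved" : "rejected" };
}

// Usage with a hypothetical store that trips both rules.
const taskQueue = suggestTasks([{ storeId: "IST-01", auditScore: 62, foodWastePct: 7.5 }], []);
// Re-running the same KPIs adds nothing: both tasks are still pending.
suggestTasks([{ storeId: "IST-01", auditScore: 62, foodWastePct: 7.5 }], taskQueue);
console.log(taskQueue.length); // 2
```

Keeping the map as data rather than branching logic means new defect types can be added without touching the logging or approval code.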

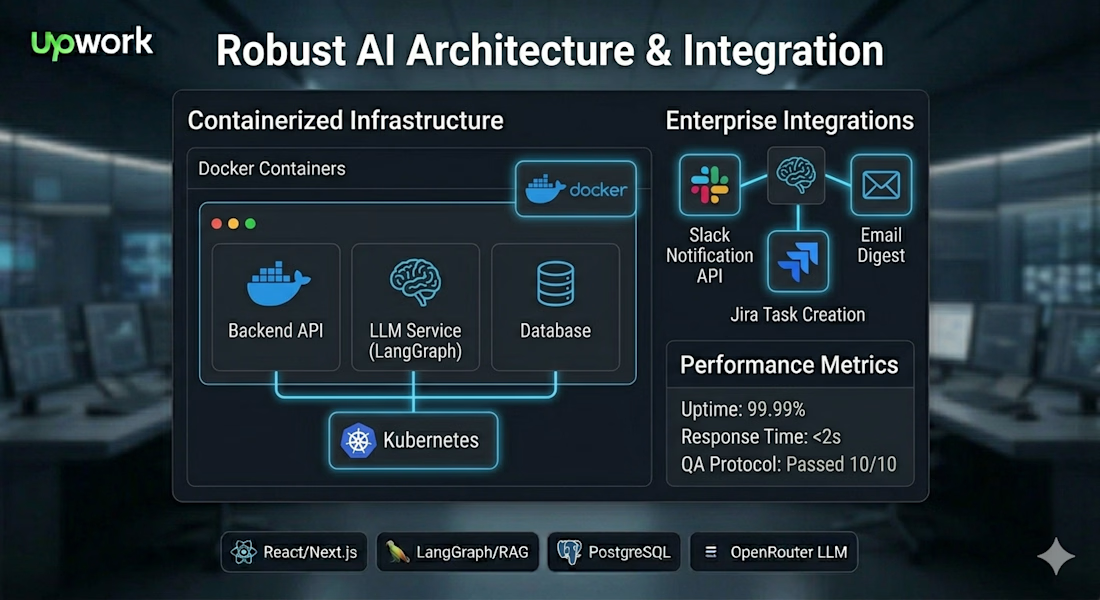
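The two memory layers from the feature list can be pictured with an in-process sketch. It assumes that short-term memory is a fixed-size window of recent chat turns and that long-term RAG memory ranks stored embedding vectors by cosine similarity. The class names, window size, note texts, and three-dimensional toy vectors are all hypothetical; a real deployment would use an embedding model and a vector index rather than an in-memory array.

```typescript
// Short-term memory (hypothetical): keeps only the most recent turns of the current chat.
class SessionMemory {
  private turns: string[] = [];
  private readonly maxTurns: number;

  constructor(maxTurns: number) {
    this.maxTurns = maxTurns;
  }

  add(turn: string): void {
    this.turns.push(turn);
    // Drop the oldest turns once the window is full.
    while (this.turns.length > this.maxTurns) this.turns.shift();
  }

  recent(): string[] {
    return [...this.turns];
  }
}

interface MemoryEntry {
  text: string;
  embedding: number[];
}

// Cosine similarity between two vectors of equal length.
function cosine(a: number[], b: number[]): number {
  let dot = 0, na = 0, nb = 0;
  for (let i = 0; i < a.length; i++) {
    dot += a[i] * b[i];
    na += a[i] * a[i];
    nb += b[i] * b[i];
  }
  return na === 0 || nb === 0 ? 0 : dot / (Math.sqrt(na) * Math.sqrt(nb));
}

// Long-term memory (hypothetical): returns the k stored entries most similar to the query.
class LongTermMemory {
  private entries: MemoryEntry[] = [];

  store(entry: MemoryEntry): void {
    this.entries.push(entry);
  }

  retrieve(queryEmbedding: number[], k: number): string[] {
    return this.entries
      .map((e) => ({ text: e.text, score: cosine(queryEmbedding, e.embedding) }))
      .sort((x, y) => y.score - x.score)
      .slice(0, k)
      .map((e) => e.text);
  }
}

// Usage with toy data.
const session = new SessionMemory(2);
["turn 1", "turn 2", "turn 3"].forEach((t) => session.add(t));
console.log(session.recent().join(", ")); // turn 2, turn 3

const longTerm = new LongTermMemory();
longTerm.store({ text: "food waste note", embedding: [1, 0, 0] });
longTerm.store({ text: "audit score note", embedding: [0, 1, 0] });
console.log(longTerm.retrieve([0.9, 0.1, 0], 1)[0]); // food waste note
```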

2. How We Built It (The Stack)

The system was engineered for scalability and reliability using a modern, containerized stack:

AI Orchestration: LangGraph manages complex, multi-turn reasoning and agentic workflows.

Frontend: React/Next.js 14 for a responsive, real-time user interface.

Backend & Data: Node.js paired with a PostgreSQL database capable of handling 1M+ records.

LLM Access: Integrated via OpenRouter to allow for flexible model selection and switching.

Infrastructure: Fully Dockerized to ensure consistent deployment across environments.
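Because OpenRouter exposes an OpenAI-compatible chat-completions endpoint, switching models comes down to changing the `model` string in the request body. The sketch below only builds the request, so it runs without network access or an API key. The helper name `buildOpenRouterRequest`, the placeholder model ID, and the placeholder key are hypothetical; in practice the result would be passed to `fetch`.

```typescript
interface ChatMessage {
  role: "system" | "user" | "assistant";
  content: string;
}

interface PreparedRequest {
  url: string;
  init: { method: "POST"; headers: Record<string, string>; body: string };
}

const OPENROUTER_CHAT_URL = "https://openrouter.ai/api/v1/chat/completions";

// Hypothetical helper: builds an OpenRouter chat-completions request for any
// model ID. Only the `model` field changes when switching models.
function buildOpenRouterRequest(
  model: string,
  messages: ChatMessage[],
  apiKey: string,
): PreparedRequest {
  return {
    url: OPENROUTER_CHAT_URL,
    init: {
      method: "POST",
      headers: {
        Authorization: `Bearer ${apiKey}`,
        "Content-Type": "application/json",
      },
      body: JSON.stringify({ model, messages }),
    },
  };
}

// Usage: "example/light-model" and the key are placeholders, not real values.
const prepared = buildOpenRouterRequest(
  "example/light-model",
  [{ role: "user", content: "Which Istanbul stores had the highest food waste last month?" }],
  "YOUR_OPENROUTER_KEY",
);
console.log(JSON.parse(prepared.init.body).model); // example/light-model
// In a real call: const res = await fetch(prepared.url, prepared.init);
```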

3. Challenges We Faced

As the system scaled from prototype to processing millions of records, we encountered several critical engineering hurdles:

Response Latency: The initial monolithic prompt architecture led to response times exceeding 60 seconds, far slower than the required "ChatGPT-like" speed.

Prompt Verbosity & Errors: Complex questions involving multiple variables caused the LLM to lose focus, leading to "reasoning errors" and incorrect SQL generation.

Hallucination Risks: In multi-branch queries, the model occasionally fabricated data points, particularly around manager hours and performance metrics.

Context Switching Bugs: The agent sometimes struggled to "let go" of a previous topic, continuing to reference an old store when the user had asked about a new city.

4. How We Solved It

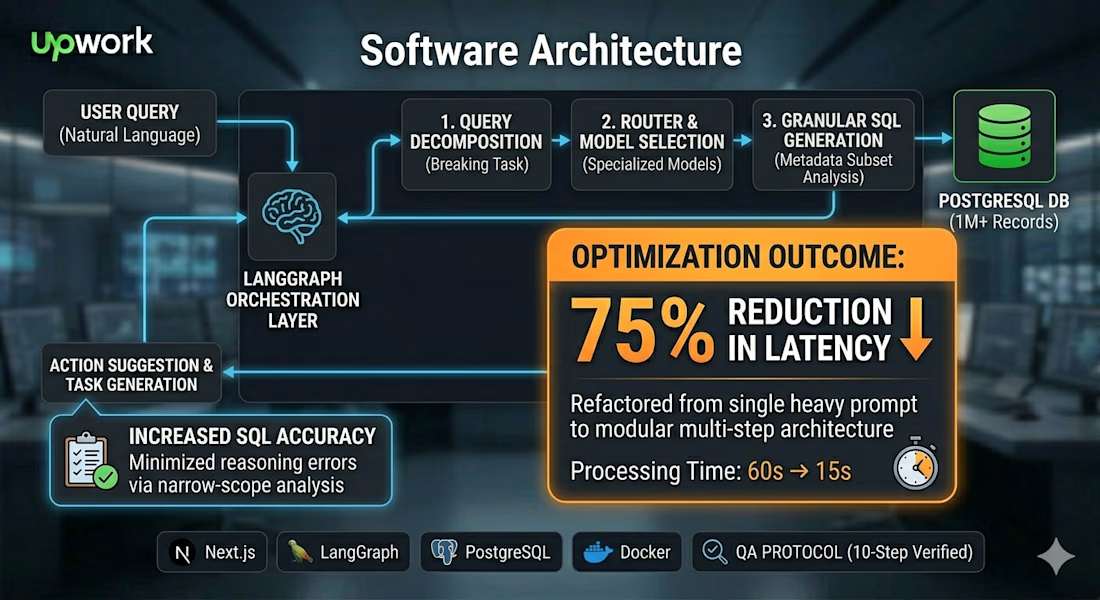

We re-engineered the core pipeline to transition from a single, heavy agent into a Modular Multi-Step Architecture:

75% Latency Reduction: By decomposing the main logic into smaller, task-specific nodes, we cut processing time from 60s to 15s.

Task Decomposition & Specialized Models: We stopped using a "one-size-fits-all" model. Instead, we implemented a router that uses lighter, specialized models for SQL generation and action identification, and flagship models only for final reasoning.

Granular SQL Generation: Breaking the metadata analysis into narrow sub-steps eliminated SQL hallucinations. The model now only "sees" the specific schema needed for the current sub-task, ensuring 100% accuracy.

10-Point Testing Protocol: We implemented a rigorous QA protocol that specifically verified bug fixes for context switching, task duplication, and chart coverage before final delivery.
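Two of the ideas above, routing each step to a model tier and giving the SQL step only the schema slice it needs, can be sketched together. This is a hypothetical illustration, not the project's pipeline: the step names, the placeholder model IDs, and the table and column names are invented, and simple keyword matching stands in for whatever schema-selection logic the project actually uses.

```typescript
type StepName = "identify_intent" | "generate_sql" | "suggest_actions" | "final_answer";
type ModelTier = "light" | "flagship";

// Hypothetical routing table: lighter models for narrow steps,
// a flagship model only for the final reasoning step.
const STEP_TIER: Record<StepName, ModelTier> = {
  identify_intent: "light",
  generate_sql: "light",
  suggest_actions: "light",
  final_answer: "flagship",
};

// Placeholder model IDs; substitute real ones.
const TIER_MODEL: Record<ModelTier, string> = {
  light: "example/light-model",
  flagship: "example/flagship-model",
};

function modelForStep(step: StepName): string {
  return TIER_MODEL[STEP_TIER[step]];
}

// Hypothetical schema: table name -> columns.
const SCHEMA: Record<string, string[]> = {
  stores: ["store_id", "city", "region"],
  sales_daily: ["store_id", "date", "revenue", "cost"],
  audits: ["store_id", "audit_date", "score"],
  food_waste: ["store_id", "date", "waste_cost"],
};

// Hypothetical keyword hints used to pick the tables a question needs.
const TABLE_HINTS: Record<string, string[]> = {
  stores: ["store", "city", "region"],
  sales_daily: ["profit", "revenue", "sales"],
  audits: ["audit"],
  food_waste: ["waste"],
};

// Returns only the schema lines relevant to the question, so the SQL step's
// prompt contains a narrow slice instead of the full schema.
function schemaSlice(question: string): string {
  const q = question.toLowerCase();
  return Object.keys(SCHEMA)
    .filter((table) => TABLE_HINTS[table].some((hint) => q.includes(hint)))
    .map((table) => `${table}(${SCHEMA[table].join(", ")})`)
    .join("\n");
}

// Usage
const question = "Show me the 5 least profitable stores in Istanbul over the last 3 months";
console.log(modelForStep("generate_sql")); // example/light-model
console.log(schemaSlice(question));
// stores(store_id, city, region)
// sales_daily(store_id, date, revenue, cost)
```

Keeping routing in a table makes the tier for each step easy to change when comparing models, and the slicing function keeps unrelated tables (here `audits` and `food_waste`) out of the SQL prompt entirely.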

Related posts

Tiny launch day ✨

Prism is live on Product Hunt

It's a free browser-based tool that adds prismatic refractions and chromatic aberration to photos.

Upload an image → play with the settings → export your result.

I made it while building aftrglo — a bigger project to make sharing creative work online feel lighter. Prism is one small creative wander from that process. And today it gets its own little launch 🐣

Built for creatives who want to add that strange little something to a visual without opening heavy software.

It came from the same place as all my experiments:

I made something for myself,

kept playing with it,

then realized it might be useful for someone else too.

So I cleaned it up and decided to share it before the bigger project is ready.

Come play with it and let me know what you think 🪩

Curious to see what you make with it too, as the results can go from extra cool to wonderfully weird

Amazing design!

This looks special!

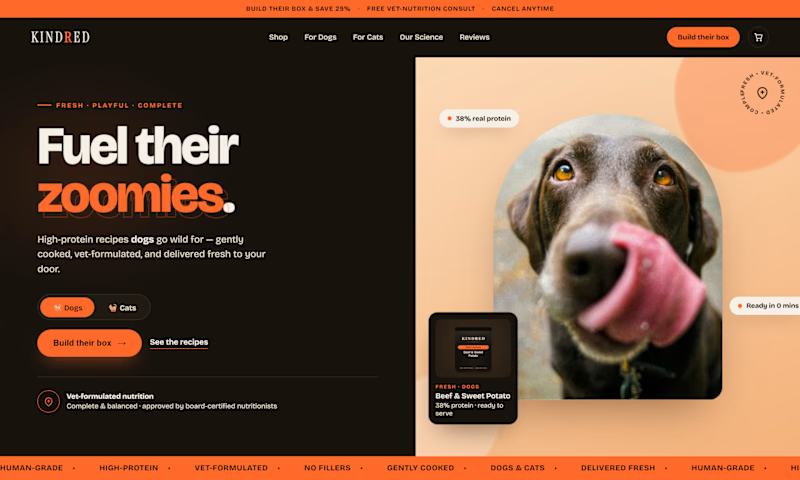

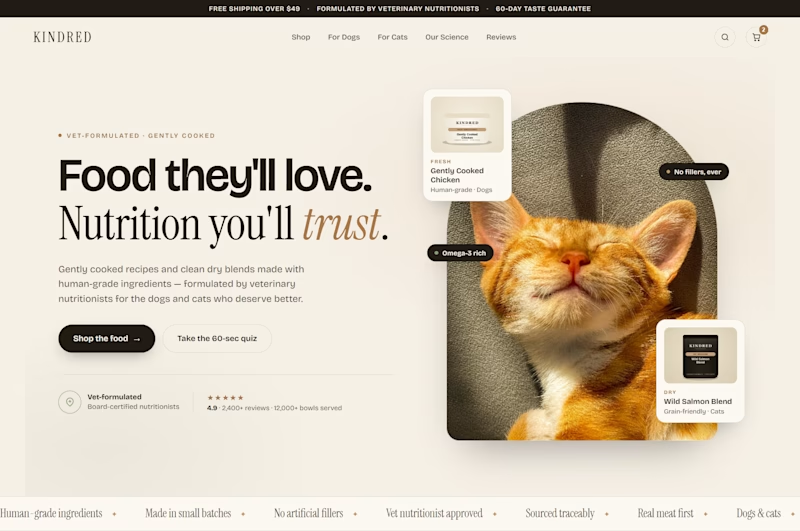

Same brand. Same product. Which one sells more?

Two heroes. Two completely different directions.

V1 — dark, bold, high energy. Orange hits hard. The kind of hero that stops a scroll.

V2 — clean, editorial, refined. Cream and serif. Feels like a brand you'd trust with your pet's health.

I'll let you vote. Drop your answer below.

7 voted

24%

22 voted

76%

29 votes

Closed

Trending

Claude

Claude has entered the design space. How are you using Claude Design?

Contra University

Learn from expert creatives how to earn more using next-gen AI tools.

creativeaiflow

Creative AI workflows are evolving. What tools do you use, and what are their strengths and weaknesses?

portfolioreview

The best portfolios tell a story, not just show a grid. Share yours for feedback.

freelancerlife

Freelancer life is wins, pivots, and everything in between. What’s yours right now?