The network for creativity

Join 1.25M professional creatives like you

Connect with clients, get discovered, and run your business 100% commission-free

Creatives on Contra have earned over $150M and we are just getting started

I've used LLMs for 3 years but never truly understood them until a recent flight without internet.

I watched Andrej Karpathy's breakdown. As an OpenAI co-founder, he makes it click.

The big realization: LLMs are text predictors. They guess the most likely next piece of text, one step at a time.
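To make "text predictor" concrete, here is a toy sketch in Python. It is not how a real LLM works internally (those use neural networks over tokens, not word counts); it only illustrates the idea of predicting the next word from what came before. The corpus and the function names `train_bigram`, `predict_next`, and `generate` are made up for illustration.

```python
from collections import Counter, defaultdict


def train_bigram(text):
    """Count, for every word, which words follow it in the training text."""
    words = text.lower().split()
    follows = defaultdict(Counter)
    for current, nxt in zip(words, words[1:]):
        follows[current][nxt] += 1
    return follows


def predict_next(follows, word):
    """Return the most frequent follower of `word`, or None if it was never seen."""
    candidates = follows.get(word.lower())
    if not candidates:
        return None
    return candidates.most_common(1)[0][0]


def generate(follows, start, max_words=5):
    """Repeatedly append the predicted next word, starting from `start`."""
    out = [start]
    for _ in range(max_words):
        nxt = predict_next(follows, out[-1])
        if nxt is None:
            break
        out.append(nxt)
    return " ".join(out)


# Tiny made-up corpus: "the" is followed by "cat" twice, "mat" once, "fish" once.
corpus = "the cat sat on the mat . the cat ate the fish ."
model = train_bigram(corpus)
print(predict_next(model, "the"))  # most frequent follower of "the"
```

The model has no notion of truth, only of what usually comes next. That is the part that clicked for me.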

Long contexts (a huge chat history) can make accurate prediction harder. This is one reason hallucinations happen.

I used to cram everything into one giant thread. I didn't realize I was breaking the model.

My new approach:

Separate chats for different tasks.

Short, concrete instructions.
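A rough sketch of that approach in Python, assuming you manage chat histories yourself. Everything here is hypothetical: `ChatThread` and `Workspace` are illustrative names, no real chat API is called, and the "about 4 characters per token" figure is only a common rule of thumb for English text, not an exact count.

```python
from dataclasses import dataclass, field


@dataclass
class ChatThread:
    """One conversation history, dedicated to a single task."""
    task: str
    messages: list = field(default_factory=list)

    def add(self, role, content):
        self.messages.append({"role": role, "content": content})

    def approx_tokens(self):
        # Assumption: roughly 4 characters per token (a heuristic, not a tokenizer).
        return sum(len(m["content"]) for m in self.messages) // 4


class Workspace:
    """Keeps one thread per task instead of one giant shared thread."""

    def __init__(self, max_tokens=2000):
        self.max_tokens = max_tokens  # hypothetical budget before starting over
        self.threads = {}

    def thread_for(self, task):
        # Create the thread on first use; reuse it afterwards.
        return self.threads.setdefault(task, ChatThread(task))

    def needs_fresh_start(self, task):
        # True once the thread's estimated size exceeds the budget.
        return self.thread_for(task).approx_tokens() > self.max_tokens


ws = Workspace(max_tokens=10)
ws.thread_for("logo").add("user", "x" * 100)         # long history for one task
ws.thread_for("invoice").add("user", "Draft it.")    # short, separate thread
print(ws.needs_fresh_start("logo"), ws.needs_fresh_start("invoice"))
```

Each task only ever sees its own history, and a thread that grows past the budget is a cue to summarize and start a new one.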

Since most models are trained on similar data, much of the real difference comes down to your prompting.

Short context = Better outputs.

If you want to learn more, here is the video:

https://www.youtube.com/watch?v=zjkBMFhNj_g

Exactly. Keeping context short has been a game changer.

Related posts

10 Key Data Preparation Steps for Building Accurate AI Models

Improve AI Model Accuracy with Structured Data Preparation

Looking to build high-performing AI and machine learning models? Learn the essential data preparation steps, including data collection, cleansing, validation, feature engineering, dataset splitting, and annotation, designed to improve model accuracy, reduce bias, and scale AI workflows efficiently.

Explore the complete guide to AI data preparation: https://www.hitechbpo.com/blog/data-preparation-steps.php

ai models are booming right now It's the most efficient way to launch your product or showcase a new launch

the client was open with with me being creative(total freedom😊) with the image of the materials she send and her last request was just to add in the flyer.

send a her a slow paced version but i find it cool to post the fast paced one here.

My question for fellow creators: do you prefer working from a strict script or having total creative freedom?

want me to work with your product. definitely leave a comment

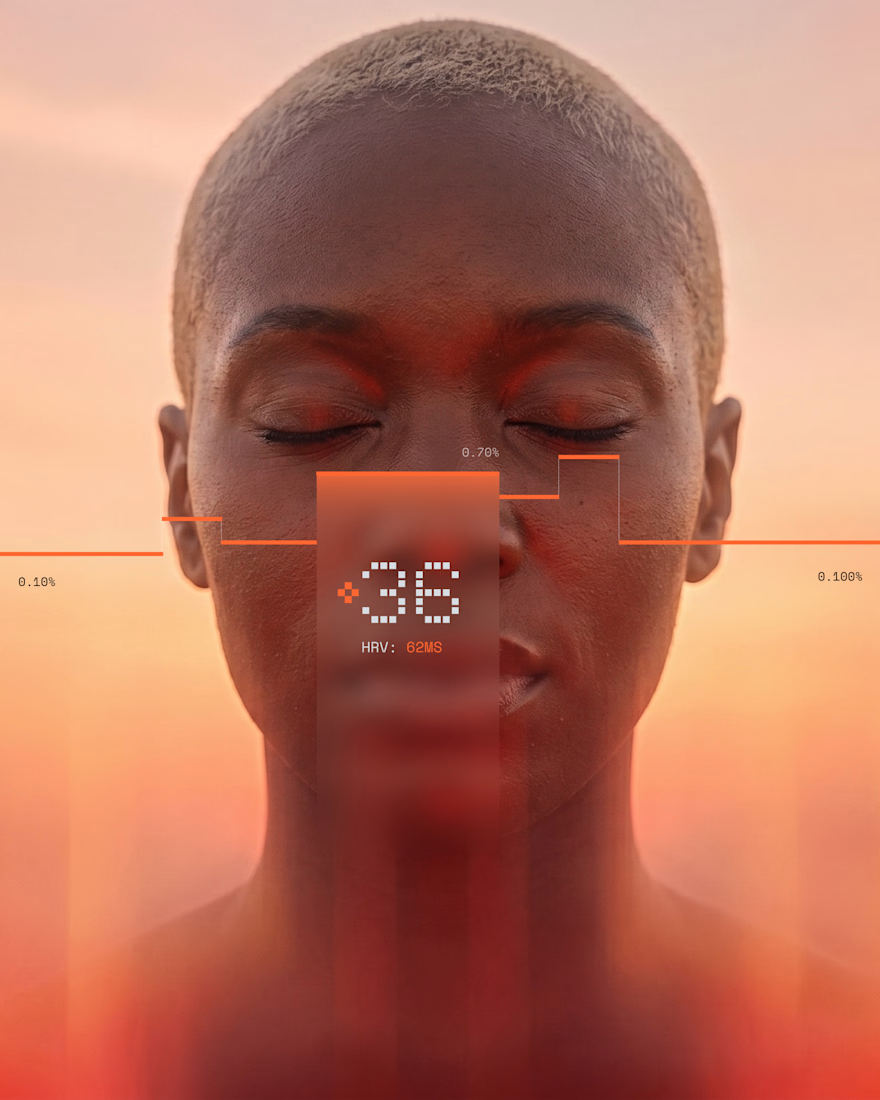

Your body generates 1,847 data points every night. You wake up and check your phone for notifications.

Built a concept around flipping that. What if the first thing you read in the morning was actually about you.

Not a score. Not a streak. Just a cold, precise read three lines, what's happening inside, what to do with it.

wow, this design is beautiful, especially alongside your phrasing. inspiring work, genuinely!
