Molka B

AI & QA Automation Engineer | AI Bias Audit & Web/App Testing

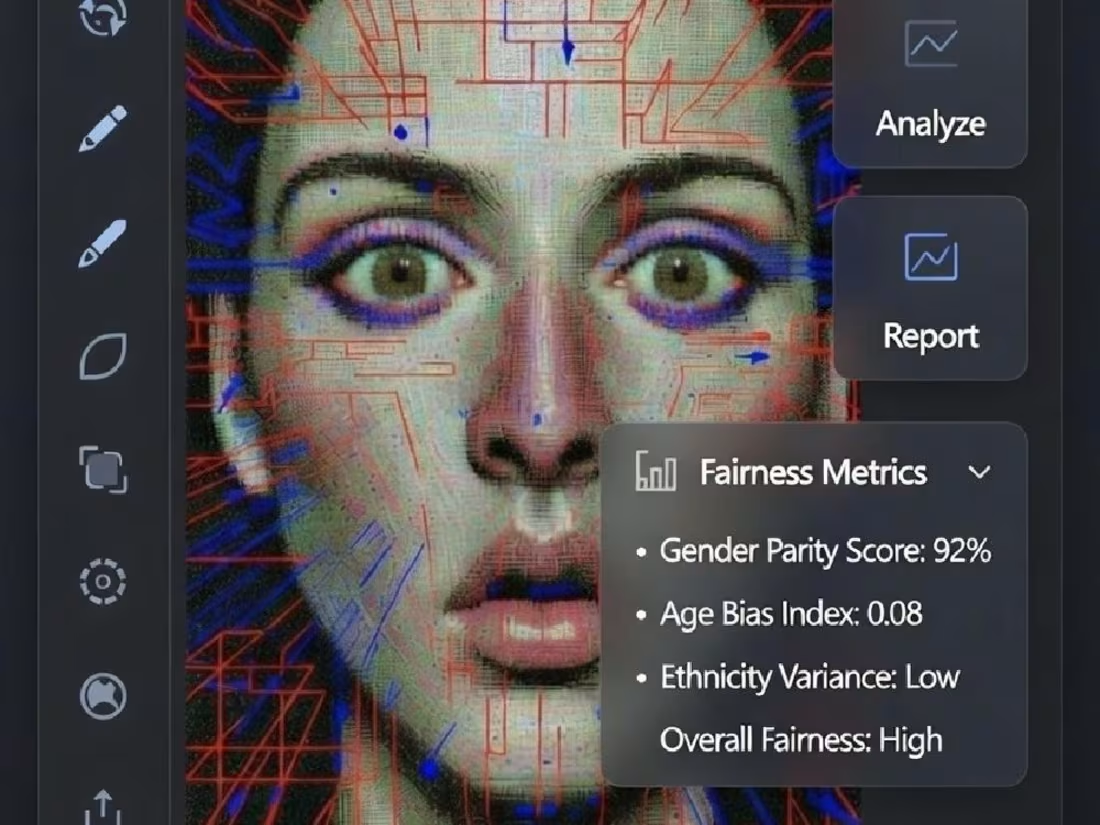

AI Bias Detection & Mitigation Tool for Hiring Models

My role: AI/ML Engineer & Fairness Auditor

Project description:

AI Bias Detection and Mitigation Framework for Recruitment Systems.

Built a complete, production-ready tool that:

Detects gender, race, and age bias in hiring ML models

Uses industry-standard fairness metrics (Disparate Impact, Demographic Parity, Equal Opportunity)

Applies Reweighing mitigation (AIF360), improving fairness while keeping accuracy loss under 2%

Includes an interactive Gradio demo for live testing

Provides a full business impact section and compliance notes (EEOC four-fifths rule, GDPR, EU AI Act)
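
As a rough illustration of the metrics and the reweighing step listed above, here is a minimal pure-Python sketch. It is not the project's code and does not call AIF360; the function names, the toy data, and the choice of "F" as the unprivileged group are assumptions made for illustration only. The four-fifths check flags a disparate impact ratio below 0.8.

```python
from collections import Counter


def selection_rate(preds, groups, group):
    """Share of `group` members that received a positive prediction."""
    picked = [p for p, g in zip(preds, groups) if g == group]
    return sum(picked) / len(picked)


def true_positive_rate(labels, preds, groups, group):
    """Share of truly positive `group` members that were predicted positive."""
    hits = [p for y, p, g in zip(labels, preds, groups) if g == group and y == 1]
    return sum(hits) / len(hits)


def fairness_report(labels, preds, groups, unprivileged, privileged):
    """Compute the three metrics plus the four-fifths (80%) rule check."""
    sr_u = selection_rate(preds, groups, unprivileged)
    sr_p = selection_rate(preds, groups, privileged)
    tpr_u = true_positive_rate(labels, preds, groups, unprivileged)
    tpr_p = true_positive_rate(labels, preds, groups, privileged)
    disparate_impact = sr_u / sr_p
    return {
        "disparate_impact": disparate_impact,
        "demographic_parity_diff": sr_u - sr_p,
        "equal_opportunity_diff": tpr_u - tpr_p,
        "passes_four_fifths": disparate_impact >= 0.8,
    }


def reweighing_weights(labels, groups):
    """Weight per (group, label) cell: P(group) * P(label) / P(group, label)."""
    n = len(labels)
    group_count = Counter(groups)
    label_count = Counter(labels)
    cell_count = Counter(zip(groups, labels))
    return {
        (g, y): (group_count[g] * label_count[y]) / (n * cell_count[(g, y)])
        for (g, y) in cell_count
    }


# Hypothetical toy data: 1 = qualified (labels) or hired (preds), 0 = not.
groups = ["F"] * 5 + ["M"] * 5
labels = [1, 1, 0, 0, 0, 1, 1, 1, 0, 0]
preds = [1, 0, 0, 0, 0, 1, 1, 0, 1, 0]

report = fairness_report(labels, preds, groups, unprivileged="F", privileged="M")
weights = reweighing_weights(labels, groups)
print(report)
print(weights)
```

Cells that are under-represented relative to statistical independence, such as qualified candidates in the unprivileged group in this toy data, receive weights above 1. Such weights can be supplied as sample weights when retraining, for example through the `sample_weight` argument that many scikit-learn estimators accept in `fit`.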

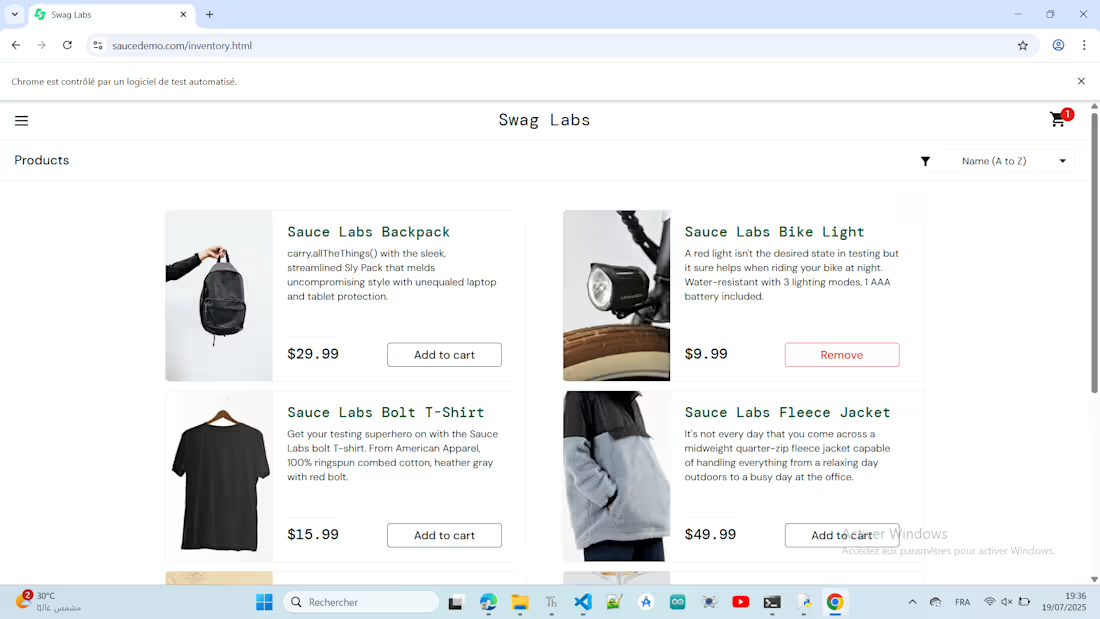

E-commerce Automation Framework

My role: Test Automation Engineer (UI & API)

Project description:

Designed and developed a comprehensive automated testing framework for an e-commerce website. The framework covers UI automation using Selenium WebDriver and Pytest, alongside robust API testing to ensure backend reliability. It enables fast, repeatable test execution with detailed reporting to quickly identify issues.

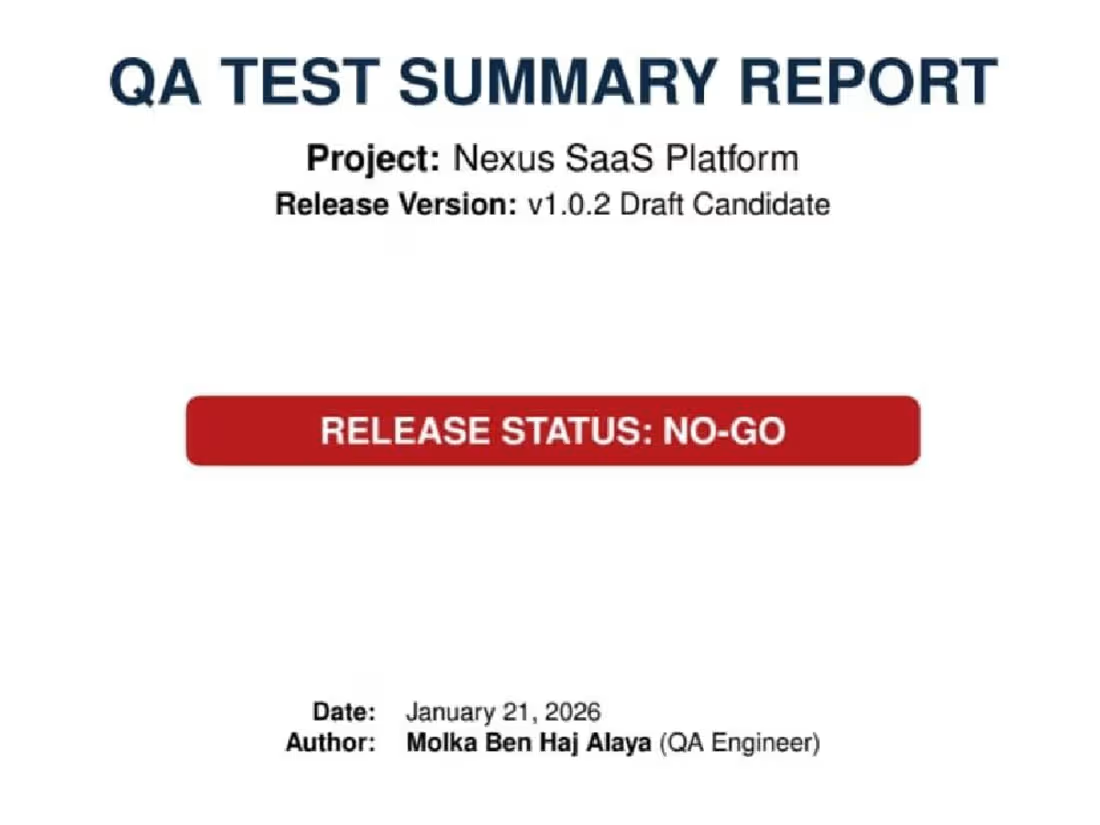

QA Test Summary Report – SaaS Platform Release

My role: QA Engineer / Test Lead

Project description:

Executed risk-based QA testing for a SaaS platform (v1.0.1), focusing on Authentication, RBAC, and User Dashboard. Identified critical security vulnerabilities, session integrity failures, and major functional defects. Delivered a professional test summary report with defect metrics, exit criteria validation, and actionable recommendations. Ensured the client had a clear release decision and mitigation plan, improving software reliability and compliance.

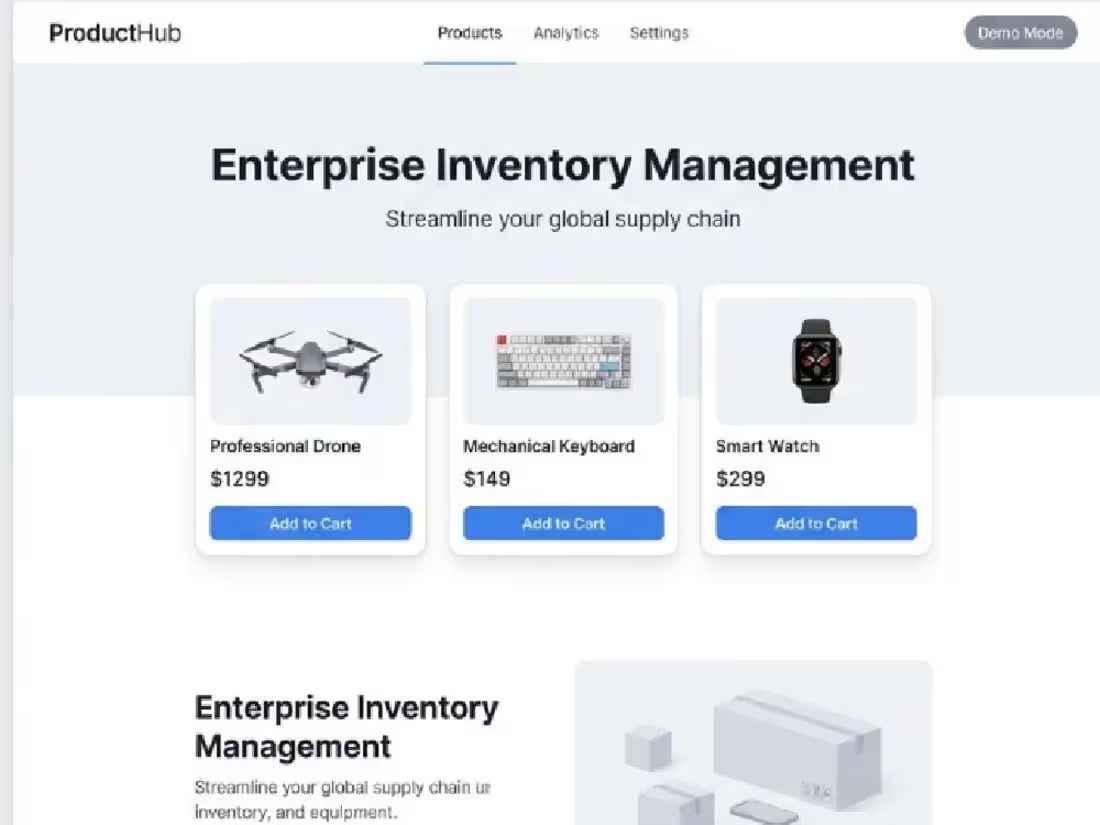

AI-Powered QA Automation Suite

My role: Senior QA Automation Engineer (Lead)

Project description:

AI-Powered QA Test Case Generator & Execution Dashboard is a comprehensive, enterprise-level quality assurance solution designed to bridge the gap between AI-driven test generation and robust execution reporting. The suite provides a full-cycle automation workflow: from creating test cases using GPT-4 from natural language user stories to executing them across API and UI layers, finishing with high-impact business metric analysis.

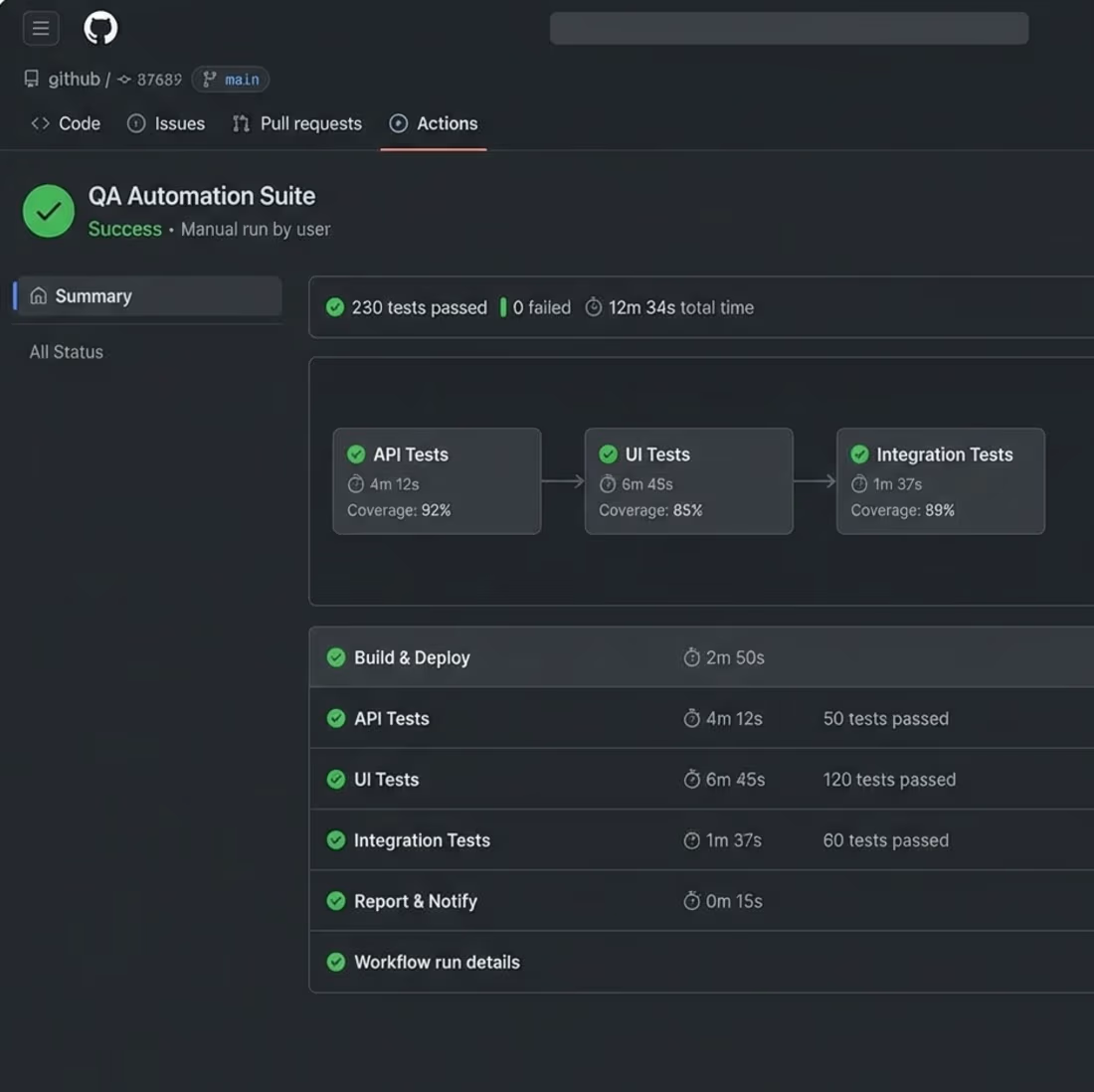

Enterprise SaaS QA Automation: AI-Powered Testing & CI/CD

My role: Senior QA Automation Engineer

Project description:

Architected and implemented a comprehensive test automation framework for an enterprise SaaS platform, achieving 92% API and 85% UI test coverage. Developed 230+ automated tests using Pytest and Selenium with the Page Object Model design pattern. Integrated AI-powered test prioritization and flaky test detection, achieving 95% accuracy. Implemented a GitHub Actions CI/CD pipeline, reducing regression testing from 2 days to 6 hours (75% reduction). Enabled daily releases while improving production defect detection by 82%. Technologies: Python, Pytest, Selenium WebDriver, GitHub Actions, Docker, Scikit-learn.

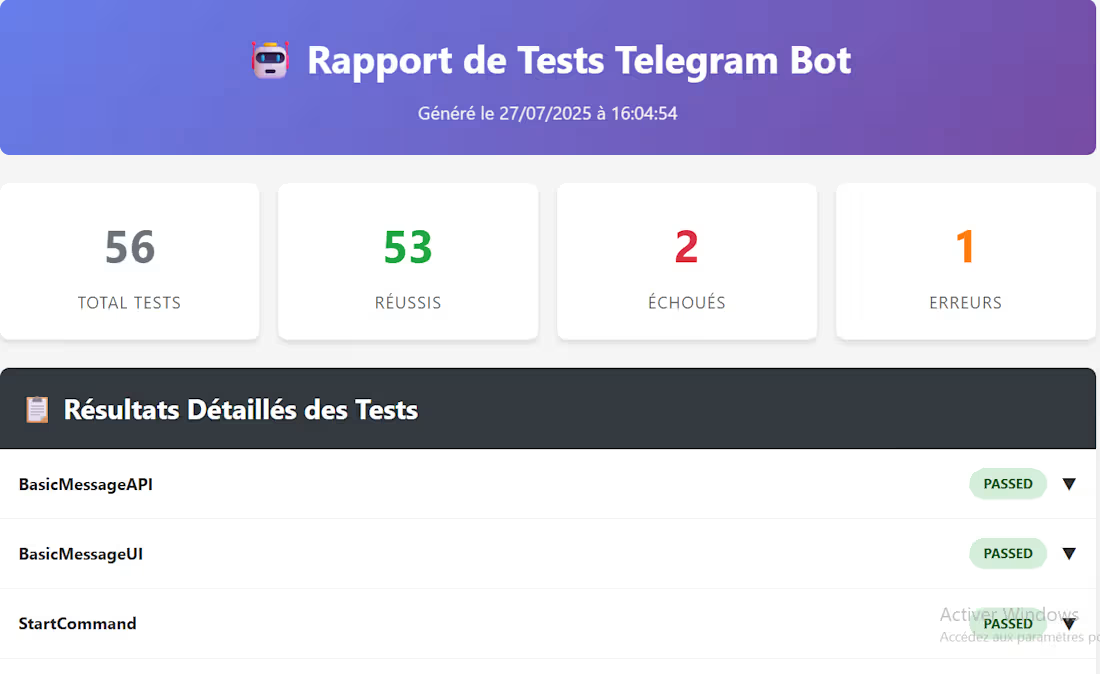

Professional Telegram Bot Testing Framework with AI-Powered Reports

My role: Senior QA Automation Engineer & Test Framework Architect

Project description:

Developed a comprehensive automated testing framework for Telegram bots featuring multi-layered testing strategies (API, UI, integration), real-time screenshot capture, and AI-enhanced HTML reporting. The framework includes security validation, performance benchmarking, concurrent user simulation, and dashboards with interactive metrics. Built with Python/pytest, it supports CI/CD integration, generates visual reports, and covers edge cases such as multilingual input, injection attacks, and stress conditions. It is suited to enterprise bot validation and quality assurance.

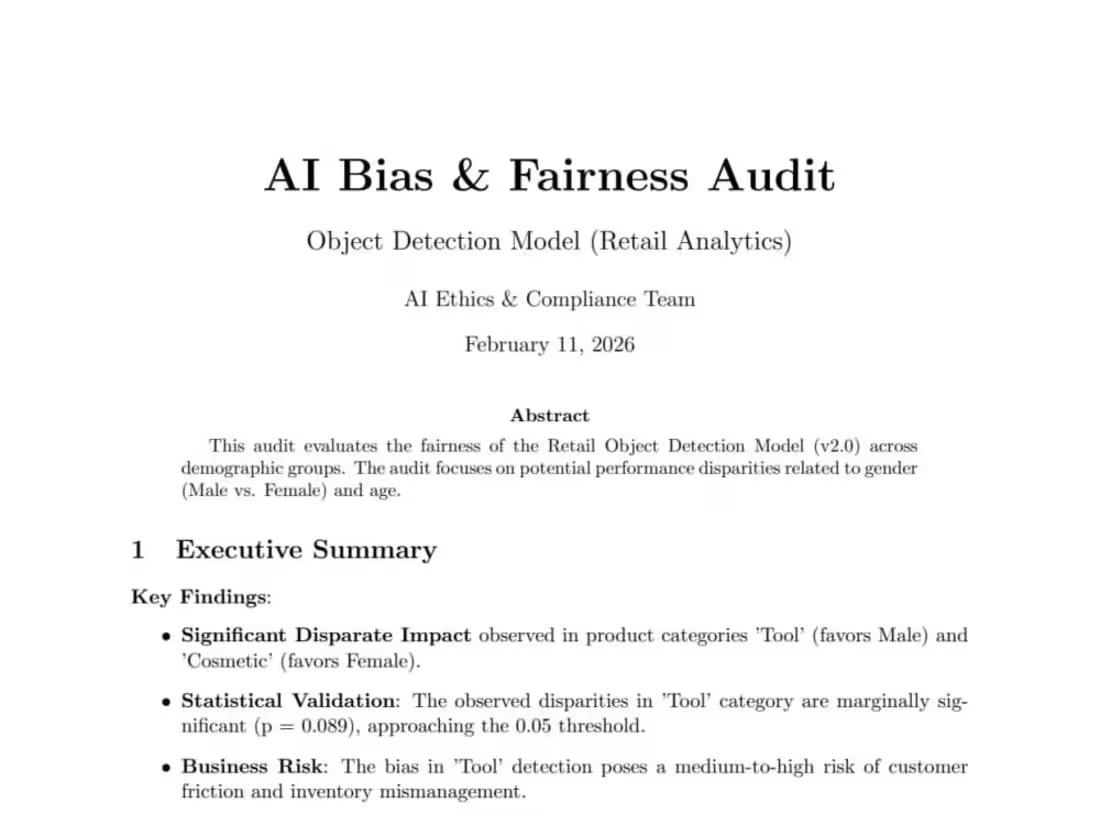

AI Bias & Fairness Audit – Retail Object Detection Model

My role: AI Bias & Fairness Auditor | ML Risk & Compliance Analyst

Project description:

Conducted a full AI Bias & Fairness audit on a retail object detection model using demographic evaluation (gender-based analysis). Measured Disparate Impact, Statistical Parity, Equal Opportunity Difference, and performed statistical significance testing (Chi-Square). Identified category-specific bias and business risk impact. Implemented mitigation via threshold calibration and reweighting. Delivered a professional compliance-ready report with risk matrix and mitigation roadmap.
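
To illustrate the kind of significance test mentioned above, here is a minimal sketch of a Pearson chi-square test on a 2x2 table, written in plain Python. The table values, the group names, and the function name `chi_square_2x2` are hypothetical and not taken from the audit; the p-value shortcut applies only to one degree of freedom, which is the case for a 2x2 table.

```python
import math


def chi_square_2x2(table):
    """Pearson chi-square (no continuity correction) for a 2x2 table.

    `table` is [[a, b], [c, d]], e.g. rows = groups, columns = detected / missed.
    Returns (statistic, p_value) for 1 degree of freedom.
    """
    (a, b), (c, d) = table
    n = a + b + c + d
    row_totals = [a + b, c + d]
    col_totals = [a + c, b + d]
    stat = 0.0
    for i, observed_row in enumerate(table):
        for j, observed in enumerate(observed_row):
            expected = row_totals[i] * col_totals[j] / n
            stat += (observed - expected) ** 2 / expected
    # With 1 degree of freedom, P(X > stat) = erfc(sqrt(stat / 2)).
    p_value = math.erfc(math.sqrt(stat / 2))
    return stat, p_value


# Hypothetical counts: [detected, missed] for two demographic groups.
observed = [[90, 10],   # group A
            [70, 30]]   # group B
stat, p_value = chi_square_2x2(observed)
print(f"chi2 = {stat:.2f}, p = {p_value:.5f}")
```

A small p-value suggests the difference in detection rates between the two groups is unlikely to be due to chance alone. In practice a library routine such as `scipy.stats.chi2_contingency` would normally be used instead of a hand-rolled version.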

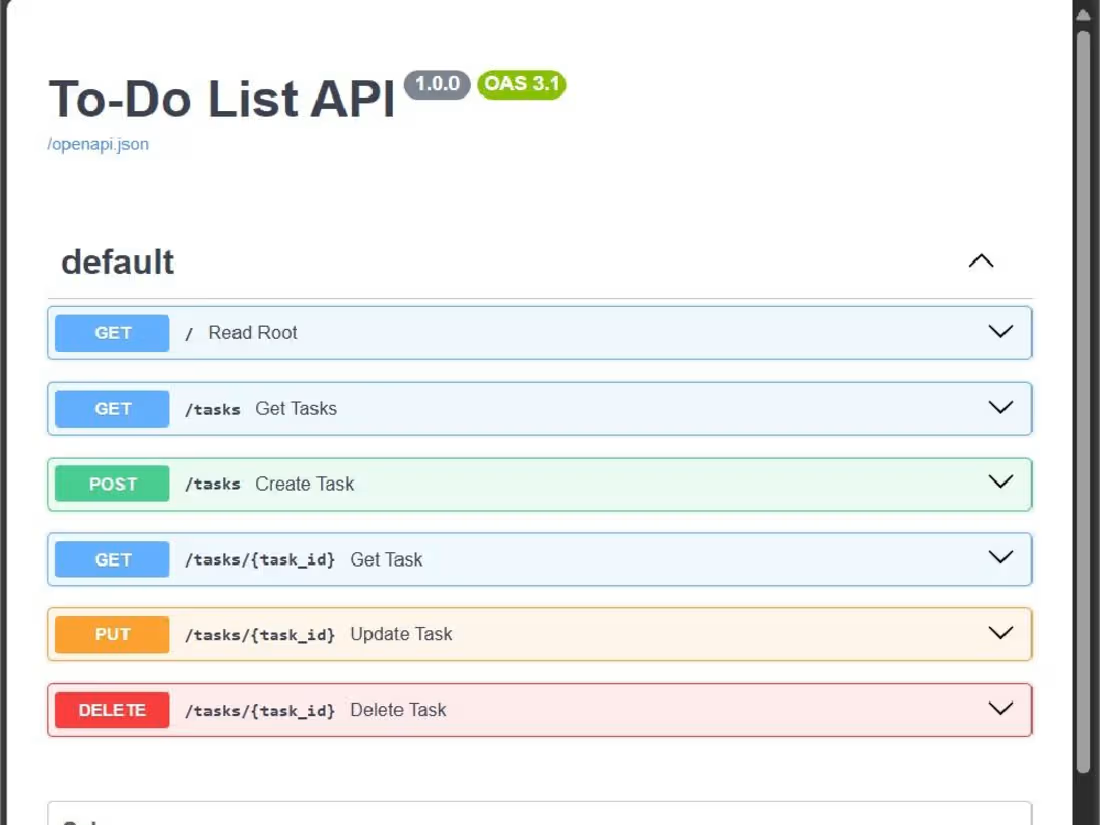

Modern To-Do List API with FastAPI & PostgreSQL

My role: Backend Developer / API Engineer

Project description:

Developed a robust RESTful API for managing tasks, featuring full CRUD operations, data validation with Pydantic, and PostgreSQL integration using SQLAlchemy ORM. Delivered automated test coverage with Pytest, along with Swagger/OpenAPI interactive documentation to ensure quality and maintainability. The project improved task management efficiency and offers a scalable foundation for further enhancements such as authentication and deployment pipelines.

AI Bias Audit - Object Detection Model (Retail Analytics)

My role: AI Bias & Fairness Specialist / ML Model Auditor

Project description:

Conducted a comprehensive fairness audit of an object detection model (YOLOv8-Large) for retail analytics, identifying gender, skin tone, and age biases. Delivered actionable mitigation recommendations including data rebalancing, adversarial debiasing, and post-processing calibration. Provided Python-based metrics, visualizations, and a detailed report to support ethical, reliable, and regulation-compliant AI.