
Vlad Ioan

AI Infrastructure Engineer | On-Premise LLM & RAG


Built two production AI agents using Dify tool calling: an nmap network scanner that turns natural-language requests into structured scan results, and an Active Directory lookup agent (LDAP/NTLM authentication, user and group queries). Granite-4.0-Tiny handles LLM orchestration as the tool-calling model.
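A minimal sketch of how a scanner tool might turn raw nmap output into structured results before handing them back to the model. It assumes nmap is run with `-oX` (XML output). The helper `parse_nmap_xml` and the result shape are hypothetical, not the actual agent code or Dify's tool interface.

```python
import xml.etree.ElementTree as ET
from typing import Any


def parse_nmap_xml(xml_text: str) -> list[dict[str, Any]]:
    """Hypothetical helper: convert nmap -oX output into a list of host dicts."""
    root = ET.fromstring(xml_text)
    hosts = []
    for host in root.findall("host"):
        status = host.find("status")
        addr = host.find("address[@addrtype='ipv4']")
        ports = []
        for port in host.findall("ports/port"):
            state = port.find("state")
            service = port.find("service")  # absent when nmap could not identify a service
            ports.append({
                "port": int(port.get("portid")),
                "protocol": port.get("protocol"),
                "state": state.get("state") if state is not None else None,
                "service": service.get("name") if service is not None else None,
            })
        hosts.append({
            "address": addr.get("addr") if addr is not None else None,
            "status": status.get("state") if status is not None else None,
            "ports": ports,
        })
    return hosts
```

In practice the input would be XML captured from something like `nmap -oX - <target>`; returning plain dicts keeps the tool result easy to serialize as JSON for the tool-calling model.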


Built a production RAG system using Dify 1.13.x, the Qdrant vector database, BGE-M3 embeddings, and the bge-reranker-v2-m3 reranker, with a chunk size of 800 and an overlap of 200. The system is integrated with internal document repositories and delivers reliable retrieval on air-gapped infrastructure with no cloud dependency.
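A minimal sketch of the 800/200 chunking arithmetic, assuming a fixed-size window measured in characters (the unit is not stated above, and Dify's own splitter also honours separators, which this sketch ignores). Each new chunk starts 800 − 200 = 600 characters after the previous one, so consecutive chunks share 200 characters. `chunk_text` is a hypothetical helper, not Dify's implementation.

```python
def chunk_text(text: str, chunk_size: int = 800, overlap: int = 200) -> list[str]:
    """Hypothetical fixed-window splitter: consecutive chunks share `overlap` characters."""
    if overlap >= chunk_size:
        raise ValueError("overlap must be smaller than chunk_size")
    step = chunk_size - overlap  # 600 with the default settings
    chunks = []
    for start in range(0, len(text), step):
        chunks.append(text[start:start + chunk_size])
        if start + chunk_size >= len(text):
            break  # this window already reaches the end of the text
    return chunks
```

With a 2,000-character document this yields three chunks covering characters 0 to 800, 600 to 1,400, and 1,200 to 2,000; the overlap keeps sentences that straddle a boundary retrievable from either side.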


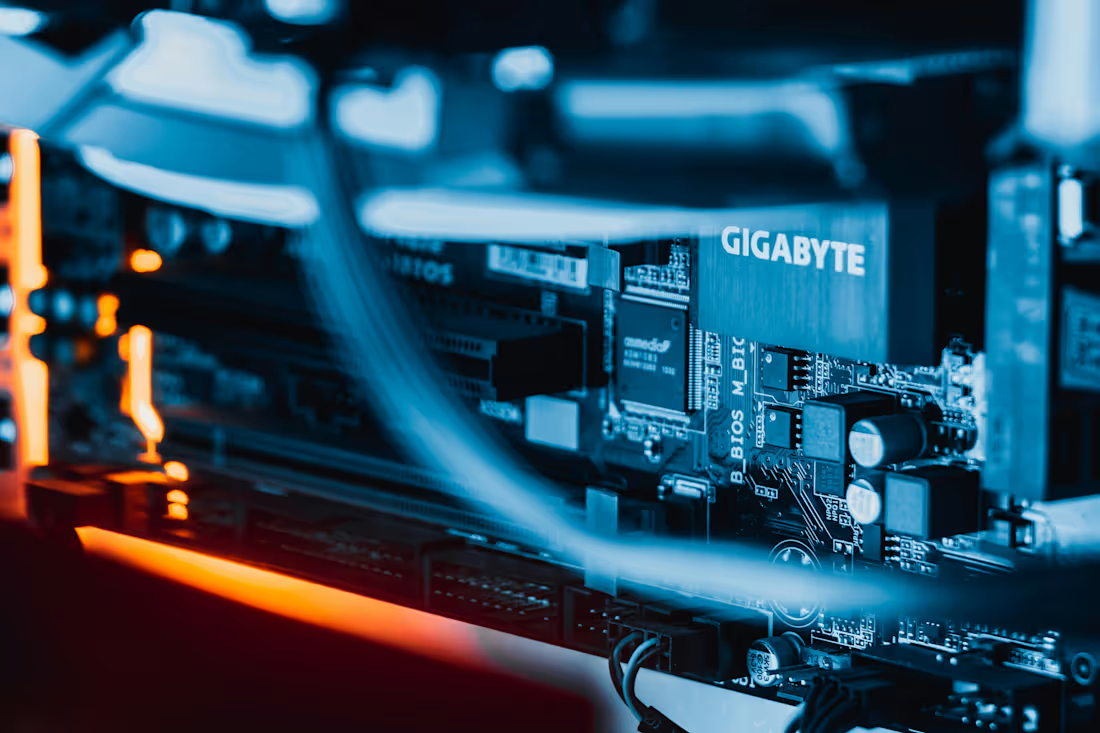

Deployed a multi-model CPU inference cluster on 2× HP ProLiant DL360 Gen9 servers (376 GB RAM total), running 5 concurrent LLM endpoints, including Qwen3-Coder-30B, Qwen3-Next-80B, GLM-4.7-Flash, and Granite-4.0-Tiny, all quantized to GGUF Q4_K_M. Optimized with ik_llama and MKL for a 62% throughput gain. The stack exposes an Ollama-compatible API, with Open WebUI as the frontend and Opik for observability.
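A minimal client sketch for an Ollama-compatible endpoint, using only the standard library and the Ollama-style non-streaming `/api/chat` route. The host, port (Ollama's default 11434), and model tag are assumptions; the cluster's real addresses and model names are not given here.

```python
import json
import urllib.request

# Assumed endpoint: Ollama's default port; the cluster's real host/port are not stated.
BASE_URL = "http://localhost:11434"


def build_chat_request(model: str, prompt: str, base_url: str = BASE_URL) -> urllib.request.Request:
    """Build a non-streaming request for an Ollama-style /api/chat endpoint."""
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
        "stream": False,  # ask for a single JSON object instead of streamed chunks
    }
    return urllib.request.Request(
        f"{base_url}/api/chat",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def extract_reply(body: bytes) -> str:
    """Pull the assistant text out of a non-streaming /api/chat response body."""
    return json.loads(body)["message"]["content"]


def chat(model: str, prompt: str) -> str:
    """Send the request; CPU inference on large models can be slow, hence the long timeout."""
    with urllib.request.urlopen(build_chat_request(model, prompt), timeout=300) as resp:
        return extract_reply(resp.read())
```

Because the API is Ollama-compatible, front ends such as Open WebUI can target each endpoint the same way; only the model name in the payload changes.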
