Tobechukwu Samuel

AI/ML Engineer building intelligent AI systems.

🏗️ Bulldozer Price Prediction — End-to-End Machine Learning Project

🚜 Overview

How do you accurately estimate the value of heavy equipment at auction?

In this project, I built a machine learning model to predict the sale price of bulldozers using historical auction data — effectively creating a data-driven “blue book” valuation system.

This project simulates a real-world ML workflow:

Handling messy, real-world data

Engineering meaningful features

Iterating through models and tuning

Evaluating performance using proper metrics

🎯 Problem Statement

Auction prices for heavy equipment can vary significantly based on:

Machine specifications

Usage and configuration

Market conditions over time

The objective is to build a model that can accurately predict the SalePrice, enabling:

Better pricing decisions

Reduced uncertainty in auctions

Data-driven valuation systems

📊 Dataset

The dataset is split into three time-based sets:

Train Set → Data up to 2011

Validation Set → Jan 2012 – April 2012

Test Set → May 2012 – Nov 2012

This structure mimics real-world forecasting, where models are trained on past data and evaluated on future data.
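A minimal sketch of the date-based split described above, using only the standard library. The rows and prices are hypothetical; only the date boundaries come from the split listed here.

```python
from datetime import date

# Hypothetical rows: (sale date, sale price). The real data has many more columns.
rows = [
    (date(2010, 6, 15), 42000.0),
    (date(2011, 12, 31), 18500.0),
    (date(2012, 2, 1), 27000.0),
    (date(2012, 4, 30), 66000.0),
    (date(2012, 5, 1), 31000.0),
    (date(2012, 11, 16), 12500.0),
]

# Boundaries taken from the split described above.
VALID_START = date(2012, 1, 1)
TEST_START = date(2012, 5, 1)


def time_split(rows):
    """Split rows into train/valid/test by sale date, never by random shuffle."""
    train = [r for r in rows if r[0] < VALID_START]
    valid = [r for r in rows if VALID_START <= r[0] < TEST_START]
    test = [r for r in rows if r[0] >= TEST_START]
    return train, valid, test


train, valid, test = time_split(rows)
print(len(train), len(valid), len(test))
```

Because every validation row is later than every training row, the validation score reflects how the model behaves on sales it could not have seen.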

⚠️ Due to size limitations, the dataset is not included in this repository.

👉 Download here:

https://www.kaggle.com/competitions/bluebook-for-bulldozers/data

⚙️ Machine Learning Workflow

🧹 Data Preprocessing

Converted saledate to datetime format

Extracted time-based features:

Year, Month, Day, Day of Week

Handled missing values:

Numerical → median imputation

Categorical → encoded as numerical values
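The preprocessing steps above can be sketched in plain Python. This is an illustration under assumptions, not the notebook's code: the field names `hours` and `state`, the date format, and the choice of code 0 for missing categories are all hypothetical.

```python
from datetime import datetime
from statistics import median

# Hypothetical raw records; field names other than saledate are illustrative.
records = [
    {"saledate": "2011-03-14", "hours": 1200.0, "state": "Texas"},
    {"saledate": "2011-07-02", "hours": None, "state": "Ohio"},
    {"saledate": "2012-01-20", "hours": 3400.0, "state": None},
]


def add_date_parts(rec):
    """Parse saledate and expose year, month, day and day of week (Monday=0)."""
    d = datetime.strptime(rec["saledate"], "%Y-%m-%d")
    return {**rec, "sale_year": d.year, "sale_month": d.month,
            "sale_day": d.day, "sale_dayofweek": d.weekday()}


def impute_median(recs, key):
    """Replace missing numeric values with the median of the observed ones."""
    fill = median(r[key] for r in recs if r[key] is not None)
    return [{**r, key: fill if r[key] is None else r[key]} for r in recs]


def encode_category(recs, key):
    """Map each category to an integer code; missing values get code 0."""
    levels = sorted({r[key] for r in recs if r[key] is not None})
    codes = {level: i + 1 for i, level in enumerate(levels)}
    return [{**r, key: codes.get(r[key], 0)} for r in recs]


with_dates = [add_date_parts(r) for r in records]
clean = encode_category(impute_median(with_dates, "hours"), "state")
print(clean[1])
```

In a time-based setup, the median and the category codes would normally be computed on the training set only and then reused for validation and test data.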

🧠 Feature Engineering

Created time-based features from sale date

Leveraged machine attributes and configuration data

Improved model performance through iterative feature refinement

🌲 Model Used

RandomForestRegressor

Why?

Handles non-linear relationships well

Works great with structured/tabular data

Robust to noise (missing values were still imputed during preprocessing)

📈 Results

| Metric | Training | Validation |
| --- | --- | --- |
| MAE | 2953.82 | 5951.25 |
| RMSLE | 0.1447 | 0.2452 |
| R² | 0.9588 | 0.8818 |
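For reference, the three metrics in the table above can be written out in a few lines. This sketch uses the standard definitions and hypothetical prices; it does not reproduce the reported numbers.

```python
import math


def mae(y_true, y_pred):
    """Mean absolute error, in the same units as the price."""
    return sum(abs(t - p) for t, p in zip(y_true, y_pred)) / len(y_true)


def rmsle(y_true, y_pred):
    """Root mean squared log error: penalizes relative, not absolute, errors."""
    sq = [(math.log1p(p) - math.log1p(t)) ** 2 for t, p in zip(y_true, y_pred)]
    return math.sqrt(sum(sq) / len(sq))


def r2(y_true, y_pred):
    """Coefficient of determination: 1 is perfect, 0 matches predicting the mean."""
    mean_t = sum(y_true) / len(y_true)
    ss_res = sum((t - p) ** 2 for t, p in zip(y_true, y_pred))
    ss_tot = sum((t - mean_t) ** 2 for t in y_true)
    return 1 - ss_res / ss_tot


# Hypothetical sale prices and predictions.
y_true = [10000.0, 20000.0, 40000.0]
y_pred = [11000.0, 18000.0, 44000.0]
print(mae(y_true, y_pred), rmsle(y_true, y_pred), r2(y_true, y_pred))
```

RMSLE treats a 10% miss on a cheap machine the same as a 10% miss on an expensive one, which is why it suits auction prices that span a wide range.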

🔁 Iteration Journey (What Actually Happened)

This project wasn’t a straight line — and that’s where the real learning happened.

| Stage | Validation RMSLE |
| --- | --- |
| Baseline Model | 0.2936 |
| First Tuning Attempt | 0.5638 ❌ |
| Final Optimized Model | 0.2452 ✅ |

💡 Key Takeaway:

Tuning hyperparameters doesn't guarantee better performance; every candidate configuration has to be checked against the baseline on the validation set.

🔧 Hyperparameter Tuning

Used RandomizedSearchCV (100 iterations) to explore the parameter space.

Best parameters:

n_estimators=40

min_samples_leaf=1

min_samples_split=14

max_features=0.5
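The idea behind a randomized search is to sample a fixed number of configurations instead of trying every combination. The sketch below shows that idea in plain Python. The parameter ranges are illustrative assumptions, and `toy_score` is a contrived stand-in for "fit the model and return validation RMSLE"; scikit-learn's `RandomizedSearchCV` additionally cross-validates each candidate.

```python
import random

# Candidate values per hyperparameter. These ranges are illustrative
# assumptions, not the search space used in the project.
param_space = {
    "n_estimators": list(range(10, 100, 10)),
    "min_samples_leaf": list(range(1, 20, 2)),
    "min_samples_split": list(range(2, 20, 2)),
    "max_features": [0.5, 1.0, "sqrt"],
}


def sample_params(space, rng):
    """Draw one random configuration from the space."""
    return {name: rng.choice(values) for name, values in space.items()}


def random_search(space, score_fn, n_iter, seed=0):
    """Evaluate n_iter random configurations; return the lowest-scoring one."""
    rng = random.Random(seed)
    best_params, best_score = None, float("inf")
    for _ in range(n_iter):
        params = sample_params(space, rng)
        score = score_fn(params)
        if score < best_score:
            best_params, best_score = params, score
    return best_params, best_score


def toy_score(params):
    """Contrived stand-in for a validation error (lower is better)."""
    return abs(params["min_samples_split"] - 14) + abs(params["n_estimators"] - 40) / 10


best_params, best_score = random_search(param_space, toy_score, n_iter=100)
print(best_params, best_score)
```

Fixing the seed makes a search repeatable, which helps when comparing a tuned model against the baseline.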

💡 Key Insights

Feature engineering had the biggest impact on performance

Poor tuning can significantly degrade model accuracy

Time-based splits are crucial for realistic evaluation

Iteration and experimentation are core ML skills

🚀 Future Improvements

Train on log-transformed SalePrice so the model optimizes RMSLE more directly

Experiment with LightGBM / XGBoost

Build a deployment-ready app (Streamlit)

Add feature importance visualization
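The log-transformation idea in the list above rests on a simple identity: RMSE computed on `log1p` targets equals RMSLE on the original prices. A small sketch with hypothetical prices and hypothetical model outputs:

```python
import math


def rmse(a, b):
    """Root mean squared error between two equally long sequences."""
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)) / len(a))


def rmsle(y_true, y_pred):
    """RMSLE is RMSE applied after a log1p transform of both inputs."""
    return rmse([math.log1p(t) for t in y_true], [math.log1p(p) for p in y_pred])


prices = [9500.0, 24000.0, 61000.0]               # hypothetical sale prices
log_targets = [math.log1p(p) for p in prices]     # what the model would be trained on

log_preds = [9.2, 10.0, 11.1]                     # hypothetical outputs in log space
price_preds = [math.expm1(z) for z in log_preds]  # back to dollars for reporting

print(rmse(log_targets, log_preds), rmsle(prices, price_preds))
```

A regressor that minimizes squared error on the log targets is therefore minimizing the quantity the evaluation reports, and `expm1` converts its predictions back to prices.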

📁 Project Structure

bulldozer-price-prediction/

│

├── notebook.ipynb

├── README.md

├── .gitignore

└── requirements.txt

🧑‍💻 Author

Toby Chuks

GitHub: https://github.com/tobychuks01

LinkedIn: https://www.linkedin.com/in/toby-chuks-630b44217

⭐ Final Note

This project reflects more than just building a model: it demonstrates the importance of iteration, experimentation, and learning from failure in machine learning.

❤️ Heart Disease Prediction using Machine Learning

📌 Problem Statement

Can we predict whether a patient has heart disease using clinical features?

Early detection of heart disease can save lives, making this a critical real-world machine learning problem.

📊 Dataset

Source: UCI Heart Disease Dataset

Includes medical attributes such as:

Age

Cholesterol levels

Chest pain type

Maximum heart rate

Blood pressure

⚙️ Project Workflow

Data Exploration (EDA)

Data Preprocessing

Model Training

Model Evaluation

Hyperparameter Tuning

🤖 Models Used

Logistic Regression

K-Nearest Neighbors (KNN)

Random Forest Classifier

📈 Model Performance

| Metric | Score |
| --- | --- |
| Accuracy | 73.47% |
| Precision | 83.00% |
| Recall | 74.95% |
| F1 Score | 73.36% |
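As a reminder of what these scores measure, here is a small sketch that computes them from binary labels. It uses the positive-class definitions; the table does not say which averaging was used, so this is not an attempt to reproduce its numbers, and the labels are hypothetical.

```python
def classification_metrics(y_true, y_pred):
    """Accuracy, precision, recall and F1 for binary labels (1 = disease)."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == 1 and p == 1)
    fp = sum(1 for t, p in pairs if t == 0 and p == 1)
    fn = sum(1 for t, p in pairs if t == 1 and p == 0)
    tn = sum(1 for t, p in pairs if t == 0 and p == 0)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = (2 * precision * recall / (precision + recall)
          if precision + recall else 0.0)
    return {
        "accuracy": (tp + tn) / len(pairs),
        "precision": precision,  # of the predicted positives, how many are real
        "recall": recall,        # of the real positives, how many were found
        "f1": f1,
    }


# Hypothetical labels and predictions.
y_true = [1, 1, 1, 1, 0, 0, 0, 0]
y_pred = [1, 1, 1, 0, 1, 0, 0, 0]
print(classification_metrics(y_true, y_pred))
```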

🔍 Key Insights

Logistic Regression performed best after tuning

Cross-validation revealed a drop in performance, highlighting generalization challenges

High precision suggests that when the model predicts heart disease it is usually right; recall, not precision, measures how many actual cases it catches

Real-world medical datasets are complex and rarely achieve extremely high accuracy
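To make the cross-validation point concrete, this sketch shows how k-fold cross-validation partitions a dataset so that every sample is held out exactly once. It is a plain-Python illustration with contiguous folds; library implementations typically also offer shuffling and stratification.

```python
def kfold_indices(n_samples, k):
    """Yield (train_idx, test_idx) pairs for k contiguous folds."""
    # Spread any remainder over the first folds so sizes differ by at most one.
    fold_sizes = [n_samples // k + (1 if i < n_samples % k else 0) for i in range(k)]
    start = 0
    for size in fold_sizes:
        test_idx = list(range(start, start + size))
        train_idx = list(range(0, start)) + list(range(start + size, n_samples))
        yield train_idx, test_idx
        start += size


for train_idx, test_idx in kfold_indices(10, 3):
    print(len(train_idx), test_idx)
```

Averaging a model's score over the folds gives an estimate that depends less on one lucky or unlucky split, which is how a drop relative to a single split can surface.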

🧠 Lessons Learned

Accuracy alone is not enough — precision and recall matter more in healthcare

Overfitting can give misleading results without proper validation

Simpler models (like Logistic Regression) can outperform complex ones

🛠 Tools & Libraries

Python

Pandas

NumPy

Scikit-learn

Matplotlib

Seaborn

🚀 Future Improvements

Feature engineering

Try advanced models (XGBoost, LightGBM)

Improve recall (important for medical predictions)

Deploy model as a web app

📎 Project Notebook

Check the full implementation in the Jupyter Notebook.

🙌 Acknowledgements

UCI Machine Learning Repository

🔬 Feature Importance (Logistic Regression)

The model coefficients reveal which features most influence heart disease prediction.

🔺 Features Increasing Risk

ca (number of major vessels) → Strongest predictor

oldpeak (ST depression) → Indicates heart stress during exercise

exang (exercise-induced angina) → Associated with higher risk

restecg abnormalities → Signals irregular heart activity

🔻 Features Decreasing Risk

Certain chest pain types (non-anginal)

Some dataset-specific patterns

Gender-related differences (model-specific behavior)

💡 Insight

The model heavily relies on cardiovascular stress indicators and blood flow patterns, which aligns with real-world medical understanding of heart disease.
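One common way to read logistic regression coefficients is as odds ratios: `exp(coefficient)` is the factor by which the odds of the positive class change per unit increase in the feature. The sketch below uses made-up coefficient values and a hypothetical feature name `cp_non_anginal`; only the signs follow the lists above.

```python
import math

# Hypothetical coefficients for illustration only. The signs follow the lists
# above; the magnitudes are made up and are not the notebook's fitted values.
coefficients = {
    "ca": 0.9,
    "oldpeak": 0.6,
    "exang": 0.5,
    "cp_non_anginal": -0.7,
}


def rank_by_odds_ratio(coefs):
    """Return (feature, odds ratio) pairs, largest absolute coefficient first."""
    ranked = sorted(coefs.items(), key=lambda kv: abs(kv[1]), reverse=True)
    return [(name, math.exp(beta)) for name, beta in ranked]


for name, ratio in rank_by_odds_ratio(coefficients):
    direction = "raises" if ratio > 1 else "lowers"
    print(f"{name}: odds ratio {ratio:.2f} ({direction} predicted risk)")
```

Comparing magnitudes across features is only meaningful when the features are on comparable scales, for example after standardization.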

Dog Breed Classification System

🔗 GitHub Repository (https://github.com/tobychuks01/dog-breed-classifier?utm_source=chatgpt.com)

🔗 Live Demo (https://breedy.streamlit.app/?utm_source=chatgpt.com)

Built a deep learning computer vision application capable of classifying 120 dog breeds from uploaded images.

Implemented transfer learning using ResNet and EfficientNet architectures with PyTorch and Torchvision.

Improved model performance from ~3% CNN baseline accuracy to 78.97% ensemble accuracy through optimization and fine-tuning.

Applied advanced deep learning techniques including label smoothing, gradual unfreezing, weight decay, learning rate scheduling, and Test Time Augmentation (TTA).
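Two of the techniques named above are easy to show in isolation. This is a framework-free sketch, not the project's PyTorch code: label smoothing in its uniform-mixture form, and Test Time Augmentation as an average of softmax probabilities over several augmented views of one image. The smoothing factor 0.1 and the logits are hypothetical.

```python
import math


def smooth_labels(true_class, num_classes, eps=0.1):
    """Label smoothing: (1 - eps) on the true class plus eps spread uniformly."""
    base = eps / num_classes
    return [base + (1.0 - eps if i == true_class else 0.0)
            for i in range(num_classes)]


def softmax(logits):
    """Numerically stable softmax."""
    m = max(logits)
    exps = [math.exp(z - m) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]


def tta_predict(logits_per_view):
    """Average class probabilities over augmented views of the same image."""
    probs = [softmax(view) for view in logits_per_view]
    n_views = len(probs)
    return [sum(p[i] for p in probs) / n_views for i in range(len(probs[0]))]


# Hypothetical logits for 3 classes from 3 augmented views (e.g. original, flip, crop).
views = [[2.0, 0.5, 0.1], [1.5, 1.7, 0.2], [2.2, 0.3, 0.0]]
avg = tta_predict(views)
print(avg.index(max(avg)), smooth_labels(2, 120)[2])
```

Averaging over views lets one ambiguous view be outvoted by the others, and smoothed targets keep the network from being pushed toward fully confident outputs.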

Deployed a production-ready Streamlit application with real-time image inference and probability predictions.

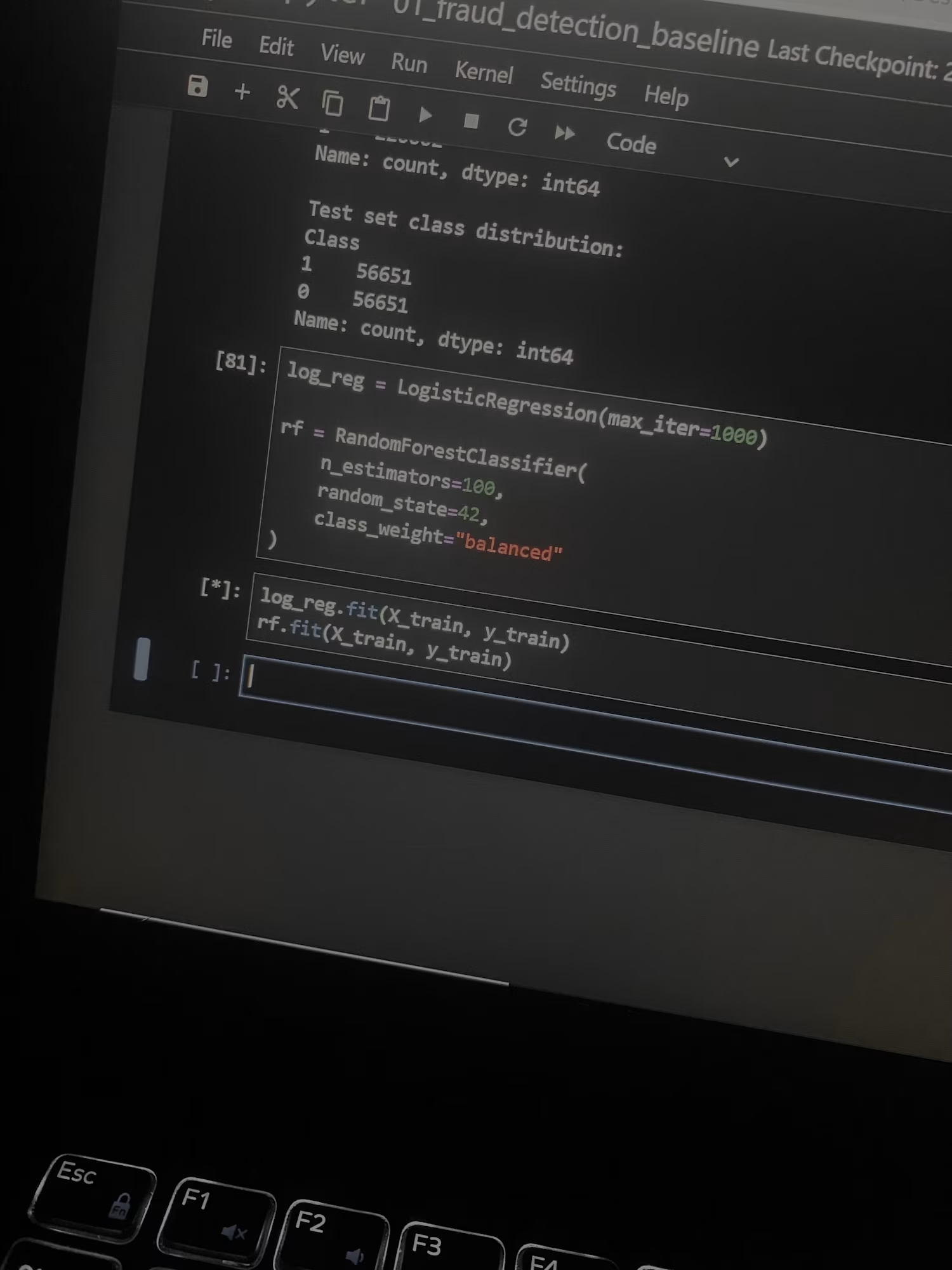

Credit Card Fraud Detection System

🔗 GitHub Repository (https://github.com/tobychuks01/Credit-card-fraud-Transaction-Model?utm_source=chatgpt.com)

Developed an end-to-end fraud detection pipeline using XGBoost, LightGBM, Random Forest, and Logistic Regression on 284K+ financial transactions.

Addressed extreme class imbalance (~0.17% fraud cases) with SMOTE, using a training pipeline designed to prevent data leakage.
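One usual way to avoid leakage with oversampling is to split first and resample only the training portion. The sketch below illustrates that ordering with a simplified SMOTE-style interpolation (single nearest neighbour, plain Python). It is not the imbalanced-learn implementation and not necessarily how the project's pipeline is built; the data is hypothetical.

```python
import random


def smote_like(minority, n_new, seed=0):
    """Create synthetic points between a minority sample and its nearest
    minority neighbour (a simplified, k=1 variant of the SMOTE idea)."""
    rng = random.Random(seed)
    synthetic = []
    for _ in range(n_new):
        a = rng.choice(minority)
        others = [p for p in minority if p is not a]
        b = min(others, key=lambda p: sum((x - y) ** 2 for x, y in zip(a, p)))
        t = rng.random()  # position along the segment from a to b
        synthetic.append([x + t * (y - x) for x, y in zip(a, b)])
    return synthetic


# Hypothetical data: (features, label), label 1 = fraud (every 10th row).
data = [([float(i), float(i % 3)], 1 if i % 10 == 0 else 0) for i in range(40)]

# Split BEFORE resampling so no synthetic point is derived from test rows.
train, test = data[:30], data[30:]

minority = [x for x, y in train if y == 1]
majority_count = sum(1 for _, y in train if y == 0)
new_points = smote_like(minority, majority_count - len(minority))
train_balanced = train + [(x, 1) for x in new_points]

print(len(train_balanced), len(test))
```

The test set keeps its original class ratio, so metrics measured on it reflect the imbalance the model would face in use.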

Achieved a ROC-AUC of 0.985 with XGBoost while balancing fraud recall against precision.

Performed threshold tuning experiments and evaluated business tradeoffs between fraud recall and false positives.
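A threshold sweep of the kind described above can be sketched as follows. The labels and scores are hypothetical; the point is only that raising the threshold trades recall for precision.

```python
def precision_recall_at(y_true, scores, threshold):
    """Precision and recall when scores >= threshold are flagged as fraud."""
    preds = [1 if s >= threshold else 0 for s in scores]
    tp = sum(1 for t, p in zip(y_true, preds) if t == 1 and p == 1)
    fp = sum(1 for t, p in zip(y_true, preds) if t == 0 and p == 1)
    fn = sum(1 for t, p in zip(y_true, preds) if t == 1 and p == 0)
    precision = tp / (tp + fp) if tp + fp else 0.0  # 0.0 when nothing is flagged
    recall = tp / (tp + fn) if tp + fn else 0.0
    return precision, recall


# Hypothetical labels (1 = fraud) and model scores, sorted by score.
y_true = [0, 0, 0, 0, 0, 0, 1, 0, 1, 1]
scores = [0.02, 0.05, 0.10, 0.20, 0.30, 0.45, 0.55, 0.60, 0.80, 0.95]

for threshold in (0.3, 0.5, 0.7):
    precision, recall = precision_recall_at(y_true, scores, threshold)
    print(f"threshold={threshold}: precision={precision:.2f}, recall={recall:.2f}")
```

A lower threshold catches more fraud at the cost of more false alarms; which point to pick depends on how the cost of a missed fraud compares with the cost of reviewing a legitimate transaction.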

Built a deployment-ready prediction system with Streamlit UI and API integration for real-time fraud prediction.

Applied feature importance analysis to identify key fraud-driving transaction variables.
