Tejaswini Pericharla

Data Engineer

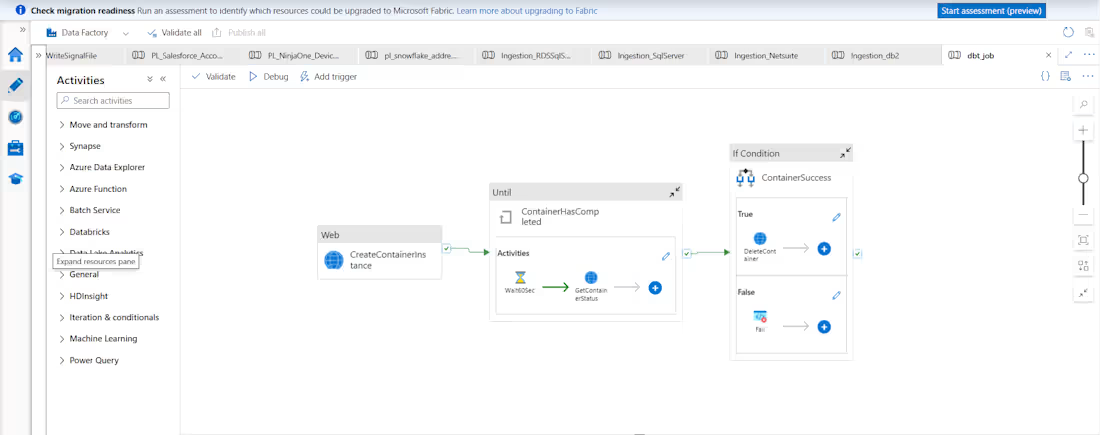

Excited to share a solution in which I designed and orchestrated end-to-end data pipelines in Azure Data Factory, combining container-based workflows, dynamic routing, status monitoring with Until loops, and conditional execution handling. The architecture provides reliable, automated data movement with clean orchestration logic and scalable execution across systems.
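To give a feel for the status-monitoring and conditional-execution pattern, here is a minimal Python sketch of the idea behind an Until loop: poll a job's status until it reaches a terminal state or a timeout expires, then route to the next step based on the outcome. This is only an analogy, not the actual Azure Data Factory pipeline definition; `poll_until_done`, `route`, the status strings, and the step names are all hypothetical.

```python
import time
from typing import Callable

# Hypothetical terminal states; a real job API may use different names.
TERMINAL_STATES = {"Succeeded", "Failed", "Cancelled"}


def poll_until_done(
    get_status: Callable[[], str],
    interval_s: float = 30.0,
    timeout_s: float = 3600.0,
    sleep: Callable[[float], None] = time.sleep,
) -> str:
    """Check the status at least once, then keep polling until it is
    terminal or the time budget is used up (similar in spirit to an
    Until loop with a timeout)."""
    waited = 0.0
    while True:
        status = get_status()
        if status in TERMINAL_STATES:
            return status
        if waited >= timeout_s:
            return "TimedOut"
        sleep(interval_s)
        waited += interval_s


def route(status: str) -> str:
    """Conditional execution: pick the next (hypothetical) step."""
    return "load_to_warehouse" if status == "Succeeded" else "send_alert"


if __name__ == "__main__":
    # Simulated job that finishes on the third status check.
    statuses = iter(["InProgress", "InProgress", "Succeeded"])
    final = poll_until_done(lambda: next(statuses), sleep=lambda s: None)
    print(final, "->", route(final))
```

Passing `sleep` as a parameter keeps the loop testable without real waiting; in a real pipeline the wait and the status check would be separate activities inside the loop.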

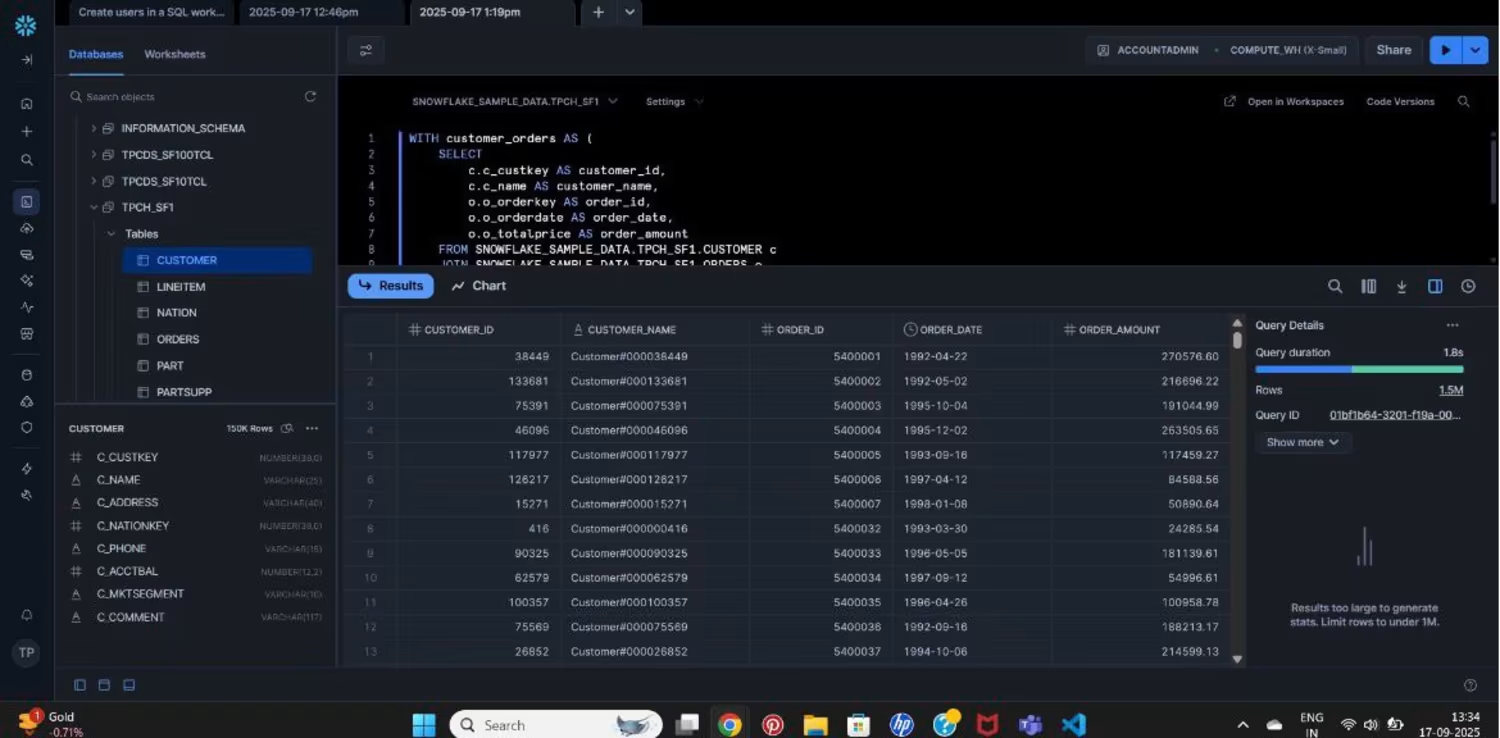

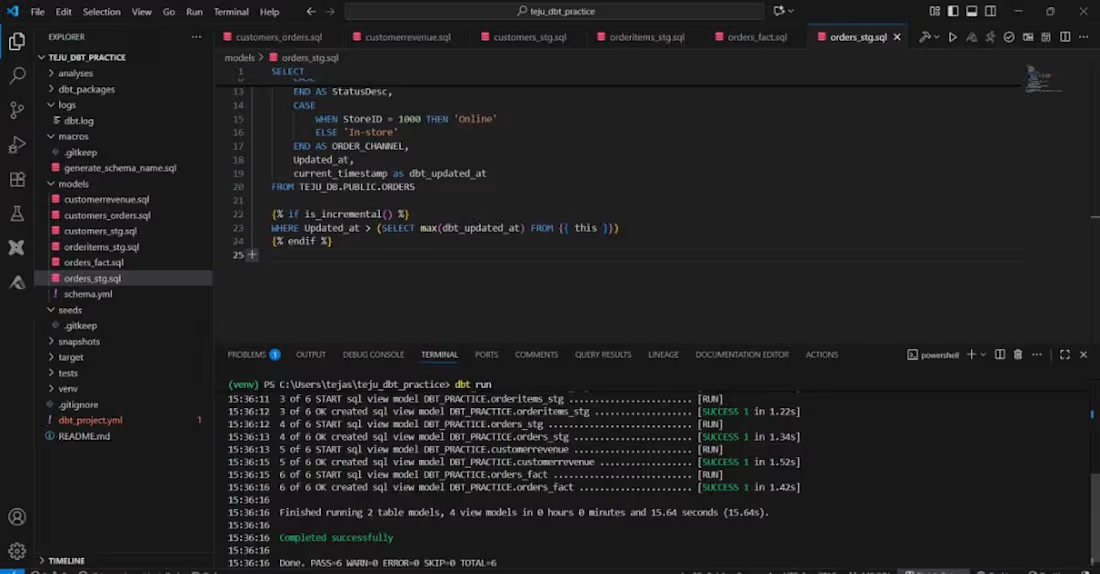

I've also transformed manual SQL scripts into modular, production-ready dbt models, building structured Snowflake layers for analytics-ready datasets with incremental loading and optimized performance. To complete the loop, I developed a real-time Streamlit dashboard for live monitoring and geo-visualization. From raw data to actionable insights, this project reflects my passion for creating scalable data intelligence systems.

I build end-to-end data intelligence solutions using Snowflake, dbt, and Azure Cloud. I transform raw data into structured staging and fact models, implement incremental loading strategies, and create analytics-ready datasets to generate customer and revenue insights. By leveraging dbt's modular modeling approach and Snowflake's scalable cloud data warehouse, I deliver clean, optimized, and reliable data pipelines that power business intelligence. And honestly, once the pipeline is complete and I see the data lineage end-to-end, it feels like my baby: something I built with care and precision.
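For readers unfamiliar with incremental loading, the core idea can be sketched in a few lines of Python: find the latest timestamp already in the target (the high-water mark), take only source rows newer than that, and upsert them by key. This is an illustrative in-memory sketch, not the project's dbt or Snowflake code; the `order_id` and `updated_at` column names and the `incremental_merge` helper are assumptions made for the example.

```python
from datetime import datetime
from typing import Dict, List


def incremental_merge(
    target: Dict[int, dict],
    source_rows: List[dict],
    key: str = "order_id",       # hypothetical unique key column
    ts: str = "updated_at",      # hypothetical change-timestamp column
) -> int:
    """Upsert only source rows newer than the target's high-water mark.

    Returns the number of rows inserted or updated.
    """
    # High-water mark: the newest timestamp already loaded.
    watermark = max((row[ts] for row in target.values()), default=datetime.min)

    # Keep only rows that changed after the watermark.
    new_rows = [row for row in source_rows if row[ts] > watermark]

    # Upsert by key: existing keys are overwritten, new keys are added.
    for row in new_rows:
        target[row[key]] = row
    return len(new_rows)


if __name__ == "__main__":
    target = {1: {"order_id": 1, "amount": 10, "updated_at": datetime(2024, 1, 1)}}
    source = [
        {"order_id": 1, "amount": 12, "updated_at": datetime(2024, 1, 2)},   # update
        {"order_id": 2, "amount": 30, "updated_at": datetime(2024, 1, 3)},   # insert
        {"order_id": 3, "amount": 5, "updated_at": datetime(2023, 12, 31)},  # skipped
    ]
    print(incremental_merge(target, source), sorted(target))
```

In a warehouse, the same filter-then-upsert logic is usually expressed in SQL so that each run processes only the changed rows instead of rebuilding the whole table.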
