sammed jangade

Data Engineer building scalable pipelines and insights

New to Contra

sammed is building their profile!

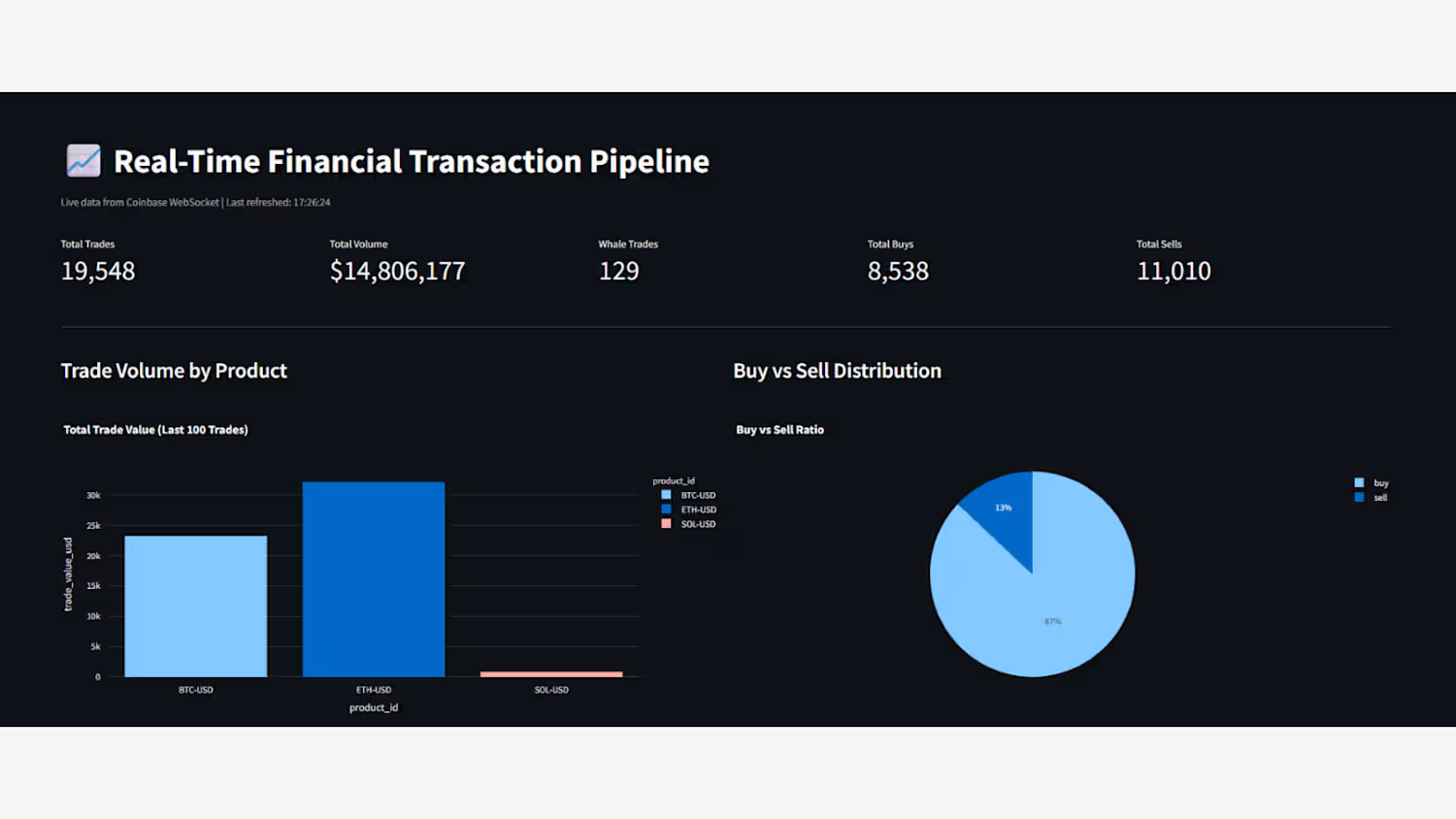

CryptoPulse – Real-Time Financial Transaction Pipeline

This project establishes a high-performance, serverless streaming architecture designed to ingest and analyze live cryptocurrency market data. By utilizing a reactive AWS stack, the pipeline processes thousands of trade events per second with sub-second latency, providing immediate insights into market sentiment and volume distribution.

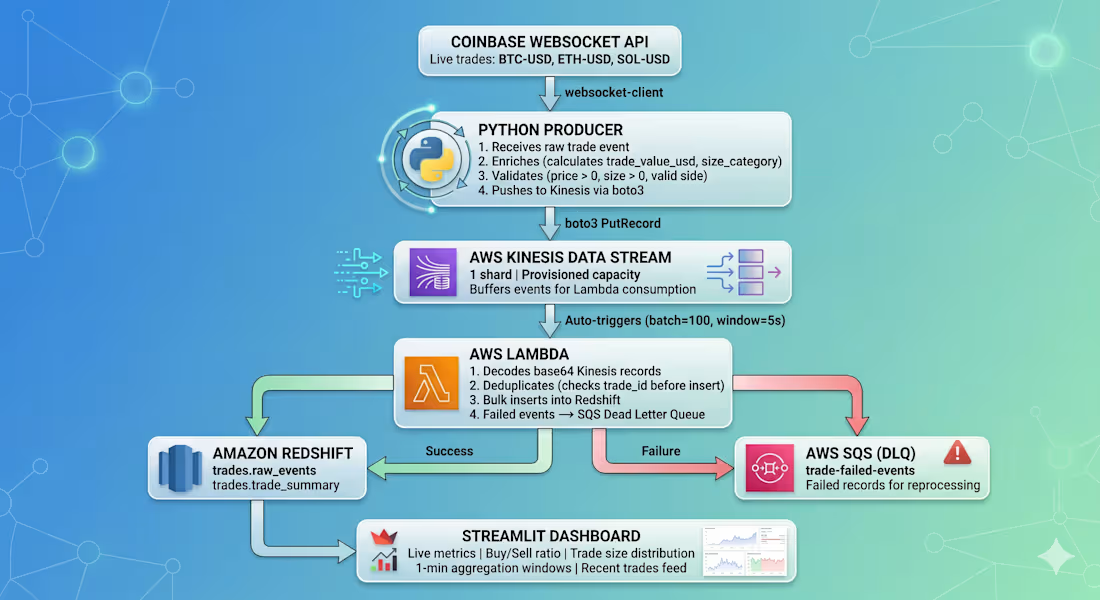

The Architecture

Live Ingestion: A custom Python Producer maintains a persistent connection to the Coinbase WebSocket API. It captures raw trade events for BTC, ETH, and SOL, performing real-time data enrichment (calculating USD trade value) and rigorous validation before streaming.

Streaming & Buffering: AWS Kinesis Data Streams acts as the high-throughput backbone, decoupling the data producer from consumers and ensuring the system can scale to handle sudden market volatility.

Serverless Processing: An AWS Lambda function is auto-triggered in optimized batches (100 records or 5-second windows). It handles base64 decoding, deduplicates trades via trade_id to ensure exact-once processing, and executes bulk inserts into storage.

Fault Tolerance: To ensure zero data loss, a Dead Letter Queue (AWS SQS) automatically captures any failed records, allowing for isolated troubleshooting and reprocessing without stalling the main pipeline.

Storage & Visualization: Data is persisted in Amazon Redshift for analytical depth. A Streamlit Dashboard queries these tables to visualize live buy/sell ratios, trade size distributions, and 1-minute moving aggregations.

Key Technical Strengths

Event-Driven Scaling: The serverless design ensures costs only scale with actual trade volume.

Data Integrity: Multi-stage validation and SQS-based error handling guarantee high-quality data for financial analysis.

Low-Latency Insights: The path from trade execution on Coinbase to visualization on the dashboard is completed in near real-time.

0

9

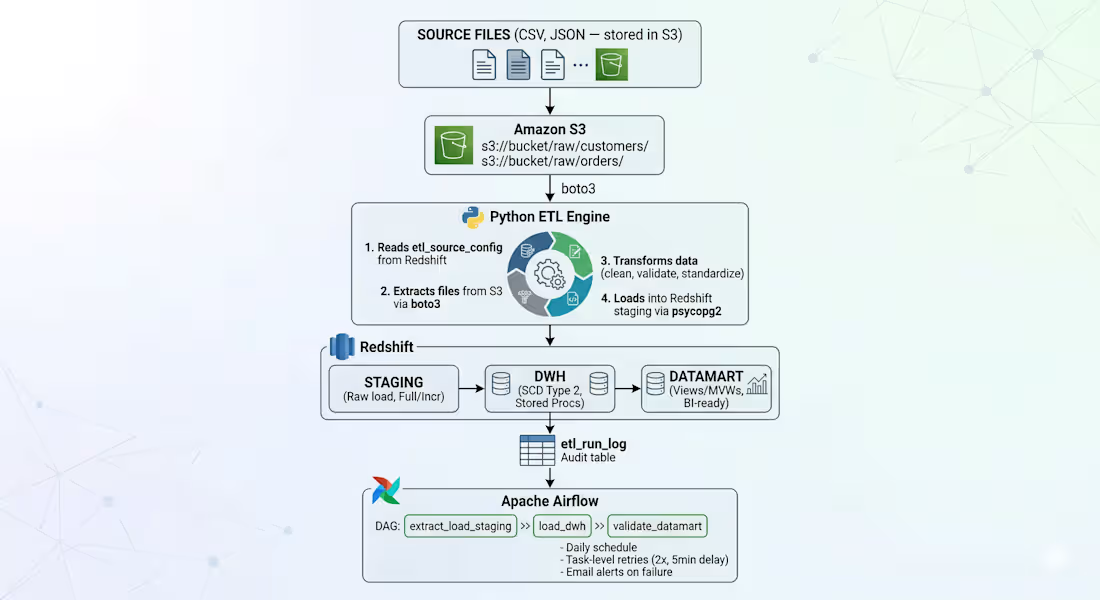

ApexFlow — Metadata-Driven ETL Framework

This project features a zero-code onboarding architecture that decouples ETL logic from data structures. By utilizing a configuration-first approach, new data sources can be integrated into the production pipeline solely through metadata updates, requiring no manual code changes or DAG modifications.

The Workflow

Dynamic Ingestion: A Python engine queries a etl_source_config table in Redshift to identify active tasks, then uses Boto3 to pull CSV/JSON files from specific S3 paths defined in the config.

Automated Processing: The engine dynamically validates and standardizes data based on the metadata schema before loading it into Redshift Staging via psycopg2.

Advanced Modeling: Data flows from Staging to a DWH layer (handling SCD Type 2 history via Stored Procedures) and finally into Datamarts for BI consumption.

Orchestration: Managed by Apache Airflow, the pipeline handles daily scheduling, 2x task retries, and automated failure alerts.

Key Impact

Scalability: Drastically reduces "Time-to-Data" by allowing non-developers to onboard new endpoints via config rows.

Resilience: Centralized etl_run_log provides a full audit trail for every automated run.

Efficiency: Eliminates redundant script creation, ensuring a single, hardened codebase manages all data movement.

0

18