Custom AI integration: MCP Powered + LLM Integration

Imad Dhin

A production-ready “AI base layer” that plugs into any existing app, website, or custom software and enables:

RAG search over your private data (docs, DBs, tickets, CRM).

Agentic workflows via MCP (Model Context Protocol) tools to safely call your systems (e.g., create order, schedule meeting, query inventory).

Structured AI features (assistants, summarization, extraction, autofill, drafting, Q&A) with guardrails, evals, and observability.

Provider-agnostic LLM orchestration (OpenAI, Anthropic, Gemini) with fallbacks, caching, and cost controls.

What's included

Use-case concept

- Full product concept, with all requirements documented.

- Success metrics (accuracy, latency, CTR, resolution rate).

Data Connect & Index (RAG)

- Connectors: Firestore/SQL, S3/GCS, Notion, GDrive, CMS, and others.

- Pipeline: normalization → PII redaction → chunking (semantic + headings) → embeddings → hybrid index (vector + keyword).

- Vector store options: pgvector, Pinecone, Weaviate, Qdrant.
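As a rough illustration of the pipeline above, here is a minimal standard-library sketch of three of its stages: redaction, heading-based chunking, and merging vector and keyword results. The function names are hypothetical, the email-only redaction is a placeholder for a real PII pass, and reciprocal rank fusion is just one common way to combine the two rankings; the listing does not specify a fusion method.

```python
import re

# Illustrative only: real PII redaction covers far more than email addresses.
EMAIL_RE = re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+")


def redact_pii(text: str) -> str:
    """Replace email addresses with a placeholder before indexing."""
    return EMAIL_RE.sub("[EMAIL]", text)


def chunk_by_headings(text: str) -> list[str]:
    """Split a markdown-style document into one chunk per '#' heading section."""
    chunks: list[str] = []
    current: list[str] = []
    for line in text.splitlines():
        # A new heading closes the chunk collected so far.
        if line.startswith("#") and current:
            chunks.append("\n".join(current).strip())
            current = []
        current.append(line)
    if current:
        chunks.append("\n".join(current).strip())
    return [c for c in chunks if c]


def reciprocal_rank_fusion(rankings: list[list[str]], k: int = 60) -> list[str]:
    """Merge ranked ID lists (e.g. vector hits and keyword hits) into one ranking."""
    scores: dict[str, float] = {}
    for ranking in rankings:
        for rank, doc_id in enumerate(ranking, start=1):
            scores[doc_id] = scores.get(doc_id, 0.0) + 1.0 / (k + rank)
    # Highest fused score first; ties broken by ID for a stable order.
    return sorted(scores, key=lambda d: (-scores[d], d))
```

In a real deployment the embedding and index steps would sit between chunking and retrieval, backed by one of the vector stores listed above.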

MCP Tooling Layer

- Implement MCP servers exposing business actions (examples: orders.create, calendar.findSlots, tickets.search, inventory.get).

- AuthZ via service tokens/JWT; per-tool scopes; input/output JSON Schema validation.
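The per-tool scope check and input validation described above can be sketched as follows. This is a simplified stand-in, not the MCP SDK: the `Tool` class, `call_tool`, the `inventory:read` scope name, and the stubbed handler are all assumptions for illustration, and a plain type map takes the place of a full JSON Schema.

```python
from dataclasses import dataclass
from typing import Any, Callable


class ToolError(Exception):
    """Raised when a tool call is not authorized or its input is invalid."""


@dataclass
class Tool:
    name: str
    required_scope: str
    input_types: dict[str, type]  # simplified stand-in for a JSON Schema
    handler: Callable[..., dict[str, Any]]


def call_tool(tool: Tool, token_scopes: set[str], args: dict[str, Any]) -> dict[str, Any]:
    """Check the caller's scopes and validate arguments before running the handler."""
    if tool.required_scope not in token_scopes:
        raise ToolError(f"missing scope: {tool.required_scope}")
    if set(args) != set(tool.input_types):
        raise ToolError("unexpected or missing arguments")
    for key, expected in tool.input_types.items():
        if not isinstance(args[key], expected):
            raise ToolError(f"{key} must be {expected.__name__}")
    return tool.handler(**args)


def get_inventory(sku: str) -> dict[str, Any]:
    # Hypothetical handler; a real one would query the inventory system.
    return {"sku": sku, "in_stock": 12}


inventory_get = Tool("inventory.get", "inventory:read", {"sku": str}, get_inventory)
```

A call such as `call_tool(inventory_get, {"inventory:read"}, {"sku": "A-1"})` succeeds, while the same call with an empty scope set raises `ToolError` before the handler runs.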

LLM Orchestration & Guardrails

- Prompt packs (system+task), tool-calling, JSON-only responses, re-ranking, grounding checks.

- Caching (Redis), rate limiting, sensitive-data filters, profanity/PII classifiers.
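To make the fallback and caching idea concrete, here is a minimal sketch of a provider-agnostic gateway. The `LLMGateway` class is hypothetical, providers are modeled as plain callables rather than real vendor clients, and an in-memory dict stands in for Redis.

```python
import hashlib
from typing import Callable

# A provider is any callable that takes a prompt and returns a completion.
Provider = Callable[[str], str]


class LLMGateway:
    def __init__(self, providers: list[tuple[str, Provider]]):
        self.providers = providers        # tried in order
        self.cache: dict[str, str] = {}   # in-memory stand-in for Redis

    def complete(self, prompt: str) -> str:
        """Return a cached answer if present, else try each provider in turn."""
        key = hashlib.sha256(prompt.encode("utf-8")).hexdigest()
        if key in self.cache:
            return self.cache[key]
        errors: list[str] = []
        for name, provider in self.providers:
            try:
                answer = provider(prompt)
            except Exception as exc:
                # Record the failure and fall through to the next provider.
                errors.append(f"{name}: {exc}")
                continue
            self.cache[key] = answer
            return answer
        raise RuntimeError("all providers failed: " + "; ".join(errors))
```

Ordering the provider list by preference keeps the fallback policy in configuration rather than in calling code; a production version would also add cache expiry and per-provider timeouts.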

Evaluation & Monitoring

- Golden datasets + regression tests (offline).

- Online metrics: acceptance rate, correction rate, cost/req, p95 latency; traces in Langfuse/OpenTelemetry.
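A golden-dataset regression gate of the kind described above might look roughly like this. The function names are hypothetical, exact-match scoring is a simplification of real answer grading, and the thresholds are parameters because the actual targets are agreed per project.

```python
from typing import Callable


def accuracy(golden: list[tuple[str, str]], answer_fn: Callable[[str], str]) -> float:
    """Fraction of golden questions answered exactly as expected."""
    correct = sum(1 for question, expected in golden if answer_fn(question) == expected)
    return correct / len(golden)


def p95(latencies_ms: list[float]) -> float:
    """Nearest-rank 95th percentile of observed latencies."""
    if not latencies_ms:
        raise ValueError("no latency samples")
    ordered = sorted(latencies_ms)
    rank = (95 * len(ordered) + 99) // 100  # ceil(0.95 * n) in integer arithmetic
    return ordered[rank - 1]


def passes_gate(acc: float, latency_p95: float, min_accuracy: float, max_p95_ms: float) -> bool:
    """True only if both the accuracy floor and the latency budget are met."""
    return acc >= min_accuracy and latency_p95 <= max_p95_ms
```

Run in CI, a failing `passes_gate` would block the release, which is the "regression gating" idea in its simplest form.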

Deployment & SDKs

- Infra: Vercel/Cloud Run/Kubernetes; IaC (Terraform), and others.

- SDKs: Flutter/React Native, Swift/Kotlin, JS/TS (Next.js), Python; REST/gRPC endpoints, or any other SDK you prefer to work with.

- Runbooks + dashboards; feature flags for gradual rollout.

Security & Compliance (subject to negotiation)

- SSO (OAuth/SAML), per-tenant encryption, Row-Level Security where applicable.

- Redaction on ingest + response filters; audit trails for tool calls.

- Cost ceilings and rate limits per user/tenant.
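A per-tenant cost ceiling can be sketched in a few lines. The `TenantBudget` class is hypothetical, amounts are tracked in integer cents to avoid floating-point drift, and the in-memory counter stands in for whatever persistent store a real deployment would use.

```python
from collections import defaultdict


class BudgetExceeded(Exception):
    """Raised when a request would push a tenant past its cost ceiling."""


class TenantBudget:
    def __init__(self, ceiling_cents: int):
        self.ceiling_cents = ceiling_cents
        self.spent_cents: dict[str, int] = defaultdict(int)

    def charge(self, tenant_id: str, cost_cents: int) -> int:
        """Record spend and return the remaining budget; refuse if over the ceiling."""
        if self.spent_cents[tenant_id] + cost_cents > self.ceiling_cents:
            raise BudgetExceeded(f"tenant {tenant_id} would exceed {self.ceiling_cents} cents")
        self.spent_cents[tenant_id] += cost_cents
        return self.ceiling_cents - self.spent_cents[tenant_id]
```

Checking the budget before the model call, rather than after, is what turns a cost dashboard into an enforced ceiling.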

Measurable Outcomes (subject to negotiation)

- ≥X% deflection of support tickets via AI replies.

- p95 latency for search/answer within the agreed budget.

- ≥Y% accuracy on golden evals; continuous regression gating in CI.

Packaging (client-friendly) + End-to-end product

- Deliverables: Architecture doc, infra code, MCP servers, AI gateway, pipelines, SDKs, dashboards, eval suites, runbooks.

- Handover: Admin panel (+ feature flags), cost dashboards, playbooks for new use-cases.

FAQs

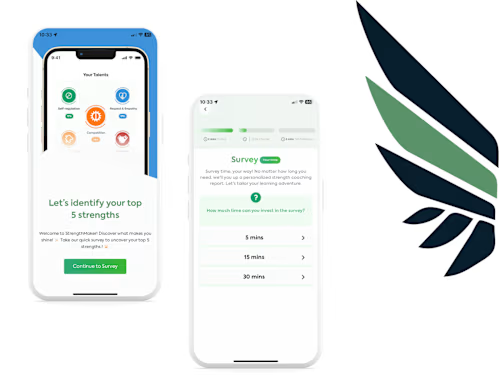

Example work

Contact for pricing

Tags

Claude

Google Gemini

AI Automation

AI Developer

AI Engineer

Service provided by

Imad Dhin, Rabat, Morocco

- $50k+ earned

- 30 paid projects

- 5.00 rating

- 166 followers
