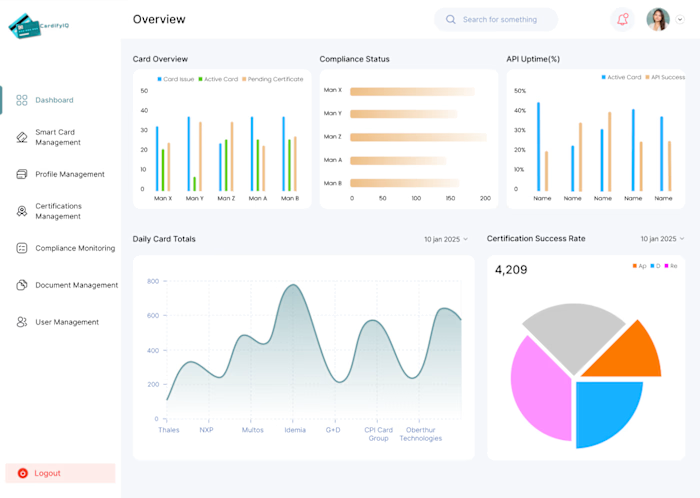

Advanced Prompt Engineering Framework Development

Designed and optimized advanced prompt engineering frameworks for GPT-4 and other LLMs to improve response accuracy, reasoning quality, and output consistency. Implemented structured prompt chaining, role-based prompting, system-level instruction design, output formatting control, and hallucination mitigation techniques. Built evaluation pipelines to test response reliability and token efficiency. Improved output precision, reduced ambiguity, and increased task completion accuracy across automation and AI assistant systems.
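As an illustration of the prompt chaining and role-based prompting mentioned above, here is a minimal sketch. It is not the project's actual code: the names `build_messages`, `run_chain`, `echo_model`, and `STEPS` are hypothetical, and `echo_model` is a stand-in that returns a tagged copy of its input instead of calling GPT-4 or any other real model.

```python
from typing import Callable, Dict, List

Message = Dict[str, str]
Model = Callable[[List[Message]], str]


def build_messages(system: str, user: str) -> List[Message]:
    """Role-based prompt: a system instruction followed by the user turn."""
    return [
        {"role": "system", "content": system},
        {"role": "user", "content": user},
    ]


def run_chain(model: Model, steps: List[Dict[str, str]], initial_input: str) -> List[str]:
    """Run the steps in order, feeding each output into the next step's template."""
    outputs: List[str] = []
    current = initial_input
    for step in steps:
        user_prompt = step["template"].format(input=current)
        current = model(build_messages(step["system"], user_prompt))
        outputs.append(current)
    return outputs


def echo_model(messages: List[Message]) -> str:
    """Hypothetical stand-in for an LLM call: tags the user turn with the system role."""
    return "[" + messages[0]["content"] + "] " + messages[-1]["content"]


# Assumed two-step chain: extract facts first, then summarize the extraction.
STEPS = [
    {"system": "extractor", "template": "List the key facts in: {input}"},
    {"system": "summarizer", "template": "Summarize in one sentence: {input}"},
]

results = run_chain(echo_model, STEPS, "Quarterly report text")
for line in results:
    print(line)
```

Passing the model in as a plain callable keeps the chain logic testable without network access. Replacing `echo_model` with a function that calls a real chat API would leave `run_chain` unchanged.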

Posted Apr 8, 2026

Created and optimized prompt engineering frameworks for GPT-4 and other LLMs.
