Whitepaper Finder

Whitepaper Agent

A specialized AI agent designed to research and analyze whitepapers using Arxiv, built with Next.js 14, LangChain, and OpenAI.

📖 Documentation

Architecture Overview: Understand how the "Brain" (LangChain), the "Library" (Arxiv), and the "Interface" work together.

Challenges & Limitations: Read about technical challenges such as the Spanish/English language barrier and static knowledge bases.

Features

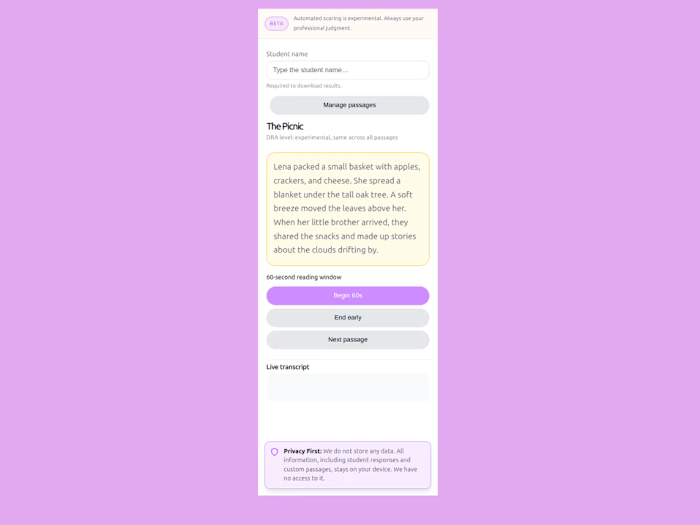

🧠 Intelligent Research Agent: Uses LangChain to reason about queries and decide when to fetch external data.

📚 Arxiv Integration: Automatically searches and retrieves recent scientific papers to answer technical queries.

💬 Smart Context: Persists conversation history in the browser's Local Storage, so context survives page reloads.

🚀 Streaming Responses: Real-time feedback using Server-Sent Events (SSE).

🌐 Language Bridge: Seamlessly handles Spanish queries by finding relevant English papers and synthesizing answers back in Spanish.

🎨 Modern UI: Polished interface with shadcn/ui and responsive design.
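As a rough illustration of the Arxiv Integration feature, the sketch below builds a search URL for the public arXiv API, sorted so the most recent submissions come first. The endpoint and query parameters are those of the public arXiv API; the `buildArxivQueryUrl` helper, the `ArxivSearchOptions` type, and the default of 5 results are assumptions for illustration. The project may well reach arXiv through a LangChain tool rather than a hand-built URL.

```typescript
// Public arXiv API endpoint (the project itself may use a LangChain tool instead).
const ARXIV_API = "http://export.arxiv.org/api/query";

// Hypothetical options type, not taken from the project.
interface ArxivSearchOptions {
  maxResults?: number; // assumed default: 5
}

// Build a search URL that asks arXiv for the newest papers matching `query`.
function buildArxivQueryUrl(query: string, options: ArxivSearchOptions = {}): string {
  const maxResults = options.maxResults ?? 5;
  const params: Array<[string, string]> = [
    ["search_query", `all:${query.trim()}`], // search across all fields
    ["start", "0"],
    ["max_results", String(maxResults)],
    ["sortBy", "submittedDate"],
    ["sortOrder", "descending"],
  ];
  const queryString = params
    .map(([key, value]) => `${key}=${encodeURIComponent(value)}`)
    .join("&");
  return `${ARXIV_API}?${queryString}`;
}

// Usage: the response is an Atom XML feed that still needs to be parsed.
const exampleUrl = buildArxivQueryUrl("retrieval augmented generation", { maxResults: 3 });
```

For the Language Bridge feature, the Spanish query would presumably be translated to English before it is passed to a function like this, since most arXiv abstracts are in English.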

Project Structure

Getting Started

Prerequisites

Node.js 18+

npm or pnpm

OpenAI API key

Installation

Clone and install dependencies:

Configure environment variables:

Edit .env.local and add your OpenAI API key:

Run the development server:

Open http://localhost:3000 in your browser.
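Once `.env.local` is in place, server-side code could read the settings with something like the sketch below. The variable names and defaults come from the Environment Variables table in the Configuration section; the `loadOpenAIConfig` helper, the `OpenAIConfig` shape, and the validation rules are assumptions for illustration, not the project's actual code.

```typescript
// Hypothetical config shape; field names are illustrative.
interface OpenAIConfig {
  apiKey: string;
  model: string;
  temperature: number;
  maxTokens: number;
}

// Read OpenAI settings from an environment-like record, applying the
// documented defaults (gpt-4o, 0.2, 2048) when a variable is unset.
function loadOpenAIConfig(env: Record<string, string | undefined>): OpenAIConfig {
  const apiKey = env.OPENAI_API_KEY;
  if (!apiKey) {
    throw new Error("OPENAI_API_KEY is required");
  }

  const temperature = Number(env.OPENAI_TEMPERATURE ?? "0.2");
  if (Number.isNaN(temperature) || temperature < 0 || temperature > 1) {
    throw new Error("OPENAI_TEMPERATURE must be a number between 0 and 1");
  }

  const maxTokens = Number(env.OPENAI_MAX_TOKENS ?? "2048");
  if (!Number.isInteger(maxTokens) || maxTokens <= 0) {
    throw new Error("OPENAI_MAX_TOKENS must be a positive integer");
  }

  return {
    apiKey,
    model: env.OPENAI_MODEL ?? "gpt-4o",
    temperature,
    maxTokens,
  };
}

// Usage in a Next.js server context (assumed): loadOpenAIConfig(process.env)
```

Taking the environment as a parameter rather than reading `process.env` directly keeps the function easy to test and makes a missing key fail fast at startup.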

Configuration

Environment Variables

| Variable | Description | Default |
| --- | --- | --- |
| OPENAI_API_KEY | Your OpenAI API key | Required |
| OPENAI_MODEL | Model to use | gpt-4o |
| OPENAI_TEMPERATURE | Response creativity (0-1) | 0.2 |
| OPENAI_MAX_TOKENS | Maximum response length (tokens) | 2048 |

Customizing Prompts

Prompts are decoupled in src/lib/prompts/system-prompts.ts. To add a new prompt:

Then use it in the ChatContainer:

Best Practices Applied

This project follows best practices from:

React Best Practices - Parallel fetching, Suspense boundaries, memoization

Agentic Patterns - Tools integration, reasoning loops, prompt engineering

UI/UX Guidelines - Accessible components, clean typography, responsive layout
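To make the "tools integration" and "reasoning loops" items concrete, here is a minimal, library-free sketch of a tool-calling loop: at each step the model either requests a tool or returns a final answer, and a step limit prevents endless looping. Everything here (`ModelStep`, `Tool`, `Model`, `runAgent`, the stubbed model) is hypothetical and synchronous for brevity; it is not LangChain's API and not the project's implementation.

```typescript
// A model step is either a request to call a tool or a final answer.
type ModelStep =
  | { kind: "tool"; tool: string; input: string }
  | { kind: "final"; answer: string };

type Tool = (input: string) => string;
type Model = (question: string, observations: string[]) => ModelStep;

// Run the loop: ask the model, execute any requested tool, feed the
// observation back, and stop on a final answer or when maxSteps is reached.
function runAgent(
  question: string,
  model: Model,
  tools: Record<string, Tool>,
  maxSteps: number = 3
): string {
  const observations: string[] = [];
  for (let step = 0; step < maxSteps; step++) {
    const next = model(question, observations);
    if (next.kind === "final") {
      return next.answer;
    }
    const tool = tools[next.tool];
    observations.push(tool ? tool(next.input) : `Unknown tool: ${next.tool}`);
  }
  return "Stopped: step limit reached without a final answer.";
}

// Stubbed model: searches once, then answers from the observation.
const stubModel: Model = (question, observations) =>
  observations.length === 0
    ? { kind: "tool", tool: "arxiv", input: question }
    : { kind: "final", answer: `Based on: ${observations[0]}` };

// Stubbed tool standing in for a real arXiv search.
const stubTools: Record<string, Tool> = {
  arxiv: (input) => `papers about ${input}`,
};

const agentAnswer = runAgent("diffusion models", stubModel, stubTools);
```

In a real agent the model call and the tools would be asynchronous, and the observations would be appended to the conversation that is sent back to the LLM.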

Scripts

License

MIT

Posted Mar 29, 2026

AI agent for whitepaper research via Arxiv. Features LangChain reasoning, streaming chat, multilingual support. Built with Next.js 14 & TypeScript.
