Gesture Vision (Gesture Based Desktop Interaction Utility)

Gesture Flow AI

Engineered a low-latency, computer vision-based desktop utility that replaces traditional mouse and keyboard inputs with real-time hand tracking. Built using Python, Google MediaPipe, and OpenCV, the system leverages a split-hand architecture to map complex human gestures to precise OS-level commands (cursor control, volume mapping, macros). It is wrapped in a custom frameless PyQt6 UI that provides real-time skeletal feedback.

Features

Gesture Flow rethinks gesture-based interaction around a split-hand architecture. Because each system action is assigned to one specific hand, a gesture made with one hand cannot be mistaken for an action that belongs to the other, which removes most "accidental" or overlapping gestures.

Right Hand: Precision Cursor & Clicking

Your right hand is your invisible mouse.

Movement: Simply raise your right hand and extend your Index Finger. The cursor dynamically follows the tip of your finger with high-precision smoothing.

Left Click: Pinch your Thumb and Index Finger together.

Right Click: Pinch your Thumb and Middle Finger together.

Note: Clicks are stateful and debounced, which prevents accidental double-clicks and "spam" clicking.
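The stateful, debounced click described above can be sketched as a small state machine with two thresholds (hysteresis). This is a minimal illustration, not the project's actual code: the `PinchClick` class and both threshold values are assumptions, and the landmark indices are the fingertip indices from MediaPipe's 21-point hand model.

```python
import math
from dataclasses import dataclass

# Fingertip indices in MediaPipe's 21-point hand landmark model.
THUMB_TIP, INDEX_TIP, MIDDLE_TIP = 4, 8, 12


def distance(a, b):
    """Euclidean distance between two (x, y) points."""
    return math.hypot(a[0] - b[0], a[1] - b[1])


@dataclass
class PinchClick:
    """Fires once per pinch: press below `press_at`, re-arm above `release_at`."""
    press_at: float = 0.04    # assumed threshold, in normalized image units
    release_at: float = 0.07  # assumed; larger than press_at to add hysteresis
    pressed: bool = False

    def update(self, gap: float) -> bool:
        """Return True only on the frame where a new pinch begins."""
        if not self.pressed and gap < self.press_at:
            self.pressed = True
            return True
        if self.pressed and gap > self.release_at:
            self.pressed = False
        return False


# Simulated thumb-to-index gaps over seven frames: two distinct pinches.
left_click = PinchClick()
gaps = [0.10, 0.03, 0.02, 0.05, 0.03, 0.09, 0.03]
events = [left_click.update(g) for g in gaps]
```

In a real loop, `gap` would be `distance(landmarks[THUMB_TIP], landmarks[INDEX_TIP])` for a left click and the thumb-to-middle distance for a right click, with one `PinchClick` instance per button. The gap between the two thresholds is what keeps a jittery fingertip from toggling the click on and off.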

Left Hand: Dynamic Volume Control

Your left hand is your physical slider.

Volume Control: Extend your Thumb and Index Finger (in an "L" shape) and bring them into frame.

Widen or narrow the gap between these two fingers to set your system's master volume anywhere from 0% (pinched) to 100% (stretched).
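One plausible way to turn the finger gap into a volume level is a clamped linear interpolation. The sketch below assumes normalized landmark coordinates; the function name `pinch_to_volume` and the `min_gap`/`max_gap` calibration values are hypothetical and would need tuning per camera and hand size.

```python
def pinch_to_volume(gap, min_gap=0.03, max_gap=0.25):
    """Map a thumb-to-index distance to a volume scalar in [0.0, 1.0].

    min_gap and max_gap are assumed calibration values: gaps at or below
    min_gap give 0.0, gaps at or above max_gap give 1.0.
    """
    if max_gap <= min_gap:
        raise ValueError("max_gap must be greater than min_gap")
    t = (gap - min_gap) / (max_gap - min_gap)
    return max(0.0, min(1.0, t))  # clamp so out-of-range gaps stay valid


# Convert a few sample gaps to whole-number percentages.
levels = [round(pinch_to_volume(g) * 100) for g in (0.01, 0.03, 0.14, 0.25, 0.40)]
```

On Windows, a scalar in this range is the form accepted by the Core Audio endpoint volume interface's `SetMasterVolumeLevelScalar`, which Pycaw exposes.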

Both Hands: Global System Shortcuts

Instant Screenshot: Bring both hands into the frame and hold up the Peace Sign (Index + Middle fingers extended) simultaneously. Alternatively, hold up Two Closed Fists. An instant screenshot of your desktop will be captured.
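A two-hand trigger like this can be approximated by checking which fingers are extended on each hand. The sketch below is an assumption-laden simplification: it treats a finger as extended when its tip is above its PIP joint in image coordinates (so it only works for roughly upright hands), it ignores the thumb, and every function name in it is hypothetical. The indices are MediaPipe's tip and PIP landmark indices.

```python
# (tip, PIP joint) landmark indices per finger in MediaPipe's hand model.
FINGERS = {"index": (8, 6), "middle": (12, 10), "ring": (16, 14), "pinky": (20, 18)}


def extended_fingers(landmarks):
    """Names of fingers whose tip is above the PIP joint (image y grows downward)."""
    return {name for name, (tip, pip) in FINGERS.items()
            if landmarks[tip][1] < landmarks[pip][1]}


def is_peace_sign(landmarks):
    return extended_fingers(landmarks) == {"index", "middle"}


def is_fist(landmarks):
    return not extended_fingers(landmarks)


def should_screenshot(hands):
    """True when exactly two hands both show a peace sign, or both show a fist."""
    if len(hands) != 2:
        return False
    return all(is_peace_sign(h) for h in hands) or all(is_fist(h) for h in hands)


def make_hand(up):
    """Build a synthetic 21-point hand for demonstration; `up` names raised fingers."""
    pts = [(0.5, 0.5)] * 21
    for name, (tip, pip) in FINGERS.items():
        pts[tip] = (0.5, 0.3 if name in up else 0.6)
    return pts


peace = make_hand({"index", "middle"})
fist = make_hand(set())
```

Requiring the same pose on both hands in the same frame is what keeps this shortcut from colliding with the single-hand cursor and volume gestures.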

Interactivity

The application doesn't just run in a terminal; it features a frameless glassmorphism UI built with PyQt6. You get real-time, glowing neon skeletal feedback of your hands overlaid on the screen, so you can see exactly what the tracker sees without obstructing your work.

Tech Stack

Python 3.10+

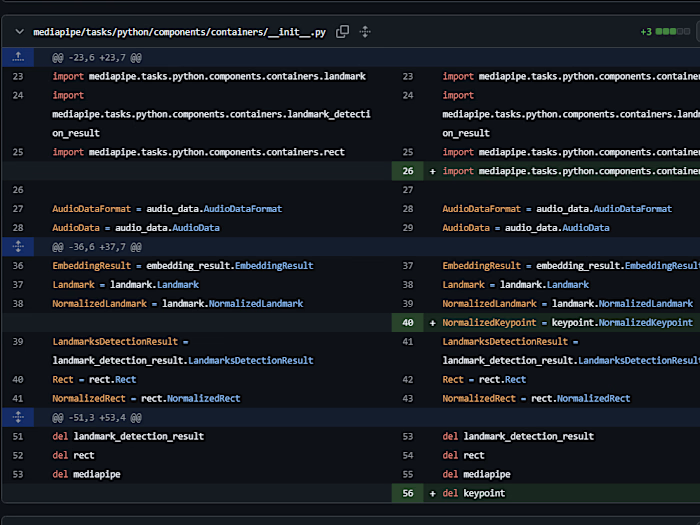

Google MediaPipe - For lightning-fast, high-confidence skeletal hand tracking.

OpenCV (cv2) - For webcam feed processing and drawing the neon skeleton canvas.

PyQt6 - For rendering the transparent, glassmorphic desktop overlay.

PyAutoGUI - For cross-platform mouse control and screenshot capture.

Pycaw - For direct interaction with the Windows Core Audio API for volume control.

Under the Hood

One Euro Filter: The application implements the 1€ filter for mouse cursor smoothing. High-frequency noise (jitter) is eliminated when moving slowly, while lag is minimized during fast hand movements.
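For reference, the 1€ filter is an exponential low-pass filter whose cutoff frequency rises with the estimated speed of the signal. The sketch below follows the published algorithm for a single coordinate; the class layout and the parameter values in the demo are assumptions, not the project's tuned settings.

```python
import math


class OneEuroFilter:
    """1€ filter for one scalar signal (use one instance per axis)."""

    def __init__(self, min_cutoff=1.0, beta=0.0, d_cutoff=1.0):
        self.min_cutoff = min_cutoff  # Hz; lower means less jitter at rest
        self.beta = beta              # speed coefficient; higher means less lag
        self.d_cutoff = d_cutoff      # Hz; cutoff for the derivative estimate
        self.x_prev = None
        self.dx_prev = 0.0
        self.t_prev = None

    @staticmethod
    def _alpha(cutoff, dt):
        """Smoothing factor of a first-order low-pass filter at this cutoff."""
        tau = 1.0 / (2.0 * math.pi * cutoff)
        return 1.0 / (1.0 + tau / dt)

    def __call__(self, t, x):
        """Filter sample x taken at time t (seconds)."""
        if self.x_prev is None:
            self.x_prev, self.t_prev = x, t
            return x
        dt = t - self.t_prev
        if dt <= 0:
            return self.x_prev  # ignore out-of-order or duplicate timestamps
        # Smooth the speed estimate, then use it to adapt the cutoff.
        dx = (x - self.x_prev) / dt
        a_d = self._alpha(self.d_cutoff, dt)
        dx_hat = a_d * dx + (1.0 - a_d) * self.dx_prev
        cutoff = self.min_cutoff + self.beta * abs(dx_hat)
        a = self._alpha(cutoff, dt)
        x_hat = a * x + (1.0 - a) * self.x_prev
        self.x_prev, self.dx_prev, self.t_prev = x_hat, dx_hat, t
        return x_hat


# Demo: a fingertip hovering near x=100 with +/-1 px jitter, sampled at 30 Hz.
smooth_x = OneEuroFilter(min_cutoff=1.0, beta=0.01)
raw = [100.0 + (1.0 if i % 2 else -1.0) for i in range(60)]
smoothed = [smooth_x(i / 30.0, x) for i, x in enumerate(raw)]
```

For a cursor, two independent filters (one for x, one for y) would typically be fed the fingertip position each frame. Tuning usually starts with `beta=0` to pick a `min_cutoff` that removes jitter at rest, then raises `beta` until fast movements stop lagging.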

Asynchronous Threading: To prevent the heavy AI-tracking loop from freezing the graphical interface, the core camera/gesture engine is decoupled from the UI thread using PyQt's QThread.
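The decoupling idea can be illustrated without a GUI toolkit. The sketch below deliberately swaps in the standard library's `threading` and `queue` for QThread and Qt signals so that it runs anywhere; `tracking_loop` and `run_demo` are hypothetical names, and the `time.sleep` call stands in for camera capture and hand-tracking inference.

```python
import queue
import threading
import time


def tracking_loop(results, stop, frame_delay=0.01):
    """Stand-in for the camera/gesture engine: emits one result per 'frame'."""
    frame = 0
    while not stop.is_set():
        time.sleep(frame_delay)  # placeholder for capture + inference cost
        frame += 1
        try:
            results.put_nowait(frame)
        except queue.Full:
            pass  # consumer is behind: drop this frame rather than block


def run_demo(duration=0.2):
    """Stand-in for the UI thread: stays responsive while the worker runs."""
    results = queue.Queue(maxsize=2)
    stop = threading.Event()
    worker = threading.Thread(target=tracking_loop, args=(results, stop), daemon=True)
    worker.start()

    received = []
    deadline = time.monotonic() + duration
    while time.monotonic() < deadline:
        try:
            received.append(results.get(timeout=0.05))
        except queue.Empty:
            pass  # nothing new yet; a real UI would keep handling events here

    stop.set()               # ask the worker to finish its current frame
    worker.join(timeout=1.0)
    return received, worker.is_alive()


received, still_running = run_demo()
```

In a PyQt6 version, the worker would typically be a `QThread` (or a `QObject` moved to one) that emits a `pyqtSignal` per frame, with the overlay updated in a slot on the main thread. The bounded queue here plays the role of that signal connection: the slow side never stalls the fast side.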

Posted May 11, 2026
