Transform Your Docs into a Searchable AI Assistant with RAG ...

An internal platform that turns documents and internal knowledge into a fully searchable AI chat assistant, built on a production-grade RAG stack.

What has been implemented:

📥 Document ingestion: PDF, DOCX, spreadsheets, and scanned images are automatically extracted, cleaned, chunked, and indexed into your knowledge base.

🔍 OCR extraction: Scanned documents and image-based PDFs become fully searchable with no text layer required.

⚡ Hybrid search: BM25 keyword search and semantic vector search run together for best-of-both-worlds retrieval and maximum recall.

🎯 Reranking: Retrieved results are reranked before they reach the LLM, delivering sharper and more relevant answers.

🗄️ Vector DB (supported): Elasticsearch, pgvector, Qdrant, Weaviate, Pinecone.

🏛️ Relational DB (supported): PostgreSQL, SQLite, MySQL.
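
The post does not say how the BM25 and vector result lists are merged in hybrid search. One common option is reciprocal rank fusion (RRF), sketched below as an illustration only. The function name, the document IDs, and the constant `k=60` are assumptions for the example, not details of this platform.

```python
from collections import defaultdict


def reciprocal_rank_fusion(rankings, k=60):
    """Fuse several ranked lists of document IDs into a single ranking.

    Each document scores 1 / (k + rank) for every list it appears in;
    documents ranked highly by both retrievers rise to the top.
    """
    scores = defaultdict(float)
    for ranking in rankings:
        for rank, doc_id in enumerate(ranking, start=1):
            scores[doc_id] += 1.0 / (k + rank)
    # Sort by descending score; break ties by ID for a stable order.
    return sorted(scores, key=lambda doc_id: (-scores[doc_id], doc_id))


# Hypothetical result lists from the two retrievers.
bm25_hits = ["doc_a", "doc_b", "doc_c"]
vector_hits = ["doc_c", "doc_a", "doc_d"]

fused = reciprocal_rank_fusion([bm25_hits, vector_hits])
print(fused)
```

RRF uses only rank positions, so BM25 scores and vector similarities never need to be normalized onto a common scale. The fused list is what a reranker would then reorder before the top results reach the LLM.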

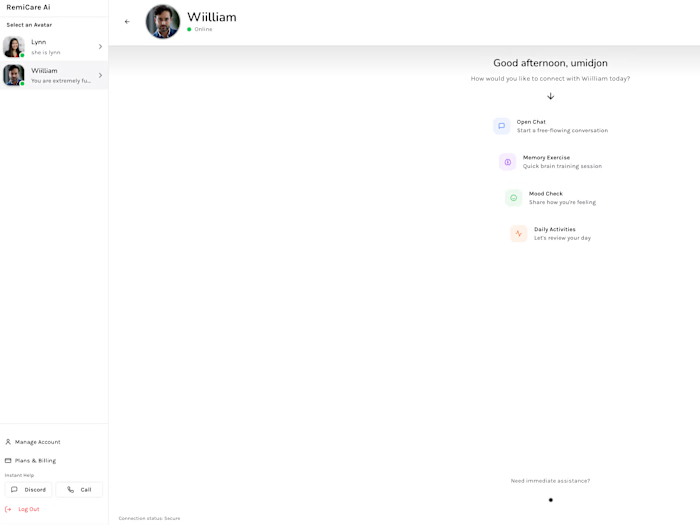

💬 Chat webapp: A polished, self-hosted frontend connected to any LLM: OpenAI, Anthropic, Ollama, or your own model.

🛠️ Admin dashboard: Manage users, roles, knowledge bases, retrieval settings, and model configurations from one place.

📊 Observability: Track per-user token usage, response latency, and cost.

🐳 Fully Dockerized: Clean, reproducible, and portable deployments on any Linux server or VPS.
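
As a rough illustration of the observability item, per-user tracking can be reduced to aggregating one record per request. This is a minimal sketch, not the platform's actual schema: the `UsageRecord` fields, the `summarize` helper, and the per-token prices are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class UsageRecord:
    user_id: str
    prompt_tokens: int
    completion_tokens: int
    latency_ms: float


# Hypothetical prices in USD per 1,000 tokens; real prices depend on the model.
PRICE_PER_1K = {"prompt": 0.001, "completion": 0.002}


def cost_usd(record, prices=PRICE_PER_1K):
    """Estimate the cost of one request from its token counts."""
    return (record.prompt_tokens / 1000 * prices["prompt"]
            + record.completion_tokens / 1000 * prices["completion"])


def summarize(records):
    """Aggregate tokens, cost, and average latency per user."""
    totals = {}
    for r in records:
        t = totals.setdefault(
            r.user_id,
            {"requests": 0, "tokens": 0, "cost_usd": 0.0, "latency_ms_sum": 0.0},
        )
        t["requests"] += 1
        t["tokens"] += r.prompt_tokens + r.completion_tokens
        t["cost_usd"] += cost_usd(r)
        t["latency_ms_sum"] += r.latency_ms
    return {
        user: {
            "requests": t["requests"],
            "tokens": t["tokens"],
            "cost_usd": t["cost_usd"],
            "avg_latency_ms": t["latency_ms_sum"] / t["requests"],
        }
        for user, t in totals.items()
    }


# Made-up sample records for illustration.
records = [
    UsageRecord("alice", 1000, 500, 200.0),
    UsageRecord("alice", 2000, 1000, 400.0),
    UsageRecord("bob", 500, 0, 100.0),
]
summary = summarize(records)
print(summary)
```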


Posted Apr 12, 2026
