Development of NeuroScale AI Inference Platform

NeuroScale Platform

A production-hardened AI inference platform that solves configuration drift, the Backstage adoption paradox, and the gap between AI code generation and governed production deployment — with 21 verified checks across 6 milestones.

Proof: smoke test output (single command, any machine)

Executive Summary: NeuroScale is a self-service AI inference platform on Kubernetes. A developer fills in a Backstage form, the platform creates a pull request, ArgoCD deploys it, and a production-grade KServe inference endpoint is live — with cost attribution, drift control, and policy guardrails enforced automatically at every stage.

Table of Contents

1. Why NeuroScale Exists: Addressing 2026 ML Infrastructure Pain Signals

The 2026 platform engineering pain signals this repo directly addresses:

Pain Industry Signal NeuroScale Answer Complexity / cognitive load Developers shouldn't need Kubernetes expertise to deploy a model Backstage Golden Path template — one form, one PR Reliability / drift Manual cluster changes break overnight ArgoCD GitOps — Git is the source of truth; drift is auto-corrected Governance & security Unsafe configs reach production Kyverno admission policies + CI policy simulation Cost waste No resource bounds = unbounded spend Required requests/limits +

owner/cost-center labels enforced before merge + live OpenCost showback Scale friction Adding a new service requires editing GitOps boilerplate ApplicationSet auto-discovers new model folders — zero registration overhead2. Architecture: Control Plane and Data Plane

Component interaction diagram

Loading

Mermaid detail: Control Plane and Data Plane

Loading

3. System Flow: How Everything Connects

3.1 GitOps: How Deployments Are Triggered

GitOps in NeuroScale is implemented with ArgoCD app-of-apps + ApplicationSet. There is one root Application (

bootstrap/root-app.yaml) that watches infrastructure/apps/. That directory contains both static Application manifests for platform infrastructure and one ApplicationSet that auto-discovers model endpoint folders.Trigger chain (new model deployment):

Loading

Key design decision — why ApplicationSet instead of per-app Application files:

Previously each new model required a manual

infrastructure/apps/<name>-app.yaml file. This was purely mechanical boilerplate.The ApplicationSet replaces all those files. Drop a folder under

apps/, and ArgoCD discovers and deploys it automatically.The platform scales to hundreds of services without any GitOps layer changes.

Automated sync settings (from

bootstrap/root-app.yaml):selfHeal: true means ArgoCD continuously reconciles. prune: true means resources removed from Git are removed from the cluster.3.2 Backstage: How Services Are Cataloged and Scaffolded

Backstage serves two functions in NeuroScale:

a) Service Catalog — every deployed inference service is a cataloged Component with ownership metadata. The

owner and cost-center labels required by Kyverno feed directly into catalog attribution.b) Golden Path Scaffolder — the

KServe model endpoint template at backstage/templates/model-endpoint/template.yaml generates the file required by the GitOps pipeline: Loading

The critical non-obvious piece: Backstage is not the deployment engine. It is the UX that generates Git artifacts. ArgoCD is the deployment engine. This separation means Backstage can be down without affecting running inference endpoints.

3.3 KServe: How Inference Is Handled

KServe operates in serverless mode (Knative-based) in this platform. The request path for a deployed

InferenceService named demo-iris-2 is: Loading

Why Kourier instead of Istio: The cluster runs on local k3d with constrained RAM. Istio adds ~1 GB memory overhead. Kourier is a minimal Envoy-based gateway that Knative supports natively and costs ~100 MB. The ingress config patch at

infrastructure/serving-stack/patches/inferenceservice-config-ingress.yaml sets disableIstioVirtualHost: true to signal this choice to KServe.ClusterServingRuntime:

infrastructure/kserve/sklearn-runtime.yaml defines a reusable runtime that all sklearn-based InferenceService objects reference. This separates how to serve from what to serve, allowing the runtime image to be patched in one place.4. Repository Map

5. Milestone status: what's verified and what breaks it

Milestone Business problem eliminated Verified by smoke test A — GitOps spine Configuration drift — any manual

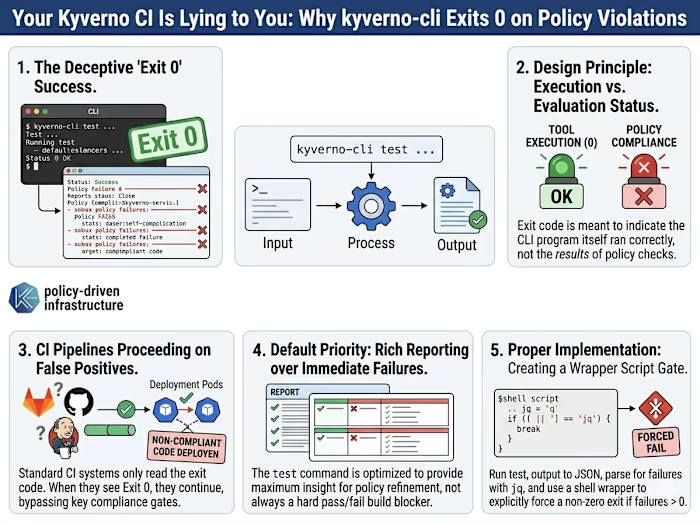

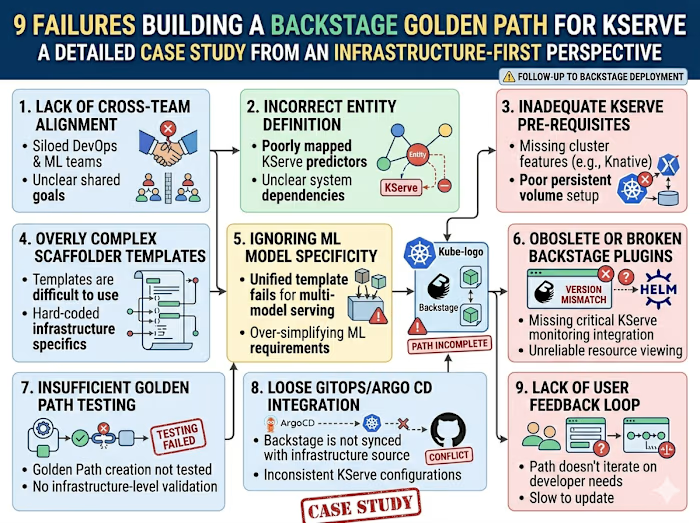

kubectl change is auto-reverted; infrastructure is recoverable via git revert [✓ PASS] Drift self-heal: nginx-test recreated in ~20s B — AI serving baseline Inference with no deployment path — KServe is GitOps-managed; a new model is a PR, not a kubectl command [✓ PASS] InferenceServices: 2/2 Ready=True · [✓ PASS] Inference request: demo-iris-2 → {"predictions":[1,1]} C — Golden Path Backstage adoption paradox — developers interact with a form, not Kubernetes YAML; the platform handles the rest [✓ PASS] demo-iris-2 InferenceService exists (scaffolder output) D — Guardrails Security theater — policies that appear to work but don't block anything; the kyverno-cli false-green was undetected for 2 weeks before being fixed [✓ PASS] Non-compliant InferenceService correctly denied E — Cost + portability No cost accountability — unbounded resource consumption with no ownership trail; any engineer can reproduce the full platform on a laptop in one command [~ SKIP] Resource-delta PR comment + bootstrap script validated in CI F — Production hardening Scale friction — adding a new model required manual GitOps boilerplate; ApplicationSet eliminates that entirely [✓ PASS] ApplicationSet generates 3 child Applications · [✓ PASS] OpenCost deployment healthyWhat each milestone cost to build (the failures, not the happy path)

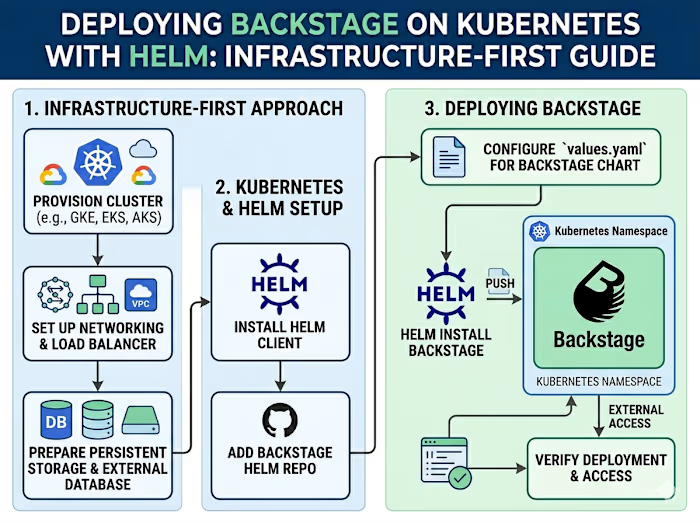

Milestone Hardest failure Time lost Business impact A — GitOps spine ArgoCD

Unknown state caused by repo-server CrashLoopBackOff — looks like a manifest error but is a controller connectivity failure 40 min Zero drift correction during outage window B — KServe serving Default KServe config assumes Istio; disableIstioVirtualHost is not disclosed in getting-started docs 3 hours All InferenceService creation blocked cluster-wide C — Backstage Golden Path 9 distinct failures; CI false-green on Kyverno policy violations for 2 weeks ~6 hours PR-time enforcement was silently not enforcing D — Guardrails kyverno-cli apply exits 0 on violations; $PIPESTATUS[0] required 2 weeks undetected Guardrails existed but did not enforce E — Cost + portability $patch: delete in a Kustomize file deleted the live CRD cluster-wide; all InferenceServices gone in seconds 4 min recovery SEV-1 equivalent: all inference endpoints deleted simultaneously F — Production hardening Backstage dangerouslyDisableDefaultAuthPolicy vs dangerouslyAllowOutsideDevelopment — these are different with different security implications 1 hour diagnosis Silent security exposure in production profileFull Reality Check documentation for each milestone: 7. Reality Check Documentation

Full Backstage CrashLoopBackOff incident postmortem: infrastructure/INCIDENT_BACKSTAGE_CRASHLOOP_RCA.md

6. Quickstart: Running the Demo Locally

Prerequisites

Docker Desktop (or Rancher Desktop)

k3d installedkubectl installedhelm installedFirst-time setup on any laptop

The script checks all prerequisites, creates the k3d cluster, installs ArgoCD, and prints the admin password and port-forward commands when finished.

Resume an existing cluster (daily startup)

Verify the platform is healthy

Morning health gate (manual checks)

Open all UIs at once (new in Milestone F)

This starts all four port-forwards as background processes and prints a URL table with credentials. Press Ctrl+C to stop all tunnels.

Open required tunnels (individually)

Demo: GitOps drift self-heal (Milestone A)

Demo: Inference request (Milestone B / C)

Demo: Policy block (Milestone D)

7. Reality Check Documentation

This platform was not built on the happy path. Every milestone hit real failures. The docs below document what broke, the exact terminal output, the root cause, and the business impact.

Milestone Reality Check Document Key Failures Documented A — GitOps Spine docs/REALITY_CHECK_MILESTONE_1_GITOPS_SPINE.md ArgoCD repo-server

connection refused; Argo Unknown comparison state; app stuck not syncing B — KServe Serving docs/REALITY_CHECK_MILESTONE_2_KSERVE_SERVING.md Istio vs Kourier ingress mismatch; kube-rbac-proxy ImagePullBackOff; Knative CRD rendering conflict C — Golden Path docs/REALITY_CHECK_MILESTONE_3_GOLDEN_PATH.md Backstage CrashLoopBackOff (Helm values mis-nesting); blank /create/actions (401 on scaffolder); PR merged but app stayed OutOfSync D — Guardrails docs/REALITY_CHECK_MILESTONE_4_GUARDRAILS.md InferenceService CRD removed by patch; Kyverno label name mismatch; CI policy simulation false-green (fixed in Milestone E) E — Cost Proxy + Portability docs/REALITY_CHECK_MILESTONE_5_COST_PROXY.md CI false-green root cause + fix; cost proxy design trade-offs; bootstrap script decisions F — Production Hardening docs/REALITY_CHECK_MILESTONE_6_PRODUCTION_HARDENING.md ApplicationSet design; namespace quota reasoning; OpenCost label strategy; multi-env Backstage values; guest auth vs disabled authFull incident postmortem for the Backstage CrashLoopBackOff: infrastructure/INCIDENT_BACKSTAGE_CRASHLOOP_RCA.md

8. Guardrails: What Gets Blocked and Why

Admission-time enforcement (Kyverno)

Policy What It Blocks Business Reason

require-standard-labels-inferenceservice InferenceService without owner + cost-center labels Cost attribution is impossible without ownership metadata; OpenCost relies on these labels require-standard-labels-deployment Deployment without owner + cost-center labels Same attribution requirement for non-inference workloads require-resource-requests-limits Deployment without CPU/memory requests and limits Unbounded resources cause node contention and unpredictable cost disallow-latest-image-tag Deployment with :latest image Non-reproducible rollouts; breaks rollback guarantees disallow-root-containers Deployment containers without runAsNonRoot: true Container breakout from root containers can escalate to host accessNamespace-level governance (ResourceQuota + LimitRange)

Resource Request cap Limit cap Reason CPU (aggregate) 4 cores 8 cores Prevents unbounded consumption on a shared cluster Memory (aggregate) 8 Gi 16 Gi Prevents OOM cascades across all namespaced workloads Pods — 20 Bounds KServe's hidden pod proliferation (1 ISVC → ~5 pods) InferenceServices — 5 Bounds total active model endpoints in default namespace

PR-time enforcement (CI)

Check Tool What It Catches Schema validation

kubeconform Malformed YAML, wrong API versions, missing required fields Policy simulation kyverno-cli apply + stdout check Policy violations against actual rendered manifests before merge (dual exit-code + stdout check guards against false-greens) Helm rendering helm template + render_backstage.sh Helm values hierarchy bugs (the exact failure class from the Backstage RCA) Resource delta Python + PyYAML CPU/memory requests in changed apps/ manifests; posts as PR comment and GitHub Actions job summary9. Operational Runbook: ArgoCD Sync Recovery, KServe Restart, and Backstage Token Refresh

See

docs/archive/MILESTONE_C_POSTMORTEM.md for the full runbook.Common recovery commands:

10. Platform Architecture: Key Design Decisions and Where They Live

Question Where to Point "Walk me through the GitOps flow" Section 3.1 +

bootstrap/root-app.yaml + infrastructure/apps/model-endpoints-appset.yaml "How does Backstage trigger a deployment?" Section 3.2 + backstage/templates/model-endpoint/template.yaml "How does KServe handle a request?" Section 3.3 + infrastructure/kserve/sklearn-runtime.yaml "How do you prevent bad configs from reaching prod?" Section 8 + infrastructure/kyverno/policies/ "How do you prevent containers running as root?" infrastructure/kyverno/policies/disallow-root-containers.yaml "How do you prevent runaway resource consumption?" infrastructure/namespaces/default/resource-quota.yaml + limit-range.yaml "How do you attribute costs to teams?" owner/cost-center labels enforced by Kyverno + OpenCost dashboard at localhost:9090 "Show me a real incident you've debugged" infrastructure/INCIDENT_BACKSTAGE_CRASHLOOP_RCA.md "What failed and what did you learn?" docs/REALITY_CHECK_MILESTONE_*.md "Why Kourier instead of Istio?" Section 3.3 + docs/REALITY_CHECK_MILESTONE_2_KSERVE_SERVING.md "How can I verify the platform works on my laptop?" scripts/bootstrap.sh + scripts/smoke-test.sh + scripts/port-forward-all.sh "How do you scale from 3 models to 300?" ApplicationSet in infrastructure/apps/model-endpoints-appset.yaml — zero GitOps boilerplate per model "What's different between dev and prod Backstage?" infrastructure/backstage/values.yaml vs values-prod.yaml — replicas, auth provider, limits, probe thresholds "How would you promote this to a real cloud cluster?" docs/CLOUD_PROMOTION_GUIDE.md — phase-by-phase: EKS/GKE Terraform, ArgoCD bootstrap, ingress swap, wildcard DNS, TLS (cert-manager or ACM), production Backstage, OpenCost billing APINote: This repo runs on local k3d (zero-cost, fully reproducible). The cloud promotion path — EKS/GKE Terraform, ingress swap, DNS, TLS, production Backstage — is documented step-by-step indocs/CLOUD_PROMOTION_GUIDE.md. The application manifests require no changes to run on a cloud cluster; only the cluster and network layer changes.

Like this project

Posted May 4, 2026

Built & documented NeuroScale, a production AI inference platform on Kubernetes. 5+ technical articles, content reviewed by a Backstage core maintainer.