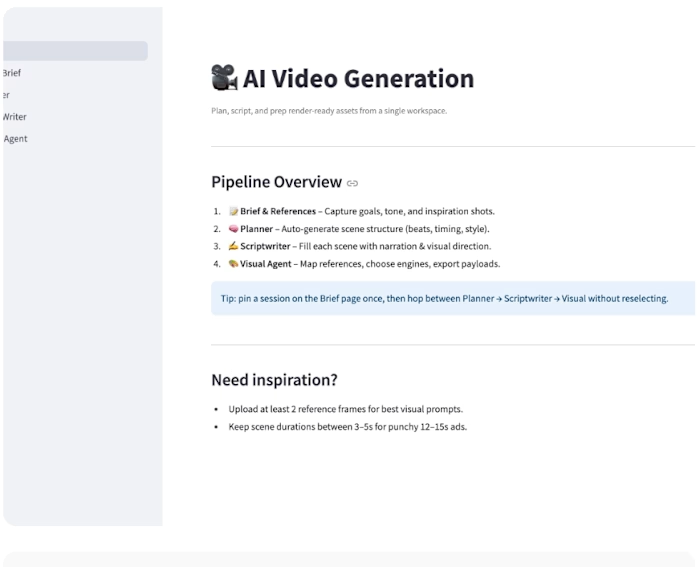

I designed and delivered an end-to-end MVP for a real-time AI talking avatar system, where live browser speech is converted into an AI-generated response and rendered through a lip-synced avatar video on GPU hardware.

The objective was to validate technical feasibility, measure latency characteristics, and assess whether the interaction is perceived as real-time before committing to production hardening. The system integrates speech-to-text, LLM-based reasoning, text-to-speech, and video synthesis into a single runnable pipeline deployed on an NVIDIA A100 GPU.
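The four stages can be pictured as a chain in which each stage consumes the previous stage's output, with per-stage timing to support the latency goal. The sketch below is a minimal illustration under stated assumptions, not the project's actual implementation: the `AvatarPipeline` class and the `fake_*` stage functions are hypothetical placeholders standing in for real speech-to-text, LLM, text-to-speech, and lip-sync models.

```python
import time
from dataclasses import dataclass, field
from typing import Any, Callable, Dict, List, Tuple

# Hypothetical stage signature: takes the previous stage's output, returns the next stage's input.
Stage = Callable[[Any], Any]


@dataclass
class AvatarPipeline:
    """Hypothetical sequential STT -> LLM -> TTS -> video pipeline with per-stage timing."""
    stages: List[Tuple[str, Stage]]
    timings_ms: Dict[str, float] = field(default_factory=dict)

    def run(self, audio_chunk: bytes) -> Any:
        payload: Any = audio_chunk
        self.timings_ms.clear()
        for name, stage in self.stages:
            start = time.perf_counter()
            payload = stage(payload)
            # Record wall-clock time spent in this stage, in milliseconds.
            self.timings_ms[name] = (time.perf_counter() - start) * 1000.0
        return payload

    def total_latency_ms(self) -> float:
        # End-to-end latency is the sum of the sequential stage times.
        return sum(self.timings_ms.values())


# Placeholder stages (assumptions); real models would be called here instead.
def fake_stt(audio: bytes) -> str:
    return audio.decode("utf-8")  # pretend the audio bytes are already a transcript


def fake_llm(text: str) -> str:
    return f"You said: {text}"  # stand-in for an LLM-generated reply


def fake_tts(reply: str) -> bytes:
    return reply.encode("utf-8")  # stand-in for synthesized speech audio


def fake_video(speech: bytes) -> List[str]:
    # One placeholder "frame" per byte of speech; a lip-sync model would emit image frames.
    return [f"frame_{i}" for i in range(len(speech))]


if __name__ == "__main__":
    pipeline = AvatarPipeline(stages=[
        ("stt", fake_stt), ("llm", fake_llm), ("tts", fake_tts), ("video", fake_video),
    ])
    frames = pipeline.run(b"hello")
    print(len(frames), pipeline.timings_ms)
```

Recording per-stage time makes it easy to see which stage dominates end-to-end latency. A real-time system would likely stream partial results between stages instead of running them strictly one after another; this sketch does not attempt that.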

Posted Feb 3, 2026

Timeline

Dec 30, 2025 - Jan 30, 2026