Development of ARGUS Context-Aware AI Assistant

👁️ ARGUS

"Every other AI waits to be asked. ARGUS has already been watching."

Gemini Live Agent Challenge: UI Navigator Category

Built with Gemini 2.0 Flash · Google Cloud Run · Firestore · FastAPI · PyAutoGUI

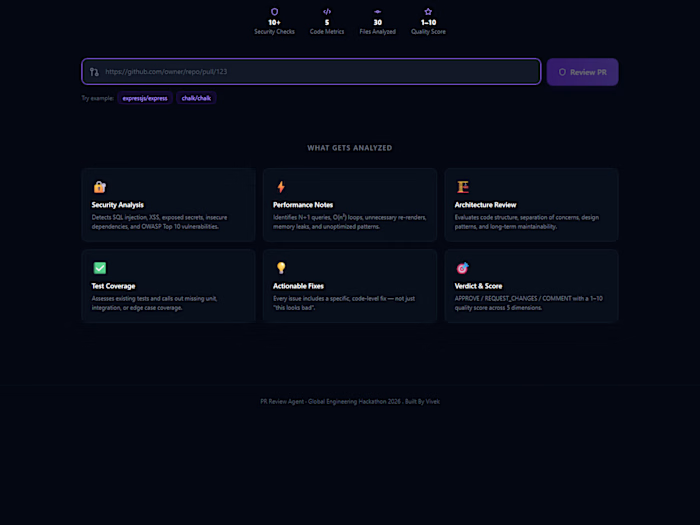

What Is ARGUS?

Most AI agents are reactive. You open them, explain your problem from scratch, and wait for a response. Every single time.

ARGUS is different. It's ambient.

ARGUS silently watches your screen every 10 seconds, builds a rolling 1-minute context window of exactly what you've been doing, and when you say "ARGUS" — it already knows your problem before you finish explaining it.

No copy-pasting error messages. No explaining which file you were in. No rebuilding context from scratch. ARGUS was there. It saw everything.
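The rolling 1-minute window described above can be sketched with a timestamped queue. This is a minimal in-memory illustration only: the project stores its window in Firestore, and the names `Observation`, `RollingContext`, and `summary` are hypothetical, not taken from the ARGUS codebase.

```python
from collections import deque
from dataclasses import dataclass
from typing import List, Optional
import time


@dataclass
class Observation:
    timestamp: float  # seconds since the epoch
    summary: str      # hypothetical: short description of one captured frame


class RollingContext:
    """Keep only observations newer than `window_seconds`."""

    def __init__(self, window_seconds: float = 60.0):
        self.window_seconds = window_seconds
        self._items = deque()

    def add(self, summary: str, now: Optional[float] = None) -> None:
        now = time.time() if now is None else now
        self._items.append(Observation(now, summary))
        self._prune(now)

    def snapshot(self, now: Optional[float] = None) -> List[str]:
        """Return the summaries still inside the window, oldest first."""
        now = time.time() if now is None else now
        self._prune(now)
        return [obs.summary for obs in self._items]

    def _prune(self, now: float) -> None:
        # Observations are appended in time order, so expired ones sit at the left.
        cutoff = now - self.window_seconds
        while self._items and self._items[0].timestamp < cutoff:
            self._items.popleft()
```

With one capture every 10 seconds, a 60-second window holds roughly six observations at any time, which keeps the context passed to the model small.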

The Demo Scenario

A developer has been debugging for 1 minute. Three failed attempts. Stack Overflow tabs everywhere. They lean back and say: "ARGUS... help me."

ARGUS responds: "I've been watching. You hit the same null reference error twice, at 14:32 and 14:38. I also saw you visit three Stack Overflow pages on async handlers. The fix is in your useEffect cleanup function. Want me to apply it?"

The mouse moves on its own. The fix is applied. Tests pass.

Architecture

Why ARGUS Is Different

| Feature | Traditional AI Agents | ARGUS |
| --- | --- | --- |
| Activation | You open it and explain | Say "ARGUS" and it already knows |
| Context | You provide it manually | Built automatically over 1 minute |
| Screen Reading | DOM scraping / APIs | Pure pixel vision, works on ANY app |
| Execution | Simulated / sandboxed | Real mouse movement, real clicks |
| Memory | None between turns | Rolling Firestore context window |
| Interruption | Turn-based | Say "stop" mid-action |
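The "stop" interruption in the comparison above implies that execution can be cancelled between steps. One common way to get that behaviour is a shared stop flag checked before each action. The sketch below assumes a voice listener on another thread would set the flag; `run_actions` and the sample actions are hypothetical and do not come from the ARGUS source.

```python
import threading
from typing import Callable, Iterable


def run_actions(actions: Iterable[Callable[[], None]],
                stop_event: threading.Event) -> int:
    """Run actions in order and return how many completed.

    The stop flag is checked before every action, so a "stop" request
    takes effect at the next step boundary rather than mid-click.
    """
    completed = 0
    for action in actions:
        if stop_event.is_set():
            break
        action()
        completed += 1
    return completed


if __name__ == "__main__":
    stop = threading.Event()
    log = []
    plan = [
        lambda: log.append("move"),
        lambda: (log.append("click"), stop.set()),  # simulates "stop" arriving here
        lambda: log.append("type"),
    ]
    print(run_actions(plan, stop), log)
```

Keeping each action small (one move, one click) shortens the delay between the spoken command and the point where execution actually halts.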

Tech Stack

| Layer | Technology | Purpose |
| --- | --- | --- |
| AI Brain | Gemini 2.0 Flash | Vision analysis, context reasoning, coordinate detection |
| Backend | FastAPI + Cloud Run | WebSocket orchestration, hosted on GCP |
| Context DB | Google Cloud Firestore | Persistent rolling 1-minute observation window |
| Audit Log | Google Cloud Storage | Screenshot history and action log |
| Screen Eyes | Python mss | Ultra-fast screenshot capture |
| Pixel Filter | NumPy diff | Only sends changed frames to API, saves quota |
| Hands | PyAutoGUI | Real mouse movement and keyboard execution |
| Voice | SpeechRecognition | Wake word detection and command capture |
| Transport | WebSockets | Persistent real-time client-server connection |

Project Structure

Setup & Installation

Prerequisites

Python 3.10+

Windows 10/11

Gemini API key (free at aistudio.google.com)

Google Cloud account (free $300 credit)

1. Clone the repo

2. Create virtual environment

3. Install dependencies

4. Configure environment

Create a .env file in the root folder:

5. Run verification tests

6. Launch ARGUS

This opens the backend server in a separate window and starts the client automatically.

How To Use

No microphone? Type argus <your command> directly in the terminal.

Emergency stop: Moving the mouse to the top-left corner of the screen instantly stops all actions (PyAutoGUI failsafe).
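For the typed fallback, the client has to separate the wake word from the command that follows it. A minimal sketch of that parsing step is shown here; `extract_command` and `WAKE_WORD` are hypothetical names, and the real client may handle input differently.

```python
from typing import Optional

WAKE_WORD = "argus"  # assumed to match the spoken wake word


def extract_command(line: str, wake_word: str = WAKE_WORD) -> Optional[str]:
    """Return the text after the wake word, or None if the line does not start with it."""
    words = line.strip().split(maxsplit=1)
    # Ignore case and trailing punctuation so "ARGUS," also counts.
    if not words or words[0].lower().rstrip(",.!:") != wake_word:
        return None
    return words[1].strip() if len(words) > 1 else ""
```

Returning an empty string for a bare wake word lets the caller distinguish "ARGUS was addressed with no command" from "this line was not meant for ARGUS".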

Google Cloud Deployment

Deploy Backend to Cloud Run

Update client to use Cloud Run URL

After deployment, update your .env:

GCP Services Used

Cloud Run — Serverless backend hosting

Cloud Firestore — Context memory database

Cloud Storage — Screenshot audit trail

How It Works — The Execution Loop

Findings & Learnings

What worked exceptionally well:

The pixel diff filter was critical: it reduced API calls by ~80% compared with naively sending a screenshot every 10 seconds, making the free tier viable for a full demo

Gemini 2.0 Flash's vision accuracy for coordinate detection exceeded expectations — it correctly identifies UI elements even in complex, cluttered screens

The rolling context window approach (Firestore + timestamp filtering) proved more reliable than in-memory storage for the 1-minute window
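The pixel diff filter from the first point above can be sketched in a few lines of NumPy. This version assumes each frame has already been converted to an H×W×C `uint8` array; the function name and both thresholds are illustrative placeholders, not the tuned values used in the project.

```python
import numpy as np


def frame_changed(previous: np.ndarray,
                  current: np.ndarray,
                  threshold: float = 0.02,
                  noise_tolerance: int = 10) -> bool:
    """Return True when more than `threshold` of the pixels changed.

    Frames are expected as H x W x C uint8 arrays of identical shape.
    """
    if previous.shape != current.shape:
        return True  # resolution change: treat as a new scene
    # Cast to a signed type so uint8 subtraction cannot wrap around.
    delta = np.abs(current.astype(np.int16) - previous.astype(np.int16))
    # A pixel counts as changed if any channel moved beyond the noise tolerance.
    changed = (delta > noise_tolerance).any(axis=-1)
    return bool(changed.mean() > threshold)
```

Only frames for which `frame_changed` returns True would be sent to the API. Raising `threshold` saves more quota but risks missing small updates such as a single new line in a terminal.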

What was challenging:

Balancing screenshot frequency against API quota on the free tier required careful tuning of the diff threshold

PyAutoGUI coordinate system differs from Gemini's perceived coordinates on high-DPI screens — required scaling compensation

WebSocket reconnection logic needed careful handling to avoid losing the context window on network drops
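The high-DPI scaling compensation mentioned above amounts to mapping a point from the screenshot's pixel grid onto the coordinate space the mouse library uses. The source does not describe the exact method, so the following is a sketch under that assumption; `to_screen_coords` is a hypothetical helper, and the screen size would typically come from something like `pyautogui.size()`.

```python
from typing import Tuple


def to_screen_coords(x: float, y: float,
                     image_size: Tuple[int, int],
                     screen_size: Tuple[int, int]) -> Tuple[int, int]:
    """Map a point in screenshot pixels to mouse coordinates.

    image_size:  (width, height) of the screenshot the model saw
    screen_size: (width, height) reported by the mouse library
    """
    img_w, img_h = image_size
    scr_w, scr_h = screen_size
    sx = round(x * scr_w / img_w)
    sy = round(y * scr_h / img_h)
    # Clamp so a slightly out-of-range prediction cannot leave the screen.
    return min(max(sx, 0), scr_w - 1), min(max(sy, 0), scr_h - 1)
```

For example, a click predicted at (960, 540) in a 1920×1080 screenshot maps to (1920, 1080) on a 3840×2160 coordinate space.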

What we'd build next:

Multi-monitor support

Persistent long-term memory (beyond 1 minute) using Vertex AI embeddings

Native Gemini Live API streaming for true real-time interruption handling

Mobile screen support via ADB

Proof of Google Cloud Deployment

See /demo-proof-vid/gcp_proof.mp4 in this repository: a screen recording showing the ARGUS backend running live on Google Cloud Run with console logs visible.

Direct link to Cloud Run deployment:

https://8080-cs-ea032a80-41da-48fc-ac6b-a77c111c1936.cs-asia-southeast1-palm.cloudshell.dev/health

License

MIT License — see LICENSE file.

Built For

Gemini Live Agent Challenge 2026

Category: UI Navigator

Tagline: "It never stops watching."

ARGUS — In Greek mythology, Argus Panoptes had 100 eyes and never slept.

Posted Mar 17, 2026

Developed ARGUS for context-aware AI assistance.