pro

Kelvin Desman

Mind of an engineer and the heart of an entrepreneur.

- $50k+

- Earned

- 1x

- Hired

- 10

- Followers

For every feature I shipped with an AI agent, I shipped more than two fixes.

1,000+ PRs. 84 days. Solo. The throughput was real — but 360 of those PRs were fixes against 150 features. That ratio is the part nobody puts in their recap post.

The core problem: the agent is a 20× author, not a 20× reviewer. I had no leverage on verification — just guards built from past failures, useless against anything new.

What I'd change: second agent for adversarial review only, and changes small enough that being wrong is cheap.

Agentic engineering doesn't remove the hard part. It moves it.

1

27

Built a RAG job-hunting agent for Indonesian developers applying to remote USD jobs.

28 days of real usage. Here's what I learned.

The problem: applying to 50+ remote jobs manually is exhausting. Wrong roles, no resume tailoring, hours wasted per application.

The workflow now:

One message → agent retrieves relevant jobs → ranks them → generates tailored resume + cover letter → user approves or skips.

Stack (still running $0 free tier):

· Embeddings: Cloudflare Workers AI qwen3-embedding-0.6b

· Vector search: Cloudflare Vectorize, cosine similarity

· Database: Cloudflare D1 / SQLite, one batched query

· Semantic cache: Cloudflare KV, cosine ≥0.92 threshold

· LLM: JSON-mode slot extraction + reply

Only variable cost: ~$0.002/turn LLM usage

28-day numbers:

· 235 sessions

· 329 return visits

· 743 agent turns

· 357 job cards surfaced → 46 approved applications

~20 approvals per 100 sessions (target was 10–15)

Total LLM cost: ~$1–4

Example matches from one natural-language query:

Linear — 96/100 · DatAds — 95/100 · Spotify — 100/100

Two decisions I'd make again:

· The scorer is deterministic — pure function, 7 weighted components, 0–100. No model drift. Users see exactly why a job scored highly. I didn't trust LLM ranking for something this consequential.

· There's a regex fallback behind every LLM extraction. If the model fails or times out, the conversation continues. Users never hit a dead end.

What's next:

· Tracking downstream outcomes — recruiter replies, interviews Detecting expired/duplicate posts more aggressively Calibrating scoring weights from real approval behavior

· The pipeline runs cheaply and generates real approvals. The next challenge is proving it improves actual job outcomes — not just application volume.

This is the kind of system I enjoy building: AI-native product on constrained infra, with real users and measurable north-star metrics.

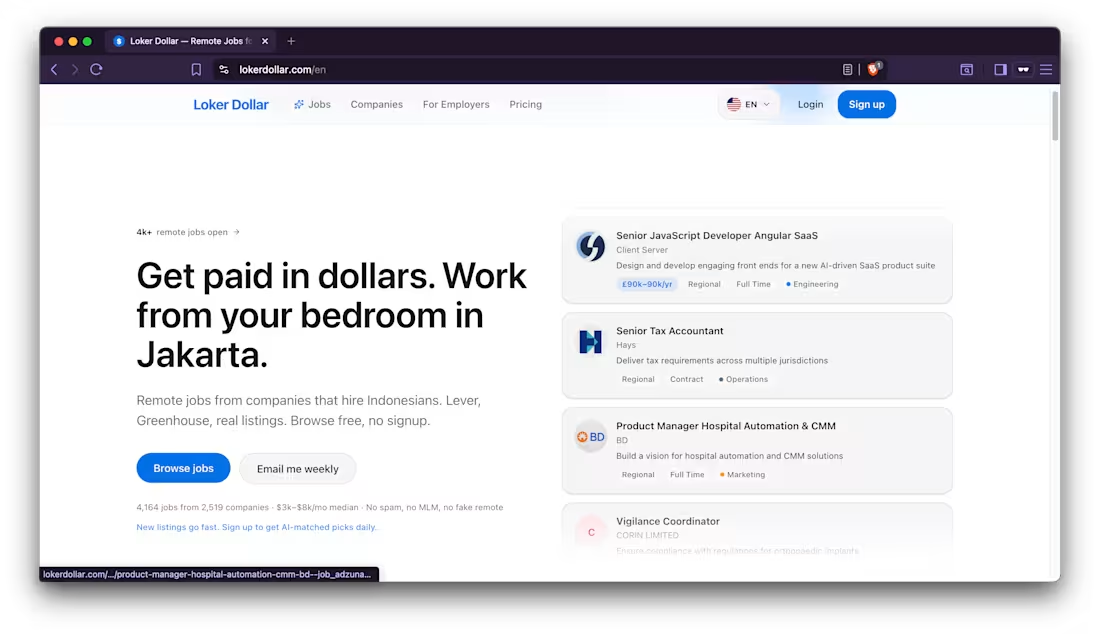

If you're working on something similar — hiring pipelines, talent matching, AI agents for job seekers — I'd enjoy comparing notes. And if you're an Indonesian dev hunting remote USD roles, the product is live at lokerdollar.com (http://lokerdollar.com).

What would you build differently? 👇

#RAG (https://www.linkedin.com/search/results/all/?keywords=%23rag&origin=HASH_TAG_FROM_FEED) #CloudflareWorkers (https://www.linkedin.com/search/results/all/?keywords=%23cloudflareworkers&origin=HASH_TAG_FROM_FEED) #BuildInPublic (https://www.linkedin.com/search/results/all/?keywords=%23buildinpublic&origin=HASH_TAG_FROM_FEED) #AIEngineering (https://www.linkedin.com/search/results/all/?keywords=%23aiengineering&origin=HASH_TAG_FROM_FEED) #RemoteJobs (https://www.linkedin.com/search/results/all/?keywords=%23remotejobs&origin=HASH_TAG_FROM_FEED) #IndonesianDevelopers (https://www.linkedin.com/search/results/all/?keywords=%23indonesiandevelopers&origin=HASH_TAG_FROM_FEED)

3

71

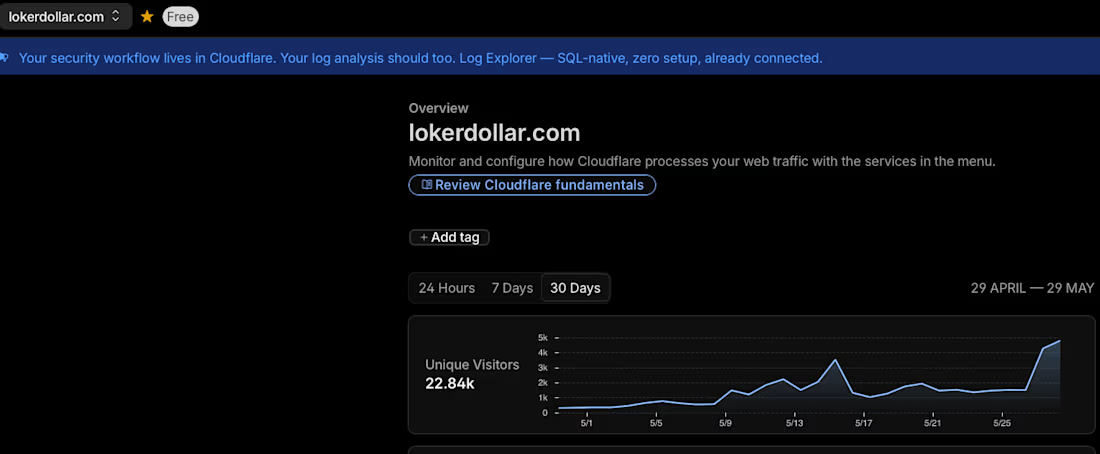

60 days ago, lokerdollar.com (http://lokerdollar.com) had zero visitors.

This morning Cloudflare reported 22,840.

Then I opened the real analytics. 468.

The other ~22k were bots crawling a few thousand job pages. The number I was about to brag about was 98% machines.

───

The honest 60-day growth evaluation.

$0 ads. 100% organic.

What's working:

SEO is doing real work — 20% CTR at position 5.5 for a 2-month-old site. The Bahasa Indonesia job summaries earning their keep, content English aggregators don't bother to write.

GEO / agents: people are finding it through ChatGPT. → The build-in-public loop works — Threads sends traffic back.

Under the hood: an AI agent does the work a team would — thousands of jobs ingested and auto-summarized in EN + ID, IDR salary conversion, timezone-fit scoring, all autonomous, on a routing layer that keeps cost controlled.

What's not (yet):

Retention is the frontier. People land, read for ~5 minutes, and don't return. Activation is step one — getting them to take a single action — but the real game is giving them a reason to come back.

Same lesson as the bot traffic — I was trusting numbers that didn't tell the truth. The outbound-click event never fired. You can't improve a funnel you can't see, so I built toward the one thing that was lit: traffic. Fixing the measurement first.

───

What I'm sitting with:

I spent 60 days proving I can get people to the product. The next 30 are about giving them a reason to stay.

Traffic is a vanity metric until someone comes back.

hashtag#buildinpublic (https://www.linkedin.com/search/results/all/?keywords=%23buildinpublic&origin=HASH_TAG_FROM_FEED) hashtag#indiehacker (https://www.linkedin.com/search/results/all/?keywords=%23indiehacker&origin=HASH_TAG_FROM_FEED) hashtag#remotework (https://www.linkedin.com/search/results/all/?keywords=%23remotework&origin=HASH_TAG_FROM_FEED) hashtag#startup (https://www.linkedin.com/search/results/all/?keywords=%23startup&origin=HASH_TAG_FROM_FEED) hashtag#saas (https://www.linkedin.com/search/results/all/?keywords=%23saas&origin=HASH_TAG_FROM_FEED)

2

59

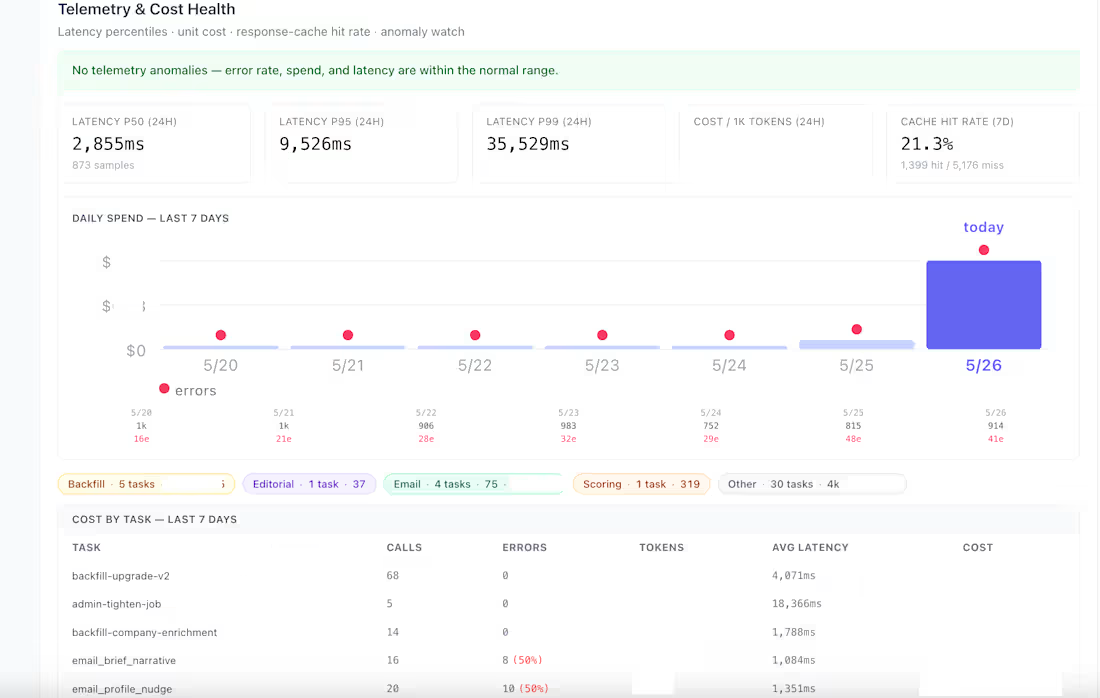

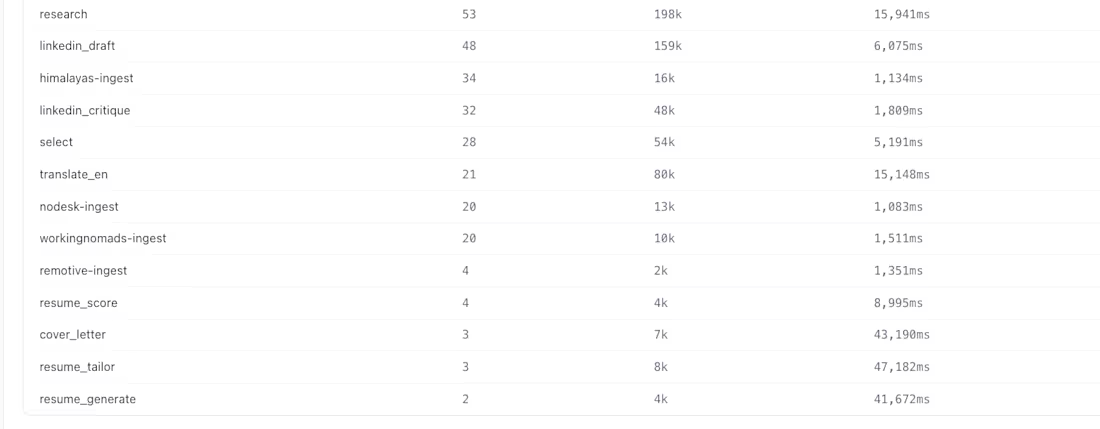

Most LLM telemetry posts brag about what they monitor. Here's mine — including what I'm still missing.

Built this for lokerdollar.com (http://lokerdollar.com), solo, in production:

Covered:

— p50 / p95 / p99 latency per task

— cost per 1k tokens + daily burn + per-task spend

— provider success rates + circuit breaker state

— cache hit rate, quota / rate limit tracking

— model leaderboard scored on live traffic

Gaps I'm still chasing:

— prompt versioning tied to quality signals

— TTFT for streaming UX (matters for chat tasks)

— per-user cost attribution

— semantic error taxonomy, not just HTTP codes

— push-based degradation alerts (today's playbook is reactive)

The lesson from running this solo: observability is never "done." It's a backlog that evolves with the product — and the gap list is more honest signal than the green checkmarks.

→ Open for AI platform / full-stack work. If you want this layer for your team, my DMs are open.

1

43

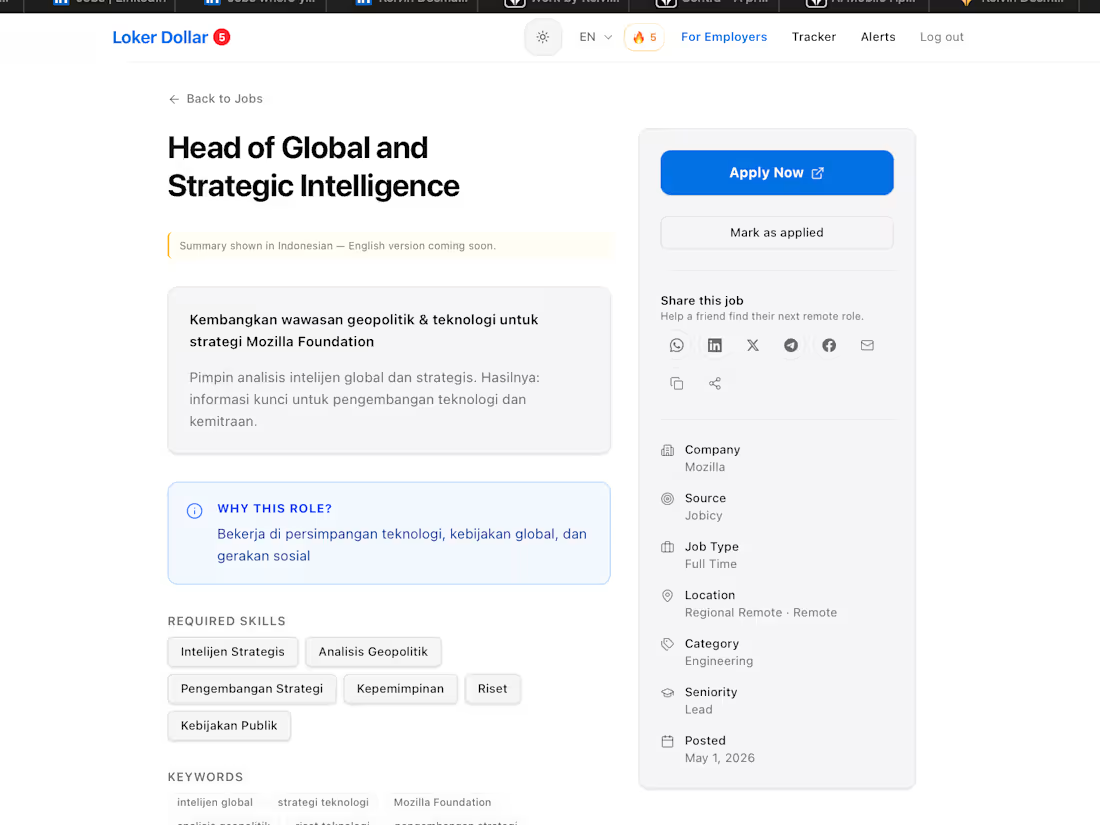

LokerDollar.com

0

4

35,000 AI calls last week. 23 million tokens.

Zero human in the loop.

Here's what that actually looks like inside lokerdollar.com (http://lokerdollar.com).

───

What those pipelines do:

Job ingestion + enrichment (15 sources)

→ job boards, Reddit, Hacker News — each with its own

AI pipeline to normalize, classify, and enrich

Job summaries

→ every job rewritten with local salary context,

timezone fit, and red/green flags for the candidate

Content generation

→ draft → critique → polish chain for every blog post

Email automation

→ AI writes the subject line AND the opening hook

for every transactional email individually

User-facing career AI

→ resume parsing, scoring, tailoring, regeneration

→ cover letter writing

Self-monitoring

→ the routing logic itself is AI — a "select" task

that decides which provider handles each call

───

The part most "AI startup" posts miss:

91% of these tokens never touch a user.

They run quietly — ingesting, normalizing, enriching,

translating, scoring, deduping.

User-facing AI (resume tools, cover letters) is

barely 1% of the actual workload.

───

The moat isn't the chatbot.

The moat is the 30 invisible pipelines behind it —

doing boring work consistently, on free providers,

24 hours a day, with no human in the loop.

Most "AI startups" hit a wall because they built a feature.

The ones that survive build a pipeline.

───

This is the kind of stack I help other solo founders

and small teams set up. If you're stuck paying frontier

prices for routine work, the routing layer pays for

itself within a week.

Drop a comment — happy to share the pattern.

#BuildInPublic #AI #SoloFounder #IndieHacker #Engineering

1

47

I read 4,192 remote job listings end to end to settle one argument.

Should you chase the "AI" title?

The numbers say no — and this time I put them on the slide.

Three pay averages from listings that publish a salary range:

AI Engineer / ML Engineer → $54,400/yr min.

AI Trainer / annotator / RLHF → $75,231/yr min.

Senior+ in any field → $124,462/yr min.

The "AI" label, on its own, pays less than "senior" in any track you're already on.

The full chart deck breaks down six filters every hiring manager applies — silently — across the board:

→ Portfolio outranks degree 3.3×.

→ AI baseline (in non-AI titles) outnumbers AI specialty 2.6×.

→ "Specialist" beats "generalist" in JD bodies 11×.

→ Senior listings outnumber junior listings 4.4×.

→ Tool mentions ranked: Claude 90, ChatGPT 65, Cursor 36, Copilot 26, Gemini 20, v0 9.

→ AI Engineer pay < AI Trainer pay < Senior pay.

Stop chasing the title. Ship one quarterly portfolio piece in a tool the market names by hand.

Specialize loud. Generalize quiet.

#RemoteWork (https://www.linkedin.com/search/results/all/?keywords=%23remotework&origin=HASH_TAG_FROM_FEED) #AIJobs (https://www.linkedin.com/search/results/all/?keywords=%23aijobs&origin=HASH_TAG_FROM_FEED) #CareerStrategy (https://www.linkedin.com/search/results/all/?keywords=%23careerstrategy&origin=HASH_TAG_FROM_FEED) #DataDriven (https://www.linkedin.com/search/results/all/?keywords=%23datadriven&origin=HASH_TAG_FROM_FEED)

0

48

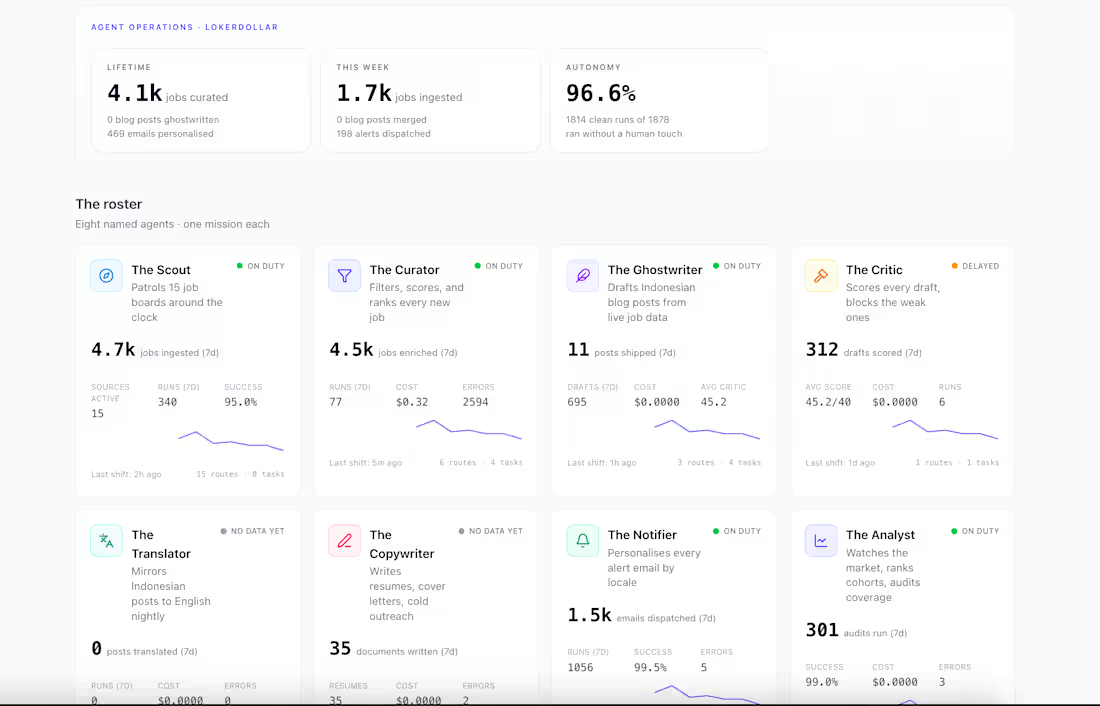

I run lokerdollar.com (http://lokerdollar.com) alone.

No team. No content writers. No ops staff.

Just me — and 8 AI agents running 24/7 in the background.

This week they:

→ Ingested 1,700+ new jobs from multiple sources

→ Scored and filtered every single one

→ Dispatched 1,500+ personalised alert emails

→ Ghostwrote Indonesian blog posts from live job data

→ Ran market audits

80% of it happened without me touching anything.

I used to do all of this manually. Scraping, curating, writing, emailing. It was slow and it capped how far the product could go.

Now I've built what I call an "agent roster" — eight named agents, each with one job. The Scout finds jobs. The Curator scores them. The Ghostwriter writes content from the data. The Notifier sends the right alert to the right person. The Analyst watches the market.

They don't replace the product vision. They execute it.

This is what excites me about the moment we're in. Solo founders can now run operations that used to require a whole team. The leverage is real — I'm watching it in my own dashboard.

A few things I've learned building this:

Give every agent exactly one job. The second you let an agent do "a few things," it becomes unreliable.

Autonomy rate matters more than cost. $0.00 per run means nothing if a human still has to review everything.

Start with the highest-volume, most repetitive task first. For me that was job ingestion. The ROI was immediate.

I'm now thinking about how to help other founders and small businesses build their own agent operations — whether that's a product, a pipeline, or just a conversation.

If you're running any kind of data-heavy operation and you're doing too much of it by hand — I'd love to talk.

Drop a comment or DM me if you want to explore what this could look like for your business.

3

2

100

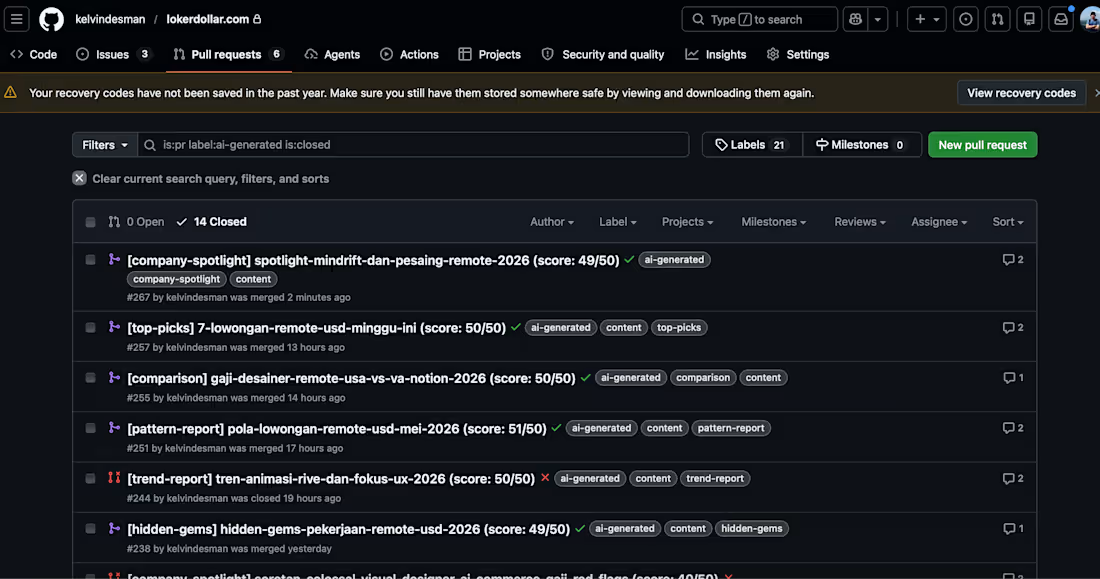

I’m using GitHub as the human-in-the-loop review process for my AI-assisted publishing engine.

The agent drafts the post.

But GitHub handles the judgment layer:

• Every article becomes a pull request

• Labels define the content type

• Scores show quality checks

• Comments become editorial feedback

• Merge means publish

• Closed means reject

It’s basically a developer-native CMS: pull requests for review, labels for structure, CI for quality checks, comments for feedback, and merge history as the audit trail.

For lokerdollar.com (http://lokerdollar.com), this means I can scale content production without removing human taste from the process.

AI writes fast.

GitHub slows it down just enough to make it useful.

I’m sharing the exact markdown → GitHub PR → Next.js publishing workflow soon.

0

52

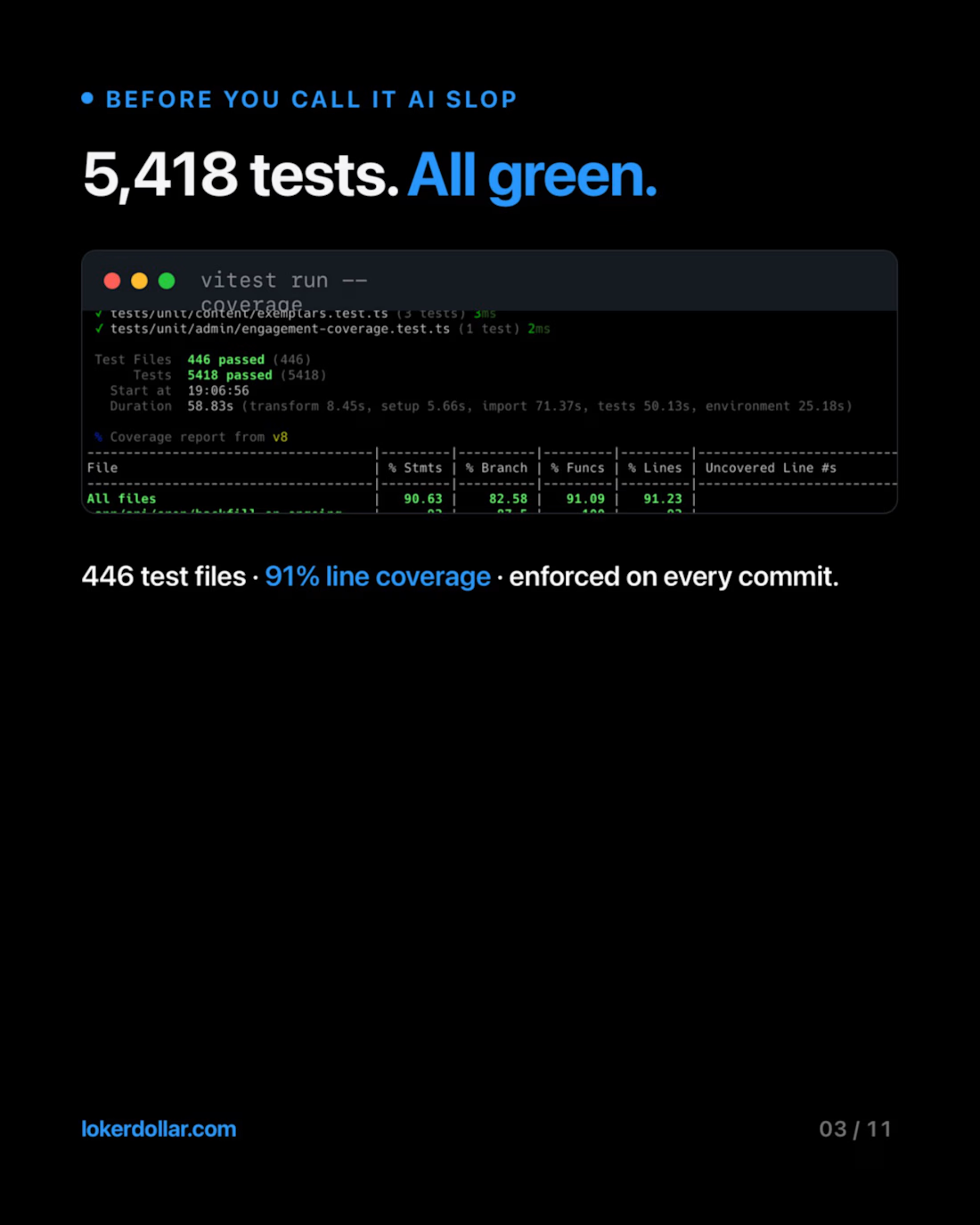

lokerdollar.com (http://lokerdollar.com) — Remote Job Aggregator for Indonesian Talent

• Architected on Next.js 15 (App Router, RSC), TypeScript, Cloudflare Workers, D1, R2, and KV — zero-runtime-latency SEO pages powered by an ahead-of-time AI enrichment pipeline

• Designed a freemium monetization model (Guest / Free / Open Profile / Pro), Talent Visibility opt-in and a B2B employer discovery product

• Built an autonomous CI/CD repair pipeline using GitHub Actions + anthropics/claude-code-action@beta that detects pipeline failures and pushes fixes to PRs without manual intervention

• Runs a PostHog A/B testing loop on the hero section and conversion funnel; full observability via Sentry and Cloudflare Worker logs

• 100% of planning, implementation, and review flows through Claude Code, Codex, and Antigravity — spec-driven, agentic, and reproducible

0

42

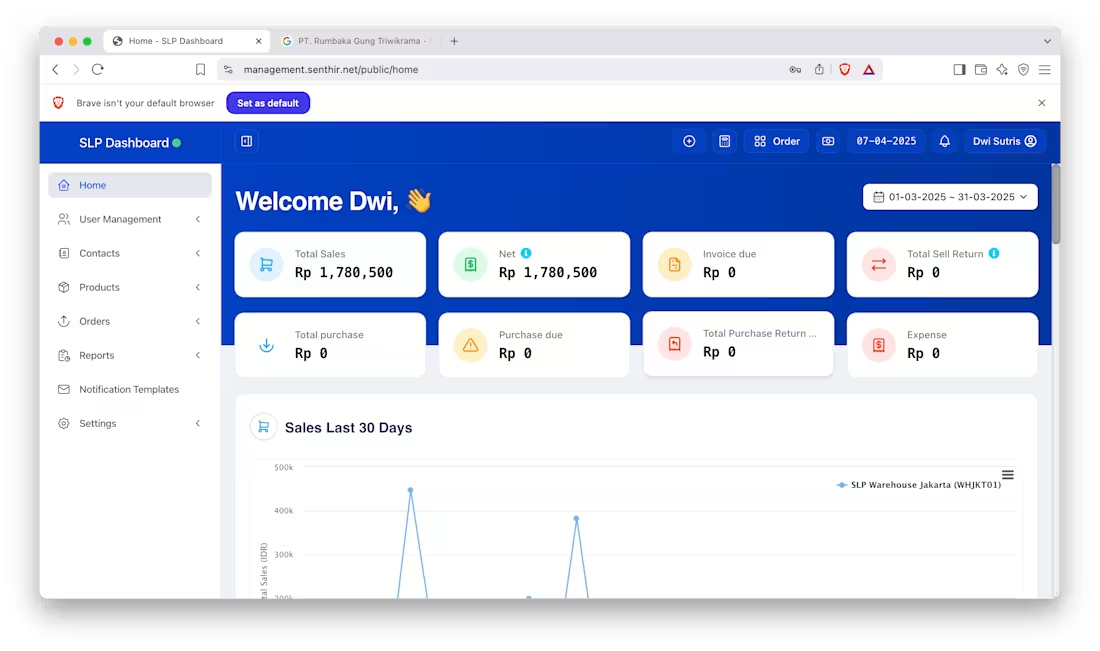

Logistics and Inventory Fulfillment System

0

10

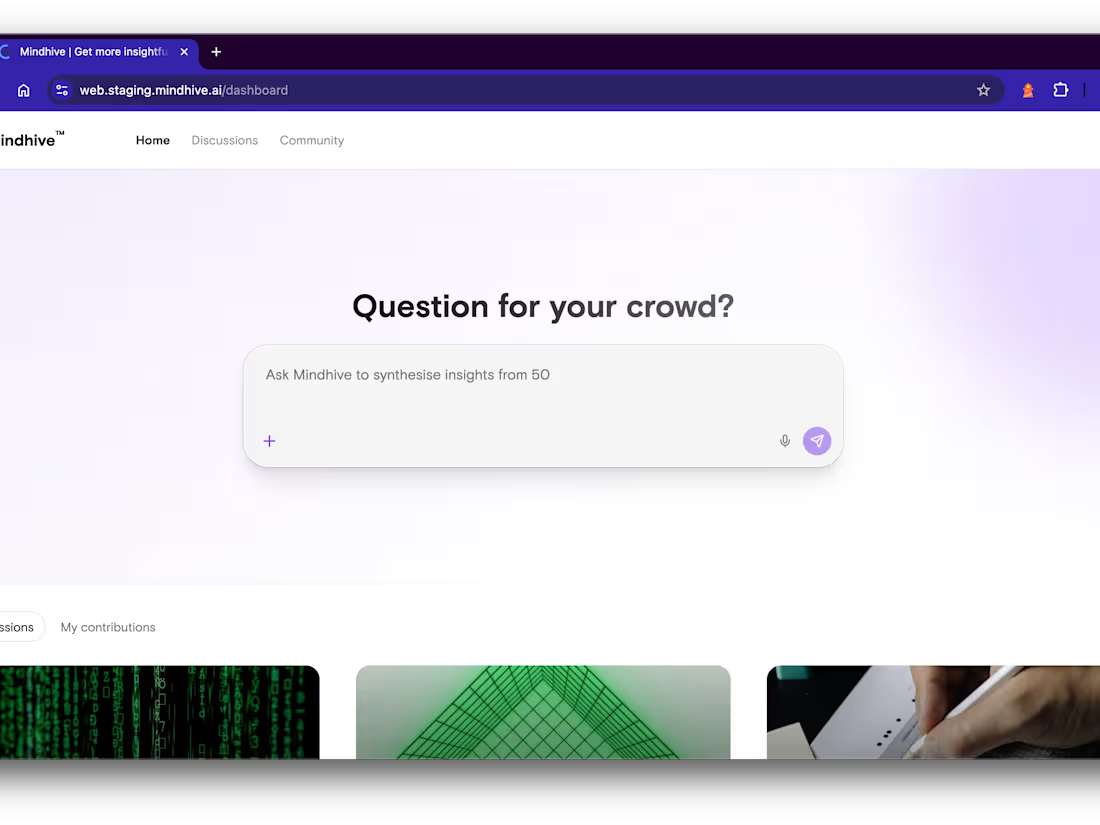

Mindhive

0

10

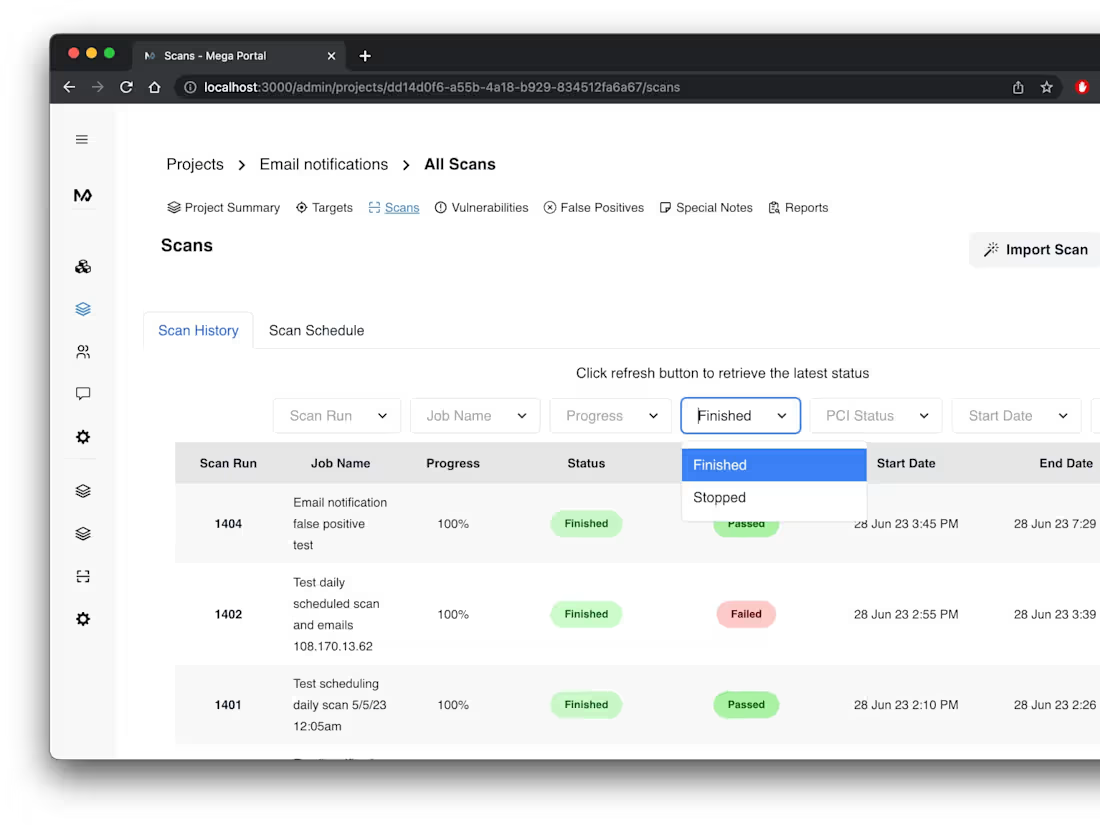

Megaplant IT Client Portal

0

12

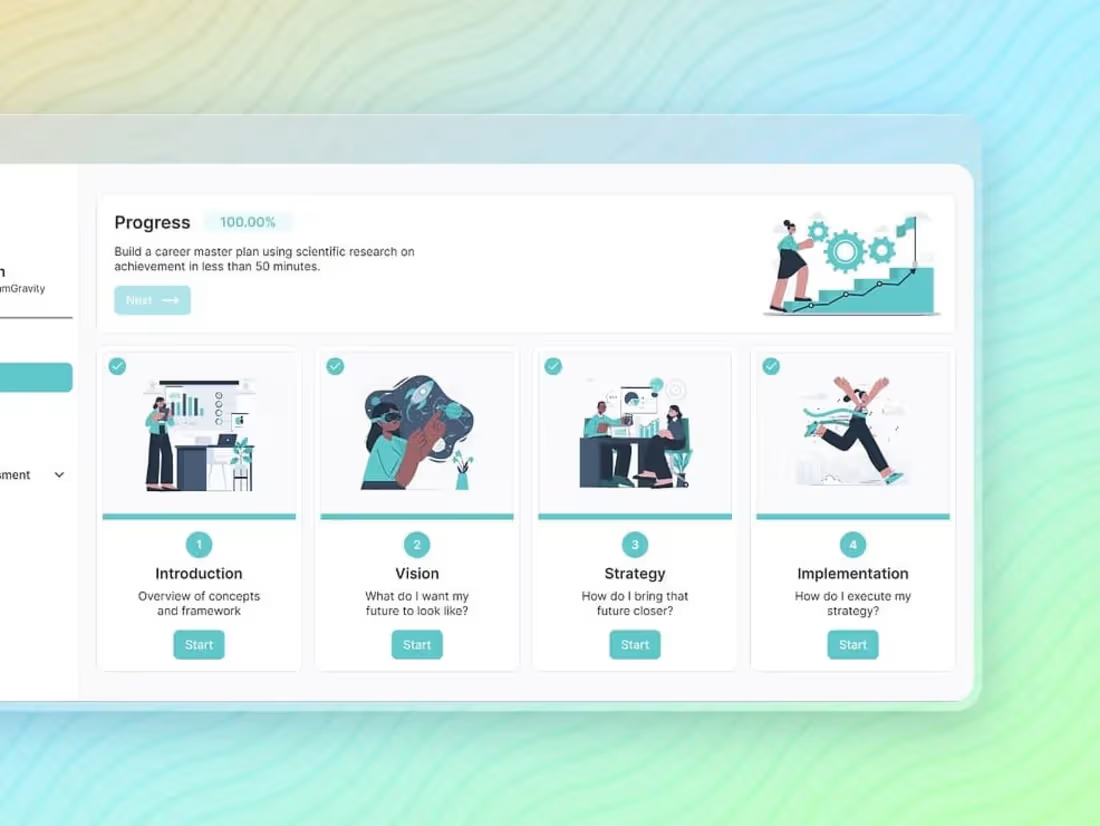

mydreamgravity

0

7

Yeahnah Hybrid app

0

6

Admisi Institut Teknologi Indonesia

0

9