Kami Harcej

AI decisions made clear, safe and actionable

AI is not making people smarter.

It’s making them faster at skipping the thinking.

Most AI tools are optimized for one thing:

→ giving answers as quickly as possible

That works for productivity.

But for learning?

It’s a problem.

Users don’t struggle.

They don’t reason.

They don’t retain.

So I built something different.

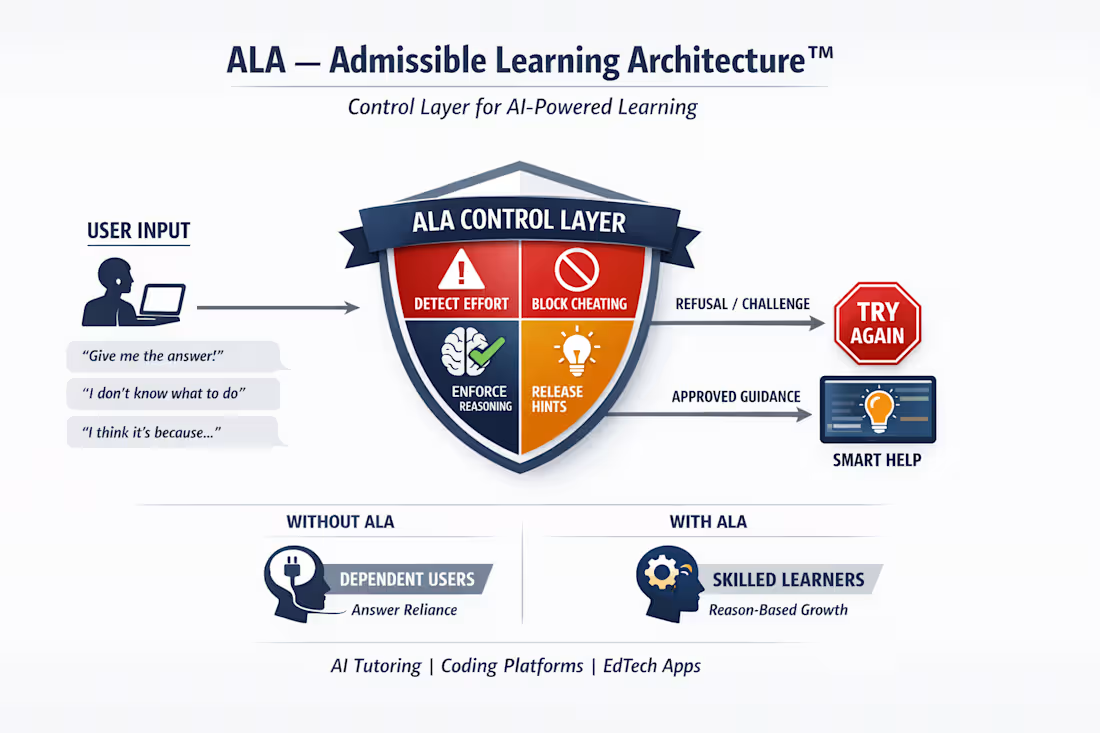

ALA - Admissible Learning Architecture™

A control layer for AI that enforces real learning.

Instead of answering immediately, it:

• detects low-effort input

• blocks answer-seeking behavior

• evaluates reasoning

• only allows hints when appropriate

In simple terms:

→ it decides when the AI is allowed to help
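A minimal sketch of the kind of gating decision described above, assuming a simple keyword-and-length heuristic. The `gate_request` function, the phrase lists, the word threshold and the `Action` values are hypothetical illustrations. This is not ALA's actual implementation, which is not described here. It only shows the shape of "detect, block, evaluate, then hint".

```python
from dataclasses import dataclass
from enum import Enum


class Action(Enum):
    ASK_FOR_ATTEMPT = "ask_for_attempt"  # blocked: the learner must try first
    EVALUATE = "evaluate"                # reasoning present: check it, don't answer
    GIVE_HINT = "give_hint"              # hint allowed after an earlier attempt


# Hypothetical phrases treated as answer-seeking. Substring matching is a
# deliberately naive stand-in for a real classifier.
ANSWER_SEEKING = ("just tell me", "give me the answer", "what's the answer", "solve it for me")

# Hypothetical markers suggesting the learner is showing their reasoning.
REASONING_MARKERS = ("because", "i tried", "my approach", "i think", "so that")


@dataclass
class Request:
    text: str
    attempts_so_far: int = 0


def gate_request(req: Request, min_words: int = 8) -> Action:
    """Decide whether the AI may help, and how (illustrative heuristic only)."""
    text = req.text.lower()
    low_effort = len(text.split()) < min_words
    answer_seeking = any(p in text for p in ANSWER_SEEKING)
    shows_reasoning = any(m in text for m in REASONING_MARKERS)

    # Block answer-seeking and low-effort input: no help until the learner reasons.
    if answer_seeking or low_effort or not shows_reasoning:
        return Action.ASK_FOR_ATTEMPT
    # Reasoning is present: evaluate it first; hints only after a prior attempt.
    if req.attempts_so_far == 0:
        return Action.EVALUATE
    return Action.GIVE_HINT


if __name__ == "__main__":
    print(gate_request(Request("just tell me the answer")))  # Action.ASK_FOR_ATTEMPT
    attempt = "I think the loop fails because the index starts at 1"
    print(gate_request(Request(attempt)))                     # Action.EVALUATE
    print(gate_request(Request(attempt, attempts_so_far=1)))  # Action.GIVE_HINT
```

The ordering of the checks is the point: the "block" branches run before any branch that lets the AI help, so help is the exception that must be earned rather than the default.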

This is especially relevant if you’re building:

• AI tutors

• coding education platforms

• edtech products

Because the real risk isn’t AI replacing learning…

It’s users becoming dependent on it.

ALA - Admissible Learning Architecture™ is built to solve exactly that.

I’m opening early access for teams who want to integrate it.

Comment “ALA” or message me if you want access.

#AI #ArtificialIntelligence #EdTech #AIinEducation #FutureOfLearning #SaaS #Startup #Founders #TechStartups #ProductDevelopment #AITutors #DigitalEducation

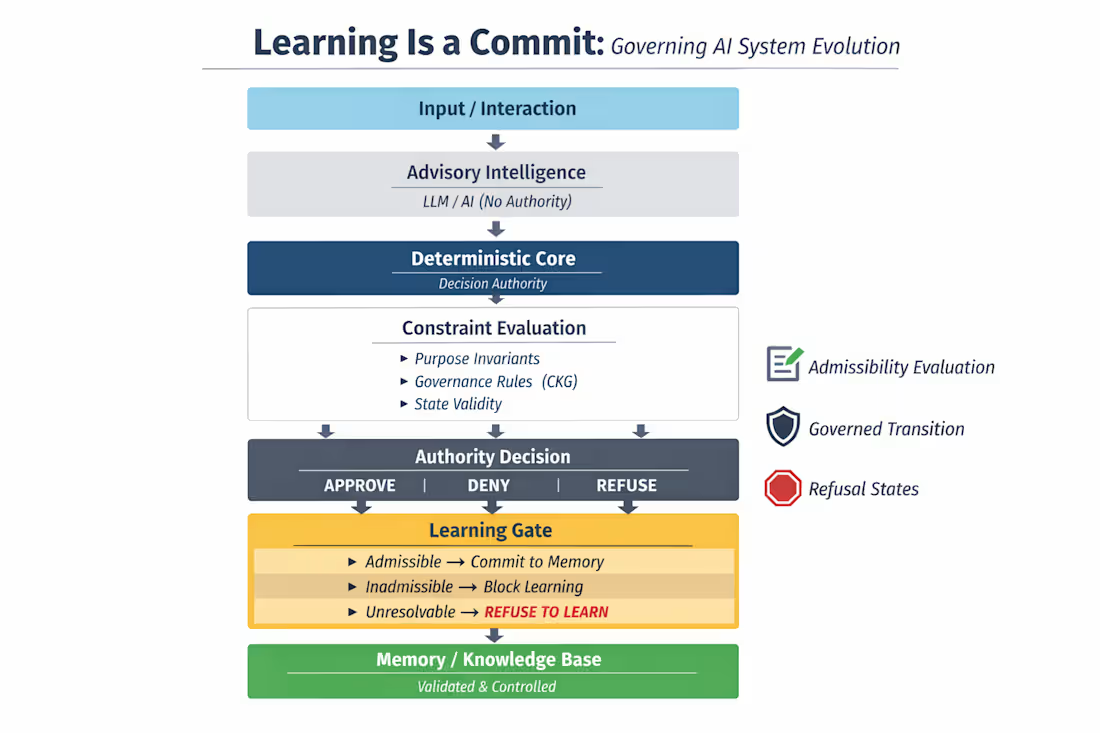

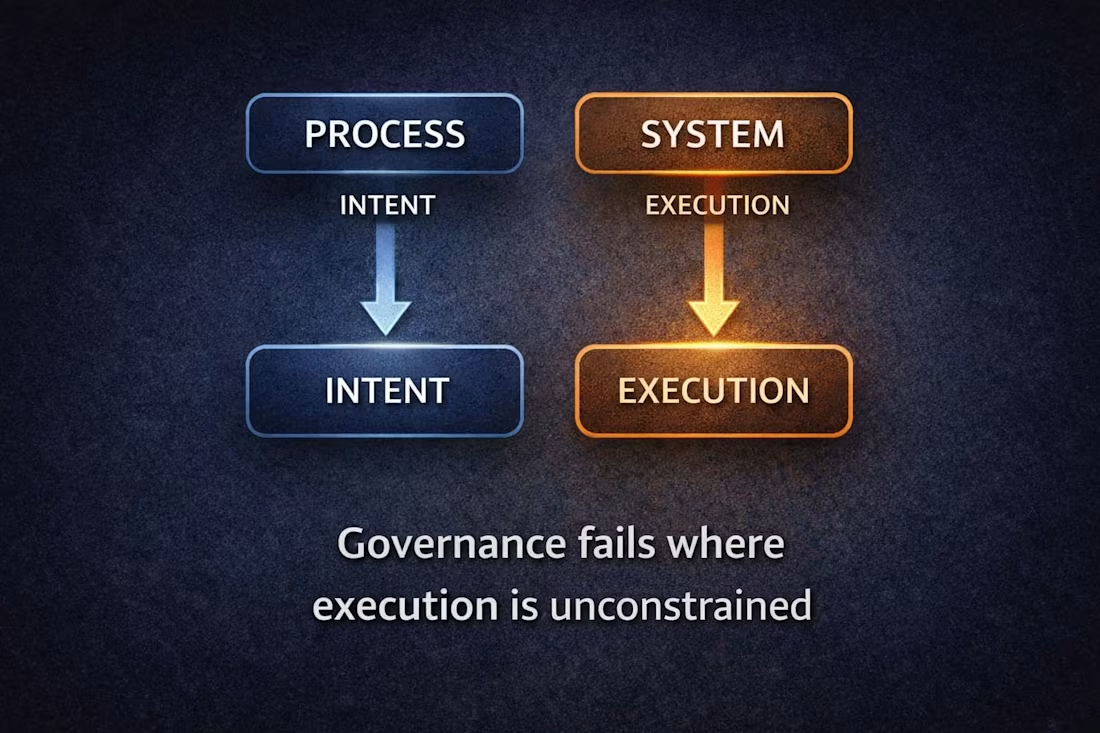

Constraining what becomes real

Most AI governance today is focused on decisions:

→ what systems are allowed to do

→ how actions are validated

→ how outcomes are explained

But there’s a deeper layer most frameworks don’t touch:

What the system is allowed to become over time

Systems don’t just act.

They learn.

And every learning event:

→ reshapes future decisions

→ redefines boundaries

→ shifts authority implicitly

Yet:

Learning is almost always unconstrained

This creates a system that can remain:

→ compliant

→ auditable

→ aligned on paper

…while gradually drifting away from a valid basis for action.

Not because a decision failed.

But because the system evolved beyond what was ever admissible.

The shift is simple, but structural:

Learning must be treated as a governed state transition

Not something that happens automatically.

Something that is:

→ evaluated

→ admitted

→ or refused

Before a system learns, it must resolve:

→ Is this grounded in a valid state?

→ Is the source admissible?

→ Does this fall within its mandate?

→ Can this be justified at the moment of incorporation?

If not:

The system should not learn.
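The four questions above can be read as a gate placed in front of any update. A minimal sketch, assuming a learning event can be described by its source, its topic, the state version it was observed against, and a written justification. The `LearningEvent`, `Governance` and `admit` names, and the use of a version number to stand in for "a valid state", are hypothetical choices made for illustration.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class LearningEvent:
    """A candidate update the system wants to incorporate (hypothetical schema)."""
    source: str          # where the new knowledge came from
    topic: str           # what part of the system it would change
    state_version: int   # state the evidence was observed against
    justification: str   # why it should be incorporated now


@dataclass
class Governance:
    admissible_sources: set[str]
    mandate: set[str]            # topics the system is allowed to learn about
    current_state_version: int


@dataclass
class Verdict:
    admitted: bool
    reasons: list[str] = field(default_factory=list)


def admit(event: LearningEvent, gov: Governance) -> Verdict:
    """Treat learning as a governed state transition: admit only if every check passes."""
    reasons = []
    if event.state_version != gov.current_state_version:
        reasons.append("not grounded in the current valid state")
    if event.source not in gov.admissible_sources:
        reasons.append(f"source {event.source!r} is not admissible")
    if event.topic not in gov.mandate:
        reasons.append(f"topic {event.topic!r} is outside the mandate")
    if not event.justification.strip():
        reasons.append("no justification at the moment of incorporation")
    return Verdict(admitted=not reasons, reasons=reasons)
```

For example, with `Governance({"curated_feedback"}, {"refund_policy"}, 7)`, an event from `"user_chat"` about `"pricing"` observed at version 6 with an empty justification is refused with four reasons. Returning the reasons rather than a bare boolean keeps each refusal auditable.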

We already ask:

“Is this decision valid at execution?”

But we don’t ask:

“Was the system allowed to learn what led to it?”

That’s the gap.

And that’s where governance breaks.

This is the first layer of something deeper:

Moving from:

→ governing decisions

to:

→ governing system evolution itself

I’ll be exploring this further:

→ execution boundaries

→ admissibility

→ authority layers

→ and now: learning control

Governance doesn’t end at execution.

It extends to what systems are allowed to become.

#AIGovernance #AIArchitecture #DecisionIntegrity #GovernedAI #AIControl

Most decisions don’t fail when they’re made.

They fail when they’re executed.

Because what looked valid at design time

doesn’t hold under real constraints.

I’ve been stress-testing decisions this way:

→ reconstruct context

→ map constraints

→ simulate execution

→ check if the decision is still admissible

In most cases, it isn’t.
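The four steps above can be sketched as a small harness. This is a minimal sketch, assuming a decision can be modeled as a set of design-time assumptions plus named constraints (predicates over a context dict), and that "simulating execution" means overlaying real-world scenario conditions on those assumptions. `Decision`, `stress_test` and the refund example are hypothetical and do not describe the author's actual method.

```python
from dataclasses import dataclass
from typing import Callable

# A constraint is a named predicate over the execution context (hypothetical model).
Constraint = tuple[str, Callable[[dict], bool]]


@dataclass
class Decision:
    name: str
    design_context: dict            # assumptions captured when the decision was made
    constraints: list[Constraint]   # conditions that must hold for it to stay admissible


def stress_test(decision: Decision, scenarios: dict[str, dict]) -> dict[str, list[str]]:
    """Return, per scenario, the constraints the decision violates at execution."""
    failures = {}
    for scenario_name, conditions in scenarios.items():
        # Reconstruct context: start from the design-time assumptions.
        context = dict(decision.design_context)
        # Simulate execution: overlay the conditions of this scenario.
        context.update(conditions)
        # Map constraints and check whether the decision is still admissible.
        broken = [name for name, holds in decision.constraints if not holds(context)]
        if broken:
            failures[scenario_name] = broken
    return failures


if __name__ == "__main__":
    decision = Decision(
        name="auto-approve small refunds",
        design_context={"fraud_rate": 0.01, "escalation_available": True},
        constraints=[
            ("fraud rate below 2%", lambda c: c["fraud_rate"] < 0.02),
            ("escalation path available", lambda c: c["escalation_available"]),
        ],
    )
    scenarios = {
        "baseline": {},
        "fraud spike": {"fraud_rate": 0.05},
        "weekend": {"escalation_available": False},
    }
    print(stress_test(decision, scenarios))
    # {'fraud spike': ['fraud rate below 2%'], 'weekend': ['escalation path available']}
```

The decision passes under its own design-time assumptions ("baseline") and fails only once execution-time conditions are applied, which is the gap the post describes.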

If you’re working on:

– AI deployment

– risk / compliance decisions

– high-stakes operational changes

I’m opening a few slots for a Decision Integrity Stress Test

You bring 1–3 decisions

We test if they actually hold

No slides. No theory. Just truth.

DM me “TEST”

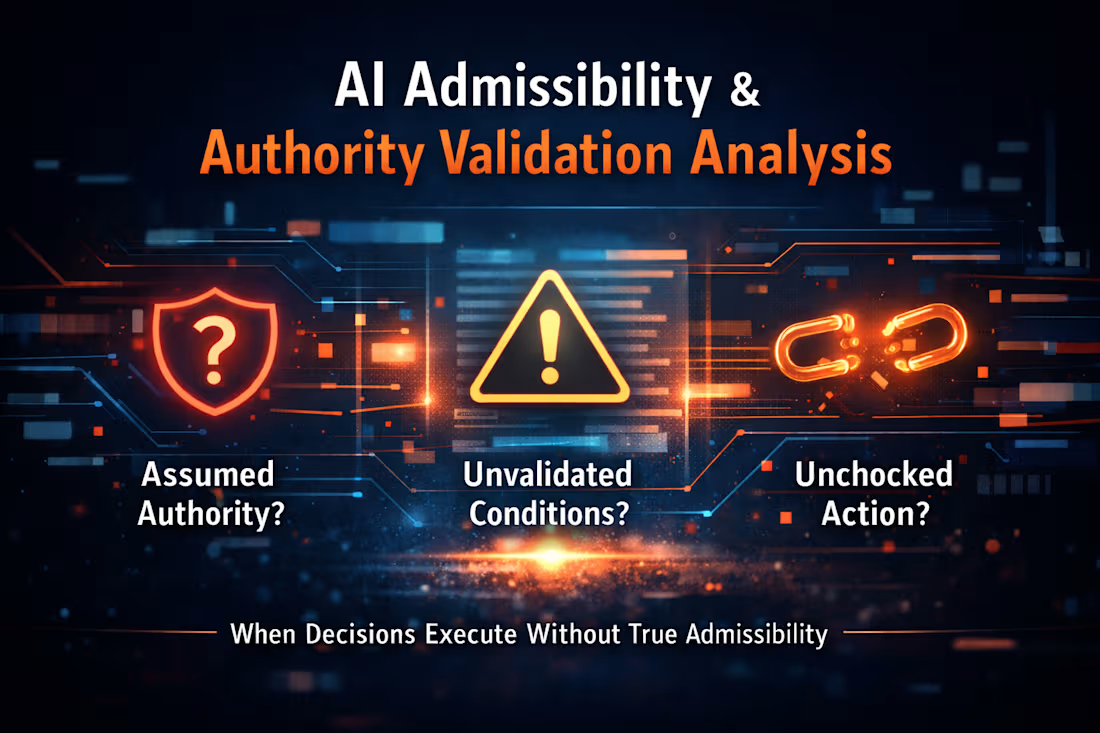

“When decisions become real without valid authority”

This work examines how AI-driven decisions can appear valid while lacking the authority or conditions required to become actionable.

It focuses on a critical gap:

– outputs are treated as admissible

– decisions are accepted as valid

– but authority and conditions are never fully established

The analysis highlights:

– where systems assume authority rather than explicitly validating it

– how admissibility is inferred instead of resolved

– where decisions become actionable without sufficient grounding

– why systems can execute correctly while lacking a valid basis

The goal is to identify where decisions are allowed to become real,

not because they are admissible,

but because nothing prevents them from being treated as such.

Decision Integrity Stress Test

A structured approach to identifying where AI-driven decisions fail under real-world conditions.

This work focuses on testing whether decisions that are:

– correct

– compliant

– and authorized

…remain valid at the moment they are executed.

The stress test surfaces:

– where decisions drift from their original conditions

– where systems cannot re-establish grounding

– where inadmissible actions are still allowed to execute

It is designed to identify failure points that do not appear in audits, logs or standard governance processes.

Used before deployment, it helps ensure that AI systems can withstand real conditions, not just pass validation on paper.

This work demonstrates how AI outputs can appear valid while still being unsafe to act on under real conditions.

It focuses on the gap between:

– what a system produces

– and what a system is actually allowed to execute

The analysis highlights:

– how outputs can pass evaluation but fail at execution

– why risk emerges at the transition from intent to action

– where systems lack constraints at the point of commit

The goal is to identify where AI-driven decisions become actionable without being fully validated against current state, authority and conditions.
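One way to picture "constraints at the point of commit" is a gate that re-checks authority, state and conditions when an output is about to become an action, rather than when it was generated. A minimal sketch, assuming state can be summarized by a version number and conditions by a set of labels. It borrows the optimistic-concurrency idea of comparing versions at commit time. `ProposedAction`, `CommitGate` and `CommitRefused` are hypothetical names.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ProposedAction:
    """An AI output that wants to become an executed action (hypothetical schema)."""
    actor: str
    operation: str
    based_on_version: int          # state version the output was produced against
    preconditions: frozenset[str]  # conditions the output assumed were true


class CommitRefused(Exception):
    """Raised when an output is not allowed to become real."""


class CommitGate:
    def __init__(self, grants: dict[str, set[str]]):
        self.grants = grants               # actor -> operations it has authority for
        self.state_version = 0
        self.conditions: set[str] = set()  # conditions currently true

    def commit(self, action: ProposedAction) -> None:
        """Re-validate at commit; having passed evaluation earlier is not enough."""
        if action.operation not in self.grants.get(action.actor, set()):
            raise CommitRefused(f"{action.actor} has no authority for {action.operation}")
        if action.based_on_version != self.state_version:
            raise CommitRefused("state changed since the output was produced")
        missing = action.preconditions - self.conditions
        if missing:
            raise CommitRefused(f"conditions no longer hold: {sorted(missing)}")
        # Only now does the output become an action; the state moves forward.
        self.state_version += 1
```

Because committing advances the version, replaying the same output a second time is refused: it was valid once, but it was produced against a state that no longer exists.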

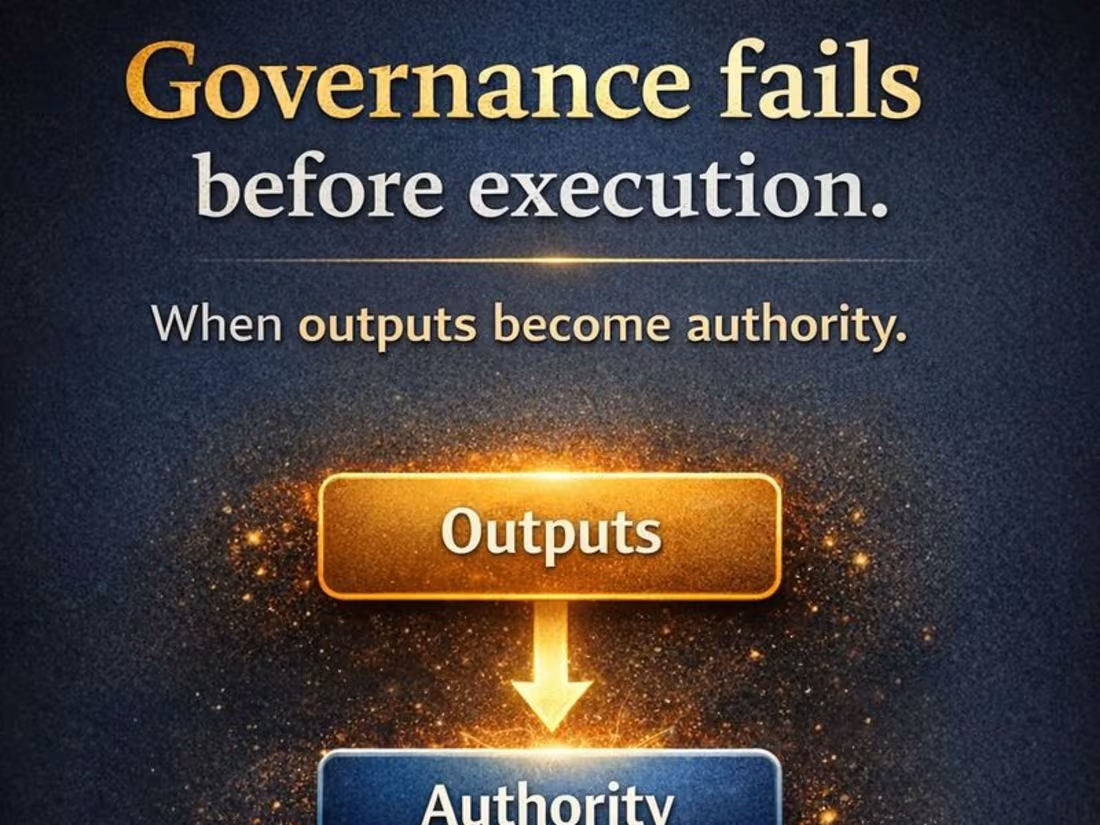

This work highlights a critical failure mode in AI systems:

decisions that are correct, compliant, and authorized, yet no longer valid at the moment they are executed.

The focus is on how outputs transition into authority through repeated use, and how systems can begin to act on those outputs without re-validating whether they still hold under current conditions.

It explores:

– how authority forms through interaction, not just formal assignment

– why governance often fails before execution, not after

– where systems allow inadmissible actions to become real

This perspective is used to identify where AI-driven decisions drift from their original conditions, even when everything appears governed.
