The network for creativity

Join 1.25M professional creatives like you

Connect with clients, get discovered, and run your business 100% commission-free

Creatives on Contra have earned over $150M and we are just getting started


This work highlights a critical failure mode in AI systems:

decisions that are correct, compliant, and authorized, but no longer valid at the moment they are executed.

The focus is on how AI outputs acquire authority through repeated use, and how systems can begin acting on those outputs without re-validating whether they still hold under current conditions.

It explores:

– how authority forms through interaction, not just formal assignment

– why governance often fails before execution, not after

– where systems allow inadmissible actions to take effect

This perspective helps identify where AI-driven decisions drift from the conditions under which they were made, even when everything appears governed.
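To make the failure mode concrete, here is a minimal Python sketch of an execution-time check, assuming a decision can carry a snapshot of the conditions it was approved under. The action runs only if that snapshot still matches the current state and the approval is still fresh. Everything in it (`Decision`, `drifted_conditions`, `execute_if_admissible`, `InadmissibleAction`, the 300-second freshness window, the refund example) is hypothetical and chosen for illustration; it is not the method from this work.

```python
import time
from collections.abc import Callable, Mapping
from dataclasses import dataclass
from typing import Any, Optional, TypeVar

T = TypeVar("T")
_MISSING = object()  # sentinel: a condition absent from the current state counts as drift


@dataclass(frozen=True)
class Decision:
    """Hypothetical record of an authorized decision and the conditions it relied on."""
    action: str
    approved_at: float             # timestamp (seconds) when the decision was approved
    conditions: Mapping[str, Any]  # snapshot of the state the approval assumed
    max_age_s: float = 300.0       # assumed freshness window for the approval


class InadmissibleAction(Exception):
    """Raised when an authorized decision no longer holds at execution time."""


def drifted_conditions(decision: Decision, current: Mapping[str, Any]) -> list[str]:
    """Names of snapshot conditions whose current value differs or is missing."""
    return [k for k, v in decision.conditions.items()
            if current.get(k, _MISSING) != v]


def execute_if_admissible(decision: Decision, current: Mapping[str, Any],
                          run: Callable[[], T], now: Optional[float] = None) -> T:
    """Re-validate the decision against current conditions, then run the action."""
    now = time.time() if now is None else now
    # Check 1: the approval itself may be too old to rely on.
    if now - decision.approved_at > decision.max_age_s:
        raise InadmissibleAction(f"{decision.action}: approval expired")
    # Check 2: the world may have changed since the approval was granted.
    drift = drifted_conditions(decision, current)
    if drift:
        raise InadmissibleAction(
            f"{decision.action}: conditions changed: {', '.join(sorted(drift))}")
    return run()


if __name__ == "__main__":
    d = Decision("refund_order", approved_at=1000.0,
                 conditions={"order_status": "delivered", "amount": 40})
    # Still authorized, but the order was refunded elsewhere after approval.
    try:
        execute_if_admissible(d, {"order_status": "refunded", "amount": 40},
                              lambda: "refund issued", now=1010.0)
    except InadmissibleAction as err:
        print(err)  # refund_order: conditions changed: order_status
```

The sketch keeps authorization and admissibility separate: the approval establishes that the action may be taken, while the gate re-checks, at the moment of execution, whether taking it is still valid.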

Related posts

awsome. love it

Played around with Lovable this time.

Still blown away by that mega menu, wild times, honestly. Built in under a minute with Lovable.

If your AI is still spitting out ugly designs, maybe the problem isn't the AI. It's you. You're just not prompting right.

AI doesn't replace humans. It needs direction. It needs someone who actually knows the game to steer it.

And that's exactly where I come in.

I make things with love, with Lovable 😅🩷

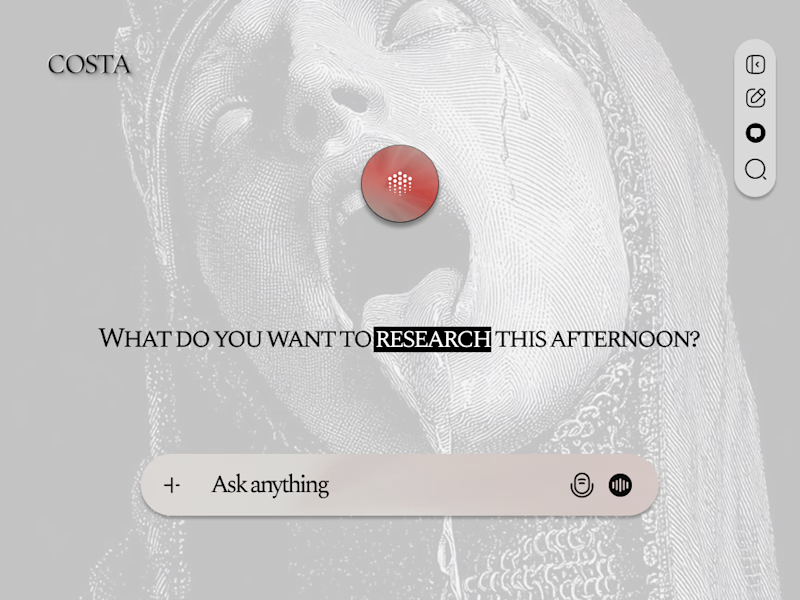

Which version of an AI interface actually earns its background?

A: the engraving bleeds through, noise, texture, weight.

B: stripped back. The orb floats in silence.

Same bones. Completely different tension.

I'm torn and I need you to settle it.

11 voted

34%

21 voted

66%

32 votes

Closed

Clean one for me
