The network for creativity

Join 1.25M professional creatives like you

Connect with clients, get discovered, and run your business 100% commission-free

Creatives on Contra have earned over $150M and we are just getting started


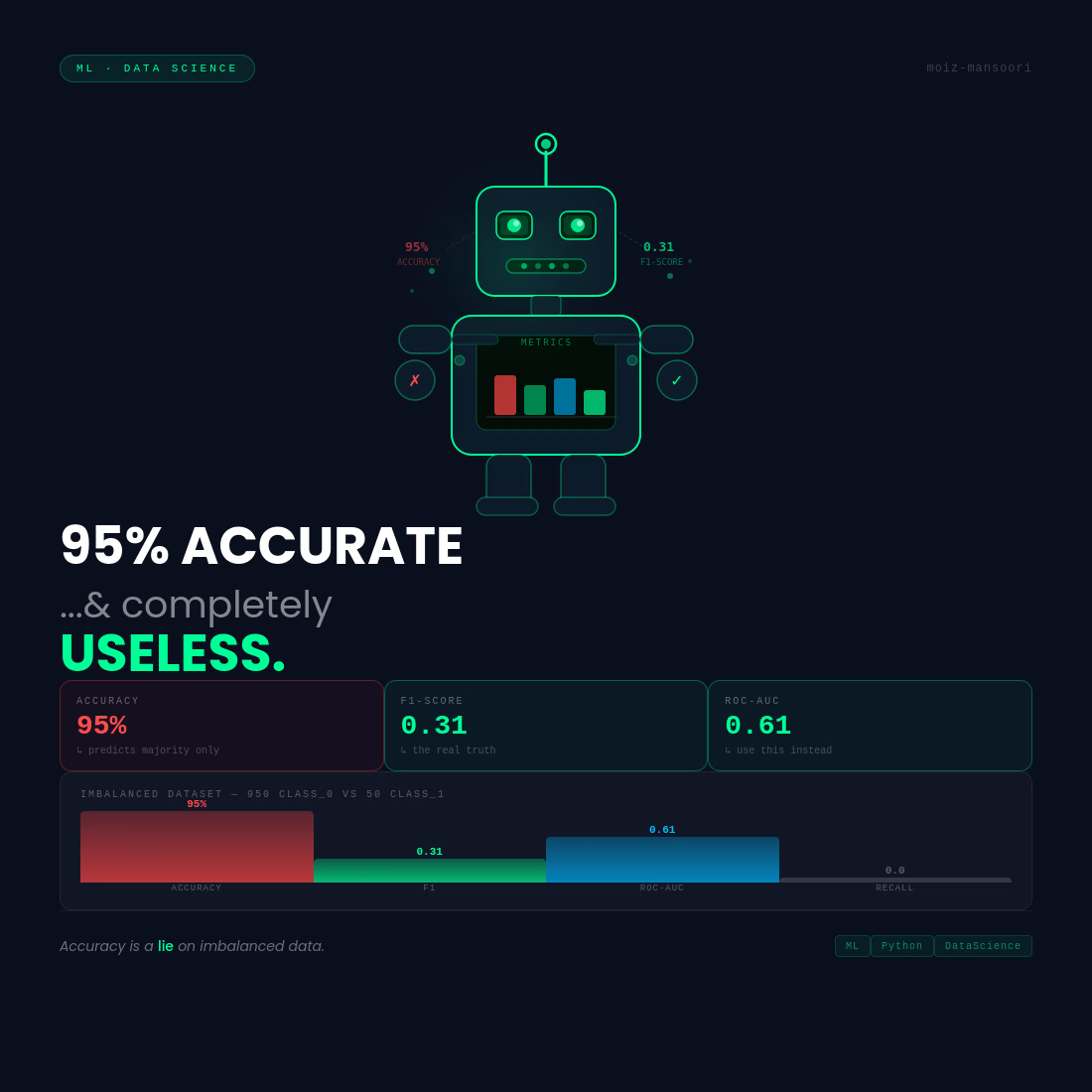

Your ML model is 95% accurate. It's also useless. Here's why 👇

Built a model. Got 95% accuracy. Celebrated.

Then checked the confusion matrix.

Out of 1000 test samples:

950 were class 0 (majority)

50 were class 1 (the ones that actually mattered)

The model learned one thing: predict class 0. Always.

95% accurate. 0% useful.
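Here's a minimal sketch of that trap in plain Python. The labels are made up to match the 950/50 split above, and the "model" is just a hard-coded always-predict-0 rule, not the actual model from my project.

```python
# Hypothetical labels matching the split in the post: 950 class 0, 50 class 1.
y_true = [0] * 950 + [1] * 50

# Stand-in "model" that learned one thing: always predict class 0.
y_pred = [0] * len(y_true)

# Accuracy: fraction of samples where the prediction matches the label.
correct = sum(1 for t, p in zip(y_true, y_pred) if t == p)
accuracy = correct / len(y_true)

# Recall on class 1: fraction of the 50 important cases that were caught.
caught = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 1)
recall_class_1 = caught / sum(y_true)

print(f"accuracy: {accuracy:.2f}")                  # accuracy: 0.95
print(f"recall on class 1: {recall_class_1:.2f}")   # recall on class 1: 0.00
```

High accuracy, zero of the important cases caught.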

This is the accuracy trap. And it kills real ML projects.

The fix? Stop looking at accuracy alone. Start looking at:

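F1 Score: the harmonic mean of precision and recall, so it collapses to 0 if the minority class is never caught

ROC-AUC: how well your model's scores rank class 1 above class 0, across all thresholds

Confusion Matrix: shows exactly where it fails (false negatives vs. false positives)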
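A rough sketch of how these three metrics react, written in plain Python so nothing depends on a specific library. The helper names (`confusion_matrix`, `f1_score`, `roc_auc`) and the second "better" model with 40 catches and 20 false alarms are illustrative assumptions, not numbers from my pipeline. In practice you would likely reach for a library such as scikit-learn and pass predicted probabilities to ROC-AUC rather than hard 0/1 labels.

```python
def confusion_matrix(y_true, y_pred):
    """Return (tn, fp, fn, tp) for binary labels 0/1."""
    tn = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 0)
    fp = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 1)
    fn = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 0)
    tp = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 1)
    return tn, fp, fn, tp


def f1_score(y_true, y_pred):
    """F1 for class 1; defined as 0.0 when there are no true positives."""
    _, fp, fn, tp = confusion_matrix(y_true, y_pred)
    if tp == 0:
        return 0.0
    return 2 * tp / (2 * tp + fp + fn)


def roc_auc(y_true, scores):
    """Probability that a random class 1 sample outscores a random class 0
    sample, counting ties as half (pairwise form of ROC-AUC)."""
    pos = [s for t, s in zip(y_true, scores) if t == 1]
    neg = [s for t, s in zip(y_true, scores) if t == 0]
    wins = 0.0
    for p in pos:
        for n in neg:
            if p > n:
                wins += 1.0
            elif p == n:
                wins += 0.5
    return wins / (len(pos) * len(neg))


# Hypothetical labels: 950 class 0 followed by 50 class 1.
y_true = [0] * 950 + [1] * 50

# Model A: always predicts class 0 (the accuracy trap).
always_zero = [0] * 1000

# Model B (made up): 20 false alarms on class 0, catches 40 of 50 class 1.
better = [1] * 20 + [0] * 930 + [1] * 40 + [0] * 10

for name, y_pred in [("always_zero", always_zero), ("better", better)]:
    tn, fp, fn, tp = confusion_matrix(y_true, y_pred)
    f1 = f1_score(y_true, y_pred)
    # Hard 0/1 predictions stand in for scores here, which is a simplification.
    auc = roc_auc(y_true, y_pred)
    print(f"{name}: tn={tn} fp={fp} fn={fn} tp={tp} f1={f1:.3f} auc={auc:.3f}")
```

The always-zero model scores F1 = 0 and ROC-AUC = 0.5 (no better than a coin flip at ranking), while its confusion matrix shows all 50 class 1 cases sitting in the false-negative cell.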

I ran into this while building a fraud detection pipeline. Switching metrics changed everything.

Accuracy is a lie when your data is imbalanced.

What metric do you actually trust? Comment or DM me 👇

