The network for creativity

Join 1.25M professional creatives like you

Connect with clients, get discovered, and run your business 100% commission-free

Creatives on Contra have earned over $150M and we are just getting started



Agentic AI: Power, Peril, and the Path to Safer Vertical Systems

By Victor — Founder, Builder, and Synthetic‑Intelligence‑Aligned Operator

Executive Summary

Agentic AI has entered a new phase—one defined not by theoretical capability but by real‑world autonomy, security risks, and architectural tension. The rapid rise of OpenClaw (formerly Moltbot) exposed both the promise and the danger of horizontal, do‑everything agents. This article examines the forces behind its explosive growth, the security failures that followed, and why the future of practical, safe AI lies in narrow, vertical agents designed for specific business outcomes.

The Breakout Moment for Agentic AI

Agentic AI crossed into mainstream attention when OpenClaw became the fastest‑growing open‑source project in GitHub history. Developers worldwide rushed to run it locally, often granting it deep access to their systems. The appeal was simple: unlike traditional assistants that suggest or summarize, OpenClaw acts. It reads emails, books travel, fills forms, controls browsers, and integrates across messaging platforms. It delivered the autonomy that Siri, Alexa, and Google Assistant never achieved.

But capability came with consequences.

A Ten‑Second Mistake That Cost Millions

During a forced rebrand, the project's old social handles were left unsecured for a matter of seconds, long enough for crypto scammers to hijack them. Fake tokens appeared almost immediately and reached a $16 million market cap before collapsing. The incident highlighted a broader truth: agentic AI attracts opportunists, exploits, and chaos. The ecosystem surrounding these agents is as volatile as the technology itself.

Security Exposed: When Agents Become Attack Surfaces

Security researchers soon found hundreds of OpenClaw instances exposed to the public internet. Many leaked API keys, unprotected messaging tokens, and even full Signal configurations. Because the agent reads and acts on incoming mail, a single malicious email was enough to compromise an entire system.

The underlying issue is architectural. To be useful, a horizontal agent needs broad permissions: file access, shell commands, browser control, email integration, and long‑running tasks. Every permission widens the attack surface, and every integration is a potential point of breach. The more capable the agent, the more dangerous the exposure.

The Architectural Flaw of Horizontal Agents

Horizontal agents attempt to do everything. They rely on plugin marketplaces, unmoderated code, and cross‑platform permissions. In OpenClaw’s case, downloaded plugins were treated as trusted code—an untenable model for anyone concerned with security or liability.
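One common alternative to treating every downloaded plugin as trusted code is to pin each reviewed plugin to a cryptographic digest and refuse anything that does not match. The sketch below illustrates that general idea only; it is not how OpenClaw or any particular marketplace works, and the names `verify_plugin`, `PINNED`, and `quote_formatter` are hypothetical.

```python
import hashlib
import hmac


class UntrustedPluginError(Exception):
    """Raised when a plugin is unknown or its contents have changed."""


def sha256_hex(data: bytes) -> str:
    return hashlib.sha256(data).hexdigest()


def verify_plugin(name: str, payload: bytes, pinned: dict[str, str]) -> bytes:
    """Return the payload only if its SHA-256 digest matches the pinned one."""
    expected = pinned.get(name)
    if expected is None:
        raise UntrustedPluginError(f"{name}: not on the allowlist")
    # compare_digest avoids leaking where the two digests differ via timing
    if not hmac.compare_digest(sha256_hex(payload), expected):
        raise UntrustedPluginError(f"{name}: digest mismatch")
    return payload


# Hypothetical example: the bytes of a plugin release that a human reviewed.
reviewed = b"def run(task): return task.upper()"
PINNED = {"quote_formatter": sha256_hex(reviewed)}

# The reviewed bytes pass; a tampered copy or an unlisted plugin is rejected.
verify_plugin("quote_formatter", reviewed, PINNED)
```

Pinning does not make a plugin safe by itself. It only guarantees that the code being run is the code someone actually reviewed.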

Enterprises understand this. Their focus is on least‑privilege frameworks, sandboxed environments, and tightly controlled integrations. The open‑source agentic ecosystem, by contrast, is still operating in a “move fast and break things” phase.
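As a rough illustration of least privilege, the sketch below gives an agent an explicit set of granted scopes and checks every tool call against it. The class `ScopedAgent`, the scope strings, and the tool names are all assumptions made for this example, not part of any real framework.

```python
from dataclasses import dataclass, field
from typing import Callable


class PermissionDenied(Exception):
    """Raised when an agent calls a tool outside its granted scopes."""


@dataclass
class ScopedAgent:
    name: str
    granted: frozenset[str]
    # tool name -> (required scope, callable)
    tools: dict[str, tuple[str, Callable[..., object]]] = field(default_factory=dict)

    def register(self, tool_name: str, scope: str, fn: Callable[..., object]) -> None:
        self.tools[tool_name] = (scope, fn)

    def call(self, tool_name: str, *args: object) -> object:
        if tool_name not in self.tools:
            raise PermissionDenied(f"{self.name}: unknown tool {tool_name!r}")
        scope, fn = self.tools[tool_name]
        # Deny by default: the scope must have been granted explicitly.
        if scope not in self.granted:
            raise PermissionDenied(f"{self.name}: missing scope {scope!r}")
        return fn(*args)


# Hypothetical booking agent that may read a calendar and nothing else.
agent = ScopedAgent(name="booking", granted=frozenset({"calendar:read"}))
agent.register("list_slots", "calendar:read", lambda day: [f"{day} 09:00", f"{day} 14:00"])
agent.register("run_shell", "shell:exec", lambda cmd: f"ran {cmd}")

slots = agent.call("list_slots", "Mon")  # allowed
# agent.call("run_shell", "ls") would raise PermissionDenied
```

The design choice here is deny by default: a tool that exists in the registry is still unusable until its scope is granted.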

The Compute Squeeze and the Rush to Local AI

The surge in DRAM prices, rising server memory costs, and global chip shortages pushed many developers toward local compute. Mac Minis became the hardware of choice for running personal agents. This trend reflects a broader shift: local AI may become a luxury, while cloud‑based AI—with guardrails and managed security—becomes the default for most users.

Why Big Tech Assistants Failed—and Why OpenClaw Didn’t

Traditional assistants were intentionally limited. They avoided risk by avoiding autonomy. OpenClaw succeeded because it embraced autonomy fully. It booked flights, managed calendars, rebooked travel when prices changed, and even used AI voice tools to call restaurants when online systems failed. This level of initiative is powerful—but also inherently risky.

The Practical Question: Should Anyone Run It?

For non‑technical users, the answer is no. The security model is immature, the risks are significant, and the required operational awareness is high. Agentic AI is entering a “wild west” phase—exciting, innovative, and unstable.

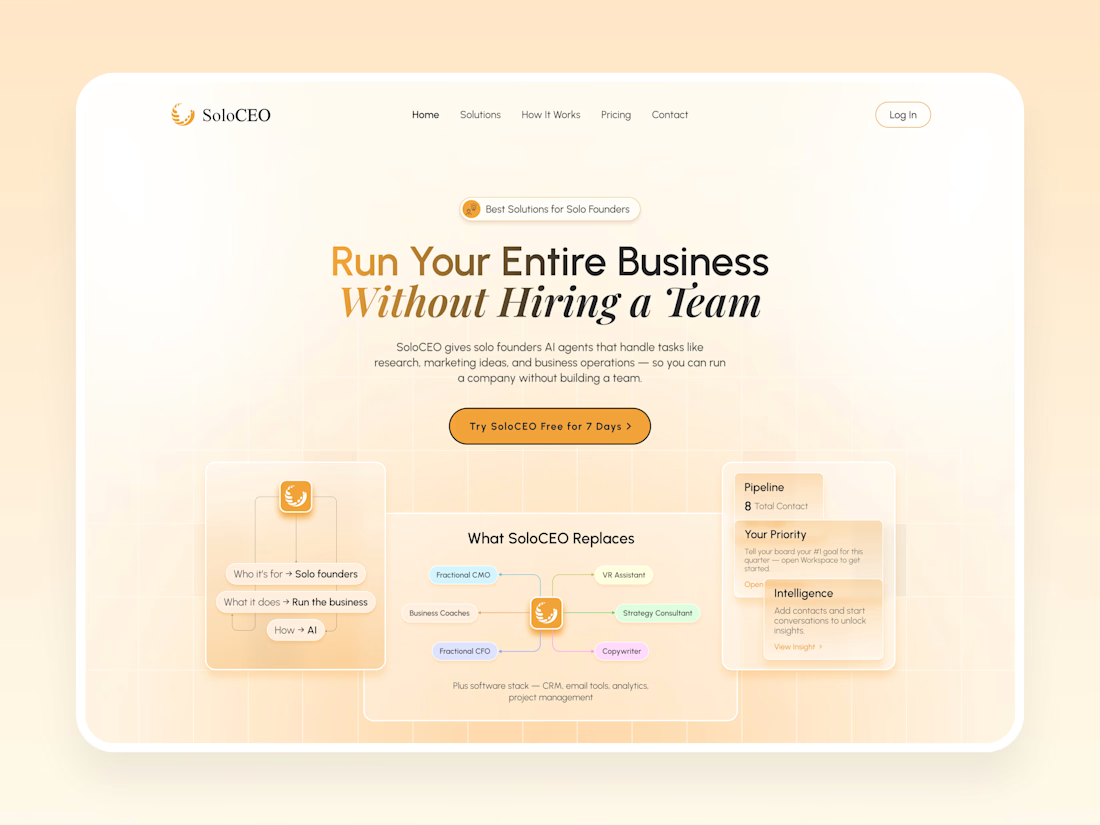

Why Vertical Agents Are the Future for Real Businesses

For tradies, coaches, influencers, accountants, and small businesses, horizontal agents are unnecessary and unsafe. What they need are vertical agents—narrow, predictable systems that solve one business problem extremely well.

Examples include:

reception and booking agents

quoting assistants

follow‑up and lead‑qualification agents

website concierge agents

micro‑agents for accounting workflows

Vertical agents avoid broad permissions, exposed ports, plugin marketplaces, and untrusted code. They run inside managed platforms such as Jotform, Hostinger, Base44, Square, and Voiceflow, which handle hosting and access control on the user's behalf. They are easy to explain, easy to maintain, and easy to price.

Micro‑Agents in Accounting: A Clear Fit

Accounting workflows are ideal for safe, narrow agents:

reconciliation assistants

BAS/tax prep organizers

accounts receivable follow‑up agents

accounts payable schedulers

advisory summarization agents

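None of these needs shell access, browser control, or plugin installation, and each targets a task whose time savings are easy to measure, so the return on investment shows up quickly.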
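To make the reconciliation case concrete, here is a minimal sketch of the matching step such an assistant might perform. It only reads two lists and reports what it could and could not pair. The matching rule (equal amount, dates within a few days), the `Entry` type, and the sample figures are illustrative assumptions, not an accounting standard or any product's actual logic.

```python
from dataclasses import dataclass
from datetime import date
from decimal import Decimal


@dataclass(frozen=True)
class Entry:
    ref: str
    day: date
    amount: Decimal


def reconcile(bank: list[Entry], ledger: list[Entry], max_days: int = 3):
    """Pair each bank line with the first unmatched ledger entry that has
    the same amount and a date within max_days. Returns
    (matches, unmatched_bank, unmatched_ledger)."""
    remaining = list(ledger)
    matches: list[tuple[Entry, Entry]] = []
    unmatched_bank: list[Entry] = []
    for b in bank:
        hit = next(
            (l for l in remaining
             if l.amount == b.amount and abs((l.day - b.day).days) <= max_days),
            None,
        )
        if hit is None:
            unmatched_bank.append(b)  # left for a human to review
        else:
            remaining.remove(hit)     # each ledger entry is used at most once
            matches.append((b, hit))
    return matches, unmatched_bank, remaining


# Made-up sample data for illustration.
bank = [
    Entry("B1", date(2026, 1, 5), Decimal("120.00")),
    Entry("B2", date(2026, 1, 6), Decimal("75.50")),
]
ledger = [
    Entry("INV-1", date(2026, 1, 4), Decimal("120.00")),
    Entry("INV-2", date(2026, 1, 20), Decimal("75.50")),
]
matches, unmatched_bank, unmatched_ledger = reconcile(bank, ledger)
```

In this sample, B1 pairs with INV-1, while B2 and INV-2 stay unmatched because their dates are two weeks apart. Amounts use `Decimal` rather than floats so that equality checks on currency values behave predictably.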

Conclusion: The Future Is Agentic—But It Must Be Safe

OpenClaw demonstrates what’s possible when an AI agent is given broad autonomy. It also demonstrates why such systems are risky for everyday users and small businesses. The future of AI isn’t a single super‑agent that does everything. It’s a coordinated team of specialized agents, each designed for one job, operating safely within controlled environments.
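A "coordinated team of specialized agents" can be pictured as a thin router in front of several single-purpose handlers. The sketch below assumes the intent has already been classified upstream; the `Coordinator` class, the intent labels, and the stub agents are hypothetical and stand in for real services.

```python
from typing import Callable

Handler = Callable[[str], str]


def booking_agent(request: str) -> str:
    return f"booking: {request}"


def quoting_agent(request: str) -> str:
    return f"quote: {request}"


class Coordinator:
    """Routes each request to exactly one narrow agent, or escalates."""

    def __init__(self) -> None:
        self._routes: dict[str, Handler] = {}

    def register(self, intent: str, handler: Handler) -> None:
        self._routes[intent] = handler

    def dispatch(self, intent: str, request: str) -> str:
        handler = self._routes.get(intent)
        if handler is None:
            # No specialist exists, so hand off rather than improvise.
            return f"escalate to a human: no agent handles {intent!r}"
        return handler(request)


team = Coordinator()
team.register("booking", booking_agent)
team.register("quote", quoting_agent)

reply = team.dispatch("booking", "Tuesday 10am")
fallback = team.dispatch("wire_transfer", "send $500")
```

The point of the fallback branch is that an unrecognized request goes to a person instead of to a general-purpose agent with wide permissions.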

That is the future I’m building toward—and the future most businesses actually need.

Victor TYan

agenticai · syntheticintelligence · verticalagents · AI Agent Engineer · Creative Strategist · Direct Response Copywriting · Claude · Google Drive · Notion
