The network for creativity

Join 1.25M professional creatives like you

Connect with clients, get discovered, and run your business 100% commission-free

Creatives on Contra have earned over $150M and we are just getting started

Built a pipeline to process millions of Kafka events using AWS Lambda and stream only relevant data into BigQuery.

Instead of moving entire datasets, we filtered events at ingestion:

Lambda triggered by Kafka through an event source mapping (event-driven, no custom polling code)

Applied business filters in-flight

Forwarded only required events to BigQuery
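A minimal sketch of the three steps above, not the actual pipeline code. It assumes the standard Lambda Kafka event shape (records grouped per topic-partition, values base64-encoded) and JSON payloads. The `event_type` field, the `RELEVANT_TYPES` rule, the table ID, and the `sink` parameter are all hypothetical names added for illustration.

```python
import base64
import json
from typing import Callable, Iterator, List, Optional

# Hypothetical business rule: only these event types are worth storing.
RELEVANT_TYPES = {"purchase", "refund"}

# Hypothetical destination table; replace with a real table ID.
TABLE_ID = "my-project.analytics.events"


def decode_records(event: dict) -> Iterator[dict]:
    """Yield JSON payloads from a Lambda Kafka event.

    Lambda groups records by topic-partition and base64-encodes each value.
    """
    for batch in event.get("records", {}).values():
        for record in batch:
            yield json.loads(base64.b64decode(record["value"]))


def is_relevant(payload: dict) -> bool:
    """Business filter applied in-flight (assumed rule, see RELEVANT_TYPES)."""
    return payload.get("event_type") in RELEVANT_TYPES


def bigquery_sink(rows: List[dict]) -> None:
    """Stream rows into BigQuery; imported lazily so the filter runs without it."""
    from google.cloud import bigquery

    errors = bigquery.Client().insert_rows_json(TABLE_ID, rows)
    if errors:
        raise RuntimeError(f"BigQuery insert failed: {errors}")


def handler(event: dict, context, sink: Optional[Callable] = None) -> dict:
    """Lambda entry point: decode, filter, forward only what passes the filter."""
    payloads = list(decode_records(event))
    rows = [p for p in payloads if is_relevant(p)]
    if rows:
        (sink or bigquery_sink)(rows)
    return {"received": len(payloads), "forwarded": len(rows)}
```

Passing the sink as a parameter keeps the filter testable locally: call `handler(event, None, sink=some_list.extend)` to see what would be forwarded without touching BigQuery.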

Result:

Significant reduction in data volume, lower costs, and faster analytics.

Key lesson: Don’t move all data—move the right data.

If you're dealing with high-volume streams, filtering early can change everything.

Related posts

Your product launch, visualized like a NASA mission.

FlowCast is an AI-powered product launch command center built entirely with Google Stitch — designed for founders, growth operators, and startup teams who need real-time launch intelligence in one place.

5 fully animated, interactive pages:

Launch Dashboard — live metrics, animated score ring, momentum feed

Launch Countdown — cinematic timer with GO LIVE trigger

Audience Intel — world heatmap, persona breakdown, sentiment arc

Content Command — AI draft generator with viral score probability

Settings — profile, integrations, notification toggles

🔗 Live Prototype: https://flowcast-mission-control-507808598152.europe-west2.run.app/

How I used Stitch:

Started from a blank canvas. Used streaming generation to build all 5 pages, in-place AI edits to fix and animate components without breaking existing layouts, and native HTML canvas motion for all animations — arcs, count-ups, pulsing maps, ghost cursor, and ripple effects. Exported directly to a live deployment link.

What makes it different:

Every metric moves. Every number counts up. Every page breathes. FlowCast doesn't just show you launch data — it makes you feel the momentum.

Nice buddy, Mobile UI's amazing

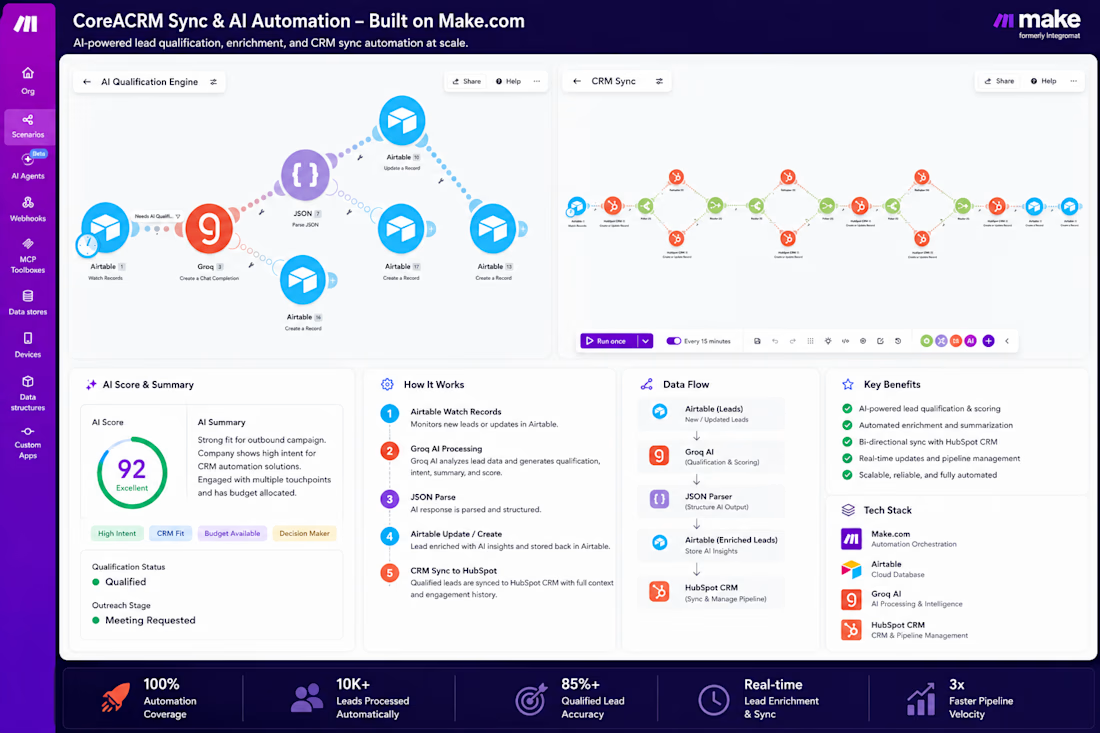

One of the most valuable components of this project was the AI Qualification & CRM Synchronization Engine.

Instead of pushing every incoming lead directly into the CRM, the system first evaluates and enriches lead data using AI.

The workflow automatically:

• Captures incoming leads

• Generates AI qualification summaries

• Assigns lead scores based on available data

• Identifies lead intent and priority

• Filters and routes qualified opportunities

• Synchronizes records into HubSpot CRM

• Creates associated contacts, companies, and deals

This ensures sales teams work with enriched, actionable opportunities rather than raw lead data.

The result is a cleaner CRM, faster lead prioritization, reduced manual review, and more efficient pipeline management.
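The score-then-route step can be sketched as below. This is an illustration only: the original workflow uses Groq AI inside Make.com for qualification, while this stand-in uses simple rules. The `Lead` fields, the weights, and the threshold are hypothetical, and no HubSpot call is made.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass
class Lead:
    email: str
    company: str = ""
    budget: int = 0
    message: str = ""


def score_lead(lead: Lead) -> int:
    """Rule-based stand-in for the AI score (weights are assumptions)."""
    score = 0
    if lead.company:
        score += 30  # a known company gives the lead more context
    if lead.budget >= 5000:
        score += 40  # stated budget above an assumed cutoff
    text = lead.message.lower()
    if "demo" in text or "pricing" in text:
        score += 30  # wording that suggests buying intent
    return score


def route(leads: List[Lead], threshold: int = 60) -> Tuple[List[Lead], List[Lead]]:
    """Split leads into (qualified for CRM sync, held back for review)."""
    qualified, held = [], []
    for lead in leads:
        (qualified if score_lead(lead) >= threshold else held).append(lead)
    return qualified, held
```

Only the `qualified` list would go on to the CRM sync step, which is what keeps raw leads out of the pipeline.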

Tech Stack: HubSpot CRM • Make.com • Airtable • Groq AI

🚀 Built a Fully Interactive Product Sales Analysis Dashboard using Google Sheets & Looker Studio

Not every business report requires complex tools or heavy infrastructure.

Sometimes, the fastest and smartest solution is combining Google Sheets with Looker Studio to create a powerful, automated, and real-time business intelligence system. 📊

For this project, I built a complete Product Sales Analysis Dashboard tracking:

💰 7.51M Total Revenue

📦 337 Total Orders

📈 Dynamic KPI Tracking

🎯 Interactive Filters & Slicers

🔄 Custom Transparent Reset Filter Button

But the real challenge wasn’t the dashboard itself — it was the data cleaning process behind it. 🛠️

The original dataset was extremely messy and inconsistent, so before visualization, I transformed and structured the data directly inside Google Sheets by:

✅ Automating date handling using formulas

✅ Creating data validation dropdown systems

✅ Building an automated Monthly Summary tracker

✅ Using conditional formatting to monitor sales targets instantly

✅ Organizing the backend into modular reporting tabs for scalable analysis

This project reminded me that strong data analytics is not about using the “biggest” tool — it’s about choosing the right workflow to deliver fast, actionable insights for decision-making.

Through projects like this, I continue improving my skills in:

📊 Data Analytics

📈 Dashboard Development

🧹 Data Cleaning

⚡ Automation

📂 Business Intelligence

📉 Data Visualization

I’m currently open to freelance projects and Data Analyst opportunities.

If your business needs help with:

Dashboard Development

Data Cleaning

Automated Reporting

Power BI / Excel / Google Sheets

SQL & Python-based analytics

feel free to connect with me.

🎥 Check out the 44-second walkthrough video below 👇

#DataAnalytics #DataAnalyst #GoogleSheets #LookerStudio #PowerBI #Excel #Dashboard #BusinessIntelligence #DataVisualization #DataCleaning #Analytics #FreelanceDataAnalyst #SQL #Python #Reporting #BusinessAnalytics
