The network for creativity

Join 1.25M professional creatives like you

Connect with clients, get discovered, and run your business 100% commission-free

Creatives on Contra have earned over $150M and we are just getting started


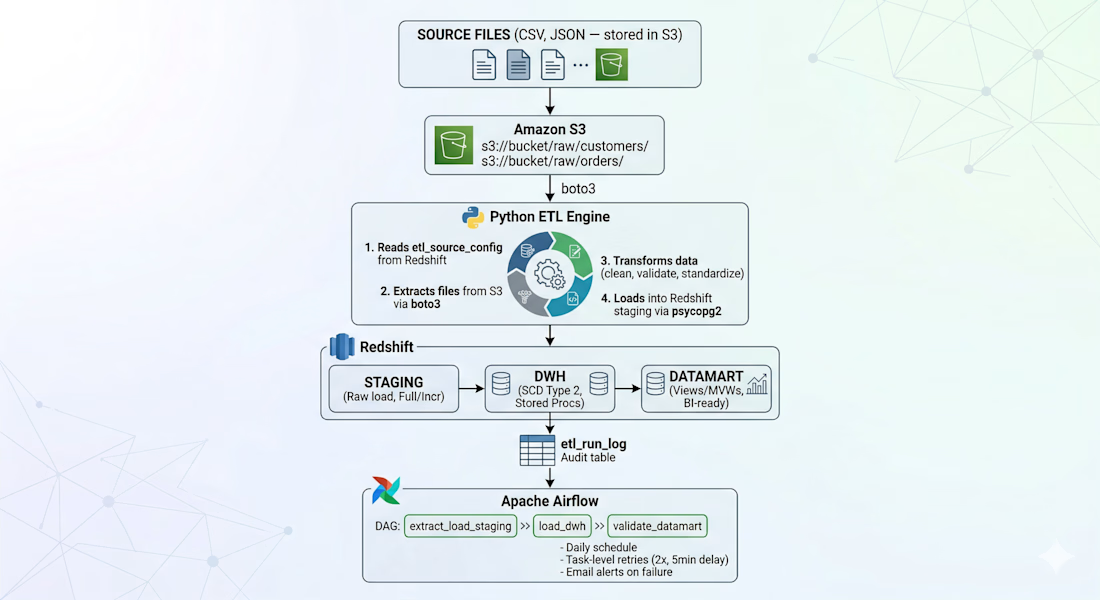

ApexFlow — Metadata-Driven ETL Framework

This project features a zero-code onboarding architecture that decouples ETL logic from data structures. By utilizing a configuration-first approach, new data sources can be integrated into the production pipeline solely through metadata updates, requiring no manual code changes or DAG modifications.
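As a rough illustration of the configuration-first idea, the sketch below uses an in-memory SQLite database as a stand-in for Redshift. The column names (`source_name`, `s3_path`, `file_format`, `target_table`, `is_active`), the bucket paths, and the `active_tasks` helper are assumptions made for this example, not the project's actual schema or code.

```python
import sqlite3

# Stand-in for the Redshift connection; the real pipeline would use psycopg2.
conn = sqlite3.connect(":memory:")
conn.execute(
    """
    CREATE TABLE etl_source_config (
        source_name  TEXT PRIMARY KEY,
        s3_path      TEXT NOT NULL,
        file_format  TEXT NOT NULL,   -- 'csv' or 'json'
        target_table TEXT NOT NULL,
        is_active    INTEGER NOT NULL -- 1 = picked up by the next run
    )
    """
)
conn.executemany(
    "INSERT INTO etl_source_config VALUES (?, ?, ?, ?, ?)",
    [
        ("orders", "s3://example-bucket/orders/", "csv", "stg_orders", 1),
        ("legacy_feed", "s3://example-bucket/legacy/", "json", "stg_legacy", 0),
    ],
)


def active_tasks(connection):
    """Return one task dict per active config row, ordered by source name."""
    cursor = connection.execute(
        "SELECT source_name, s3_path, file_format, target_table "
        "FROM etl_source_config WHERE is_active = 1 ORDER BY source_name"
    )
    columns = [col[0] for col in cursor.description]
    return [dict(zip(columns, row)) for row in cursor.fetchall()]


before = active_tasks(conn)

# Onboarding a new source is a metadata change only: one more row.
conn.execute(
    "INSERT INTO etl_source_config VALUES (?, ?, ?, ?, ?)",
    ("customers", "s3://example-bucket/customers/", "json", "stg_customers", 1),
)
after = active_tasks(conn)
print([task["source_name"] for task in after])
```

The engine code stays the same before and after the insert; only the set of rows it reads changes, which is the point of decoupling ETL logic from data structures.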

The Workflow

Dynamic Ingestion: A Python engine queries an etl_source_config table in Redshift to identify active tasks, then uses Boto3 to pull CSV/JSON files from the S3 paths defined in the config.

Automated Processing: The engine dynamically validates and standardizes data based on the metadata schema before loading it into Redshift Staging via psycopg2.

Advanced Modeling: Data flows from Staging to a DWH layer (handling SCD Type 2 history via Stored Procedures) and finally into Datamarts for BI consumption.

Orchestration: Apache Airflow manages the pipeline, handling daily scheduling, two retries per task, and automated failure alerts.
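The automated processing step above could look roughly like the following. This is a minimal sketch, assuming the metadata schema can be expressed as a mapping from column name to a type caster and a required flag; the `SCHEMA` contents, the `standardize` function, and the sample data are hypothetical and only show the pattern of validating rows before they reach staging.

```python
import csv
import io
from datetime import date

# Hypothetical metadata schema: column name -> (caster, required flag).
SCHEMA = {
    "order_id": (int, True),
    "amount": (float, True),
    "order_date": (date.fromisoformat, True),
    "note": (str, False),
}


def standardize(raw_csv, schema):
    """Split CSV text into typed rows and rejected rows with a reason."""
    valid, rejected = [], []
    reader = csv.DictReader(io.StringIO(raw_csv))
    for line_no, record in enumerate(reader, start=2):  # line 1 is the header
        # Normalise header names and trim values; skip overflow fields (key None).
        record = {
            k.strip().lower(): (v or "").strip()
            for k, v in record.items()
            if k is not None
        }
        try:
            clean = {}
            for column, (cast, required) in schema.items():
                value = record.get(column, "")
                if value == "":
                    if required:
                        raise ValueError(f"missing required column {column!r}")
                    clean[column] = None
                else:
                    clean[column] = cast(value)
            valid.append(clean)
        except ValueError as exc:
            rejected.append({"line": line_no, "reason": str(exc)})
    return valid, rejected


raw = """Order_ID,Amount,Order_Date,Note
1, 19.99 ,2024-01-05,first
2,abc,2024-01-06,
3,5,2024-01-07,
"""

valid, rejected = standardize(raw, SCHEMA)
print(len(valid), "valid rows;", len(rejected), "rejected")
```

Keeping rejected rows with a line number and reason, rather than failing the whole file, is one possible design choice; a stricter pipeline might abort the load on the first bad row instead.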

Key Impact

Scalability: Drastically reduces "Time-to-Data" by allowing non-developers to onboard new endpoints via config rows.

Resilience: A centralized etl_run_log provides a full audit trail for every automated run.

Efficiency: Eliminates redundant script creation, ensuring a single, hardened codebase manages all data movement.
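One way an audit trail like etl_run_log could be written is with a context manager that wraps each load. The sketch below again uses in-memory SQLite in place of Redshift; the column layout, the status values, and the `logged_run` helper are assumptions for illustration rather than the project's actual design.

```python
import sqlite3
from contextlib import contextmanager
from datetime import datetime, timezone

conn = sqlite3.connect(":memory:")  # stand-in for the warehouse connection
conn.execute(
    """
    CREATE TABLE etl_run_log (
        run_id      INTEGER PRIMARY KEY AUTOINCREMENT,
        source_name TEXT NOT NULL,
        started_at  TEXT NOT NULL,
        finished_at TEXT,
        status      TEXT NOT NULL,
        rows_loaded INTEGER,
        error       TEXT
    )
    """
)


def _now():
    """Current UTC time as an ISO 8601 string."""
    return datetime.now(timezone.utc).isoformat()


@contextmanager
def logged_run(connection, source_name):
    """Record a RUNNING row, then mark it SUCCESS or FAILED on exit."""
    cur = connection.execute(
        "INSERT INTO etl_run_log (source_name, started_at, status) "
        "VALUES (?, ?, 'RUNNING')",
        (source_name, _now()),
    )
    run_id = cur.lastrowid
    stats = {"rows_loaded": 0}
    try:
        yield stats
    except Exception as exc:
        connection.execute(
            "UPDATE etl_run_log SET finished_at = ?, status = 'FAILED', error = ? "
            "WHERE run_id = ?",
            (_now(), str(exc), run_id),
        )
        raise  # let the orchestrator see the failure so it can retry or alert
    else:
        connection.execute(
            "UPDATE etl_run_log SET finished_at = ?, status = 'SUCCESS', "
            "rows_loaded = ? WHERE run_id = ?",
            (_now(), stats["rows_loaded"], run_id),
        )


with logged_run(conn, "orders") as stats:
    stats["rows_loaded"] = 120  # a real load would count inserted rows

try:
    with logged_run(conn, "customers"):
        raise RuntimeError("file not found")
except RuntimeError:
    pass

log = conn.execute(
    "SELECT source_name, status, rows_loaded, error FROM etl_run_log ORDER BY run_id"
).fetchall()
print(log)
```

Because the exception is re-raised after the FAILED row is written, the log and the scheduler's retry and alerting behaviour stay consistent with each other.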


Related posts

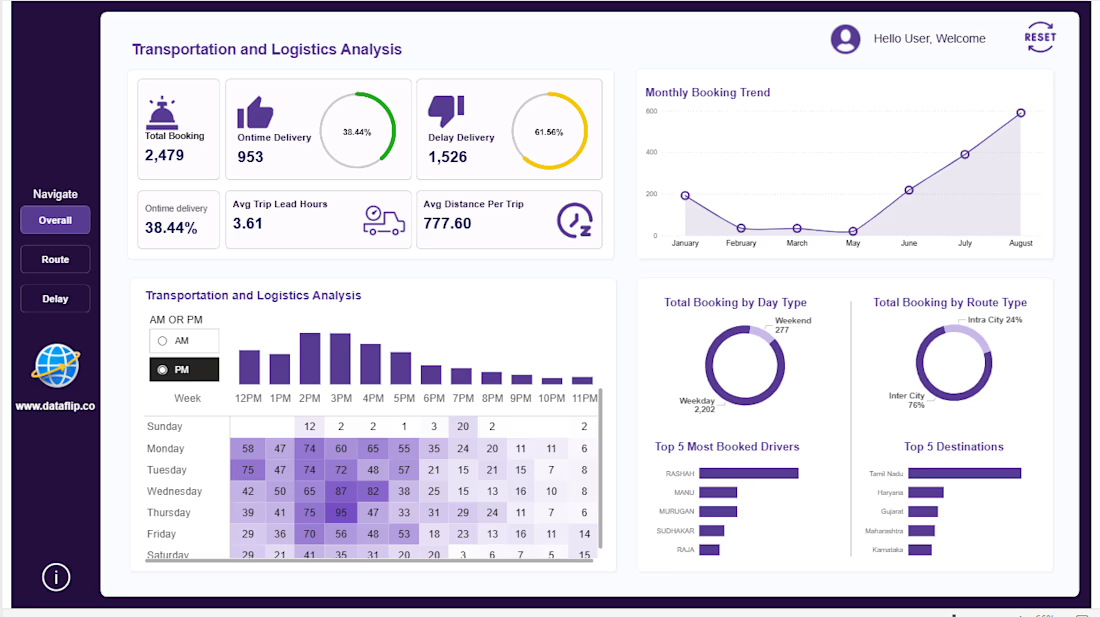

Managing logistics operations without clear insights can lead to delays, inefficiencies, and lost revenue. This dashboard is built to give you complete visibility into your transportation performance — all in one place.

A powerful analytics solution that helps you track bookings, delivery performance, delays, and operational efficiency in real time. Designed for clarity and speed, so you can make decisions without digging through raw data.

🔹Monitor on-time vs delayed deliveries instantly

🔹Track booking trends and operational workload over time

🔹Analyze trip efficiency with lead time and distance metrics

🔹Identify peak hours and demand patterns

🔹Evaluate top drivers and most frequent routes/destinations

🔹Compare weekday vs weekend performance

If you want a clean, professional dashboard that turns your logistics data into real operational insights, I can build a custom solution tailored to your business needs.

The PM peak hour heatmap by day is the most actionable view here — you can see Wednesday 3PM spiking immediately without digging through any data. That kind of instant pattern recognition is exactly what operations managers need to plan driver allocation. What industry was this built for?

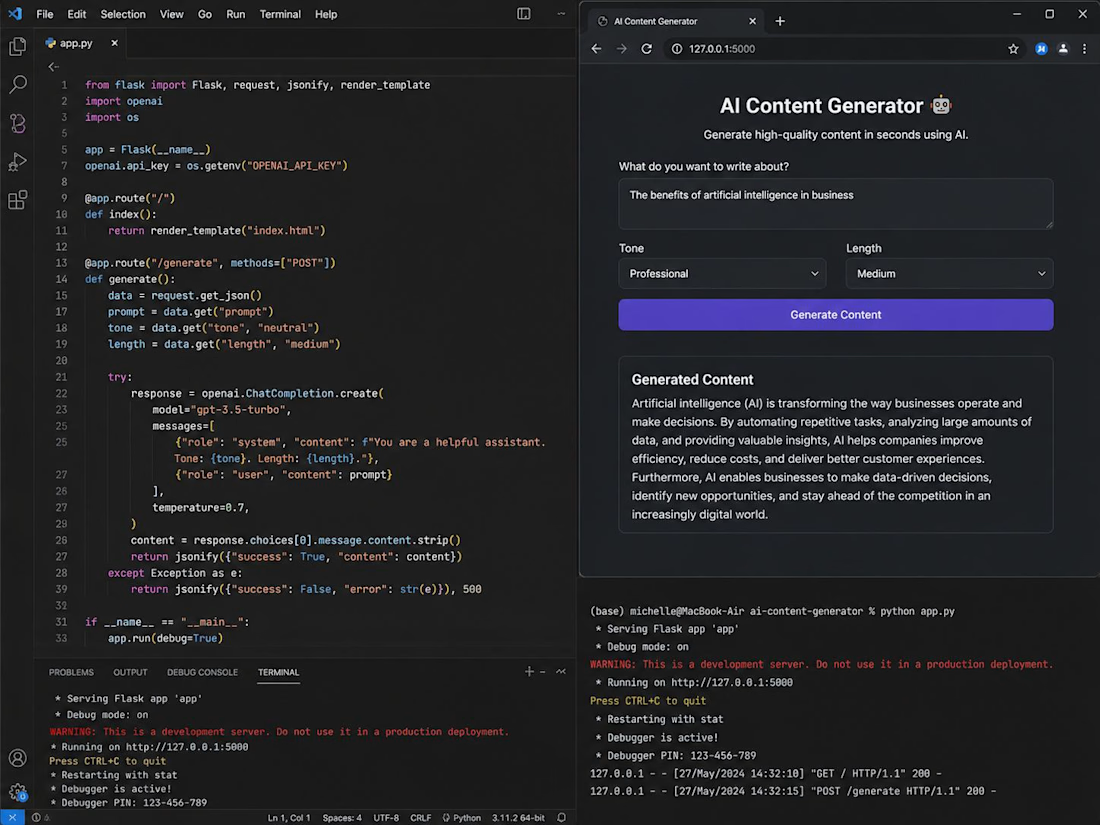

I built an AI-powered content generation tool designed to help users create high-quality written content instantly. The application allows users to input prompts, select tone and format, and generate structured outputs using advanced language models

The system was designed with a clean and intuitive interface, focusing on usability and efficiency. It automates content creation processes such as blog writing, email drafting, and social media copy, significantly reducing manual effort

This project demonstrates my ability to design and develop scalable AI-driven applications, combining backend logic, API integration, and user-focused design to deliver real-world solutions

🚀 Stop losing 15–20% of your Shopify revenue every month.

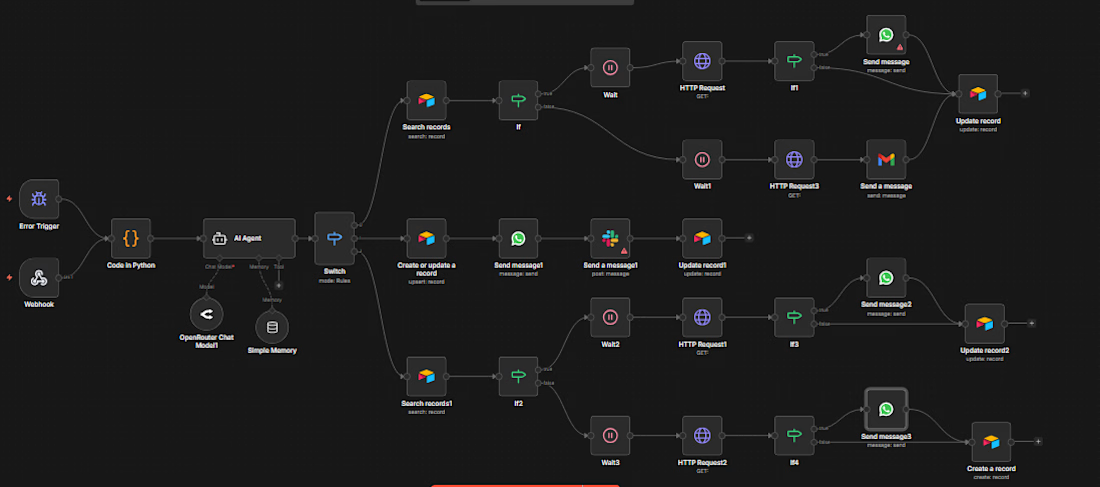

I build a fully automated AI command center for your Shopify store — powered by n8n, OpenRouter AI, and WhatsApp Business. No code required on your end. Setup in 48 hours.

━━━━━━━━━━━━━━━━━━━━

🤖 WHAT THE SYSTEM DOES

━━━━━━━━━━━━━━━━━━━━

✅ Abandoned Cart Recovery

When a customer leaves without buying, the AI analyzes their cart, waits for the perfect moment, then sends a hyper-personalized WhatsApp message — not a generic blast. Average recovery rate: 12–18%.

✅ Smart CRM (Auto-updated)

Every order triggers automatic customer profiling — new, loyal, or at-risk. Data synced to Airtable or Google Sheets in real time.

✅ Team Alerts via Slack

Big order? Low stock? Your team gets an instant Slack notification with all the details — before you lose the sale.

✅ Multi-channel Communication

WhatsApp + Email + Slack — all managed from one intelligent system that decides the right channel at the right time.

✅ Data Engineering (Python)

Raw Shopify webhooks are cleaned, normalized, and enriched automatically. 100% data accuracy.

━━━━━━━━━━━━━━━━━━━━

💡 WHY THIS IS DIFFERENT

━━━━━━━━━━━━━━━━━━━━

Unlike Klaviyo or Omnisend, this system uses real AI decision-making — not rigid rules. It thinks, adapts, and acts like a sales team that never sleeps.

WhatsApp open rate: 90% vs 20% for email.

━━━━━━━━━━━━━━━━━━━━

📦 WHAT YOU GET

━━━━━━━━━━━━━━━━━━━━

• Full n8n workflow (exported JSON, ready to import)

• Complete setup & configuration

• AI prompts optimized for your store

• WhatsApp + Slack + Email integration

• Google Sheets / Airtable CRM setup

• 7-day support after delivery

• Video walkthrough of the system

━━━━━━━━━━━━━━━━━━━━

🛠 TECH STACK

━━━━━━━━━━━━━━━━━━━━

n8n · OpenRouter AI · WhatsApp Business API · Python · Shopify Webhooks · Airtable · Slack · Gmail
