The network for creativity

Join 1.25M professional creatives like you

Connect with clients, get discovered, and run your business 100% commission-free

Creatives on Contra have earned over $150M and we are just getting started

Back to feedPost

Deployed a multi-model CPU inference cluster on 2× HP ProLiant

DL360 Gen9 servers (376GB RAM total). Running 5 concurrent LLM

endpoints: Qwen3-Coder-30B, Qwen3-Next-80B, GLM-4.7-Flash,

Granite-4.0-Tiny — all quantized (GGUF/Q4_K_M). Optimized with

ik_llama + MKL for +62% throughput. Ollama-compatible API,

Open WebUI frontend, Opik observability.

The network for creativity

Join 1.25M professional creatives like you

Connect with clients, get discovered, and run your business 100% commission-free

Creatives on Contra have earned over $150M and we are just getting started

Related posts

Power your business with future-ready AI. ⚡

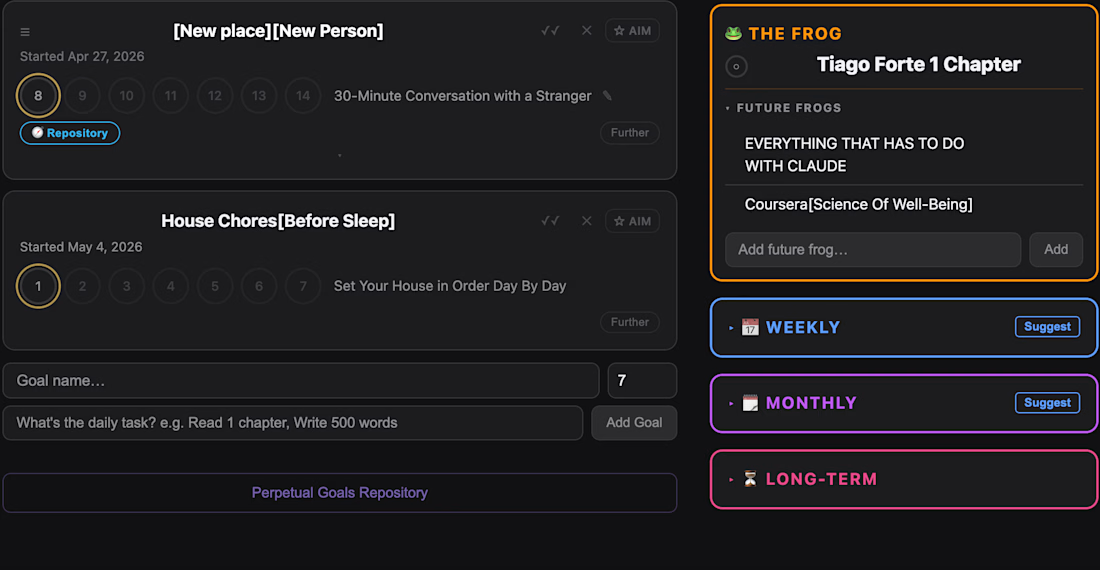

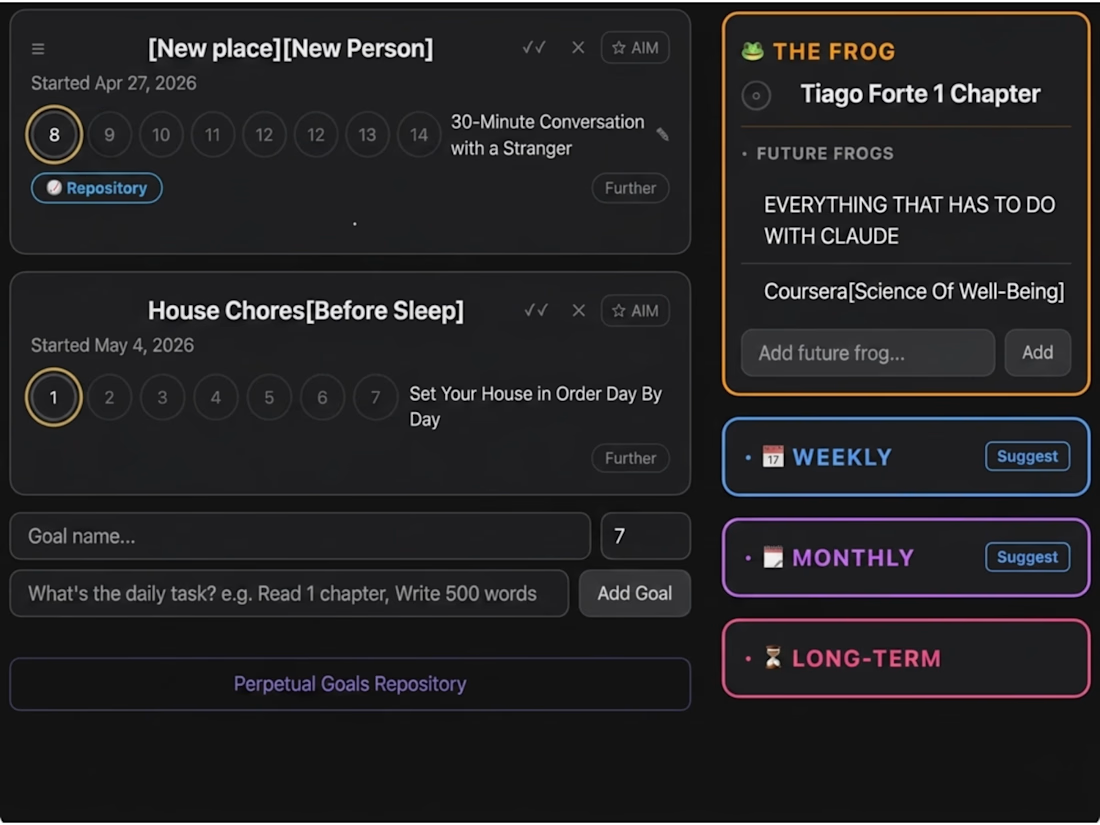

A goal-tracking sub-system that grounds an AI assistant in the user's full life-state — target amount, hard deadline, days remaining, current balance, pace status (ahead / on-pace / behind), and reflection streak.

Core features:

Multi-entry timestamped reflection log — plain Enter submits, Shift+Enter newline, optimistic UI, all entries inside one unified container with centered timestamp dividers

Ephemeral Summarize chat modal — loads today's braindumps as live AI context, brother-tone system prompt, conversation NOT persisted (privacy by design)

Nightly auto-summary cron at 23:55 — pulls reflections, runs through Claude, creates a standalone daily summary that downstream AI engines pick up automatically

Pace analysis — surfaces required-vs-actual daily rate, projected gap at deadline, status flag

Each AI engine pulling this data adapts its suggestions based on whether the user is ahead or behind on their goal — recursive context that compounds over time.

Tech: Claude API, SQLite, Node.js cron, custom retrieval logic.

A weekly and monthly AI suggestion engine that ingests the full user state and returns three ranked, goal-aligned recommendations per call.

What goes into the LLM context:

Chief Definite AIM (text, target, deadline, days remaining, current balance, pace status)

Perpetual goals (with AIM-link flags so aligned goals weight heavier)

Non-negotiable habits and their streaks

Recent insights with integration status (active / implemented / integrated:date)

Last 7–14 daily summaries

Past 28-day (weekly) or 90-day (monthly) suggestion history — for recursive learning

What it returns: three suggestion cards per call, each with priority + difficulty + AIM-alignment + tags + rationale.

Includes:

Intent gate — default AIM-aligned mode vs. custom prompt mode

Auto-detected response shape (ranked / analysis / hybrid)

Unified rate limiting across four endpoints (weekly default, monthly default, weekly custom, monthly custom)

Persistence to suggestion_history table for the next round's recursive context

Tech: Claude API, OpenAI API, custom prompt engineering, SQLite, Node.js, in-memory rate limiting.

Trending

Claude

Claude has entered the design space. How are you using Claude Design?

Contra University

Learn from expert creatives how to earn more using next-gen AI tools.

creativeaiflow

Creative AI workflows are evolving. What tools do you use, and what are their strengths and weaknesses?

portfolioreview

The best portfolios tell a story, not just show a grid. Share yours for feedback.

freelancerlife

Freelancer life is wins, pivots, and everything in between. What’s yours right now?