The network for creativity

Join 1.25M professional creatives like you

Connect with clients, get discovered, and run your business 100% commission-free

Creatives on Contra have earned over $150M and we are just getting started


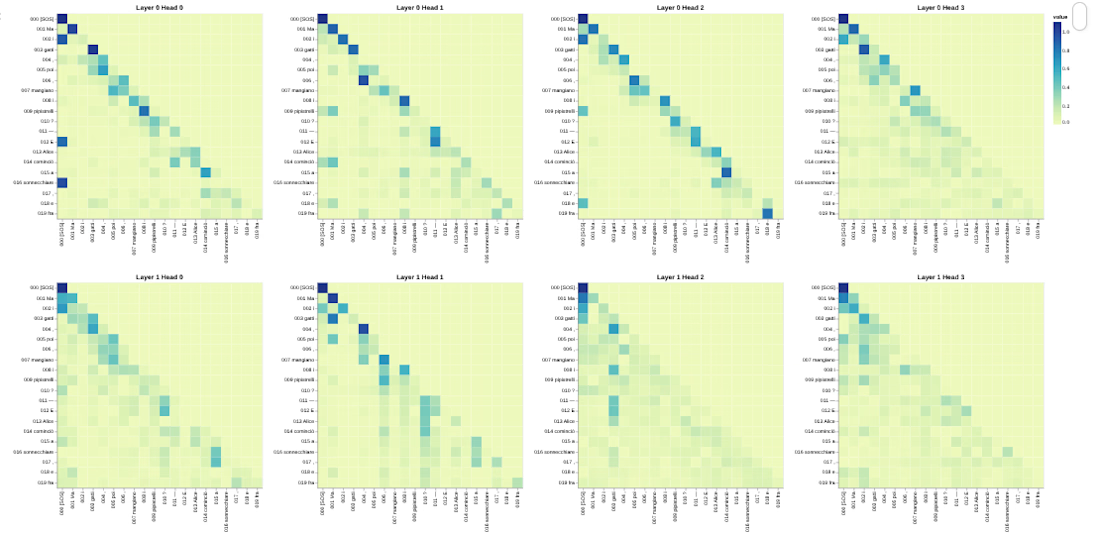

This project implements the Transformer architecture from scratch in PyTorch for bilingual neural machine translation.

This implementation follows the original paper: Attention Is All You Need – Vaswani et al., 2017 https://arxiv.org/abs/1706.03762

Instead of relying on high-level libraries like Hugging Face Transformers, every architectural component is implemented manually to demonstrate a deep understanding of attention mechanisms, masking, and sequence modeling.
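As a rough illustration of what "attention mechanisms" and "masking" involve, here is a minimal sketch of scaled dot-product attention with a causal mask, following the formula softmax(QK^T / sqrt(d_k))V from Vaswani et al. It is not the project's code. It is written in dependency-free Python so the arithmetic stays visible, and the function names (`softmax`, `causal_mask`, `scaled_dot_product_attention`) and the toy inputs are assumptions made for this example. In PyTorch the same steps would typically map to `torch.matmul`, `Tensor.masked_fill`, and `torch.softmax`.

```python
import math


def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def causal_mask(size):
    """mask[i][j] is True when position i may attend to position j (j <= i)."""
    return [[j <= i for j in range(size)] for i in range(size)]


def scaled_dot_product_attention(queries, keys, values, mask=None):
    """Return (outputs, weights) for softmax(Q K^T / sqrt(d_k)) V.

    queries, keys, and values are lists of equal-length float vectors
    (hypothetical toy inputs, one vector per sequence position).
    """
    d_k = len(keys[0])
    scale = math.sqrt(d_k)
    outputs, weights = [], []
    for i, q in enumerate(queries):
        # Similarity of this query to every key, scaled by sqrt(d_k).
        scores = [sum(a * b for a, b in zip(q, k)) / scale for k in keys]
        if mask is not None:
            # Disallowed positions get -inf, so softmax assigns them zero weight.
            scores = [s if mask[i][j] else float("-inf")
                      for j, s in enumerate(scores)]
        row = softmax(scores)
        weights.append(row)
        # Weighted sum of the value vectors, one output component at a time.
        outputs.append([sum(w * v[c] for w, v in zip(row, values))
                        for c in range(len(values[0]))])
    return outputs, weights


# Toy self-attention over three 2-dimensional token vectors.
x = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
outputs, weights = scaled_dot_product_attention(x, x, x, mask=causal_mask(3))
```

With the causal mask, the first position can only attend to itself, which is the property a decoder relies on so that a target token cannot see the tokens that come after it during training.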

Related posts

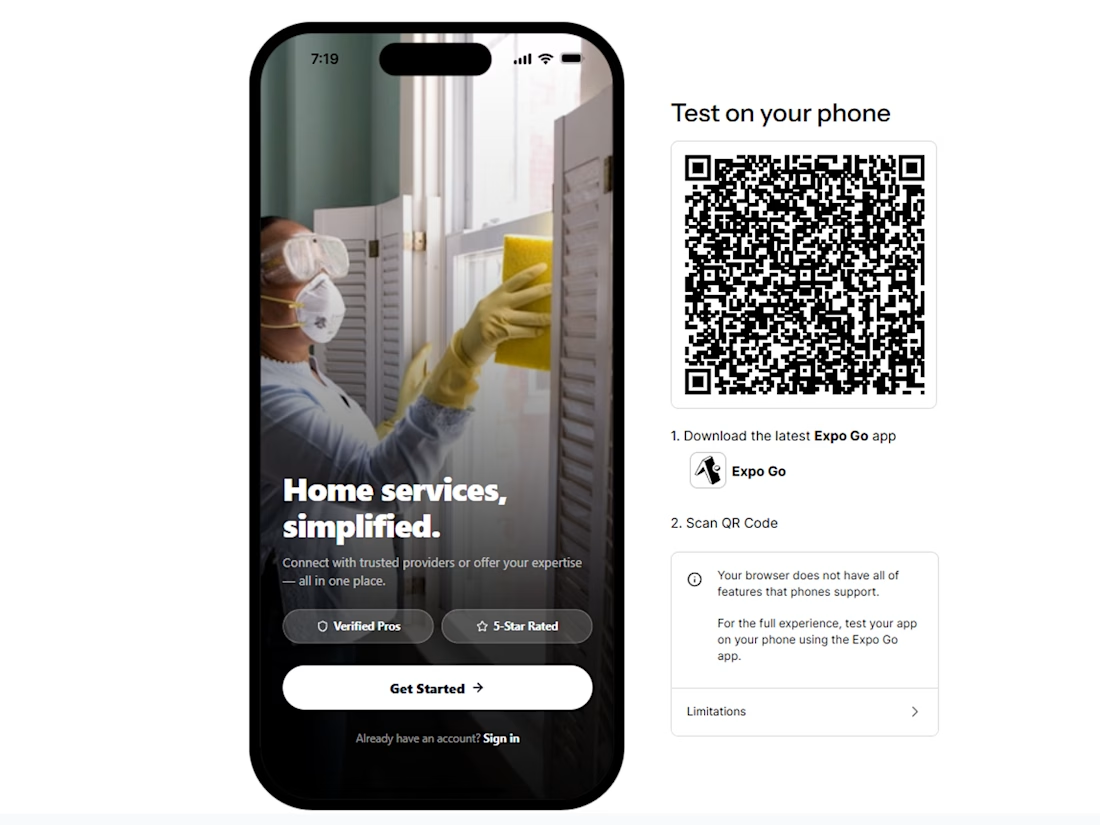

I’m building Service Connect.

A remixable service marketplace app template for the Anything Marketplace challenge.

The idea is simple: a ready-to-customize mobile app for local service businesses, cleaning companies, home services, beauty services, maintenance teams, or any niche where clients need to book trusted providers.

The app is built as a multi-tenant service platform with two sides:

Clients can browse services, request jobs, manage bookings, chat with providers, track job status, leave reviews, and handle follow-ups.

Service providers can receive requests, manage availability, update job progress, communicate with clients, and keep all work organized in one place.

The template is designed to be localized, rebranded, and remixed into different verticals without rebuilding the whole product from scratch. A cleaning marketplace, handyman app, private home services platform, local beauty booking app, or niche maintenance service can all start from the same foundation.

Built entirely in Anything for the app template challenge.

Link to preview:

https://www.anything.com/mobile-preview/91e25555-84d7-443e-8860-f67fe0fb1c2e

Link to X post:

https://x.com/jonas_tmb/status/2050997515668144336?s=20

Looks cool. I think the sign-in UI needs minor enhancement, but overall it's very clean. Good luck!

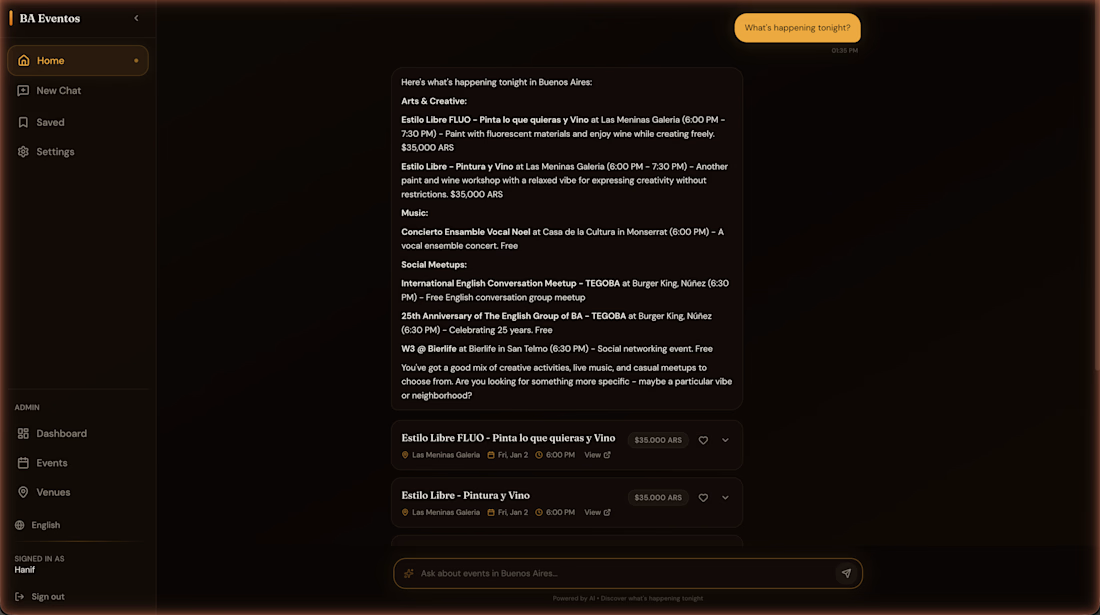

BA Eventos: built an AI event discovery product for Buenos Aires using 3,200+ events from five scraped sources. Users can search, save, share, and get directions through a Claude-powered agent with grounded answers, semantic search with OpenAI embeddings and pgvector, bilingual enrichment, and background scraping jobs. Case study: https://www.hanifcarroll.com/projects/ba-eventos/

Too many tools. Too much chaos 😶‍🌫️

This is the all-in-one agency dashboard that helps you 🚀 📈

→ Find better clients 🌟

→ Close deals faster 💰

→ Manage influencers & campaigns 📝

→ Track performance in real time 🕰️

All from a single platform ✅

Work smarter. Scale faster.

Presenting: ClientFlow
