Ayush Shukla

UI/UX Designer • No-code Builder • AI Architect

- $1k+ earned

- 54 followers

Some apps help you read.

I wanted to build one that makes you feel something while reading.

So I built Lumina — a calm, immersive reading sanctuary designed for people who romanticize books, late-night reading sessions, reflections, and quiet moments.

The idea started with a simple thought:

What if a reading app didn’t feel like a utility…

but like a peaceful space you actually wanted to return to every night?

That became the foundation of Lumina.

Every screen was crafted to feel soft, emotional, and intentional — blending editorial minimalism, cinematic atmospheres, ambient gradients, cozy typography, and immersive interactions into one seamless experience.

✨ Features of Lumina:

• Personalized home dashboard

• Immersive distraction-free reader

• Beautiful audiobook player

• Reflection & reading journal system

• Curated mood-based exploration

• Elegant library management

• Dynamic themes & typography controls

• Premium subscription experience

• Soft ambient UI with calming interactions

Try: Lumina (https://lumina-18257.bubbleapps.io/version-test?debug_mode=true)

The goal wasn’t just functionality.

It was creating a feeling.

A reading experience that feels warm, dreamy, and deeply personal.

Built entirely with @Bubble 🫧


Built “Blue Star” for the Melius Creative Challenge ✨

Blue Star is a surreal animated dream world inspired by Vincent van Gogh paintings and lucid dreaming.

I wanted to create something that felt less like “AI-generated content” and more like drifting through a living painting at midnight.

The workflow was built entirely inside Melius using connected image + video generation nodes to maintain a consistent painterly dream aesthetic across every scene.

The project moves through:

• floating staircases in endless skies

• dreamlike glowing hallways

• trains crossing oceans in the night

• cosmic sunflower fields

• surreal moonlit worlds

The goal wasn’t realism.

It was emotion, atmosphere, and the feeling of being inside a dream you don’t want to wake up from.

Project link: https://app.melius.com/projects/9a45f4e3-69f6-4ef8-b5ed-fc333c2647c8/canvas/fedf41cc-6f43-4843-a766-fc241927b11c

Huge thanks to the Melius team for creating such a visually creative workflow experience 🌌


Most interfaces are designed to be used.

I wanted to build one that plays back.

And here's Monster Snacks — a living cartoon UI where tiny chaotic monsters exist inside the interface itself.

Buttons panic when hovered. Menus stretch like chewing gum. Cards bounce with jelly physics. Monsters eat UI elements, chase the cursor, trigger candy explosions, and turn the entire screen into a playable animated playground.

The goal wasn’t to make a “clean UI”.

It was to create something that feels: ✨ impossible ✨ alive ✨ weirdly joyful ✨ impossible to ignore

Every interaction was designed with exaggerated squash/stretch motion, playful chaos, cartoon physics, and nonstop visual reactions to make the interface feel more like an animated toy universe than a website.

Built with Rive for the Impossible UI Challenge 🍬👾


It started with a simple thought —

why do pet apps feel so lifeless… when pets are anything but?

Scrolling through static listings never felt right.

No personality. No emotion. No reason to stay.

So I built something different.

MeowVerse 🐾

A playful, living world where discovering pets feels less like browsing…

and more like exploring.

Here’s what that looks like:

• Swipe through pets in a fun, Tinder-style explore feed

• Discover nearby pets on an interactive world map

• Each pet has its own personality, mood, and story

• Feed them, talk to them, or plan a visit

• Adopt seamlessly through a lightweight, playful flow

• Browse a pet shop that actually feels enjoyable to use

• Complete daily missions and interact beyond just “viewing”

Try: MeowVerse (https://www.anything.com/mobile-preview/964a37bd-106f-4a96-a2f0-a30a6e6d71da)

The focus wasn’t just features —

it was making every interaction feel alive.

Subtle animations. Expressive details. Smooth transitions.

No dead clicks. No static screens.

Just a small world you can tap, swipe, and get lost in.

Curious to hear what you think 👀


Healyo is a platform dedicated to helping orphaned and underprivileged children across India — giving them access to education, nutrition, shelter, and healthcare.

The name comes from one word: heal. Because that's what these kids need. Not pity. A future.

I built the entire site on Zo Computer — no code, just vision. I described what I wanted and Zo brought it to life screen by screen.

Featured screens:

- Welcome page

- Mission window

- Stories of Hope

- Programs & Initiatives

- Donation window

- About Us

- Gmail-Integrated Contact Page

Healyo: www.healyo.pro (https://www.healyo.pro)

Zo didn't just help me build a website. It removed every excuse I had for not starting.

We're in the process of making it official, and this build is the first real step toward that.


I used to spend hours trying to make one product look consistent across different visuals.

Every style felt disconnected. Lighting changed. Colors drifted. Nothing felt like one brand.

So I built something to fix that.

Now I just drop in a single image—and it builds the entire visual system for me.

It analyzes the product, extracts its Brand DNA, and generates five cinematic visuals across different themes—while keeping everything consistent.

And then it turns those into a premium ad.

Same product. Same identity. Completely different worlds.

What it does:

– Extracts color, lighting, materials, and visual identity

– Generates 5 themed visuals (luxury, minimal, futuristic, street, bold)

– Maintains perfect consistency across all outputs

– Creates a cinematic, high-end ad automatically

– Works from just one input image

Try Brand DNA: https://morphic.com/workflows/019dc659-9f87-73a5-92b3-269ecbfc1ee9/brand-dna

Walkthrough video: https://www.loom.com/share/43954ac8fe7d440c8b39621be08c1111

If you create anything visual, you should try this.


A product is easy to build.

A brand is what makes it unforgettable.

So I built BRANDRY!

A workflow that takes a single input—a keyboard—and expands it into a complete, premium brand system.

—

I didn’t want random outputs.

I wanted something that thinks like a design studio.

Everything starts with the input image.

I analyze:

• materials

• lighting

• form

• design language

From that, the entire brand direction is derived.

—

Then the system builds across four layers:

1. Logo:

A refined mark inspired by the product—never copied, always reinterpreted.

2. Moodboard:

Colors, textures, and visual cues extracted and elevated into a cohesive direction.

3. Brand Identity:

A dark, premium system—typography, palette, and visual language working together.

4. Website Mockup:

A cinematic presentation—displayed on devices, placed in a real environment, lit like a product ad.

And to bring everything together, I leaned heavily on the Compositor Node.

Every output is carefully layered:

• controlled lighting

• depth and shadows

• balanced composition

Then inside the Creative Code Node, I used Three.js to construct a 3D version of the product.

That added:

• real depth

• accurate lighting interaction

• natural integration into scenes

The product doesn’t feel placed—it feels built into the frame.

Workflow: https://app.fuser.studio/view/03af9496-bc89-4f76-a1b7-00c25c7fb513

…this one’s my second submission.


Most AI outputs look impressive.

But they still don’t feel like a real ad.

That gap is exactly what I set out to close with AUREL🚀

—

I didn’t approach this like prompting.

I approached it like building a creative pipeline.

Everything starts with structure.

I used the Compositor Node as the core—treating every visual like a layered campaign build:

• model as the primary subject

• product placed with intent

• controlled lighting and shadow passes

• grain and texture for depth

Nothing is random.

Every layer is composed to feel like it went through an actual art direction process.

That’s where the “ad feel” comes from.

—

Then I pushed the product beyond flat placement.

Inside the Creative Code Node, I brought in Three.js to generate a 3D version of the product.

This changed everything.

Now the product:

• has real depth

• reacts to light correctly

• sits naturally inside the frame

It’s not pasted in—it’s constructed into the scene.

—

From there, I designed the system to branch into four distinct campaign directions:

- Luxury Editorial — minimal, high-contrast, fashion-first

- Streetwear — gritty, neon-lit, high energy

- Futuristic — cinematic, immersive, sci-fi driven

- Surreal — abstract, dreamlike, expressive

Each theme has its own composition logic, not just a visual style.

Workflow: https://app.fuser.studio/view/fb2d0257-c565-493f-a2a8-099d2b9dd598


From Blank Slide to $12M Series A in 4 Seconds. 🚀

Most tools give you a template. I built an AI Agent that gives you a vision.

Pitch decks are hard. Staring at a blank screen at 3 AM is harder.

So I built PitchDeck to bridge that gap: a high-fidelity, autonomous presentation engine that doesn't just "generate text"; it architects a cinematic experience.

Features:

💎 Deep Glassmorphism: Ultra-blur backdrops & micro-borders.

📊 SVG Visual Engine: Dynamic charts, roadmaps, and diagrams.

🕹️ 3D Presence: Spline-inspired cards with 3D tilt & motion.

⚡ Demo-Ready: Zero dependencies, zero external APIs, 100% stable.

The Secret Sauce: Paper MCP 🎨

I didn't just write code; I designed it. By leveraging Paper MCP, I fused high-end UI design with autonomous logic in real-time. It allowed me to fine-tune the staggered entrance animations and 3D physics directly on the canvas, ensuring a premium "Apple-level" finish.

One-sentence idea. 10 world-class slides. Built to win.

Stack: Paper MCP • Antigravity

Try PitchDeck: https://teal-daifuku-ac6404.netlify.app/

Looking for a high-fidelity prototype that wows? Let’s talk.


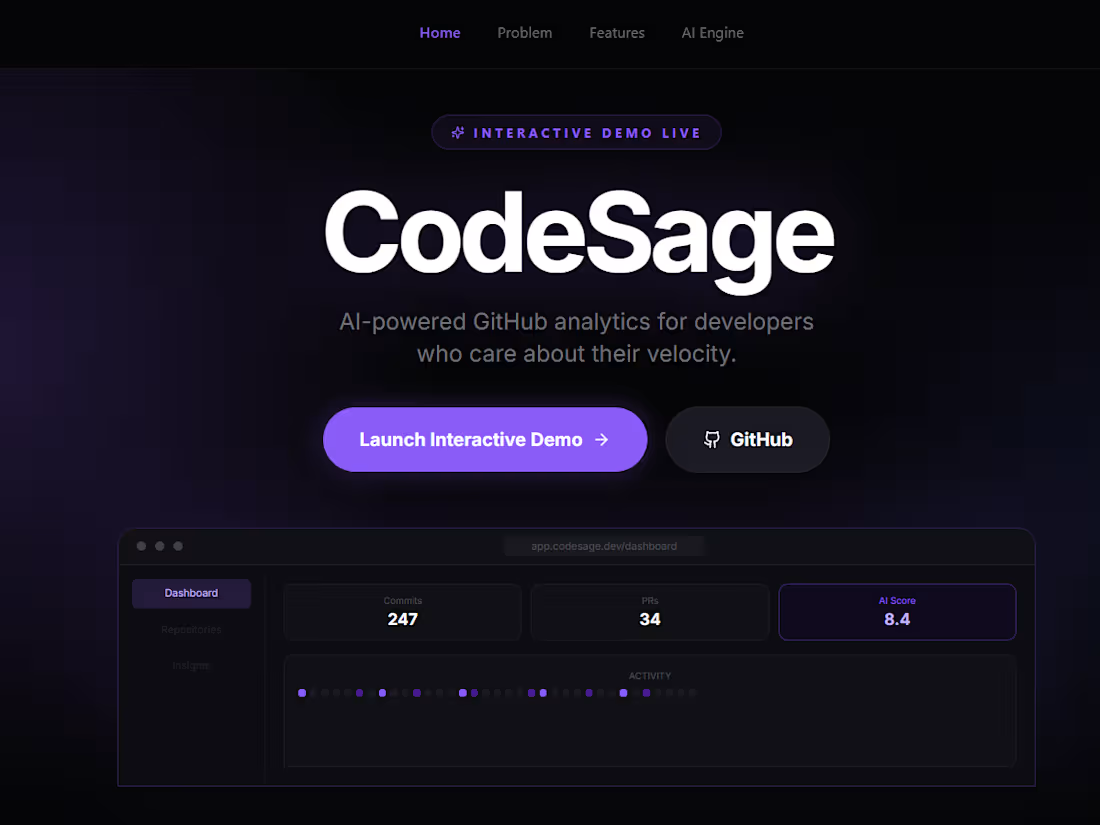

I built something wild for the Paper Challenge🚀

It turns any GitHub repo into a visual case study — instantly.

No writing. No designing.

Just drop a repo link… and the canvas builds itself.

That’s CodeSage💯

Most devs have solid projects…

but they’re buried in README files nobody reads.

CodeSage changes that.

It reads your actual code — architecture, stack, commits —

and transforms it into a clean, dark, presentation-ready case study.

The real unlock?

Paper MCP.

I connected my AI agent directly to the canvas.

So instead of designing manually…

the agent builds everything:

• Sections

• Layout

• Insights

• Structure

All generated directly on the canvas.

No drag. No drop.

Just output.

Pick any repo → get 3 analysis modes:

Each one gives:

→ Complexity score

→ PR velocity

→ Language breakdown

→ Full Refactor Roadmap

Try CodeSage: https://codesage-871524277866.us-central1.run.app/

Tech stack:

Paper MCP · Gemini API · GitHub API · Antigravity
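CodeSage's exact formulas aren't described in this post, but two of the listed metrics can be illustrated. The sketch below is hypothetical and is not CodeSage's code. It assumes the language breakdown comes from GitHub's `GET /repos/{owner}/{repo}/languages` endpoint, which returns bytes of code per language. It also assumes a definition of PR velocity: merged pull requests per week over a recent window, based on each pull request's `merged_at` timestamp. The function names `languageBreakdown` and `prVelocity` and the 28-day default window are illustrative choices.

```javascript
// Hypothetical sketch of two CodeSage-style metrics (not CodeSage's actual code).

// Language breakdown: input mirrors GitHub's /repos/{owner}/{repo}/languages
// response, an object mapping language name -> bytes of code.
// Returns percentages (one decimal place), largest first.
function languageBreakdown(languageBytes) {
  const entries = Object.entries(languageBytes);
  const total = entries.reduce((sum, [, bytes]) => sum + bytes, 0);
  if (total === 0) return [];
  return entries
    .map(([language, bytes]) => ({
      language,
      percent: Math.round((bytes / total) * 1000) / 10,
    }))
    .sort((a, b) => b.percent - a.percent);
}

// PR velocity (assumed definition): merged PRs per week over the last
// `windowDays` days. `mergedAt` holds ISO 8601 strings like a pull request's
// `merged_at` field; null means the PR was closed without being merged.
function prVelocity(mergedAt, now = new Date(), windowDays = 28) {
  const end = now.getTime();
  const start = end - windowDays * 24 * 60 * 60 * 1000;
  const mergedInWindow = mergedAt
    .filter((ts) => ts !== null) // drop unmerged PRs
    .map((ts) => Date.parse(ts)) // ISO string -> epoch milliseconds
    .filter((t) => t >= start && t <= end); // keep merges inside the window
  return mergedInWindow.length / (windowDays / 7);
}
```

In a real setup, the input to `languageBreakdown` would come from a request such as `fetch("https://api.github.com/repos/OWNER/REPO/languages")`, where `OWNER/REPO` is a placeholder.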


Most ads look the same.

So I built DooM🚀

All it needs:

→ 1 product image

→ 1 model (or your own photo)

And you don’t get one output.

You get a multi-aesthetic campaign:

• Luxury fashion editorial shots

• Bold street-style visuals

• Clean, minimal brand ads

• Cinematic storytelling frames

• Ultra-premium studio product shots

• Experimental artistic visuals

And then…

It goes beyond images.

DooM generates a cinematic art film — not a generic ad, but a moody, neon-lit, emotionally driven visual story.

The kind of thing that feels like a brand, not just shows one.

Now anyone can become their own model.

Try DooM: https://app.flora.ai/techniques/doom

No generic AI look.

Just high-end, agency-level output in minutes.

This isn’t content.

It’s brand presence.


Most UI/UX designers don’t have a design problem.

They have an idea problem.

Staring at a blank canvas → scrolling Dribbble → repeating the same patterns.

So I built something to fix that👉 UX Forge

Drop in any product, and it instantly generates:

• 2 website UI concepts

• 2 mobile app designs

• 1 cinematic 3D web experience

All with different styles, all based on the product’s actual visual identity.

No random inspiration.

No copy-paste designs.

Just structured, product-driven creativity.

It helps you:

→ Explore multiple design directions in seconds

→ Break creative blocks instantly

→ See how a single product translates across platforms

→ Push beyond safe, repetitive UI patterns

Try👉 https://app.flora.ai/techniques/ux-forge

This isn’t about replacing designers.

It’s about giving you better starting points—faster.

Because great design doesn’t start with pixels.

It starts with perspective.

And UX Forge gives you 5 at once.


Stop rebuilding brand identity from scratch!

I built Brandy, a Technique that turns simple inputs into a complete brand system in minutes.

Not just logos.

Not just moodboards.

A full, structured pipeline:

→ Brand identity visual

→ Real-world mockup

→ 2 logo variations

→ Clean moodboard

All generated from one input layer.

Most designers:

→ Rewrite the same prompts

→ Rebuild the same systems

→ Start from zero every time

Brandy fixes that🚀

What makes this different:

• Originality → Not a single-output tool, but a full design system engine

• Usefulness → Solves repetitive brand setup work

• Craft → Clean inputs, controlled outputs, no AI chaos

• Repeatability → Same system works for any brand, instantly

• Speed → Minutes instead of hours

I focused on one thing:

Making AI outputs feel like real design work — not random generations.

Built using FLORA’s Technique Builder.

Try it yourself👉 https://app.flora.ai/techniques/brandy

Also, this was my first time using FLORA, and it was a great experience.


🎬 I built Merrsion, a 3D experience hub, all in Omma.

So I rebuilt it.

Result: near-instant load + smooth playback.

🧪 Added a custom Vanilla JS engine with requestAnimationFrame + lerp smoothing

→ scroll controls the video timeline, but feels fluid—not robotic.
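The scroll-scrubbing idea above can be sketched roughly as follows: scroll position drives the video timeline, and requestAnimationFrame with lerp smoothing eases the video toward that target. This is a minimal illustration under stated assumptions, not Merrsion's actual engine. It assumes a single `<video>` element and whole-page scrolling. The helper names (`scrollToTime`, `stepTime`, `attachScrollScrub`) and the `SMOOTHING` constant are hypothetical.

```javascript
// Minimal sketch (assumed structure, not Merrsion's code): scroll sets a target
// video time, and every animation frame eases the shown time toward it.

// lerp: move a fraction `t` (0..1) of the way from `a` to `b`.
function lerp(a, b, t) {
  return a + (b - a) * t;
}

// Map scroll position onto the video's duration (seconds), clamped to 0..duration.
function scrollToTime(scrollTop, maxScroll, duration) {
  if (maxScroll <= 0) return 0;
  const progress = Math.min(Math.max(scrollTop / maxScroll, 0), 1);
  return progress * duration;
}

// Hypothetical tuning constant: smaller = softer, laggier follow.
const SMOOTHING = 0.1;

// One smoothing step; snaps to the target once close enough to stop tiny seeks.
function stepTime(current, target) {
  const next = lerp(current, target, SMOOTHING);
  return Math.abs(target - next) < 0.001 ? target : next;
}

// Browser wiring: runs a requestAnimationFrame loop that scrubs the video.
function attachScrollScrub(video) {
  let current = 0;
  function frame() {
    const maxScroll = document.documentElement.scrollHeight - window.innerHeight;
    // video.duration is NaN until metadata loads, so fall back to 0.
    const target = scrollToTime(window.scrollY, maxScroll, video.duration || 0);
    current = stepTime(current, target);
    if (Math.abs(video.currentTime - current) > 0.001) {
      video.currentTime = current; // seek only when the value actually changed
    }
    requestAnimationFrame(frame);
  }
  requestAnimationFrame(frame);
}

// Only attach when running in a browser with a <video> on the page.
if (typeof document !== "undefined") {
  const video = document.querySelector("video");
  if (video) attachScrollScrub(video);
}
```

Easing toward the target, instead of jumping straight to it, keeps quick scroll flicks from producing jerky seeks. A larger `SMOOTHING` value follows the scroll more tightly. How smooth this looks in practice also depends on how quickly the browser can seek the video file.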

✨ Designed a cyberpunk-style UI:

Glassmorphism HUD, dynamic frame counter, scroll-reactive elements, adaptive typography.

🛠 Stack: Vanilla JS, HTML/CSS, GPU acceleration tricks. No heavy libraries.

Try: https://omma.build/p/futuristic-ai-product-website-a2c052

💡 Takeaway: You don’t need bloated frameworks to build cinematic experiences—just smart engineering.


I built a full 3D smart city monitoring system… with just AI prompts.

No traditional coding. No complex pipelines.

Introducing Cortex City

A real-time urban intelligence dashboard running entirely in the browser.

🌆 Generated an entire city from scratch — bridges, skylines, parks

⚡ Monitor live energy grids + detect brownout risks

🚦 Track traffic density with dynamic flow visualization

🌧️ Analyze weather patterns and storm activity in real time

🚨 Emergency Mode — city-wide alert system with scan waves + radar targeting

Visit: https://omma.build/p/cortex-city-futuristic-react-experience-gyu1j8

This wasn’t built line by line…

It was orchestrated through prompting.

From zero → fully interactive 3D system.

Cortex City isn’t a demo. It’s a glimpse of how cities will be monitored.

And yes… this is my second submission😅


VoidCraft🚀 breaks the rules of how games are built.

A full 3D space exploration game… built entirely inside Omma💯

Everything runs in one complete file:

--> A living Solar System — 8 planets, solar corona, Saturn’s rings, Earth’s atmosphere, asteroid belt, Moon

--> Multiple galaxies — each with explorable star systems

--> A cinematic black hole — gravitational lensing, accretion disk, relativistic jets, particle pull

--> A spacecraft with 6-DOF controls, turbo boost, and cinematic camera modes

--> Film-grade visuals — ACES tone mapping, Unreal Bloom, parallax starfields

--> Full sci-fi HUD — navigation, minimap, proximity alerts, TURBO ACTIVE

Play VoidCraft--> https://omma.build/p/space-exploration-game-master-9p83dh

No assets and zero imports for the engine.

Just a prompt → a playable universe.

This is what AI-native building looks like.


Finding freelance clients shouldn't take hours.

Prospectra is a Notion agent that hunts leads for you, scores them by priority, and writes your cold emails — automatically.

→ Finds real leads from across the web

→ Tells you who to reach out to first

→ Writes personalised cold emails instantly

→ Keeps your entire pipeline inside Notion

Stop scrolling LinkedIn for hours.

Try it → https://www.notion.so/agent/3357b509492d801682d200929fc20ce0

Let AI fill your pipeline.


You finish building something for a client:

Now you have to explain it to someone who doesn't know what a webhook is.

I built Runbook AI Agent — a Notion agent that turns your raw dev notes into a complete client handoff doc in seconds.

It generates:

→ Plain-English breakdown of what the system does

→ Step-by-step daily operations guide

→ Troubleshooting table for every failure mode

→ Maintenance schedule with task owners

→ Quick Reference Card your client can bookmark

Try it → https://www.notion.so/marketplace/custom-agents/runbook-builder-client-control-center

Any client. Any system. Any industry.

Your handoff, done in seconds🚀


Stop searching for songs. Start matching your energy. ⚡🎧

Introducing Synk🔥— a music experience that adapts to how you feel.

Low energy? It lifts you.

Locked in? It keeps you there.

And with a single Vibe Button — your entire session syncs instantly 🎵🔥

Inspired by Spotify, Apple Music, and Tidal (studied through Mobbin), but redesigned to be emotion-first, not playlist-first.

🔗 Experience Synk: https://synk--ayush026643.replit.app/

Features:

• 🎯 Energy-based music matching

• ⚡ Vibe Button (instant mood sync)

• 🔄 Real-time adaptive playback

• 🧠 Emotion-first discovery (no endless scrolling)

This isn’t music streaming. It’s a soundtrack for your state.


Your focus is broken. Fix it. ⚡

Meet Healio — a focus-first experience designed to help you lock in without burning out.

No clutter. No overwhelm.

Just deep work, calming soundscapes, and guided sessions that actually work.

Built with inspiration from Calm, Forest, and Endel (studied through Mobbin), blending mindfulness and productivity into one seamless flow.

Stop forcing productivity. Start flowing with it.

🔗 Try Healio: https://healio--as026643.replit.app/


Vault — A Financial Mirror💰

Most finance apps show numbers.

Vault shows meaning.

I studied these on Mobbin before designing:

• Apple Wallet → minimalism, hierarchy

• Stripe → data visualization, typography

• Robinhood → wealth summary screens

No clutter. No charts.

• Money Pulse → instant financial health

• Spend Story → behavior, not transactions

• Dual-mode UI → light ↔ deep gold dark

Fluid, minimal, intentional.

🔗 Try Vault: https://vault--as1482003.replit.app/

Vault doesn’t track money.

It reflects wealth.


🤠 Where the West Ends

I built this game, inspired by Red Dead Redemption 2.

🔫 Be a gunslinger

🌵 Explore the unknown

🐎 Interact with a living world

☠️ Kill… or be killed

👉 Play now: https://where-the-west-ends.bubbleapps.io/version-test (Where the West Ends)

Just you, your choices, and a dying West.

Yes… this is my second submission for the same hackathon 😅

(guess I wasn’t done with the West yet)


🚀 What if learning felt like a game—and you actually leveled up in real life?

Introducing SkillArena – Learn. Share. Compete.

A platform where you don’t just learn… you apply.

🎯 Join skill-based challenges

📚 Share what you build

⚔️ Compete with others

🏆 Earn XP, badges & rewards

No more passive learning—just real growth through action and community.

👥 Built for builders, students, and creators who want to stay consistent and improve faster.

🔗 Try it here: https://skill-arena-96558.bubbleapps.io/version-test (Skill Arena)

Built with @bubble


Everyone saw the final “My Way” video.

Here’s exactly how I built it using Kittl, from idea → scenes → final story.

No fluff. Just the workflow.

Final Video: https://contra.com/community/ZXTRB8Wz-tell-your-story-in-60-seconds (My Way)

If you’re using AI for content, this is what actually matters 👇


What if your entire journey could be told in 60 seconds?

This AI-generated video captures the shift from doubt → growth → self-belief, inspired by “My Way” by Frank Sinatra.

Built in Kittl Video with custom scenes, smooth transitions, and minimal animated text to keep it cinematic and impactful.

Simple idea: Do it your way.

View Project: https://app.kittl.com/project/cmmv2valq4ix80i6gz82vk2ih


AI Trading Co-Pilot reimagines how humans interact with financial data.

Instead of overwhelming dashboards, the interface introduces an AI presence that observes, analyzes, and responds in real time. When a user selects a cryptocurrency, the AI activates — scanning price movement and liquidity signals through a cinematic analysis sequence before delivering a clear risk level and strategic suggestion.

Try it: https://ai-trading-co-pilot.figma.site/

The core innovation lies in the interaction:

• A responsive AI panel that feels alive

• Smooth state transitions from observation to insight

• Subtle ambient motion that creates a futuristic, intelligent atmosphere

Built entirely in Figma Make, this prototype explores a new design language for collaborative decision-making — where AI acts as a co-pilot, not an autopilot.
