AI Decision Integrity Stress Test

Kami Harcej

Don't Let Your AI's Interpretation Become Liability.

Validation proves the model works. Authorization proves the decision is allowed.

Most organizations treat AI governance as a policy exercise. They validate the model, document the intent, and add a "Human-in-the-Loop" checkbox.

But policy doesn't execute. People don't intervene in time. And models don't ask for permission.

In high-stakes environments (Healthcare, Finance, Critical Infrastructure), AI doesn't just suggest; it frames reality. When an AI defines "risk," "fraud," or "eligibility," and that definition triggers an automated action, Interpretation becomes Binding Authority.

If your system can execute a decision based on an interpretation that no one explicitly authorized at the moment of commitment, you are not governed. You are gambling.

I help organizations move from "Good Faith Governance" (documents) to "Operational Governance" (architecture).

We don't just check if the model is accurate. We determine if the system has the legitimate right to act on its output.

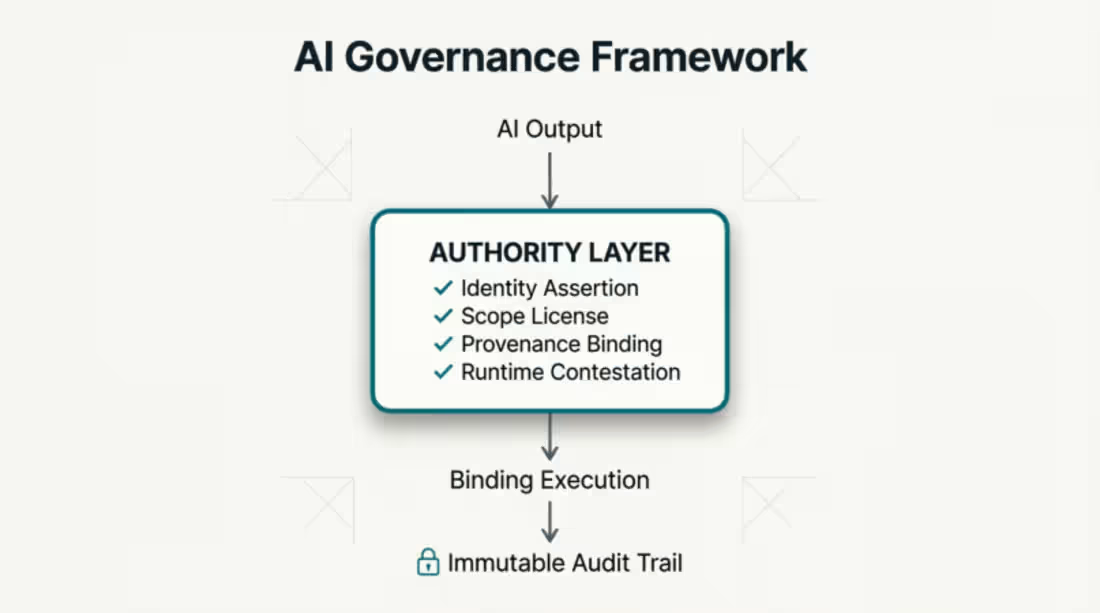

My Core Framework: The Authority Layer

Drawing from my research in "When Interpretation Becomes Binding," I implement a structural layer between AI output and execution that enforces:

✅ Verifiable Identity: The system declares who it is (e.g., "Support Tool," not "Diagnostic Authority").

✅ Scope License: The system proves the action is authorized for this specific context.

✅ Provenance Binding: The interpretation is cryptographically linked to the governing standard (e.g., EU AI Act, Clinical Protocol).

✅ Runtime Contestation: A mechanism to pause execution if conditions drift, before harm occurs.
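To make the four checks concrete, here is a minimal Python sketch of a gate that sits between model output and execution. It is an illustration under stated assumptions, not the framework's actual implementation: the names `Interpretation`, `ScopeLicense`, `digest`, and `authorize` are hypothetical, "cryptographically linked" is approximated with a SHA-256 digest of the governing standard's text, and drift detection is reduced to a boolean supplied by the caller.

```python
import hashlib
from dataclasses import dataclass


def digest(text: str) -> str:
    """SHA-256 hex digest, used here as a stand-in for provenance binding."""
    return hashlib.sha256(text.encode("utf-8")).hexdigest()


@dataclass(frozen=True)
class Interpretation:
    label: str            # what the model concluded, e.g. "high risk"
    system_identity: str  # what the system declares itself to be
    context: str          # where the decision would be applied
    standard_digest: str  # digest of the standard the interpretation was made under


@dataclass(frozen=True)
class ScopeLicense:
    identity: str         # the identity this license was issued to
    allowed: frozenset    # permitted (context, action) pairs


def authorize(interp, action, license_, current_standard, drift_detected):
    """Return (allowed, reason). Every check must pass before execution."""
    # Verifiable Identity: the declared identity must match the licensed one.
    if interp.system_identity != license_.identity:
        return False, "identity mismatch"
    # Scope License: the action must be licensed for this specific context.
    if (interp.context, action) not in license_.allowed:
        return False, "action out of scope"
    # Provenance Binding: the interpretation must trace to the current standard.
    if interp.standard_digest != digest(current_standard):
        return False, "provenance mismatch"
    # Runtime Contestation: pause if monitoring reports drift.
    if drift_detected:
        return False, "paused: conditions drifted"
    return True, "authorized"


# Hypothetical example data.
protocol = "Clinical Protocol v1 (placeholder text)"
lic = ScopeLicense("Support Tool", frozenset({("triage", "flag_for_review")}))
interp = Interpretation("high risk", "Support Tool", "triage", digest(protocol))

print(authorize(interp, "flag_for_review", lic, protocol, False))  # (True, 'authorized')
print(authorize(interp, "deny_treatment", lic, protocol, False))   # (False, 'action out of scope')
```

The design point is that refusal is the default path: the action executes only when all four checks pass, and each refusal carries a reason that can be written to an audit trail.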

SERVICES & ENGAGEMENTS

1. Decision Integrity Stress Test (The Entry Point)

A fast, high-value audit for AI leaders. You bring 3 live or planned AI decisions. I test whether they hold at execution.

Deliverable: A "Red Team" report showing exactly where your governance fails the moment interpretation becomes binding.

Timeframe: 72 hours.

Outcome: Immediate visibility into your "Authority Gap."

2. Authority Layer Architecture Design

For enterprises deploying high-risk AI.

We design the governance primitives into your system architecture.

Scope: Defining legitimate meaning ranges, designing refusal logic, and establishing audit trails that survive regulatory scrutiny.

Outcome: A system that can say "No" before a bad decision becomes real.

3. Executive Advisory & Board Readiness

For leaders facing EU AI Act and global regulatory pressure.

Scope: Preparing your board for the shift from "Model Risk" to "Interpretive Authority." Helping you answer the hardest question: "Who authorized this system to decide this, right now?"

"Governance isn't what you write. It's what the system can refuse."

LET'S STRESS-TEST YOUR DECISIONS

Kamilla Harcej

AI Governance & Decision Accountability Advisor

kamillaharcej@gmail.com | www.linkedin.com/in/kh001

"Opening 5 slots this month for Decision Integrity Stress Tests. DM 'TEST' to secure yours."


Starting at $750

Duration: 1 day

Tags

#AIAct

#AIAudit

#AIGovernance

#AIinProduction

#AIRegulation

#AIRisk

#DecisionAccountability

#EnterpriseAI

#ExecutionBoundary

Service provided by

Kami Harcej, Dublin, Ireland

