Finetuning Large Language Models (LLMs)Balaj Khalid

I specialize in fine-tuning large language models (LLMs) to optimize them for your specific use case. With extensive hands-on experience, I leverage expert frameworks to ensure high accuracy, efficiency, and usability. I focus on delivering tailored, actionable solutions that align with your business goals, ensuring efficiency and impact every step of the way.

What's included

Codebase with Detailed Comments

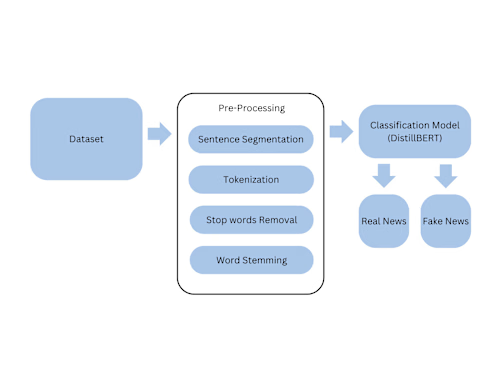

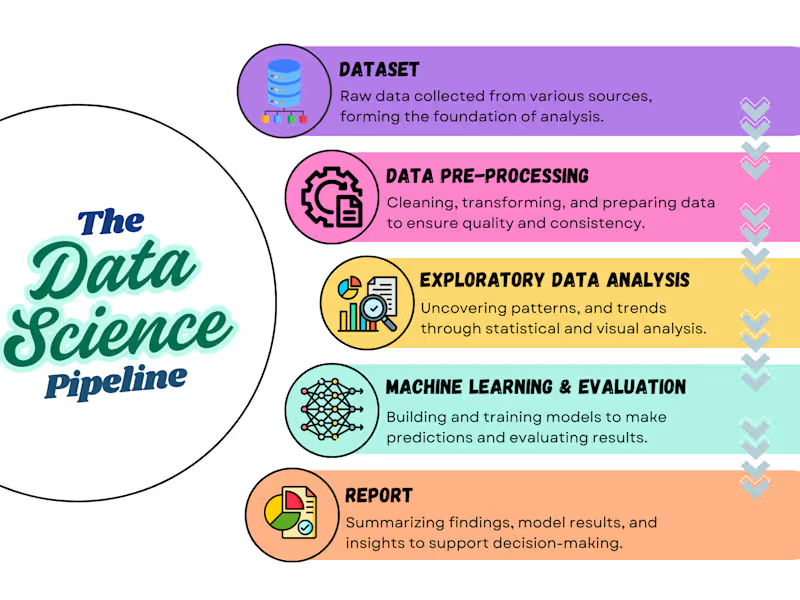

A clean and well-structured repository (e.g., GitHub or GitLab) containing all scripts for data preprocessing, model fine-tuning, and evaluation. The code will include detailed comments explaining each step, from loading and cleaning data to fine tuning models like GPT, Llama, DeepSeek, BERT, and DistilBERT to your unique needs. It will also provide instructions on setting up the environment, running the code, and reproducing the results.

FAQs

Example work

Balaj's other services

Starting at$100

Duration1 week

Tags

Bert

Hugging Face

Data Analyst

Data Modelling Analyst

Data Scientist

Service provided by

Balaj Khalid Los Angeles, USA

- 1

- Followers

Finetuning Large Language Models (LLMs)Balaj Khalid

Starting at$100

Duration1 week

Tags

Bert

Hugging Face

Data Analyst

Data Modelling Analyst

Data Scientist

I specialize in fine-tuning large language models (LLMs) to optimize them for your specific use case. With extensive hands-on experience, I leverage expert frameworks to ensure high accuracy, efficiency, and usability. I focus on delivering tailored, actionable solutions that align with your business goals, ensuring efficiency and impact every step of the way.

What's included

Codebase with Detailed Comments

A clean and well-structured repository (e.g., GitHub or GitLab) containing all scripts for data preprocessing, model fine-tuning, and evaluation. The code will include detailed comments explaining each step, from loading and cleaning data to fine tuning models like GPT, Llama, DeepSeek, BERT, and DistilBERT to your unique needs. It will also provide instructions on setting up the environment, running the code, and reproducing the results.

FAQs

Example work

Balaj's other services

$100