Custom AI RAG integration to your app | End-to-End developmentMohamed Dev

📦 RAG AI Implementation | End-to-End Service

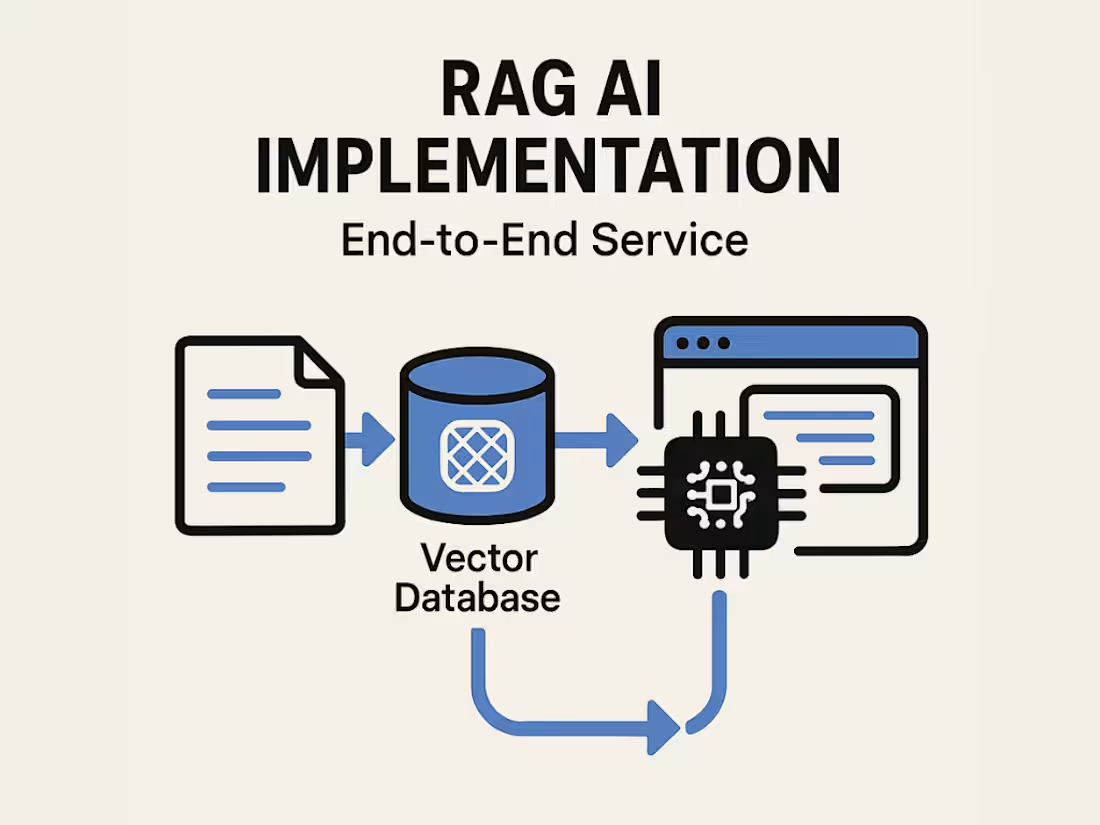

I help teams integrate Retrieval-Augmented Generation (RAG) into their existing applications, from raw data to lightning-fast, production-ready AI features.

What you get:

Full pipeline implementation: I handle everything, converting your training data, embedding it, uploading to a vector database, integrating RAG into your live app, and optimizing response times to under 500ms per query.

Tailored to your use case: Whether it’s internal knowledge search, customer support, or personalized content generation, I’ll adapt the setup to your exact needs.

What's included

Custom AI RAG

- Convert all of your PDFs, text files, voice notes, or any kind of data, into chunks to train the AI with.

- Set up and connect the server that holds the training data

- Connecting the server to your existing application and making it work with the LLM in it.

- Optimizing the build to reduce costs and speed up responses (caching, server connections, etc...) to get to 500 ms respond time per query.

FAQs

Contact for pricing

Tags

GraphQL

Node.js

React

Ruby

TypeScript

Backend Engineer

Software Engineer

Web Developer

Service provided by

Mohamed Dev Ottawa, Canada

- 5.00

- Rating

- 5

- Followers

Custom AI RAG integration to your app | End-to-End developmentMohamed Dev

Contact for pricing

Tags

GraphQL

Node.js

React

Ruby

TypeScript

Backend Engineer

Software Engineer

Web Developer

📦 RAG AI Implementation | End-to-End Service

I help teams integrate Retrieval-Augmented Generation (RAG) into their existing applications, from raw data to lightning-fast, production-ready AI features.

What you get:

Full pipeline implementation: I handle everything, converting your training data, embedding it, uploading to a vector database, integrating RAG into your live app, and optimizing response times to under 500ms per query.

Tailored to your use case: Whether it’s internal knowledge search, customer support, or personalized content generation, I’ll adapt the setup to your exact needs.

What's included

Custom AI RAG

- Convert all of your PDFs, text files, voice notes, or any kind of data, into chunks to train the AI with.

- Set up and connect the server that holds the training data

- Connecting the server to your existing application and making it work with the LLM in it.

- Optimizing the build to reduce costs and speed up responses (caching, server connections, etc...) to get to 500 ms respond time per query.

FAQs

Contact for pricing