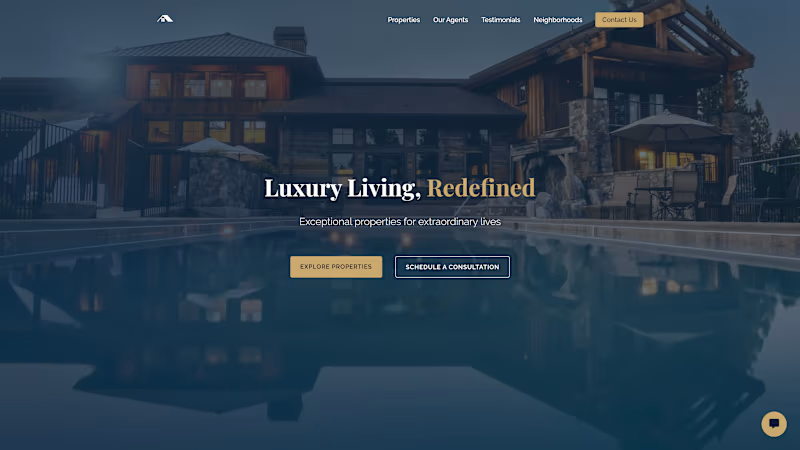

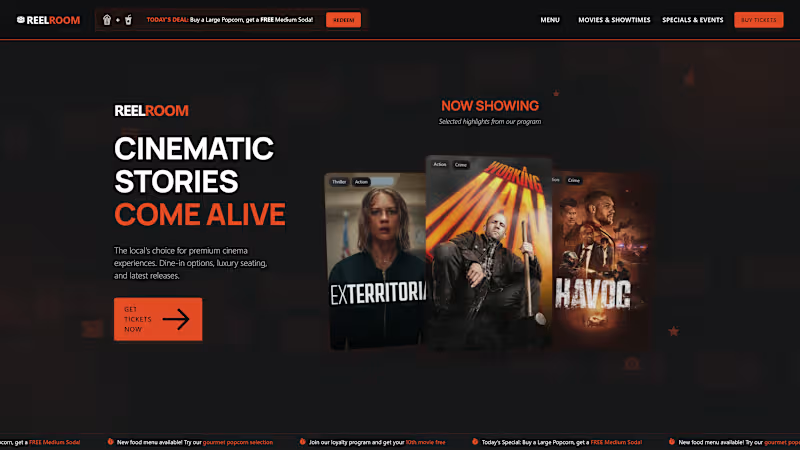

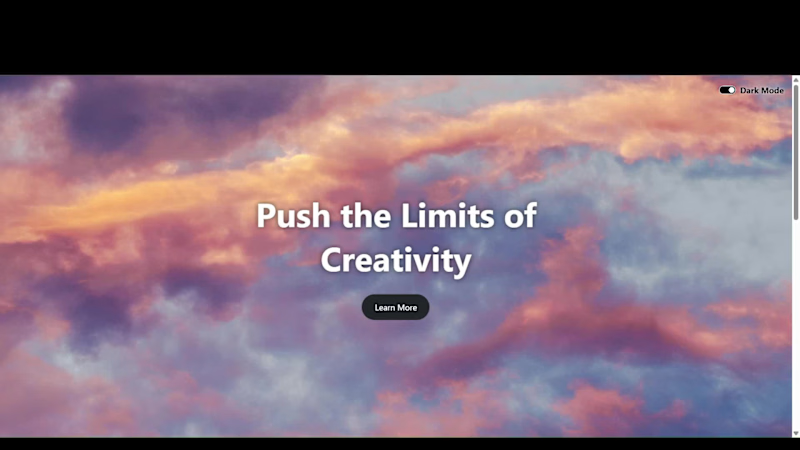

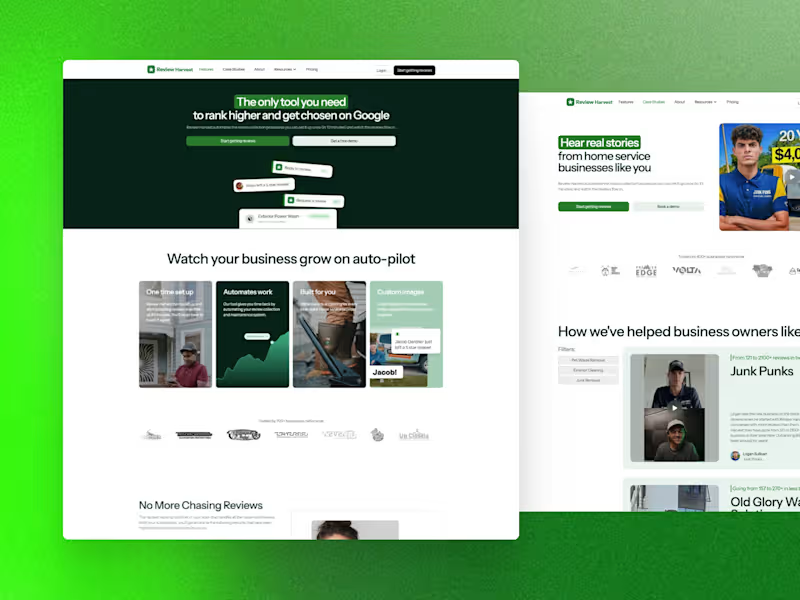

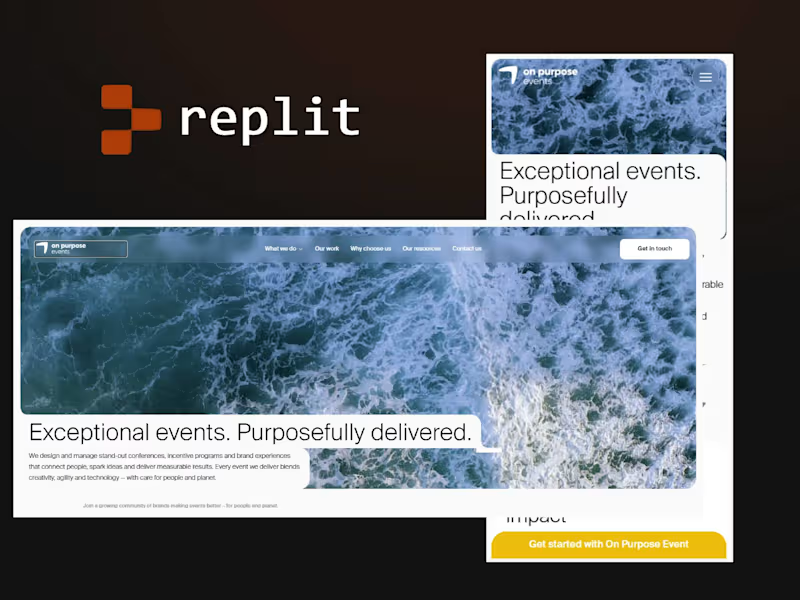

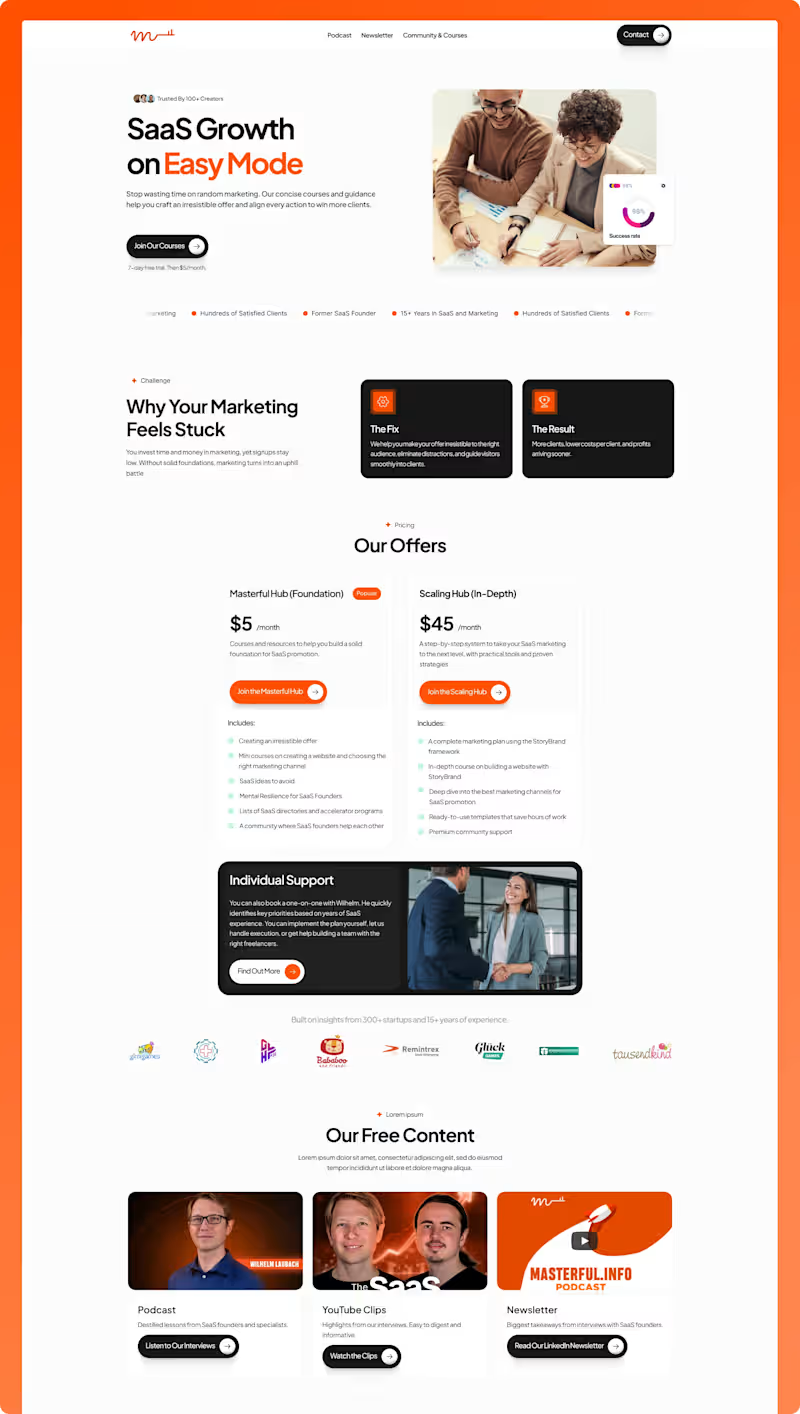

I build modern, responsive websites that stand out.

Web Dev & AI Evaluator: Clear, Creative, Reliable Work

Design partner for early-stage startups 🚀

- $5k+ Earned
- 2x Hired
- 5.0 Rating
- 103 Followers

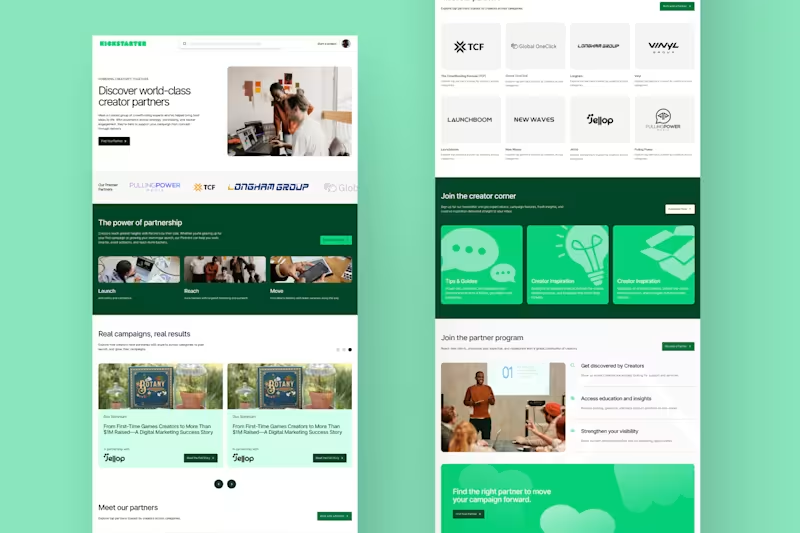

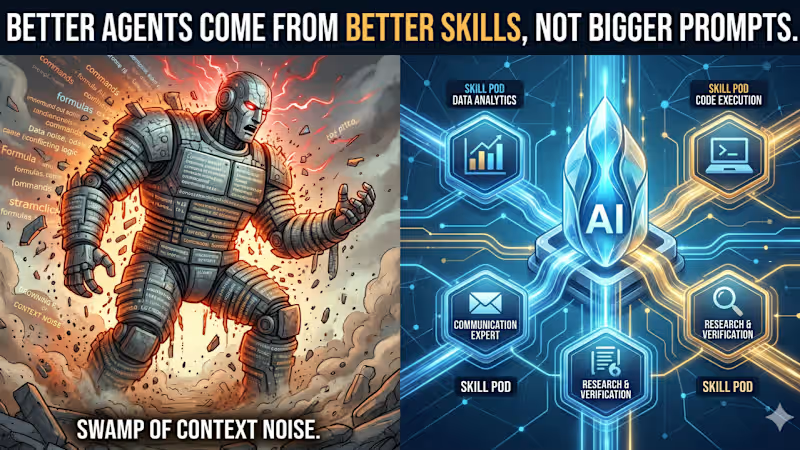

AI wizard | Vibe Coder

Framer & Graphic Designer | Web Designer | UI/UX Designer

Senior AI Solutions Architect | Agentic Systems & Product

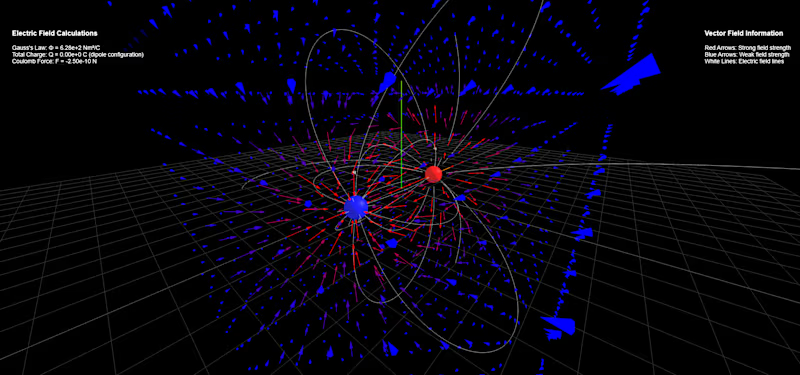

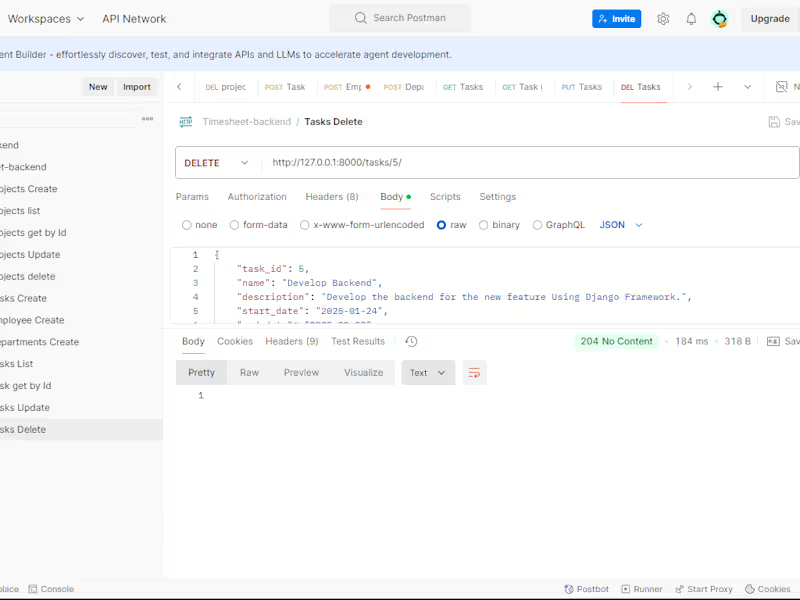

Software engineer and data annotation and labelling specialist.

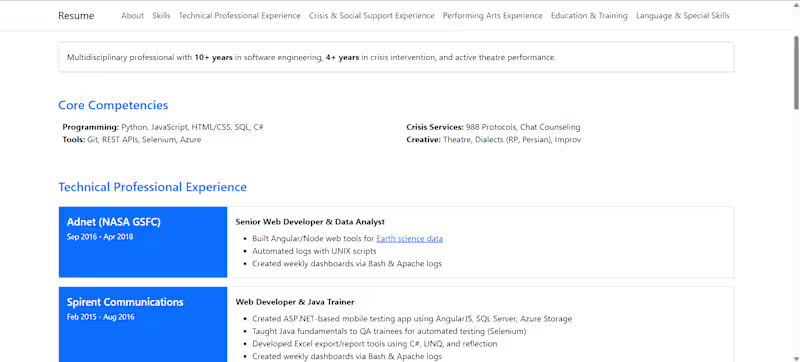

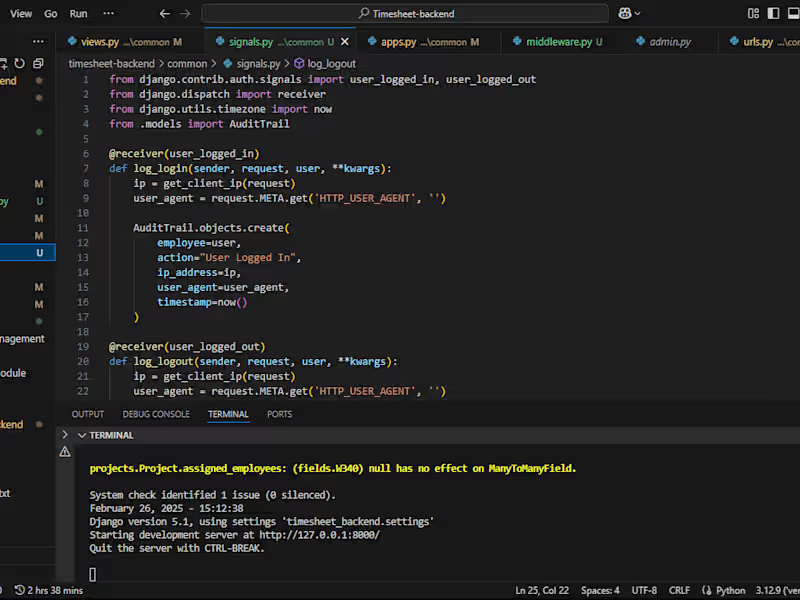

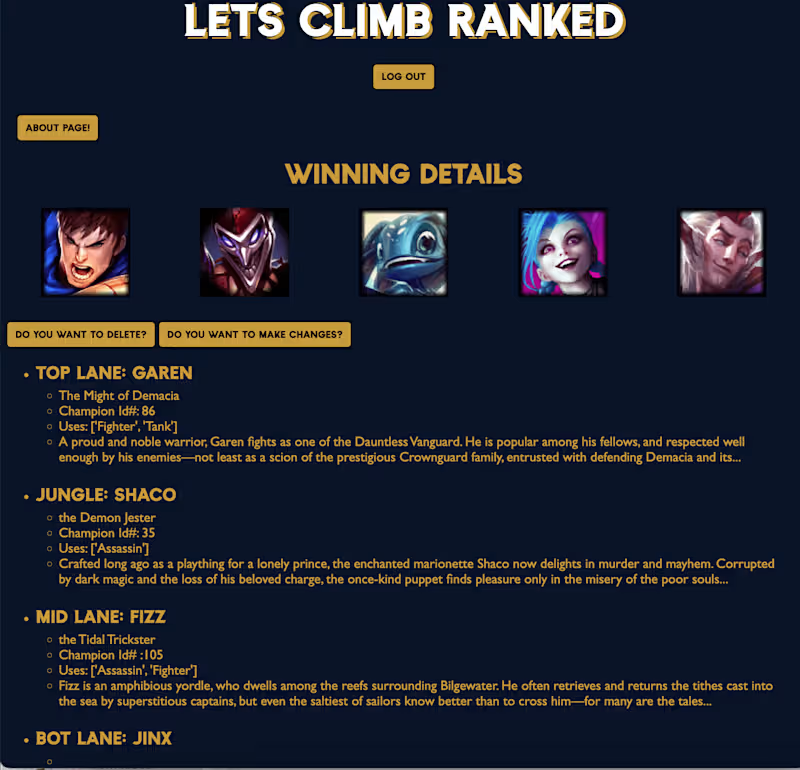

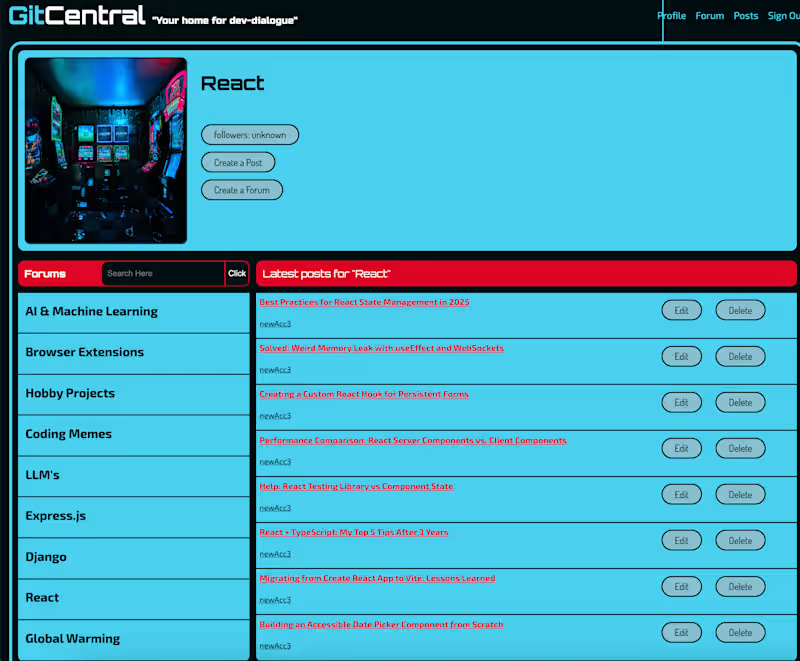

Fullstack engineer with dynamic design expertise.
