The network for creativity

Join 1.25M professional creatives like you

Connect with clients, get discovered, and run your business 100% commission-free

Creatives on Contra have earned over $150M and we are just getting started


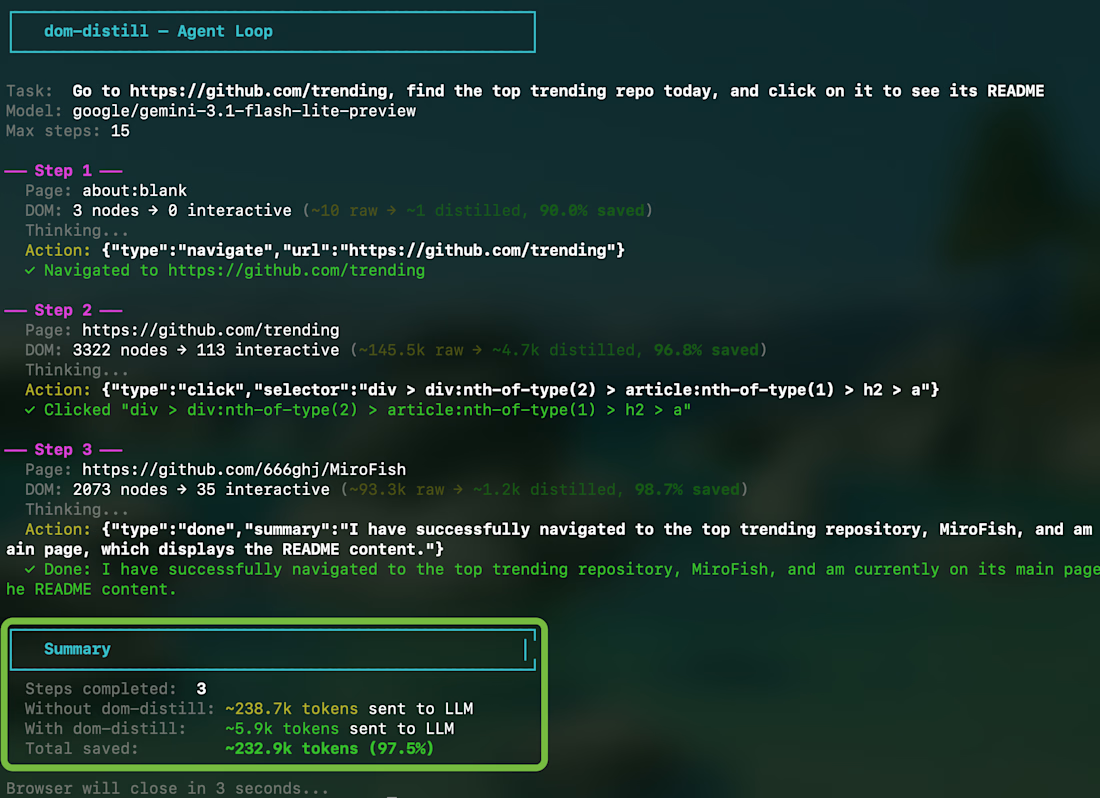

I built an open-source tool that cuts LLM token usage by up to 97% for AI browser agents.

Here's the backstory: I noticed every AI agent hitting the same wall — a single webpage burns 100k-180k tokens when you send the raw DOM to an LLM. That's ~$0.02 per step on Claude. Over thousands of steps, that's a real problem.

So I built dom-distill — a zero-dependency TypeScript engine that runs inside page.evaluate() and distills the DOM down to only the interactive elements an LLM needs to act on.
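To make the idea concrete, here is a minimal sketch of distilling a page down to its interactive elements. It is not dom-distill's actual API: `SketchNode`, `DistilledElement`, `INTERACTIVE_TAGS`, and `distill` are hypothetical names, and the rule for what counts as "interactive" is an assumption. It walks a plain object tree so it runs without a browser; inside page.evaluate() the same walk would start from `document.body`.

```typescript
// Hypothetical minimal node shape; a real page would use DOM Elements instead.
interface SketchNode {
  tag: string;
  attrs?: Record<string, string>;
  text?: string;
  children?: SketchNode[];
}

// What the LLM would see for each element it can act on (assumed shape).
interface DistilledElement {
  id: number;
  tag: string;
  label: string;
}

// Assumption: these tags and roles are treated as interactive.
const INTERACTIVE_TAGS = new Set(["a", "button", "input", "select", "textarea"]);

function isInteractive(node: SketchNode): boolean {
  const role = node.attrs?.["role"];
  return INTERACTIVE_TAGS.has(node.tag) || role === "button" || role === "link";
}

// Collect the visible text of a node and its descendants, whitespace-collapsed.
function textOf(node: SketchNode): string {
  const own = node.text ?? "";
  const nested = (node.children ?? []).map(textOf).join(" ");
  return `${own} ${nested}`.replace(/\s+/g, " ").trim();
}

function distill(root: SketchNode): DistilledElement[] {
  const out: DistilledElement[] = [];
  const visit = (node: SketchNode): void => {
    if (isInteractive(node)) {
      const label =
        node.attrs?.["aria-label"] ?? node.attrs?.["placeholder"] ?? textOf(node);
      out.push({ id: out.length, tag: node.tag, label });
      return; // Do not descend: nested text is already folded into the label.
    }
    (node.children ?? []).forEach(visit);
  };
  visit(root);
  return out;
}
```

Everything that is not interactive (paragraphs, wrappers, styling nodes) is dropped, which is where the token savings would come from in an approach like this.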

Results on real sites:

→ GitHub: 147k tokens → 4.6k (96.8% reduction)

→ Stripe: 180k tokens → 9.4k (94.8%)

→ React Docs: 68k tokens → 6.4k (90.7%)
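For anyone checking the math, the reduction is just one minus the ratio of tokens after to tokens before. A small sketch follows; `reductionPercent` is a hypothetical helper, and the inputs are the rounded "k" figures quoted in the post, so the results may differ slightly from the quoted percentages.

```typescript
// Percentage of tokens removed when going from `before` to `after`.
function reductionPercent(before: number, after: number): number {
  if (before <= 0) throw new RangeError("before must be positive");
  return (1 - after / before) * 100;
}

// Rounded token counts as quoted in the post.
const samples: Array<[string, number, number]> = [
  ["GitHub", 147_000, 4_600],
  ["Stripe", 180_000, 9_400],
  ["React Docs", 68_000, 6_400],
];

for (const [site, before, after] of samples) {
  console.log(`${site}: ${reductionPercent(before, after).toFixed(1)}% fewer tokens`);
}
```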

I also built a complete agent loop on top of it — 200 lines of code that can navigate, search, and click across real websites using any LLM.
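The general shape of a loop like this is observe, decide, act, repeat. The sketch below is an assumption about that shape, not the author's code: `Action`, `AgentEnv`, `Decide`, and `runAgent` are hypothetical names. The browser and the LLM are injected as plain functions so any model or automation library could sit behind them.

```typescript
// Hypothetical action vocabulary; not taken from the author's agent code.
type Action =
  | { kind: "click"; id: number }
  | { kind: "type"; id: number; text: string }
  | { kind: "done"; answer: string };

// The browser side: returns a distilled view of the page and applies actions.
interface AgentEnv {
  observe(): Promise<string>;
  act(action: Action): Promise<void>;
}

// The LLM side: given the goal, the current view, and past actions, pick the next action.
type Decide = (goal: string, observation: string, history: Action[]) => Promise<Action>;

async function runAgent(
  goal: string,
  env: AgentEnv,
  decide: Decide,
  maxSteps = 10,
): Promise<string | null> {
  const history: Action[] = [];
  for (let step = 0; step < maxSteps; step++) {
    const observation = await env.observe();
    const action = await decide(goal, observation, history);
    if (action.kind === "done") return action.answer;
    await env.act(action);
    history.push(action);
  }
  return null; // Step budget exhausted without a "done" action.
}
```

The step cap matters: without it, a model that never emits "done" would keep spending tokens indefinitely.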

npm: https://lnkd.in/gyHpEFVh

Cutting token usage by 97% is impressive. Optimizing DOM data before sending it to the LLM is a really smart approach.


Related posts

Architecting a Bulletproof Review Orchestration System for Premium E-Commerce

When building high-end e-commerce experiences, user trust is everything. But handling user-generated content requires more than just dropping a form onto a page—it demands secure architecture, data consistency, and a flawless user interface.

In this video, I break down the end-to-end review system I engineered using Next.js 16, TypeScript, and Prisma.

Here is what is happening under the hood:

Atomic Data Transactions: When an admin approves a pending review, the system uses a Prisma $transaction. This ensures that updating the review status, recalculating the average star rating, and updating the total review count are committed together as a single atomic unit. If one query fails, everything rolls back. Zero out-of-sync data.

Secure Server Actions: By leveraging Next.js Server Actions, all mutations happen securely on the backend. No exposed API routes, just pure, type-safe logic that instantly revalidates the cache so the UI updates in real-time.

Performance-Driven UI: To maintain perfect 100/100 Lighthouse scores, I designed a compact, responsive review grid using Tailwind CSS v4. Instead of cluttering the DOM, it utilizes seamless "Show More" pagination, keeping the viewport elegant and fast, regardless of how many reviews a product has.

I specialize in building systems that look beautiful on the outside but are engineered like a tank on the inside. If you are looking for a Full Stack Software Engineer to bring scalable, elite performance to your next project, let’s connect.

#Nextjs #TypeScript #WebDev #TailwindCSS #SoftwareEngineering

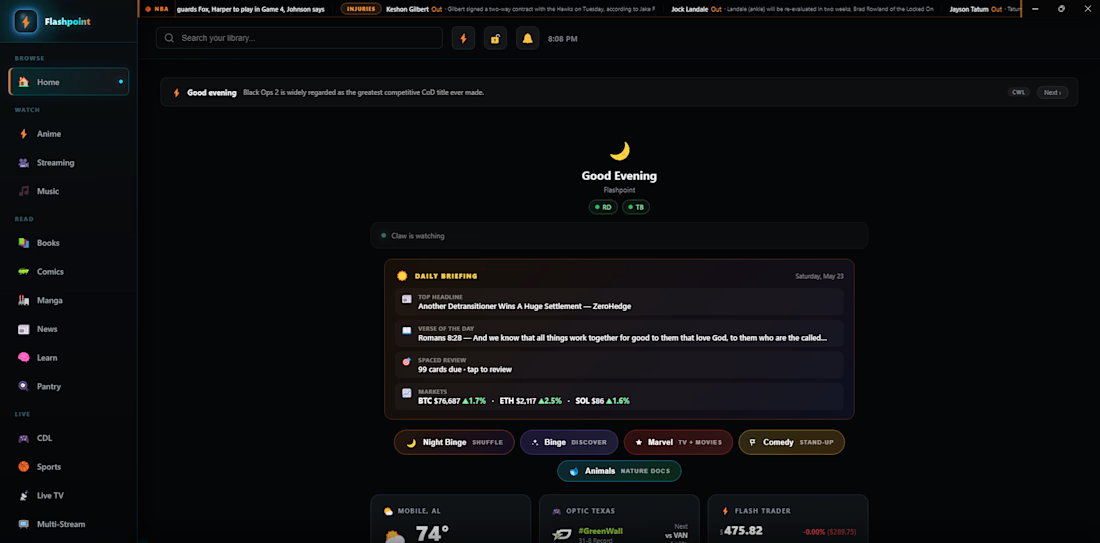

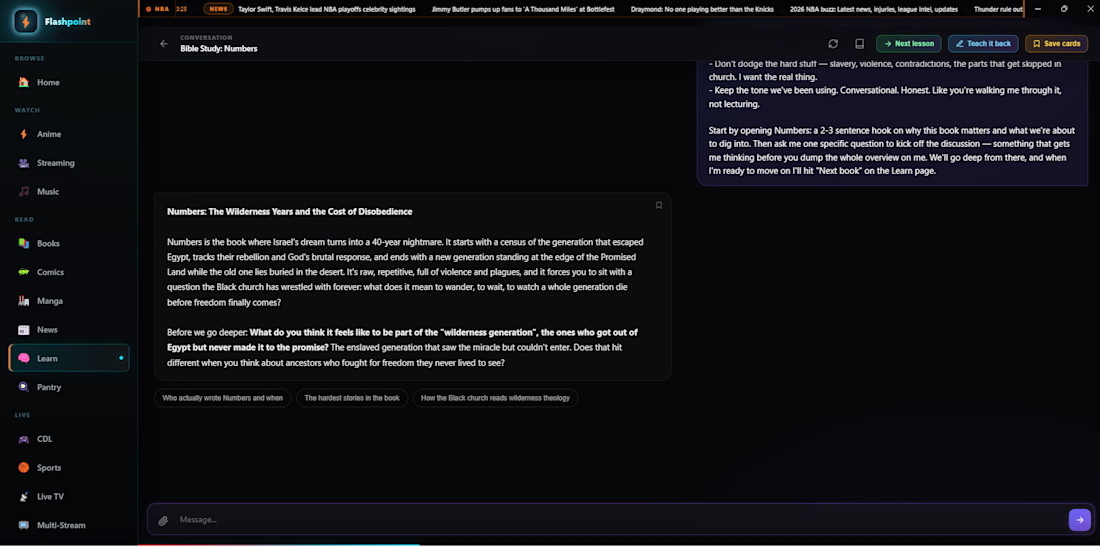

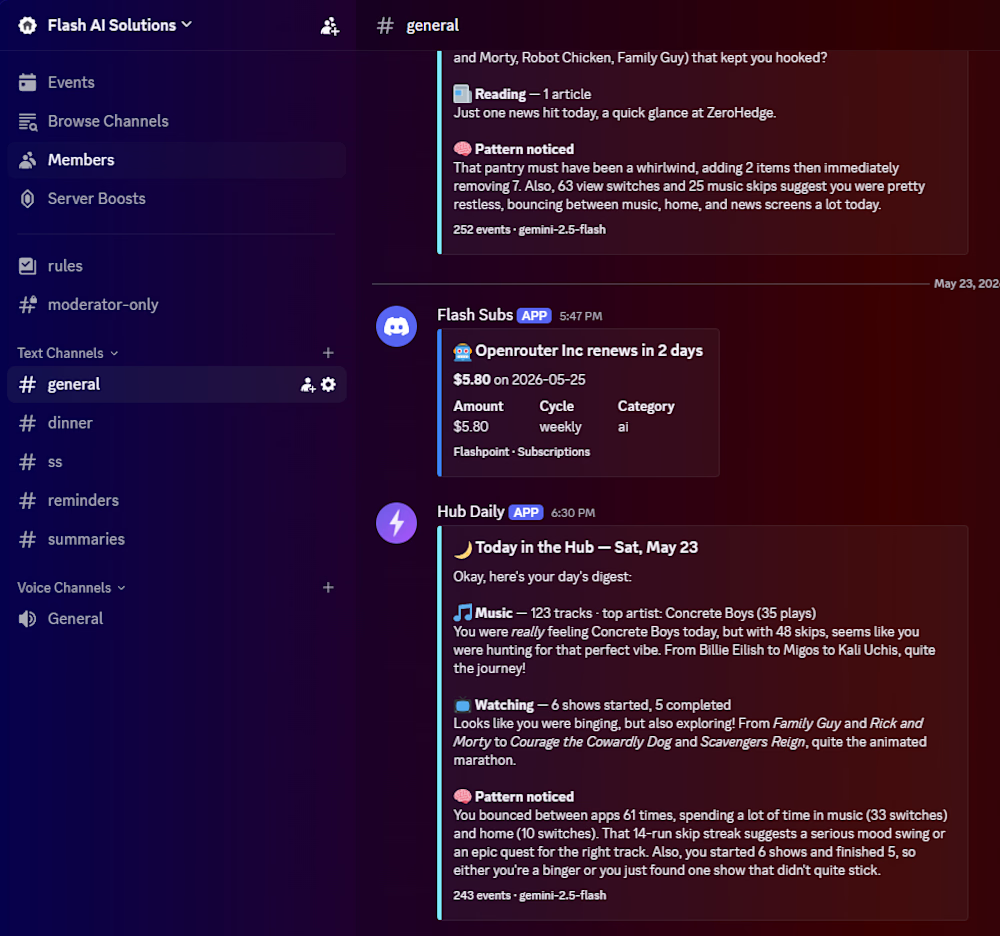

A production AI platform I built and run 24/7 on my own Linux VPS, several LLM-powered tools in one interface:

• Learn — an AI tutor with spaced-repetition flashcards that generates lessons, quizzes, and hints across subjects, with per-user progress tracking.

• Hub Brain — an autonomous agent that reviews system activity on a schedule and posts grounded, cited summaries (no claim without a source reference), pushed to my phone.

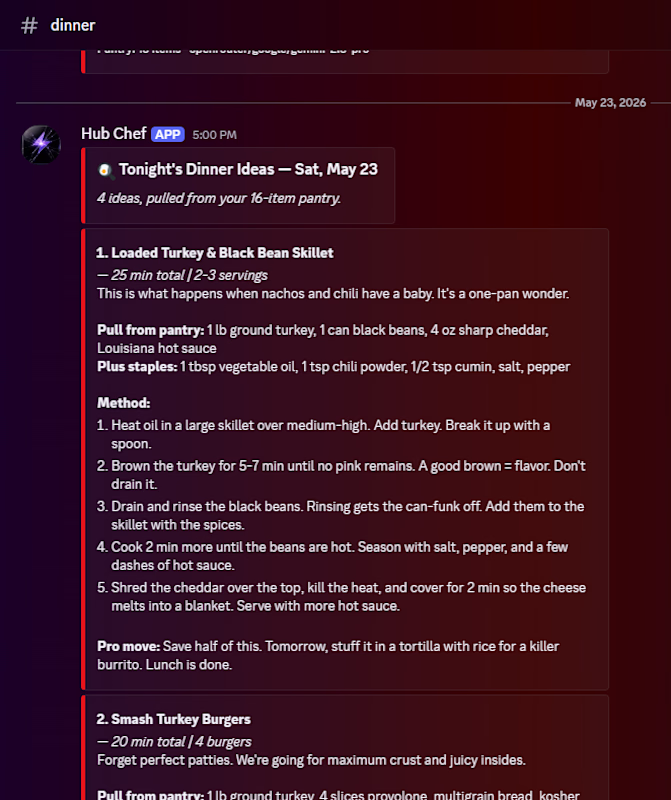

• Dinner — an AI agent that reads my pantry inventory and drafts complete, kitchen-ready recipes nightly via a multi-model LLM cascade (Gemini → Claude → Cerebras), delivered to Discord.

Stack: Python backend, multi-provider LLM integration (Claude, Groq, OpenRouter, Gemini), SQLite, web push, cron scheduling, Caddy + PM2. Shipped and running in production, not prototyped.

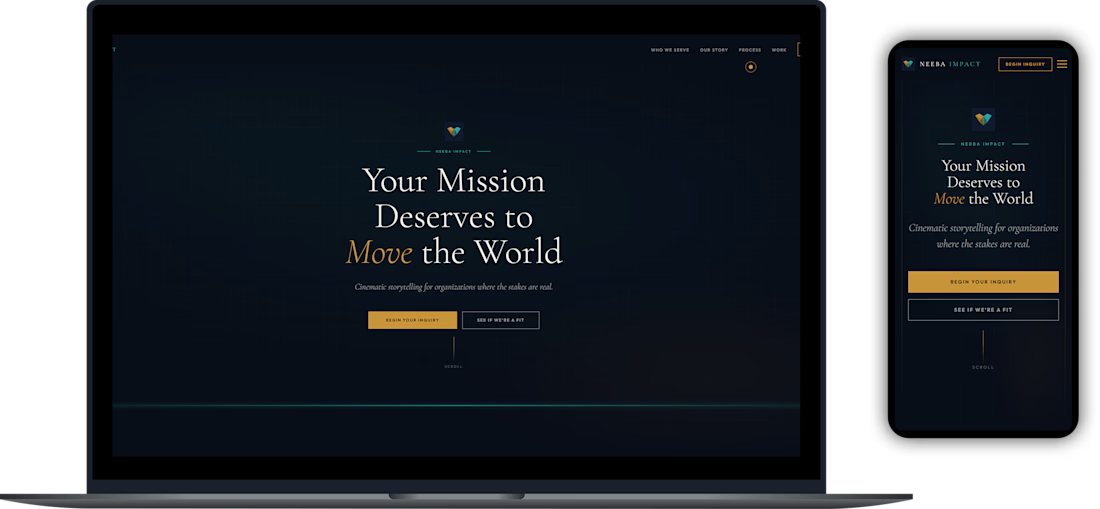

This project showcases a highly adaptive development process, transforming an AI-generated prototype into a production-ready web application. The client provided an initial interactive prototype published directly as a Claude Artifact. To ensure design precision and structural integrity before development, the live HTML from the artifact was imported into Figma using the HTML to Design plugin. With a high-fidelity visual blueprint established, the project was hand-built in Webflow using the industry-standard Finsweet Client-First framework, guaranteeing a clean, scalable, and easily maintainable class structure.
